Vectors in SEO: The Ultimate Guide to Embeddings, Retrieval & AI Overviews

Discover the ultimate guide to vectors in SEO—how embeddings transform retrieval, ranking, and AI Overviews. Get actionable strategies and a comprehensive checklist now.

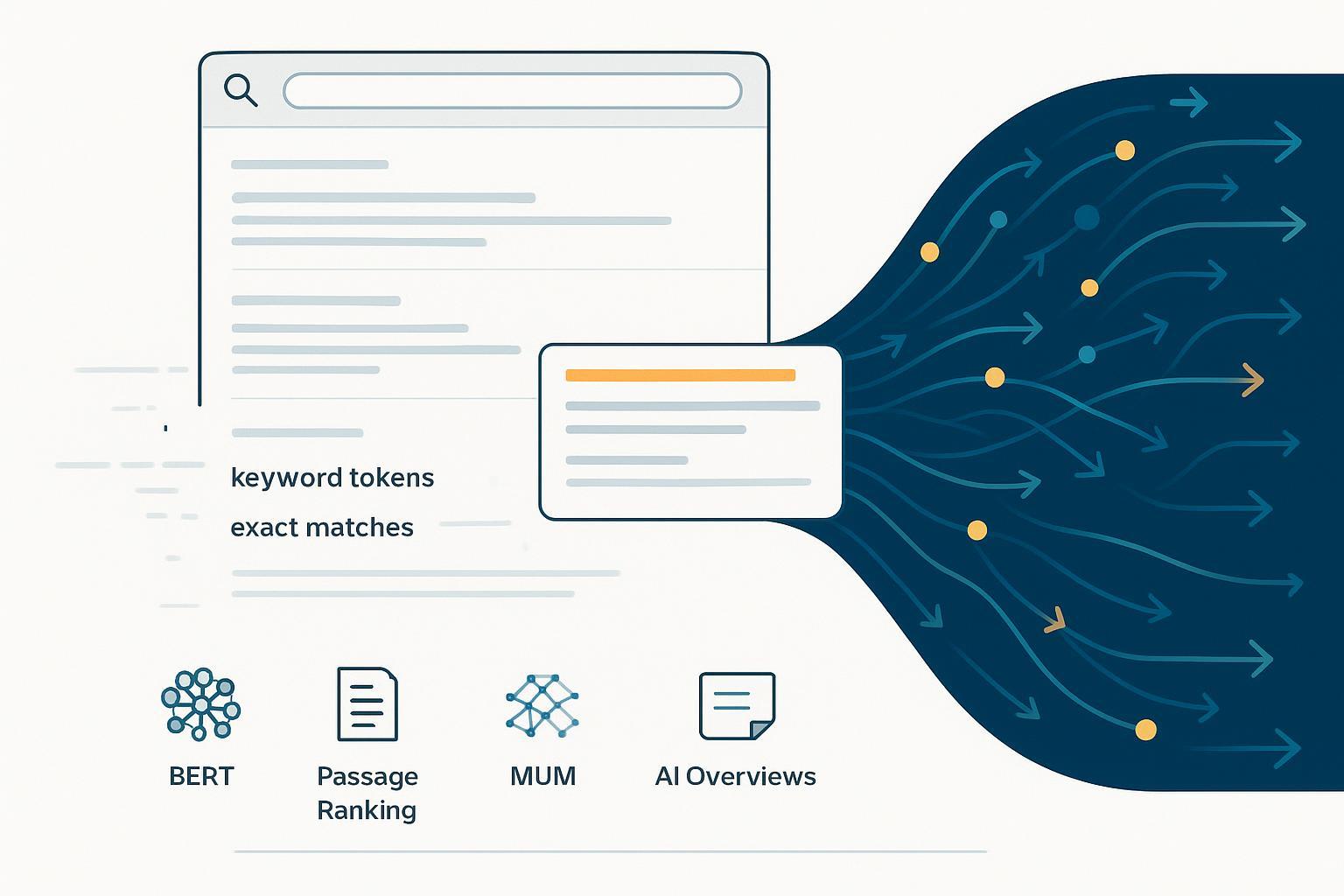

The way search finds and summarizes your content has changed, from strict keyword matching to systems that interpret meaning. But here’s the catch: keywords didn’t disappear; they teamed up with vectors. Think of it as a duet. Sparse signals (terms, entities, anchors) and dense semantics (embeddings) work together to surface the best passage for a task and, increasingly, to supply citations in AI Overviews.

This guide turns that shift into a practical playbook. We’ll clarify what embeddings actually changed (and didn’t), connect key Google milestones to on-page tactics, and give you a site architecture and block-level checklist to make your content extractable, cite-worthy, and measurable across search and AI experiences.

Four myths to retire

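“Vectors mean keywords don’t matter.” Lexical matching still carries real weight. In the BEIR zero‑shot retrieval benchmark (NeurIPS 2021), BM25 was a robust baseline that the dense retrievers it tested generally trailed on average, while re‑ranking BM25 candidates with a neural cross‑encoder scored best. That is one reason hybrid systems, which combine sparse and dense scoring, are common.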

“Generate embeddings and you’ll rank.” Google doesn’t use your site’s own embeddings to rank pages. Ranking depends on satisfying intent with helpful, reliable content and broad signals of quality and authority, as emphasized in Google’s Helpful Content guidance.

“Passage indexing created a new index.” Google describes passage ranking as identifying relevant sections to better assess a page’s relevance—it’s a ranking change, not a new index. See the Search Central ranking systems guide.

“AI Overviews always reduce traffic.” Impact varies by query and whether your content is cited. Google frames AI Overviews as snapshots with links to explore sources; details are in the AI Overviews product explainer.

Milestones that mattered—and what to do about them

Search didn’t flip a single switch; it evolved through milestones that each nudged strategy. Here’s what changed and the immediate on‑site implications.

Hummingbird (2013): Conversational intent

What changed: Core rewrite to better interpret query meaning, especially conversational/voice queries.

What to do: Map content to intents. Cover related questions naturally on the page and cluster supporting articles around the main topic.

RankBrain (~2015): Concepts, not just words

What changed: Machine learning to interpret relationships between words and concepts; better handling of novel queries.

What to do: Use clear entity names, definitions, and synonyms. Write for people; avoid keyword stuffing. Clarify who/what/where with unambiguous references.

Reference: “How AI powers great search results” (Google).

BERT (2019): Context matters

What changed: Bidirectional understanding to interpret nuance and prepositions; improved featured snippets and query interpretation.

What to do: Use natural sentence structure. Avoid vague pronouns without antecedents. Place concise, direct answers near the question heading.

Reference: Google’s BERT in Search announcement (2019).

Passage Ranking (2021): Section‑level relevance

What changed: Google can evaluate the relevance of individual passages within a page to inform ranking.

What to do: Structure pages with descriptive H2/H3s, each addressing a sub‑intent. A useful rule of thumb (not a Google requirement) is to open each section with a 40–60 word answer before expanding.

Reference: Ranking systems guide—Passage ranking.
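The 40–60 word advice above is a rule of thumb rather than a published Google threshold, but it is easy to audit. The following is a minimal sketch using Python's standard-library `html.parser`: it pairs each H2/H3 with the first `<p>` that follows it and reports that paragraph's word count. The class and function names are hypothetical, and pages that put answers in lists or deeply nested layouts would need a more robust extractor.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple


class SectionParser(HTMLParser):
    """Pairs each <h2>/<h3> heading with the text of the first <p> that follows it."""

    def __init__(self) -> None:
        super().__init__()
        self.sections: List[List[Optional[str]]] = []  # [heading, first_paragraph]
        self._capturing: Optional[str] = None  # "heading", "para", or None
        self._buffer: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._capturing, self._buffer = "heading", []
        # Only the first paragraph after a heading counts as the answer block.
        elif tag == "p" and self.sections and self.sections[-1][1] is None:
            self._capturing, self._buffer = "para", []

    def handle_endtag(self, tag):
        text = " ".join("".join(self._buffer).split())  # collapse whitespace
        if tag in ("h2", "h3") and self._capturing == "heading":
            self.sections.append([text, None])
            self._capturing = None
        elif tag == "p" and self._capturing == "para":
            self.sections[-1][1] = text
            self._capturing = None

    def handle_data(self, data):
        if self._capturing:
            self._buffer.append(data)


def audit_answer_blocks(html: str, low: int = 40, high: int = 60) -> List[Tuple[str, int, bool]]:
    """Return (heading, word count of first paragraph, within range?) for each section."""
    parser = SectionParser()
    parser.feed(html)
    parser.close()
    report = []
    for heading, para in parser.sections:
        words = len(para.split()) if para else 0
        report.append((heading, words, low <= words <= high))
    return report


page = (
    "<h2>What is hybrid retrieval?</h2><p>" + "word " * 45 + "</p>"
    "<h2>Miscellaneous notes</h2><div>No lead paragraph here.</div>"
)
for row in audit_answer_blocks(page):
    print(row)
```

For the sample page, the first section passes with a 45-word lead paragraph, while “Miscellaneous notes” has no paragraph to extract at all.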

MUM (2021→): Multimodal, cross‑language reasoning

What changed: A system designed for complex tasks and understanding across text, images, and languages.

What to do: Add complementary visuals and step-by-step explainers where appropriate. Consolidate topic clusters so users (and systems) can navigate breadth and depth.

Reference: Introducing MUM (Google).

AI Overviews (2024→): Generative snapshots with citations

What changed: Summaries for certain queries, powered by a customized Gemini model working with Search. Links provide paths to deeper reading.

What to do: Make blocks “cite‑ready”: precise answers, sources, and clean markup. Maintain quality signals and clarity so your passages are eligible.

Reference: AI Overviews product page.

What vectors actually change in retrieval

Embeddings map text into high‑dimensional space so that “socks for cold weather” sits near “thermal wool socks,” even if the words differ. Sparse systems like BM25 excel when the query contains exact terms or entities. Many production search systems use both, an approach known as hybrid retrieval.

Why does this matter to you? Because your pages must satisfy both modes. Titles, headings, and anchors should still include the phrases real people use. Meanwhile, the body needs semantic breadth—definitions, paraphrases, and examples—to capture dense signals.

A quick analogy: sparse retrieval is a lock that opens with an exact key; dense retrieval is a smart lock that recognizes your hand even if you forgot the key. The best doors use both.
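To make the two modes concrete, here is a toy sketch, not a description of Google's systems. It assumes whitespace tokenization, textbook Okapi BM25 with k1 = 1.5 and b = 0.75 for the sparse side, three hand-made 3-dimensional vectors standing in for a real embedding model on the dense side, and reciprocal rank fusion (RRF) with k = 60, one common way to merge ranked lists. The documents, vectors, and IDs are all hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List, Sequence


def tokenize(text: str) -> List[str]:
    return text.lower().split()


def bm25_scores(query: str, docs: Dict[str, str], k1: float = 1.5, b: float = 0.75) -> Dict[str, float]:
    """Okapi BM25 over whitespace tokens: rewards exact term matches."""
    tokenized = {doc_id: tokenize(text) for doc_id, text in docs.items()}
    n_docs = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized.values()) / n_docs
    df = Counter(term for toks in tokenized.values() for term in set(toks))
    scores = {}
    for doc_id, toks in tokenized.items():
        tf = Counter(toks)
        length_norm = k1 * (1 - b + b * len(toks) / avgdl)
        score = 0.0
        for term in tokenize(query):
            if tf[term]:
                idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
                score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores[doc_id] = score
    return scores


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(u, v))
    return dot / (math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v)))


def ranked(scores: Dict[str, float]) -> List[str]:
    return sorted(scores, key=scores.get, reverse=True)


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Each ranking contributes 1 / (k + rank), so items high in either list rise."""
    fused: Dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1 / (k + rank)
    return ranked(fused)


DOCS = {
    "wool-socks": "thermal wool socks for winter hiking",
    "cold-running": "cold weather running tips",
    "cotton-socks": "cotton socks multipack",
}
# Hand-made toy "embeddings" (hypothetical); dimensions: [footwear, cold, exercise].
VECTORS = {
    "wool-socks": [0.9, 0.8, 0.3],
    "cold-running": [0.1, 0.8, 0.9],
    "cotton-socks": [0.9, 0.1, 0.1],
}
QUERY = "socks for cold weather"
QUERY_VECTOR = [0.9, 0.9, 0.0]

lexical = ranked(bm25_scores(QUERY, DOCS))
dense = ranked({doc_id: cosine(QUERY_VECTOR, vec) for doc_id, vec in VECTORS.items()})
hybrid = reciprocal_rank_fusion([lexical, dense])
print("lexical:", lexical)  # exact matches on "cold" and "weather" favor the running-tips page
print("dense:  ", dense)
print("hybrid: ", hybrid)
```

In this toy data, BM25 ranks the running-tips page first because it matches “cold” and “weather” verbatim, the dense scores favor the wool-socks page, and fusion puts wool socks on top while keeping the exact-match page in second place.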

Site architecture for the vector era

The single biggest upgrade you can make is information architecture that mirrors user intents and entities, then connects them with meaningful links.

Topic clusters and entities: Define pillar pages around core entities (e.g., “vector search”) and support them with deep articles addressing sub‑intents (“dense vs. sparse,” “hybrid retrieval examples,” “schema for AI engines”). On each pillar, summarize and link to the best passage on its child topics.

Internal links by intent: Use descriptive, human anchors (e.g., “hybrid retrieval examples” instead of “read more”). Add short context sentences above links so the destination’s passage aligns with the referring intent.

Multimodal assets: Where a visual clarifies steps or comparisons, add an image or short video. MUM was designed to understand information across formats, and a well‑captioned visual can make how‑to content easier to interpret and extract.

Cluster pattern example: A pillar on “Semantic Search” links to H2‑level sections within each child page (e.g., “What is dense retrieval?” “How hybrid scoring works”). Each child page links back to the pillar with an anchor reflecting the pillar’s framing.
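Once a crawler has produced a map of each page's outbound links and anchor text, the cluster pattern above can be checked mechanically. This sketch assumes such a map already exists; the URLs and the list of generic anchors are hypothetical. It flags children the pillar never links to, children that don't link back, and anchors like “read more” that say nothing about the destination. Fragment identifiers are stripped so that a link to a child's H2 section counts as a link to that child.

```python
from typing import Dict, List, Tuple

# Hypothetical crawl output: page -> list of (link target, anchor text).
Links = Dict[str, List[Tuple[str, str]]]

# Illustrative, not exhaustive: anchors that don't describe the destination.
GENERIC_ANCHORS = {"click here", "read more", "learn more", "here", "more"}


def strip_fragment(url: str) -> str:
    """'/dense-retrieval#what-is-dense-retrieval' -> '/dense-retrieval'."""
    return url.split("#", 1)[0]


def audit_cluster(pillar: str, children: List[str], links: Links) -> Dict[str, List]:
    pillar_targets = {strip_fragment(target) for target, _ in links.get(pillar, [])}
    return {
        # The pillar should link down to (a section of) every child page.
        "pillar_missing_link_to": [c for c in children if c not in pillar_targets],
        # Every child should link back up to the pillar.
        "child_missing_backlink": [
            c for c in children
            if pillar not in {strip_fragment(t) for t, _ in links.get(c, [])}
        ],
        # Anchors that don't reflect the destination's intent.
        "generic_anchors": [
            (page, target, text)
            for page in [pillar, *children]
            for target, text in links.get(page, [])
            if text.strip().lower().rstrip(".!") in GENERIC_ANCHORS
        ],
    }


links: Links = {
    "/semantic-search": [
        ("/dense-retrieval#what-is-dense-retrieval", "What is dense retrieval?"),
        ("/hybrid-scoring#how-hybrid-scoring-works", "How hybrid scoring works"),
    ],
    "/dense-retrieval": [("/semantic-search", "semantic search overview")],
    "/hybrid-scoring": [("/dense-retrieval", "Read more")],
    "/schema-for-ai": [("/semantic-search", "semantic search overview")],
}
children = ["/dense-retrieval", "/hybrid-scoring", "/schema-for-ai"]
print(audit_cluster("/semantic-search", children, links))
```

Here the audit reports that the pillar never links to the schema page, that the hybrid-scoring page lacks a link back, and that its only outbound anchor is a generic “Read more”.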

For foundational distinctions between GEO and AEO and why AI visibility is now cross‑engine, see the primer on AI visibility and brand exposure in AI search.

Block‑level optimization checklist

Below is a practical checklist oriented to passage extraction, featured snippets, and AI citations. Use it during content creation and audits.

| Element | Do this | Why it matters | Pitfalls to avoid |
|---|---|---|---|
| H2/H3 framing | Phrase subheads as questions or crisp statements; include the main term/entity | Increases alignment with query intents and passage ranking | Vague headings (“Miscellaneous notes”) |
| Concise answer block | Lead each section with a 40–60 word direct answer, then elaborate | Creates a cite‑ready passage for featured snippets and AI Overviews | Burying answers deep in long paragraphs |
| HTML semantics | Keep answers in simple `<p>`, `<ul>`, or `<ol>` elements, and use `<table>` only when it adds clarity | Clean structure aids extraction and reduces noise | Styling answers with complex divs/spans |
| On‑page citations | Attribute facts with in‑line links to primary sources | Supports E‑E‑A‑T and summary confidence | Linking to low‑quality sources or bare URLs |
| Structured data | Add schema that truly matches content (e.g., HowTo, FAQPage where eligible) | Helps systems understand page purpose and content types | Forcing schema on non‑eligible content |
| Entities & synonyms | State canonical entity names and common variants | Balances lexical and semantic recall | Over‑using synonyms to the point of clutter |
| Images & captions | Add explanatory images with alt text and captions | Supports multimodal understanding (MUM) | Decorative images without explanatory value |
| Internal links | Link related blocks with descriptive anchors | Reinforces cluster context and discoverability | “Click here” anchors or orphaned pages |

Need a deeper markup refresher? See Google’s intro to structured data, and remember that rich results aren’t guaranteed. In 2023 Google limited FAQ rich results to well‑known, authoritative government and health sites and deprecated HowTo rich results, so focus on clarity rather than chasing surfaces that are no longer shown.
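Where a page genuinely consists of visible questions and answers, FAQPage markup can still describe it even if no rich result appears. One way to keep markup and content from drifting apart is to generate the JSON-LD from the same answer blocks you render. A minimal sketch, assuming the question/answer pairs below are the page's visible text; it uses the schema.org `FAQPage`, `Question`, and `Answer` types, and the helper name is hypothetical.

```python
import json
from typing import Dict, List, Tuple


def faq_page_jsonld(pairs: List[Tuple[str, str]]) -> Dict:
    """Build schema.org FAQPage markup from (question, answer) pairs shown on the page."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


pairs = [
    (
        "What is hybrid retrieval?",
        "Hybrid retrieval combines sparse keyword scoring with dense embedding similarity.",
    ),
]
markup = faq_page_jsonld(pairs)
# Emit the script tag to paste into the page template.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Validate the output with the Rich Results Test before shipping, and keep the answer text identical to what users see on the page.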

AI Overviews—eligibility, citations, and controls

What’s known from Google’s documentation:

Eligibility: AI Overviews are generated when systems judge a query will benefit. They use a customized Gemini model alongside Search’s ranking and the Knowledge Graph, and they include links for deeper reading. See the AI Overviews product page.

Be cite‑worthy: Provide precise answers near relevant headings, show your work with sources, and maintain people‑first quality. Google’s guidance on creating helpful content remains the north star.

Controls and trade‑offs: There’s no AI‑Overviews‑only opt‑out. You can limit snippet usage with the nosnippet and max‑snippet robots meta directives and the data‑nosnippet HTML attribute, but these also affect standard search snippets. See the robots meta tag specs and AI features and your website. If you must keep specific blocks from being reused, apply data‑nosnippet to those elements rather than a site‑wide nosnippet.

A pragmatic stance: Optimize for eligibility and attribution where it helps users. For sensitive content you don’t want summarized, use fine‑grained controls. For everything else, design sections as if they’ll be quoted in isolation—because they might be.
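Before changing anything, it helps to know which snippet controls a page already uses. This sketch, built on Python's standard-library `html.parser`, separates page-wide directives (nosnippet, max-snippet in a `robots` or `googlebot` meta tag) from element-level data-nosnippet attributes. The list of supported elements reflects our reading of Google's robots meta tag documentation (span, div, section); treat it as an assumption to re-check, and the function names as hypothetical.

```python
from html.parser import HTMLParser
from typing import Dict, List

# Elements Google documents for data-nosnippet (verify against current docs).
DATA_NOSNIPPET_TAGS = {"span", "div", "section"}


class SnippetControlParser(HTMLParser):
    """Collects robots meta directives and elements carrying data-nosnippet."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: List[str] = []
        self.nosnippet_elements: List[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot"):
            content = attributes.get("content") or ""
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]
        if "data-nosnippet" in attributes:  # boolean attribute; value may be None
            self.nosnippet_elements.append(tag)


def snippet_controls(html: str) -> Dict[str, List[str]]:
    parser = SnippetControlParser()
    parser.feed(html)
    parser.close()
    return {
        # Page-wide: affects standard snippets and AI features alike.
        "page_wide": [d for d in parser.directives if d == "nosnippet" or d.startswith("max-snippet")],
        # Element-level: the fine-grained option.
        "element_level": parser.nosnippet_elements,
        # Attribute placed on an element outside the documented set.
        "unsupported_placement": [t for t in parser.nosnippet_elements if t not in DATA_NOSNIPPET_TAGS],
    }


page = (
    '<meta name="robots" content="max-snippet:160">'
    "<p>Public answer block.</p>"
    "<span data-nosnippet>Internal pricing note</span>"
    "<p data-nosnippet>Draft text</p>"
)
print(snippet_controls(page))
```

In the sample, the max-snippet limit applies to the whole page, the span is excluded as intended, and the `<p data-nosnippet>` is flagged for moving into a span, div, or section.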

Measure, compare, iterate

You can’t improve what you don’t measure. Define a simple but durable set of metrics:

Citations: How often your pages are cited in AI Overviews and other answer engines.

Impressions: Passage/featured snippet appearances and overall visibility.

Clicks and CTR: From snippets/AI Overviews where your link appears, and from traditional results.

Share of voice: Your citation and mention share against competitors across engines.
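Two of these metrics reduce to simple ratios. A sketch with hypothetical counts: CTR is clicks divided by impressions, and share of voice is defined here as your citations divided by all citations counted across the brands you track for the same query set. That is one reasonable definition, not an industry standard, and the engine and brand labels are placeholders.

```python
from typing import Dict


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate; 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0


def share_of_voice(citations_by_brand: Dict[str, int], brand: str) -> float:
    """Brand's share of all citations counted for one tracked query set."""
    total = sum(citations_by_brand.values())
    return citations_by_brand.get(brand, 0) / total if total else 0.0


# Hypothetical citation counts for the same tracked queries, per engine.
citations = {
    "google_ai_overviews": {"our-brand": 12, "competitor-a": 20, "competitor-b": 8},
    "perplexity": {"our-brand": 5, "competitor-a": 5},
}
for engine, counts in citations.items():
    print(f"{engine}: share of voice {share_of_voice(counts, 'our-brand'):.0%}")
print(f"snippet CTR: {ctr(124, 1000):.1%}")
```

Computing share of voice per engine, rather than pooling everything, keeps a strong showing in one engine from masking a gap in another.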

Workflows:

Manual/Google-native: Use Search Console to track impressions and CTR for queries where you suspect passage extraction. Maintain a log of AI Overviews sightings with screenshots and prompts; tag entries by URL, section, and query class. Validate structured data with the Rich Results Test and the URL Inspection tool.

Tool‑supported: Geneo (disclosure: Geneo is our product) can be used to monitor AI visibility and citations across engines (ChatGPT, Perplexity, Google AI Overviews). It provides metrics like link visibility, brand mentions, and reference counts, plus competitive comparisons and white‑label reporting. Keep your usage neutral: use it to verify when blocks get cited and to benchmark against peers.

Alternatives: Teams also combine manual logs, SERP trackers that capture featured snippets, and custom dashboards (e.g., storing prompt/citation evidence in a data warehouse) to approximate multi‑engine visibility. Evaluate tools by scope (which engines), citation capture, and reporting needs.
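The manual sightings log described above can start as a plain list of records. This sketch uses hypothetical field names and a made-up query-class taxonomy, and computes citations of your sections per 100 observed queries for each query class, the same ratio the vignette below calls cite-rate. In practice the records would come from a spreadsheet export or a warehouse table.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Sighting:
    """One manual check of an AI Overview for a tracked query."""
    query: str
    query_class: str  # your own taxonomy, e.g. "how-to" or "comparison"
    cited_sections: List[str] = field(default_factory=list)  # our "URL#section" anchors cited
    screenshot: str = ""  # path to the saved evidence


def cite_rate_by_class(log: List[Sighting]) -> Dict[str, float]:
    """Citations of our sections per 100 observed queries, grouped by query class."""
    observed: Dict[str, int] = defaultdict(int)
    cited: Dict[str, int] = defaultdict(int)
    for s in log:
        observed[s.query_class] += 1
        cited[s.query_class] += len(s.cited_sections)
    return {cls: 100 * cited[cls] / observed[cls] for cls in observed}


log = [
    Sighting("how to enable rbac", "how-to", ["/docs/rbac#enable-rbac"], "shots/001.png"),
    Sighting("configure sso", "how-to"),
    Sighting("rbac vs abac", "comparison", ["/docs/rbac#compare", "/docs/abac#overview"]),
    Sighting("sso pricing", "comparison"),
]
print(cite_rate_by_class(log))
```

Keeping the section anchor on each record is what lets you tie a citation back to a specific answer block when you compare before-and-after edits.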

For an end‑to‑end framework on instrumentation, see the AI visibility audit workflow and a neutral overview of AI search visibility tracking features.

Case vignette (hypothetical but realistic)

A B2B documentation hub struggled to appear for long‑tail “how to configure X” queries and rarely showed up as a citation in AI Overviews.

What changed: The team restructured articles by task. Each H2 framed a specific question (“How do I enable Role‑Based Access Control?”). Under each, a 50‑word answer block came first, followed by step‑by‑step detail and a short table of parameters. They added on‑page citations to primary docs and clarified entity names and synonyms. Internal links were rewritten to reflect user intents.

Measurement: Over 12 weeks, they tracked “cite‑rate” (number of AI Overview citations per 100 relevant queries observed), featured snippet impressions, and CTR in Search Console for task queries.

Observed outcome (hypothetical numbers): Cite‑rate rose from 2 to 11 per 100 observed queries; featured snippet impressions for task queries increased 38%; CTR on those snippets moved from 9.1% to 12.4%. The largest gains clustered around sections with the cleanest 50‑word answers and primary-source citations.

What they learned: Short, precise answer blocks and intent‑descriptive anchors amplified both dense and sparse signals. They also discovered several sections overusing synonyms; trimming them improved clarity without hurting coverage.
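When reporting results like these, keep absolute and relative change apart: a CTR move from 9.1% to 12.4% is 3.3 percentage points, which is about 36% in relative terms. A quick check using the vignette's hypothetical numbers (the helper name is illustrative):

```python
def change(before: float, after: float) -> tuple:
    """Return (absolute change, relative change) between two measurements."""
    return after - before, (after - before) / before


ctr_points, ctr_relative = change(9.1, 12.4)  # CTR, in percent
cite_delta, cite_relative = change(2, 11)     # citations per 100 observed queries
print(f"CTR: +{ctr_points:.1f} percentage points, {ctr_relative:+.1%} relative")
print(f"Cite-rate: +{cite_delta} per 100 queries, {cite_relative:+.0%} relative")
```

Small baselines inflate relative change: going from 2 to 11 citations per 100 queries is a 450% increase but only nine extra citations, so report both figures.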

If you want guidance on making blocks more cite‑ready, review this primer on optimizing content for AI citations.

Troubleshooting and pitfalls

No headings, no extracts: If a page is a wall of text, systems struggle to identify passages. Add H2/H3s tied to discrete intents.

Answers buried under fluff: Lead with the answer, then elaborate. If your first sentence meanders, expect lower extractability.

Schema without substance: Markup can’t fix unclear content. Ensure the on‑page block is intelligible without the schema.

Over‑templated phrasing: Repeating identical sentence structures makes sections feel generic. Vary cadence and include concrete nouns and verbs.

Weak sources: If you cite low‑quality or derivative sources, you transfer that weakness to your page. Favor primary documentation and original research.

Snippet controls used bluntly: A site‑wide nosnippet can suppress beneficial surfaces. Use element‑level data‑nosnippet for sensitive bits.

Internal links without context: “Click here” anchors miss both humans and sparse retrieval. Use anchors that reflect the destination’s intent.
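A rough way to spot over‑templated phrasing is to count how many sentences in a section share the same opening words. This sketch uses naive punctuation-based sentence splitting and an arbitrary three-word window; both are assumptions to tune, not rules, and the function name is hypothetical.

```python
import re
from collections import Counter
from typing import Dict


def repeated_openings(text: str, n_words: int = 3, min_count: int = 2) -> Dict[str, int]:
    """Count sentences that start with the same first n_words words."""
    # Naive split: break after ., !, or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    openings = Counter(" ".join(s.lower().split()[:n_words]) for s in sentences)
    return {opening: count for opening, count in openings.items() if count >= min_count}


section = (
    "This feature helps teams ship faster. This feature helps admins audit access. "
    "Role-based access limits what each account can change."
)
print(repeated_openings(section))
```

A flagged opening isn't automatically a problem, but a section where most sentences share one is a good candidate for varied cadence and more concrete subjects.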

Your next 30 days

Week 1: Audit 10–20 top pages. Identify missing H2/H3s, absent answer blocks, and vague headings. Prioritize fixes by query class and business value.

Week 2: Implement block‑level upgrades on 5 pages: question‑framed subheads, 50‑word answers, on‑page citations, semantic HTML. Add or correct schema where truly applicable.

Week 3: Map a single topic cluster (one pillar, 5–8 children). Rewrite internal links to use intent‑descriptive anchors; add short context sentences.

Week 4: Instrument measurement. Set up a lightweight log for AI Overview sightings and track key queries in Search Console. Optionally, evaluate a multi‑engine tracker; agencies may prefer white‑label reporting. For executive alignment on AEO strategy, see AEO best practices for leaders and, for schema specifics, schema integration for AI engines.

The bottom line: Hybrid retrieval means you need both lexical clarity and semantic depth—and clear, cite‑ready blocks that can stand alone. Build that into your architecture, write for people, and measure what gets extracted. The rest is steady iteration.