From SEO to GEO: The Ultimate Agency Survival Guide

Master GEO and AI Overviews with this ultimate guide—practical workflows, measurement KPIs, and reporting frameworks for agencies. Adapt and thrive!

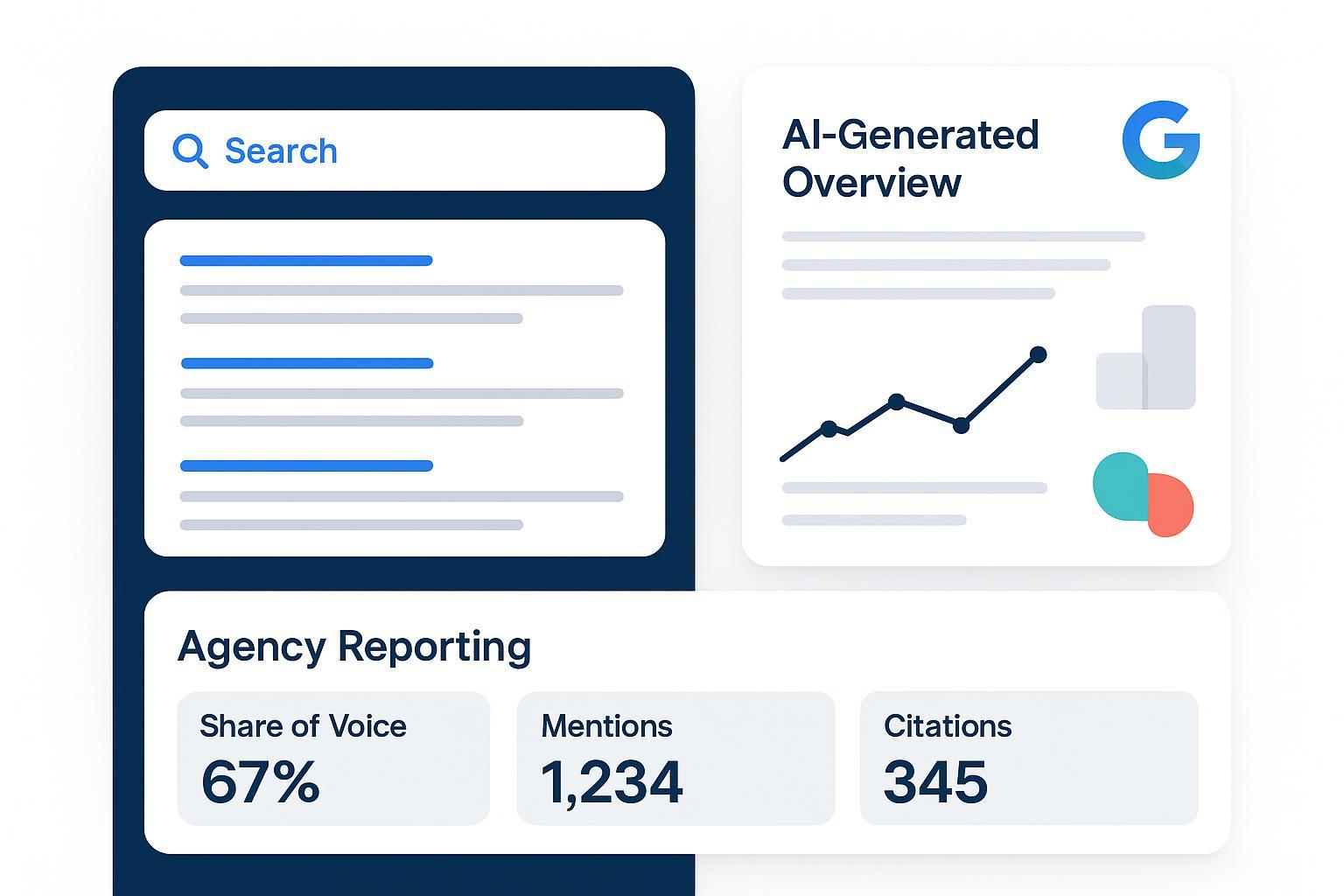

Modern search isn’t just ten blue links. Searchers see synthesized answers, source cards, and product boxes before they ever reach a website. That’s the shift from SEO (optimizing for rankings and clicks) to GEO, or generative engine optimization, where the goal is to be cited, mentioned, or recommended inside AI answers across Google AI Overviews, ChatGPT, Perplexity, and Bing Copilot. Agencies that survive this transition align strategy, content, and reporting to these answer surfaces without abandoning proven SEO foundations.

This guide shows how to run SEO and GEO together, measure “visibility without clicks,” and present clear progress to clients using reproducible workflows and neutral, evidence-led practices.

1) GEO, defined: what AI Overviews actually do

Google’s AI Overviews present synthesized summaries on queries where they “add value beyond traditional results,” including links to sources as a jumping-off point. Google’s site-owner guidance emphasizes people-first content, crawlability, and structured data that matches visible content rather than tricks or shortcuts. For how the system treats structured data and site quality, see the Google Search Central documentation “AI features and your website”; for practical guardrails, see the Search Central blog post “Top ways to succeed in AI Search” (2025).

From the product side, Google has stated that AI Overviews drive an “over 10% increase in usage of Google for the types of queries that show AI Overviews,” a figure that frames them as a discovery layer rather than a dead end (Google Product Blog, AI Mode/Overviews update, 2025).

Takeaway for agencies: GEO isn’t a replacement for SEO. It’s a parallel objective—becoming a trustworthy cited source in AI answers, while protecting and growing traditional organic performance where it still matters.

2) The impact in 2025: prevalence and clicks, with nuance

How often do AI Overviews appear and how do they affect clicks? The honest answer: it depends on the query set, timeframe, and market. That said, multiple 2025 sources document meaningful, sometimes sharp, changes.

- Independent tracking shows prevalence fluctuating through the year, often in the low-to-mid teens as a percentage of tracked queries, with peaks and pullbacks by category, according to the Semrush AI Overviews study (2025).

- Reporting throughout 2025 observed lower CTRs when AI Overviews appear. BrightEdge data summarized by Search Engine Land showed organic CTR declining for informational queries: impressions rose while overall CTR fell year over year, and ad placement shifted when Overviews were present. See the Search Engine Land roundup “Top SEO news stories of 2025” and its related coverage of Overviews’ pullbacks.

- User behavior research indicates people are less likely to click when an AI summary appears, per the Pew Research Center short read (July 2025).

How do we reconcile Google’s “usage up” with independent CTR declines? People may use Google more for AI-summarized queries while clicking less on classic organic results. That’s precisely why GEO reporting must foreground citations, mentions, and share of voice instead of rank-only metrics.

3) A dual-track operating model: run SEO and GEO together

Think of your program as a two-lane highway:

- Lane 1 (SEO): maintain and grow organic visibility for high-intent queries, technical health, internal linking, and long-tail content that still earns clicks.

- Lane 2 (GEO): make your content citable and recommendation-worthy inside AI answers across engines.

Team roles shift subtly. The strategist sets the query set and cadence for AI visibility alongside classic KPIs. The content lead ensures question-led structures, crisp summaries, and visible sourcing. Technical SEO enforces crawlability, structured data aligned to visible content, and clean metadata. Analysts log answers, measure Share of Voice and citations, and present trends by engine. Yes, it adds overhead at first, but standardizing a GEO checklist per content piece and automating monitoring helps it fold into normal sprints after a few cycles.

4) Build GEO‑ready content (without gimmicks)

Generative engines reward clarity, explicit entities, and sources. Lead with questions and direct answers: write a 2–4 sentence summary for each primary query that’s accurate and quotable. Make entities and relationships obvious by naming products, categories, and criteria in plain language. Show your homework by attributing claims with descriptive links to primary sources, and add author bios or methodology sections where relevant to strengthen E‑E‑A‑T. Use supported structured data when it genuinely applies (FAQ, HowTo, Product, Article), make sure it mirrors visible content, and validate it with the Rich Results Test. Note that Google has restricted FAQ rich results and retired HowTo rich results since 2023, so treat those two types as descriptive markup rather than a route to a rich result. Google’s guidance stresses alignment over “schema stuffing”; see the Search Central appearance documentation and the 2025 success tips. Keep local and product feeds current: Merchant Center, Google Business Profile, and inventory data can influence the AI layer for shopping and local queries, per Google’s AI features guidance.
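One way to keep markup aligned with the page is to generate the JSON-LD from the same question-and-answer text you render, then confirm that every marked-up string appears in the visible copy. The sketch below assumes a hypothetical list of (question, answer) pairs and a plain-text extract of the page. The function names are illustrative and not part of any Google tooling, and the substring check is only a rough proxy for what the Rich Results Test or a human review would catch.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def _normalize(text):
    # Lowercase and collapse whitespace so layout differences don't matter.
    return " ".join(text.lower().split())


def missing_from_page(jsonld, visible_text):
    """Return marked-up strings that do not appear in the visible page text."""
    page = _normalize(visible_text)
    missing = []
    for item in jsonld["mainEntity"]:
        for value in (item["name"], item["acceptedAnswer"]["text"]):
            if _normalize(value) not in page:
                missing.append(value)
    return missing


if __name__ == "__main__":
    # Hypothetical content; replace with the Q&A text your page actually shows.
    pairs = [("What is GEO?", "GEO is generative engine optimization.")]
    markup = build_faq_jsonld(pairs)
    page_text = "What is GEO?\nGEO is generative engine optimization."
    print(json.dumps(markup, indent=2))
    print("Not visible on page:", missing_from_page(markup, page_text))
```

An empty list from `missing_from_page` means every marked-up question and answer was found in the page text. Anything it returns is a candidate for stale or misleading markup.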

A helpful mental model: write the answer you’d want cited in a college paper—concise, sourced, and unambiguous—then wrap it in a well-structured page that’s fast, crawlable, and internally linked.

5) Engine‑by‑engine tactics (Google, Perplexity, Bing Copilot, ChatGPT)

While principles overlap, engines differ in how they construct answers and show citations.

- Google AI Overviews (Search): Answers synthesize across multiple sources and can display citations inline or in expandable cards. Prioritize freshness, clear titles, and on-page citations that match the claims you want attributed. Google does not publish detailed “ranking factors” for Overviews; follow their quality and structured data guidance.

- Perplexity: Tends to foreground numbered citations and recent sources. Keep pages current and explicitly reference data with dates. See the Perplexity Search Quickstart (docs) for how retrieval and citations are handled.

- Bing Copilot: Emphasizes verifiable links and web grounding for answers. Ensure your pages are crawlable and that your references are authoritative so you’re a safe citation. Microsoft’s documentation outlines how search grounding works: Microsoft Learn — Copilot Search.

- ChatGPT: Browsing and citation behavior varies across modes and updates, and detailed publisher guidance was not available as of 2025. Treat it as an evolving channel: log answers and learn which content themes tend to be referenced.

Tip: Build small, engine-specific checklists (titles/metadata style, citation density, freshness expectations) and test changes on a limited query set before scaling.

6) Measurement and reporting frameworks

Clicks and average position don’t capture what matters in AI answers. Agencies need an AI visibility layer that tracks mentions, citations, and share of voice by engine and intent. Start with a manageable, repeatable framework.

- Query sets: Group by intent (informational, commercial, navigational) and by product/service category.

- Weekly snapshots: Log full answer text, citations, and placements for each engine.

- KPIs: Focus on visibility signals before downstream traffic proxies.

| KPI | What it measures | How to calculate (practical) | Why it matters |
|---|---|---|---|
| AI Share of Voice (SOV) | Your brand’s presence relative to competitors in AI answers | Mentions of your brand / total brand mentions in the answer set; weight earlier/primary mentions higher | Shows competitive presence even when clicks are suppressed |
| AI Mentions | Count and type of brand mentions | Tally mentions by role (headline/body/recommendation) per engine | Reveals where and how you’re being referenced |
| Citations (Domain Citation Rate) | How often your domain is cited | % of queries with ≥1 citation from your domain; segment primary vs. secondary | Links are a trust signal and often drive some clicks |
| Sentiment of Mentions | Tone of the reference | Manual/assisted labeling (positive/neutral/negative) applied to the answer text | Distinguishes good visibility from bad visibility |
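The two ratio KPIs in the table can be sketched in a few lines. This example assumes each logged answer is a dict with a hypothetical `mentions` list (brand names in order of appearance) and a `citations` list (cited domains). The `1 / rank` weighting is one arbitrary way to "weight earlier mentions higher" and is an assumption, not a standard.

```python
from collections import defaultdict


def share_of_voice(answers, brand, weighted=False):
    """Brand mentions divided by all brand mentions across the answer set.

    With weighted=True, a mention at position `rank` counts 1 / rank,
    so earlier mentions contribute more (an illustrative scheme).
    """
    totals = defaultdict(float)
    for answer in answers:
        for rank, name in enumerate(answer["mentions"], start=1):
            totals[name] += (1.0 / rank) if weighted else 1.0
    all_mentions = sum(totals.values())
    if all_mentions == 0:
        return 0.0
    return totals.get(brand, 0.0) / all_mentions


def domain_citation_rate(answers, domain):
    """Share of logged answers that cite the domain at least once."""
    if not answers:
        return 0.0
    cited = sum(1 for answer in answers if domain in answer["citations"])
    return cited / len(answers)


if __name__ == "__main__":
    # Hypothetical answer log for four queries on one engine.
    log = [
        {"mentions": ["Acme", "Globex"], "citations": ["acme.example", "news.example"]},
        {"mentions": ["Globex"], "citations": ["globex.example"]},
        {"mentions": ["Globex", "Acme", "Initech"], "citations": ["acme.example"]},
        {"mentions": [], "citations": []},
    ]
    print(f"SOV: {share_of_voice(log, 'Acme'):.1%}")
    print(f"Weighted SOV: {share_of_voice(log, 'Acme', weighted=True):.1%}")
    print(f"Citation rate: {domain_citation_rate(log, 'acme.example'):.1%}")
```

Queries with no AI answer still count in the citation-rate denominator here. Whether to include or exclude them is a reporting choice worth documenting for clients.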

For deeper implementation ideas, see neutral KPI primers on share of voice, mentions, and citation tracking: Exploding Topics — Share of Voice primer and Conductor — AI mention/citation tracking overview. For an applied perspective tying these metrics together, this overview of AI visibility KPIs provides practical considerations: Geneo — AI visibility KPI best practices.

Baselining tips: start with 50–150 queries per client and expand once your process is stable. Segment by engine and topic cluster—don’t mash everything into one average. Track change, not absolutes; a modest SOV lift in a key commercial cluster can be more meaningful than a broad, flat average.
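To illustrate "segment, then track change," the sketch below computes SOV per (week, engine, cluster) and reports the percentage-point movement between two weeks. The row fields (`week`, `engine`, `cluster`, `mentions`) are assumed names for a snapshot log and would need to match whatever schema you actually use.

```python
from collections import defaultdict


def sov_by_segment(rows, brand):
    """Return {(week, engine, cluster): SOV} for the given brand."""
    brand_hits = defaultdict(int)
    all_hits = defaultdict(int)
    for row in rows:
        key = (row["week"], row["engine"], row["cluster"])
        all_hits[key] += len(row["mentions"])
        brand_hits[key] += row["mentions"].count(brand)
    # Skip segments with no brand mentions at all to avoid dividing by zero.
    return {key: brand_hits[key] / n for key, n in all_hits.items() if n}


def sov_change(segment_sov, prev_week, curr_week):
    """Percentage-point SOV change per (engine, cluster) between two weeks."""
    changes = {}
    for (week, engine, cluster), value in segment_sov.items():
        if week != curr_week:
            continue
        previous = segment_sov.get((prev_week, engine, cluster))
        if previous is not None:
            changes[(engine, cluster)] = round((value - previous) * 100, 1)
    return changes


if __name__ == "__main__":
    # Hypothetical two-week log for a single engine and topic cluster.
    rows = [
        {"week": "2025-W01", "engine": "google", "cluster": "pricing",
         "mentions": ["Acme", "Globex", "Globex", "Initech"]},
        {"week": "2025-W02", "engine": "google", "cluster": "pricing",
         "mentions": ["Acme", "Acme", "Globex", "Initech"]},
    ]
    sov = sov_by_segment(rows, "Acme")
    print(sov_change(sov, "2025-W01", "2025-W02"))
```

Reporting the change per segment keeps a meaningful lift in one commercial cluster from being diluted by a flat average across everything else.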

7) A 7‑day action plan to kickstart GEO

- Day 1 — Define your query set. Compile 50–150 queries per client by intent and category. Include competitive alternatives and document selection rules.

- Day 2 — Audit 10–20 core pages. Add question-led sections with 2–4 sentence summaries; verify claims have descriptive, primary-source citations; tighten titles and H1s.

- Day 3 — Implement structured data where relevant. Ensure schema mirrors visible content and passes validation. Remove stale or misleading markup.

- Day 4 — Log baseline answers. Capture Google AI Overviews, Perplexity, Bing Copilot, and ChatGPT outputs for your query set. Record AI Mentions, SOV, citations, and sentiment.

- Day 5 — Optimize two content clusters. Improve clarity, entity naming, supporting evidence, and freshness; add FAQs or HowTos that genuinely help.

- Day 6 — Build a lightweight dashboard. Even a spreadsheet or Looker Studio view can visualize SOV and citation trends by engine.

- Day 7 — Present the plan. Align stakeholders on dual-track goals, zero-click realities, and a monthly iteration cadence.
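For the Day 4 baseline and the Day 6 dashboard, a flat file is often enough to start. The sketch below shows one possible record shape for a logged answer and writes it as CSV, which a spreadsheet or BI tool can then read. The `AnswerSnapshot` class and its field names are hypothetical, and list fields are joined with `|` purely as a convention for this example.

```python
import csv
import io
from dataclasses import asdict, dataclass, field, fields


@dataclass
class AnswerSnapshot:
    """One logged AI answer for one query on one engine (illustrative schema)."""
    captured_on: str          # ISO date, e.g. "2025-11-03"
    engine: str               # e.g. "google_aio", "perplexity"
    query: str
    answer_text: str
    brands_mentioned: list = field(default_factory=list)
    cited_domains: list = field(default_factory=list)
    sentiment: str = "neutral"  # manually or semi-manually labeled


FIELDNAMES = [f.name for f in fields(AnswerSnapshot)]


def write_snapshots(snapshots, file_handle):
    """Write snapshots as CSV rows, joining list fields with '|'."""
    writer = csv.DictWriter(file_handle, fieldnames=FIELDNAMES)
    writer.writeheader()
    for snapshot in snapshots:
        row = asdict(snapshot)
        row["brands_mentioned"] = "|".join(snapshot.brands_mentioned)
        row["cited_domains"] = "|".join(snapshot.cited_domains)
        writer.writerow(row)


if __name__ == "__main__":
    # Hypothetical baseline entry; in practice you would append one per query per engine.
    baseline = [
        AnswerSnapshot(
            captured_on="2025-11-03",
            engine="perplexity",
            query="best crm for small agencies",
            answer_text="Several tools are commonly recommended...",
            brands_mentioned=["Acme", "Globex"],
            cited_domains=["acme.example", "news.example"],
        )
    ]
    buffer = io.StringIO()
    write_snapshots(baseline, buffer)
    print(buffer.getvalue())
```

Storing the full answer text alongside the derived fields lets you relabel sentiment or recount mentions later without recapturing the answers.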

If you need a primer on combining GEO and SEO in one program, this neutral walkthrough can help orient execs and practitioners: Geneo — Hybrid SEO + GEO guidance.

8) Client reporting and portals (neutral example and alternatives)

Disclosure: Geneo is our product. Many agencies need white-label, client-facing reporting that tracks AI visibility across engines and can be hosted on a custom domain. In practice, you can centralize weekly snapshots of AI answers and their citations, calculate AI SOV, Mentions, and Domain Citation Rate per engine and per topic cluster, and provide role-based client access with exports that explain methods and uncertainty. A neutral way to do this is with a spreadsheet-backed data model and a BI layer (e.g., Looker Studio, Power BI, or internal dashboards). Some teams use purpose-built platforms; for example, Geneo can be used to monitor mentions across Google AI Overviews, ChatGPT, and Perplexity, and to present Share of Voice and citation trends in a white-label client portal. For a practical setup walkthrough in a white-label context, see: Geneo — Set up a white‑label AI visibility dashboard. If you prefer to build in-house, mirror the same concepts: cross-engine coverage, consistent labeling, and exportable, client-friendly views.

9) Localization and multilingual GEO

Generative answers vary by language and market. Two principles matter most: create localized content that truly targets each audience, and measure visibility per locale. Google has expanded AI Mode to more languages, and behavior differs by market, so treat hreflang and localized metadata as must-haves (see Google’s product update “AI Mode expands to more languages,” 2025). Vendor tests and independent analyses suggest engines prefer citing content in the user’s language, and translated and localized sites have shown substantial visibility lifts in AI answers in some studies, as documented in Search Engine Journal’s 2025 coverage of translation impact. A short primer on schema and automation for AI search can help teams align technical implementation with multilingual content: see Geneo’s “Schema automation for AI search visibility.”
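As a small illustration of the hreflang point, the sketch below renders the `<link rel="alternate">` tags for a set of localized URLs. The locale codes and URLs are placeholders. The same full set of tags, including a self-reference, would normally go on every localized version of the page so the annotations are reciprocal.

```python
from html import escape


def hreflang_links(alternates, x_default=None):
    """Render hreflang link tags from a {locale: url} mapping.

    `x_default` is an optional fallback URL for users who match no locale.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(locale)}" href="{escape(url)}" />'
        for locale, url in sorted(alternates.items())
    ]
    if x_default:
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />'
        )
    return tags


if __name__ == "__main__":
    # Hypothetical localized versions of one guide.
    pages = {
        "en-us": "https://example.com/en/guide/",
        "de-de": "https://example.com/de/guide/",
    }
    for tag in hreflang_links(pages, x_default="https://example.com/guide/"):
        print(tag)
```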

10) Opt‑out reality and governance

As of late 2025, there’s no supported way for sites to stay in Google’s index while categorically opting out of inclusion in AI Overviews. Google‑Extended is a robots.txt token that governs whether content may be used for Google’s generative model training, not whether it appears in Overviews; the feature draws from standard Googlebot indexing. Google’s snippet controls (nosnippet, max-snippet, data-nosnippet) can limit what is shown, but they restrict ordinary search snippets as well. Industry reporting and legal coverage highlight ongoing debates and attempts to establish publisher controls, but nothing agencies can rely on operationally today. For background, see Digiday’s coverage of publishers’ legal and policy responses (2025) and broader regulatory reporting such as Fortune’s piece on European probes on content use (Dec 2025).
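To make the Google‑Extended distinction concrete, the sketch below parses a hypothetical robots.txt with Python’s standard `urllib.robotparser` and reports which user agents may fetch a URL. The robots.txt content and URL are made up, and the standard-library parser only approximates how Google interprets robots.txt, so treat the result as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block the Google-Extended token, allow everything else.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def crawl_permissions(robots_txt, url, agents=("Googlebot", "Google-Extended")):
    """Return {agent: allowed} for the given URL under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    print(crawl_permissions(ROBOTS_TXT, "https://example.com/guide"))
```

With this file, Googlebot remains allowed while Google‑Extended is disallowed, which is exactly the configuration that leaves pages indexed and therefore still eligible to appear in Overviews.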

So what can you control? Quality, clarity, sourcing, and measurement. Set expectations with clients that citations and recommendations are the new leading indicators, while you continue to capture the clicks that still occur. It’s not either/or; it’s both.

What to do next

- Commit to a dual-track model: keep your SEO foundations strong and build a GEO layer on top.

- Lock a weekly measurement cadence for AI SOV, Mentions, Citations, and sentiment by engine.

- Educate stakeholders on zero-click realities and the value of being cited inside answers.

Want to see how an AI visibility workflow looks end to end before you build it yourself? Review a neutral, step-by-step walkthrough and adapt the concepts to your stack: Geneo — Beginner’s operational guide to AI search visibility. The tools may differ, but the principles hold. Let’s get to work.