The Science Behind Predictive Scoring for New Content Topics: How AI Signals Inform Content Strategy

What is predictive scoring for new topics? Learn how AI signals inform GEO/AEO strategy and reduce waste with a practical, step‑by‑step workflow.

When you bet on a new topic, you’re making a decision under uncertainty. Some topics take off and earn citations in AI Overviews and answer engines; others stall, consuming time, budget, and stakeholder goodwill. The fix isn’t more brainstorming—it’s adopting a signal‑based forecast. Predictive scoring estimates the likelihood that a proposed topic will gain visibility in AI answers before you produce the content, so you prioritize bets with higher expected payoff.

What “predictive scoring” means in GEO/AEO contexts

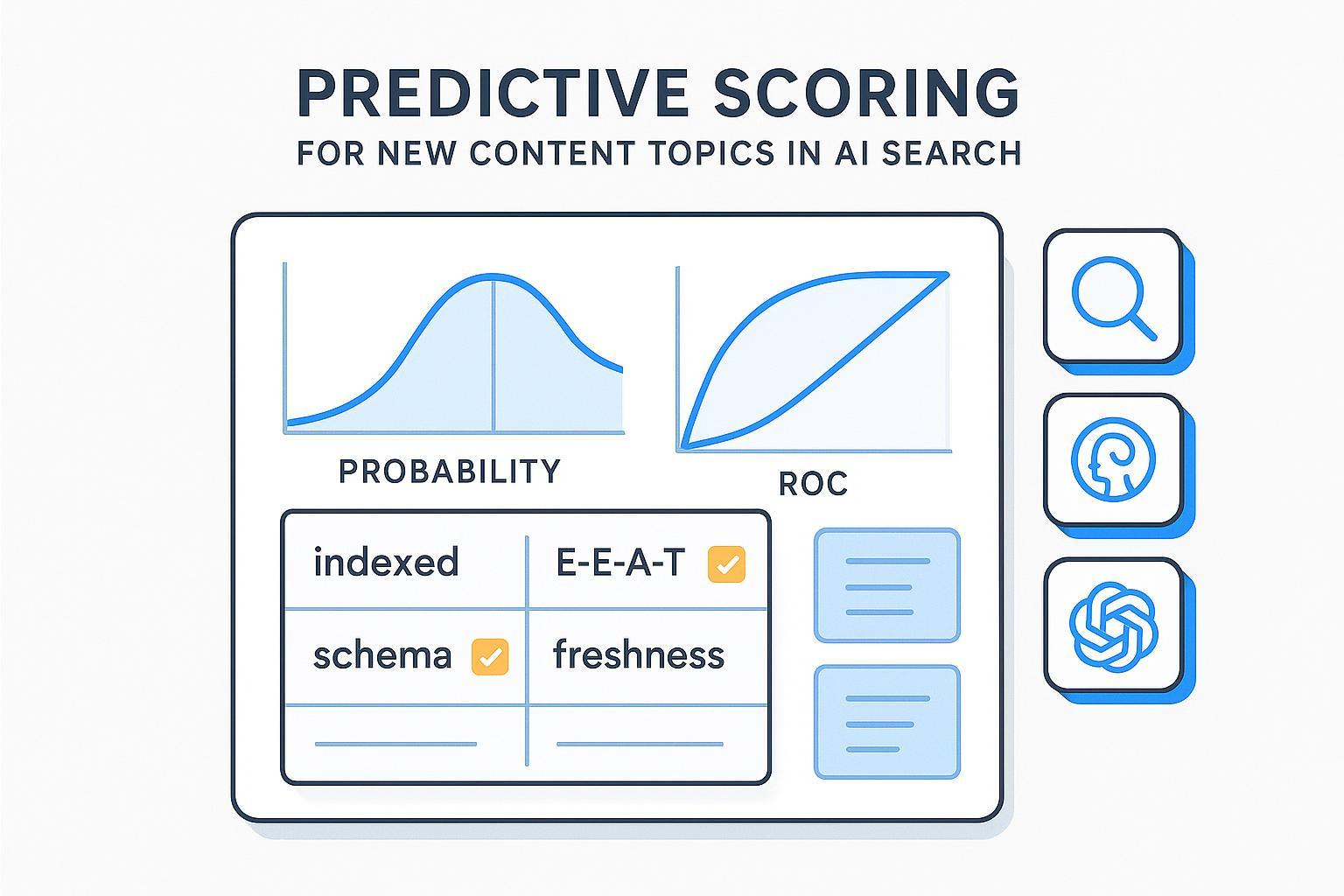

Predictive scoring for new content topics is a quantitative method that estimates a topic’s propensity to earn citations, mentions, or recommendations in answer engines (e.g., Perplexity, ChatGPT) and Google’s AI experiences within a defined window (typically 30–90 days after publish). It is an estimate, not a promise. The score draws on cross‑engine signals—indexation and snippet eligibility, E‑E‑A‑T indicators, freshness, structured data and answer‑first formatting, semantic intent alignment, and cross‑engine citation consistency—plus historical visibility.

Two boundaries matter:

Scope: We’re optimizing for AI citations and answer selection (answer engine optimization, AEO) and broader AI-generated visibility (generative engine optimization, GEO), not classic rankings alone.

Controls: You can manage inclusion in Google’s AI features with standard Search controls (nosnippet, max-snippet, noindex). Google states that a page must be indexed and snippet-eligible to appear as a supporting link in AI features; there are no extra technical requirements beyond normal Search. See Google’s AI features eligibility overview and guidance on helpful, reliable, people-first content.
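As a rough illustration of the Controls point, the sketch below reads a robots meta `content` value and reports whether the page would be indexable and snippet-eligible. The function name `parse_robots_directives` is hypothetical, and the parser is deliberately simplified: it assumes a comma-separated directive string and ignores user-agent-scoped values and other directives.

```python
def parse_robots_directives(content: str) -> dict:
    """Turn a robots meta `content` string into two eligibility flags.

    Simplified sketch: handles noindex/none, nosnippet, and max-snippet only.
    """
    tokens = [t.strip().lower() for t in content.split(",") if t.strip()]

    # "none" is shorthand for "noindex, nofollow".
    indexable = not ({"noindex", "none"} & set(tokens))

    snippet_ok = "nosnippet" not in tokens
    for token in tokens:
        if token.startswith("max-snippet:"):
            # max-snippet:0 suppresses snippets, same effect as nosnippet.
            if token.split(":", 1)[1].strip() == "0":
                snippet_ok = False

    return {
        "indexable": indexable,
        "snippet_eligible": indexable and snippet_ok,
    }
```

A page tagged `max-snippet:0` would come back as indexable but not snippet-eligible, which is the case the eligibility gate later in the workflow is meant to catch.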

Brandlight’s “AI Signals” vs. GEO/AEO signals

Brandlight has published vendor‑specific “AI Signals” across posts—momentum (velocity to visibility), divergence across engines, localization consistency, share of voice, citation breadth/credibility, freshness, attribution clarity, and unmet‑intent prompts—often prioritized via an Impact/Effort × Confidence rubric. Treat these as practices rather than a standard taxonomy.

Where do they overlap with GEO/AEO signals? Share of voice and citations map to visibility; freshness and attribution clarity align with E-E-A-T; localization and intent/framing echo semantic alignment. Distinctions include Brandlight’s heavier emphasis on governance, anomaly detection, and cross-engine normalization at scale.

Practical takeaway: vendor signal sets can inform your weighting, but validate any adoption against actual inclusion outcomes. In Google’s world, technical eligibility and people‑first quality still gate visibility; structured data should match visible content per Search Central guidance.

The minimum viable signals to collect

Before modeling, collect a baseline aligned to primary documentation and widely accepted practice:

Eligibility and indexation: Confirm the page will be indexed and snippet‑eligible; Google’s AI features cite such pages, and site owners can control inclusion via robots/meta directives. See AI features eligibility.

E‑E‑A‑T indicators: Transparent authorship, accurate sourcing, and people‑first helpfulness influence selection across search surfaces. See Google’s guidance.

Freshness and update cadence: Up‑to‑date content improves odds in dynamic systems; Perplexity’s Deep Research mode iterates searches and tends to surface recent, clear sources. See Perplexity Deep Research.

Structured data and answer-first formatting: Use Q&A blocks or concise answer capsules and relevant schema, aligned with visible content. Note that Google has restricted FAQ rich results to a narrow set of authoritative sites and retired HowTo rich results, so this markup rarely earns a visual treatment now; schema remains useful for machine readability. See Search Central updates on structured data.

Semantic intent alignment: Cover the question variants and constraints that engines expand a query into. Google documents this multi-search expansion in AI Mode (“query fan-out”), which strengthens the case for comprehensive intent coverage. See the AI Mode overview.

Cross‑engine citation consistency: Topics that exhibit stable citation patterns across engines are more predictable than volatile ones; track variance in citation share by engine.
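To make the last signal concrete, here is a minimal sketch of "variance in citation share by engine." It assumes citation share means the fraction of tracked prompts on an engine where your page is cited; that definition, the engine keys, and the function name are illustrative choices, not a standard.

```python
from statistics import pvariance


def citation_share_variance(cited_prompts: dict, tracked_prompts: dict):
    """Return (shares_by_engine, variance of those shares).

    cited_prompts:   engine -> number of tracked prompts that cited the page
    tracked_prompts: engine -> number of prompts tracked on that engine
    """
    shares = {
        engine: cited_prompts.get(engine, 0) / total
        for engine, total in tracked_prompts.items()
        if total > 0  # skip engines with no tracked prompts
    }
    if not shares:
        return shares, None
    # Population variance: low values mean engines agree, high values mean divergence.
    return shares, pvariance(shares.values())
```

For example, 2, 4, and 6 cited prompts out of 20 tracked on each of three engines gives shares of 0.1, 0.2, and 0.3 and a variance of roughly 0.0067.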

If you need a refresher on AI visibility and how to audit it, this primer explains concepts and metrics: What is AI visibility? Brand exposure in AI search.

How to build a predictive scoring workflow

Think of this as a repeatable loop. You define an outcome, collect signals, compute a score, publish, then compare predictions to reality and adjust.

Step 1: Define the outcome and window

Choose a measurable outcome. A practical start: “Earn at least one citation or mention in Google AI Overviews or Perplexity within 60 days of publication.” Set a prediction window (30–90 days) and specify which engines and modes count.
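One way to pin the outcome down is to express it as a labeling function. The sketch below assumes you log citations as `(engine, date)` pairs; the engine identifiers and the name `label_outcome` are hypothetical.

```python
from datetime import date, timedelta

# Engines that count toward the outcome (an assumption; adjust to your definition).
COUNTED_ENGINES = frozenset({"google_ai_overviews", "perplexity"})


def label_outcome(published: date, citations, window_days: int = 60,
                  engines=COUNTED_ENGINES) -> int:
    """Return 1 if any counted engine cited the page within the window, else 0.

    citations: iterable of (engine_name, date_observed) tuples.
    """
    deadline = published + timedelta(days=window_days)
    return int(any(
        engine in engines and published <= seen <= deadline
        for engine, seen in citations
    ))
```

Fixing the window and engine list in code keeps historical labels comparable when you later calibrate the score against them.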

Step 2: Collect baseline signals

For each proposed topic, inventory signals available pre‑production:

Indexation and snippet-eligibility plan (technical readiness).

E-E-A-T proxies: author profile and credentials, reference count, accuracy checks, transparency pages.

Freshness plan: expected update cadence and last‑updated fields.

Structured data and answer capsules: FAQPage/HowTo/Article schema aligned with visible content; concise 40–60‑word answers.

Semantic variants: list of sub‑questions, constraints, audiences, locales.

Cross‑engine history: any prior citations or mention patterns for adjacent topics.
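For the structured-data item in this inventory, a small sketch can check capsule length and build FAQPage JSON-LD from the same strings you render on the page, which keeps markup and visible content aligned. The helper names are hypothetical, and the 40–60 word bounds simply mirror the guideline above.

```python
import json


def capsule_ok(answer: str, low: int = 40, high: int = 60) -> bool:
    """True if the answer capsule falls inside the target word range."""
    return low <= len(answer.split()) <= high


def faq_jsonld(pairs) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs.

    Pass the exact text shown on the page so the markup matches visible content.
    """
    payload = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(payload, indent=2)
```

Running `capsule_ok` over every planned answer before production gives you a simple yes/no input for the scorecard.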

For a detailed audit method, see How to perform an AI visibility audit for your brand.

Step 3: Engineer features and build an interpretable scorecard

Convert signals into features:

Binary flags: eligibility ready (yes/no); schema aligned (yes/no).

Recency windows: days since last substantial update.

Author transparency: presence of bio, credentials, and references.

Intent coverage: count of addressed question variants.

Cross‑engine consistency: variance of citation share across engines.
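The feature list above could be sketched as a single conversion step. The `TopicSignals` fields and the way author transparency is averaged into a 0–1 value are assumptions made for illustration; your own signal inventory may look different.

```python
from dataclasses import dataclass
from datetime import date
from statistics import pvariance


@dataclass
class TopicSignals:
    """Raw pre-production signals for one topic (hypothetical field names)."""
    indexable: bool
    snippet_eligible: bool
    schema_matches_page: bool
    last_substantial_update: date
    has_author_bio: bool
    has_credentials: bool
    reference_count: int
    variants_addressed: int
    citation_share_by_engine: dict  # engine -> share in [0, 1]


def build_features(signals: TopicSignals, today: date) -> dict:
    """Convert raw signals into the features listed above."""
    shares = list(signals.citation_share_by_engine.values())
    transparency = [
        signals.has_author_bio,
        signals.has_credentials,
        signals.reference_count > 0,
    ]
    return {
        "eligibility_ready": int(signals.indexable and signals.snippet_eligible),
        "schema_aligned": int(signals.schema_matches_page),
        "days_since_update": (today - signals.last_substantial_update).days,
        # Fraction of transparency checks passed (an assumed scoring choice).
        "author_transparency": sum(transparency) / len(transparency),
        "intent_coverage": signals.variants_addressed,
        "cross_engine_variance": pvariance(shares) if shares else None,
    }
```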

Start with a transparent, weighted additive score. Eligibility can be a gate (no index/snippet → no score). Assign weights based on correlation with past outcomes (cited vs. not cited). Report two things per topic: an overall score and a confidence interval around it (via bootstrap sampling of historical data). A simple rubric is faster to calibrate and explain than a fitted model.
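Here is a minimal sketch of such a scorecard, under several stated assumptions: features are pre-scaled to 0–1, weights are the positive Pearson correlations with the cited/not-cited label normalized to sum to 1, and the interval is a percentile bootstrap over resampled history. The feature names and functions are hypothetical; this is one reasonable reading of the approach, not a reference implementation.

```python
import random
from statistics import mean

FEATURES = ("schema_aligned", "author_transparency", "freshness", "intent_coverage")


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when either side is constant."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5 if vx > 0 and vy > 0 else 0.0


def fit_weights(history):
    """Weights proportional to each feature's positive correlation with 'cited'."""
    labels = [row["cited"] for row in history]
    raw = {f: max(0.0, pearson([row[f] for row in history], labels)) for f in FEATURES}
    total = sum(raw.values())
    if total == 0:  # no usable signal: fall back to equal weights
        return {f: 1 / len(FEATURES) for f in FEATURES}
    return {f: w / total for f, w in raw.items()}


def score(topic, weights):
    """Weighted additive score on a 0-100 scale; None if the eligibility gate fails."""
    if not topic["eligible"]:
        return None
    return 100 * sum(weights[f] * topic[f] for f in FEATURES)


def score_with_interval(topic, history, n_boot=500, seed=0):
    """Point score plus a 95% percentile-bootstrap interval."""
    if not topic["eligible"]:
        return None, None
    rng = random.Random(seed)
    point = score(topic, fit_weights(history))
    boots = sorted(
        score(topic, fit_weights(rng.choices(history, k=len(history))))
        for _ in range(n_boot)
    )
    return point, (boots[int(0.025 * n_boot)], boots[int(0.975 * n_boot) - 1])
```

With only a handful of historical topics the interval will be wide, which is itself useful information when deciding how much to trust the ranking.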

Step 4: Validate and upgrade to ML when data permits

Hold back a set of historical topics to test your rubric. Measure precision/recall at your publish threshold, lift in the top deciles, and calibration (how close predicted probabilities are to observed rates). If you’ve accumulated enough data, fit a logistic regression for a binary outcome like “citation within 60 days.” Evaluate ROC‑AUC and log loss; inspect coefficients for interpretability. If signal interactions are non‑linear (e.g., freshness × schema), consider gradient boosting (XGBoost/LightGBM) and monitor PR‑AUC and calibration. Useful vendor references: Google Cloud’s propensity modeling patterns and SAS guidance on AUC/ROC and lift charts.
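Two of the holdout checks above, precision/recall at the publish threshold and top-decile lift, are simple enough to sketch without any ML library. The function names are hypothetical; ROC-AUC, log loss, and model fitting are left to whichever library you already use.

```python
from statistics import mean


def precision_recall(scores, labels, threshold):
    """Precision and recall when every topic scoring >= threshold is published."""
    predicted = [s >= threshold for s in scores]
    tp = sum(1 for p, y in zip(predicted, labels) if p and y == 1)
    fp = sum(1 for p, y in zip(predicted, labels) if p and y == 0)
    fn = sum(1 for p, y in zip(predicted, labels) if not p and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def top_decile_lift(scores, labels):
    """Citation rate in the top 10% of scores divided by the overall rate."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    k = max(1, len(ranked) // 10)  # at least one topic in the "decile"
    top_rate = mean(y for _, y in ranked[:k])
    base_rate = mean(labels)
    return top_rate / base_rate if base_rate else float("nan")
```

A lift well above 1.0 in the top decile suggests the rubric is ranking topics usefully even if the absolute scores are not yet well calibrated.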

Step 5: Prepare for production and technical readiness

For high‑scoring topics, ensure crawlability, indexation, and snippet eligibility. Align structured data with visible content—Google notes that mismatched schema won’t help and may be ignored. Keep answer capsules concise and factually sound, add author transparency, and plan a realistic update cadence. If you need tactics, see AEO Best Practices 2025: Executive Guide.

Step 6: Post‑publication monitoring and iteration

Disclosure: Geneo is our product. After publication, you can use Geneo to track cross‑engine citations and mentions (e.g., whether Google AI Overviews cite your page, how Perplexity references you, and if ChatGPT mentions your brand), then compare outcomes to predictions. This supports calibration and error analysis without making outcome guarantees. For agencies, white‑label reporting can publish share of voice and citation trends on a custom domain; see agency reporting options.

Whether you use a platform or manual tracking, the principle is the same: measure real citations/mentions by engine and mode, analyze near‑misses, adjust signal weights, and re‑score your backlog. Rinse and repeat.

Modeling notes: thresholds, metrics, and drift

Thresholds and confidence: Decide practical cutoffs (publish, revise, or defer) and report a confidence band. It’s better to say “70% ± 10%” than to give a single number.
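A small sketch of that decision rule follows. The cutoffs of 0.70 and 0.40 are placeholder assumptions to be tuned against your own history, and `decide` is a hypothetical helper.

```python
def decide(probability: float, half_width: float,
           publish_at: float = 0.70, defer_below: float = 0.40):
    """Map a predicted probability and its band to an action.

    Returns (action, label, straddles_cutoff). `straddles_cutoff` is True when
    the confidence band crosses a cutoff, i.e. the call is not clear-cut.
    """
    if probability >= publish_at:
        action = "publish"
    elif probability < defer_below:
        action = "defer"
    else:
        action = "revise"

    low, high = probability - half_width, probability + half_width
    straddles = any(low < cutoff <= high for cutoff in (publish_at, defer_below))
    label = f"{probability:.0%} ± {half_width:.0%}"
    return action, label, straddles
```

Topics flagged as straddling a cutoff are good candidates for a human review rather than an automatic call.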

Evaluation metrics: ROC‑AUC and PR‑AUC for separability; lift in top deciles for marketing relevance; calibration plots and Brier score for probability reliability. Google Cloud’s operational guidance on ML metrics and SAS calibration papers are useful references.
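For the probability-reliability metrics, the sketch below computes a Brier score and the rows of a binned calibration table. It assumes predictions are probabilities in [0, 1] and uses equal-width bins; the function names and the bin count are illustrative.

```python
from statistics import mean


def brier_score(probs, labels):
    """Mean squared error between predicted probability and the 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(probs)


def calibration_table(probs, labels, n_bins=5):
    """Rows of (bin_index, mean_predicted, observed_rate, count) for non-empty bins."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        index = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[index].append((p, y))
    return [
        (i, mean(p for p, _ in rows), mean(y for _, y in rows), len(rows))
        for i, rows in enumerate(bins)
        if rows
    ]
```

In a well-calibrated model, `mean_predicted` and `observed_rate` track each other closely in every row; large gaps show where the score over- or under-promises.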

Drift and divergence: Engines change. Re‑score topics after major updates and monitor cross‑engine divergence (variance in citation share). If one engine’s behavior shifts materially, retrain with a rolling window.
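A rough sketch of both ideas: flag engines whose citation share moved materially between two periods, and restrict training data to a rolling window. The 0.10 tolerance, the 180-day window, and the row shape (a dict with a `published` date) are assumptions for illustration only.

```python
from datetime import date, timedelta


def shifted_engines(previous_share: dict, current_share: dict,
                    tolerance: float = 0.10) -> set:
    """Engines whose citation share changed by more than `tolerance`."""
    return {
        engine
        for engine, share in current_share.items()
        if abs(share - previous_share.get(engine, 0.0)) > tolerance
    }


def rolling_window(history: list, today: date, days: int = 180) -> list:
    """Keep only historical topics published within the last `days` days."""
    cutoff = today - timedelta(days=days)
    return [row for row in history if row["published"] >= cutoff]
```

If `shifted_engines` returns a non-empty set after a major engine update, refitting weights on `rolling_window(history, today)` keeps older behavior from dominating the score.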

Risk controls and boundaries

Predictive scoring reduces waste; it doesn’t remove uncertainty. Keep these guardrails in place:

Scope limits: A score estimates propensity, not inclusion. Communicate uncertainty explicitly.

Bias and representativeness: Don’t overfit to one engine or locale. Audit performance by market and vertical.

YMYL caution: For health, finance, or safety topics, apply expert review and higher evidence thresholds. Minimize tool promotion; focus on accuracy and trust.

Compliance: Respect robots/meta directives for AI features; ensure schema reflects visible content. Per Google’s guidance, success in AI experiences follows from helpful, reliable content and technical readiness.

Next steps: A lightweight checklist

Define a 30–90 day prediction window and a binary outcome (“citation/mention occurs”).

Collect minimum viable signals: eligibility/indexation, E‑E‑A‑T proxies, freshness, structured data + answer capsules, semantic variants, cross‑engine history.

Build a weighted scorecard; calibrate with past topics; report confidence intervals.

Validate on holdout topics; if data supports, fit logistic regression; monitor ROC‑AUC, PR‑AUC, lift, and calibration.

Publish high‑scoring topics with technical readiness; monitor citations and iterate.

For deeper tactics on earning citations, see how to optimize content for AI citations.

The upside of adopting predictive scoring isn’t perfection; it’s discipline. It gives you a repeatable way to decide which new topics to produce first, reduce wasted cycles, and learn faster from the real behavior of AI engines. Ready to put a scoring loop around your next slate of topics? Let’s get specific and start measuring.