The Science Behind Tracking Keyword Rankings on Perplexity: Geneo’s Methodology Explained

Discover a framework for tracking keyword rankings and competitive benchmarking on Perplexity. Learn best practices, visibility metrics, and change-detection strategies, with actionable insights for executive decision-makers.

Perplexity doesn’t show a traditional results page with “positions.” It synthesizes an answer and surfaces citations and a source list you can open with one click. If you’re a brand marketing leader, this changes how you think about “keyword rankings.” Instead of asking, “What position are we?” you ask, “Are we cited, how prominently, and how has that changed versus competitors over time?” This white paper offers an executive-ready framework to measure Perplexity visibility credibly, with competitive comparison and change detection at the core.

What “Ranking” Really Means Inside Perplexity

Perplexity compiles relevant insights into an answer, displaying numbered inline citations and an associated source list that lets users verify provenance quickly. According to Perplexity’s Help Center guide on how it works, answers are built with real-time web retrieval, and the sources behind them can be inspected from within the result. Advanced modes like Deep Research orchestrate many searches and synthesize comprehensive reports with grouped citations, according to the Deep Research announcement.

So what counts as a “ranking” proxy in this environment? Rather than a ladder of ten blue links, you’re competing for the “footnotes and proof points” Perplexity uses to justify its answer. Credible proxies include whether you appear at all, how often you’re cited in the answer, where your domain sits in the displayed source list, the recommendation class of your mention (primary pick vs. list inclusion vs. neutral), and whether your presence persists across follow‑ups in the same thread.

The Measurement Model: From Mentions to Position‑Weighted Visibility

To make Perplexity visibility executive‑readable, translate raw citations into a small set of KPIs:

Appearance rate: In what share of your defined prompts does your brand appear (mention and/or citation) at least once?

Citation rate and density: In what share of prompts is your domain cited, and how many citations from your domain appear in each answer?

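Position‑weighted visibility: Apply reciprocal weights by source order, so the source at position k receives a weight of 1/k (1.00 for the first source, 0.50 for the second, 0.33 for the third, and so on). This adapts reciprocal-rank weighting, familiar from search evaluation metrics such as mean reciprocal rank, to Perplexity’s source list so the score reflects how prominently you appear.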

Recommendation class: Tag each occurrence as a top recommendation, a list inclusion, or a neutral mention to capture qualitative prominence and intent fit.

Sentiment layer (optional): Track polarity (positive/neutral/negative) of the language around your brand; queue low‑confidence classifications for human review. For definitions and governance practices across AI visibility metrics, see our overview on AI Visibility and KPIs.
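The first four KPIs reduce to simple arithmetic over per-prompt records. The Python sketch below shows that arithmetic under a few assumptions. It uses a hypothetical `PromptResult` record, which is not a Geneo or Perplexity schema. Each record holds whether the brand was mentioned in the answer text and the cited domains in displayed order. Domain matching is exact, so real data would need normalization first.

```python
from dataclasses import dataclass, field


@dataclass
class PromptResult:
    """One scanned prompt (hypothetical record shape for illustration)."""
    prompt_id: str
    brand_mentioned: bool  # brand named in the answer text
    # Domains in the order Perplexity displayed them in the source list.
    source_domains: list[str] = field(default_factory=list)


def reciprocal_weight(position: int) -> float:
    """Weight for a 1-based source position: 1/1, 1/2, 1/3, ..."""
    return 1.0 / position


def kpis(results: list[PromptResult], domain: str) -> dict[str, float]:
    """Appearance rate, citation rate/density, and mean position-weighted score."""
    n = len(results)
    if n == 0:
        return {"appearance_rate": 0.0, "citation_rate": 0.0,
                "citation_density": 0.0, "position_weighted": 0.0}
    appeared = cited = total_citations = 0
    weighted = 0.0
    for r in results:
        # 1-based positions where this domain appears in the source list.
        positions = [i for i, d in enumerate(r.source_domains, start=1) if d == domain]
        if r.brand_mentioned or positions:  # mention and/or citation
            appeared += 1
        if positions:
            cited += 1
        total_citations += len(positions)
        weighted += sum(reciprocal_weight(p) for p in positions)
    return {
        "appearance_rate": appeared / n,
        "citation_rate": cited / n,
        "citation_density": total_citations / n,
        "position_weighted": weighted / n,  # averaged per prompt
    }
```

This sketch sums every occurrence of your domain rather than counting only its best position. That is a design choice, and it rewards holding several slots in one source list. If you prefer one score per prompt, take the maximum weight instead.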

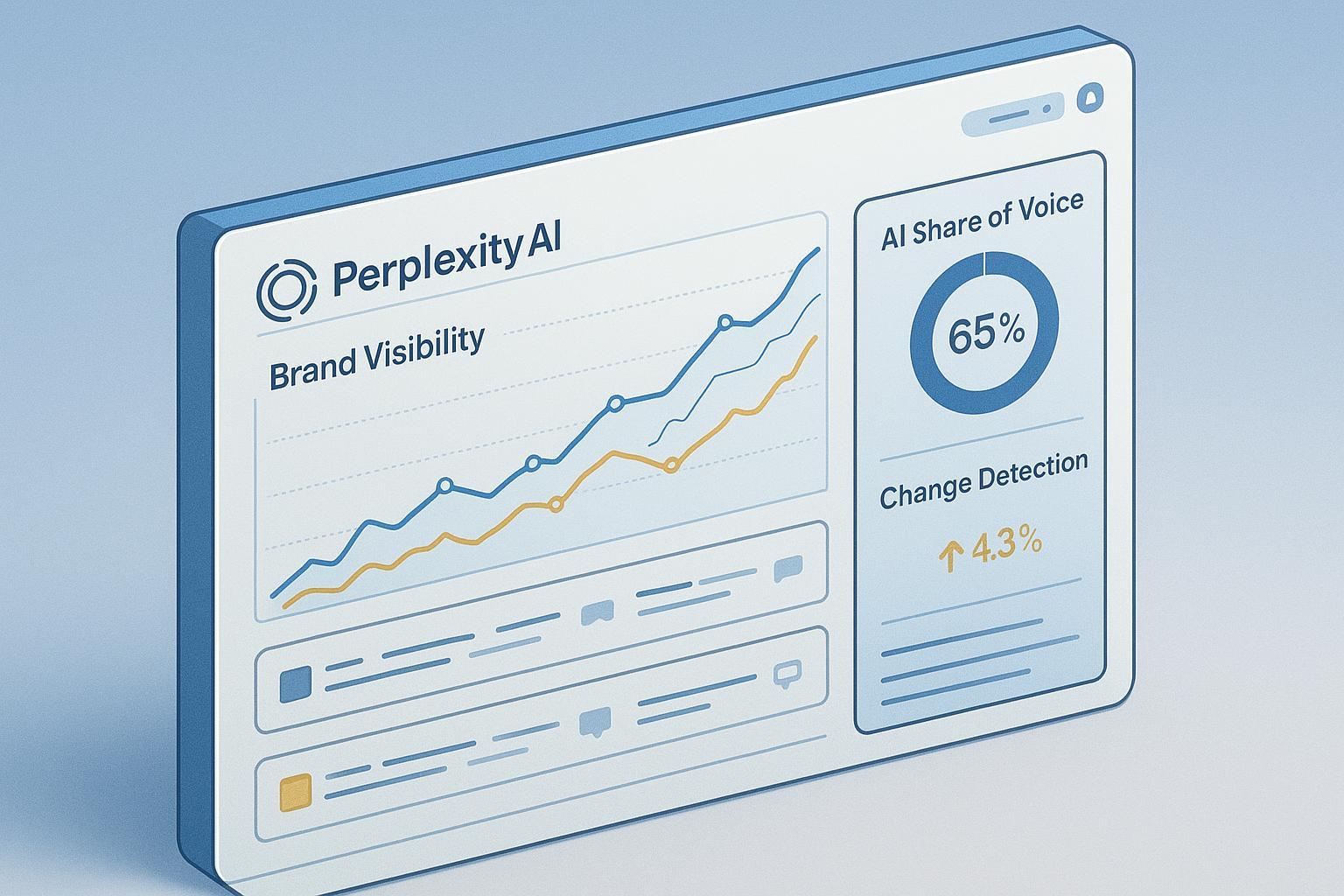

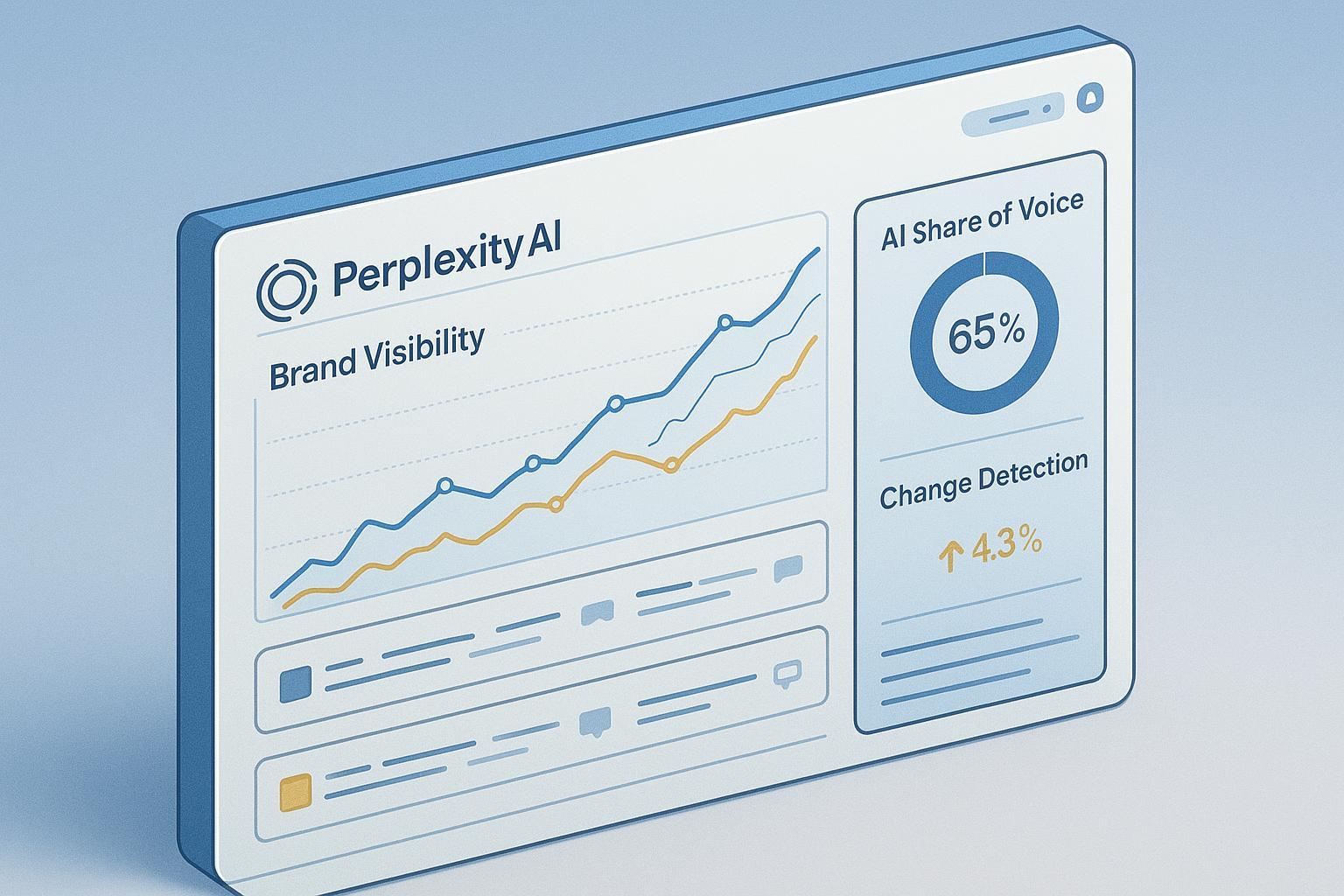

Roll these into an AI Share of Voice (AI SOV) view: your brand’s position‑weighted visibility divided by the combined position‑weighted visibility of all tracked brands (yours included), multiplied by 100, so that shares across brands sum to 100. Cross‑engine comparisons are helpful because behavior varies by platform. Industry tools and primers, such as Conductor’s AI search performance framing, discuss AI SOV rollups and competitive tracking.
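AI SOV is a ratio over a single prompt set. This minimal sketch assumes "the total" means the sum over every tracked brand, your own included, so the shares add up to 100. The brand names in the test data are placeholders.

```python
def ai_share_of_voice(scores: dict[str, float]) -> dict[str, float]:
    """Map brand -> AI SOV (%), given brand -> position-weighted visibility.

    All scores must come from the same prompt set and settings.
    """
    total = sum(scores.values())
    if total == 0:
        # Nobody was cited; report zero share rather than dividing by zero.
        return {brand: 0.0 for brand in scores}
    return {brand: 100.0 * score / total for brand, score in scores.items()}
```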

A Reproducible Protocol for Executives (Without the Jargon)

Perplexity can change citations and source ordering as the web changes. Reproducibility improves when you fix key variables and log them rigorously. The table below outlines what to control and capture during each run:

| Variable to Control | Why It Matters | What to Log |
|---|---|---|
| Subscription tier (Free/Pro/Enterprise) | Features and retrieval depth differ; Pro and Enterprise access advanced modes that can change sources | Tier, account, quota status; refer to plan overview |
| Model selection | Models may differ in reasoning/retrieval behavior | Model name/version; see models overview |
| Retrieval mode | Quick vs. Pro Search; Deep Research on/off changes depth and citation set | Mode used; Deep Research flag; see the Pro Search quickstart and the Deep Research announcement |
| Source constraint | Enterprise “Choose sources” (Web, Org Files, Web+Org Files, None) affects citation pool | Setting used; see focus/source setting reference |
| Prompt version | Small phrasing shifts can change retrieval and citations | Canonical prompt text and version ID |
| Timestamp & freshness | Real‑time retrieval means drift over time | Strict timestamps; evidence snapshots (screenshots or saved answers) |
| Programmatic evidence | When using the API, store structured sources | search_results payload; see Search API quickstart |
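When you log programmatic evidence, a small parser can turn the stored payload into the ordered domain list that the KPIs need. This sketch makes an assumption about the payload shape: a dict whose `search_results` entry is a list of objects with a `url` field. Confirm the exact shape against the Search API quickstart before relying on it.

```python
from urllib.parse import urlparse


def ordered_domains(payload: dict) -> list[str]:
    """Return source domains in the order they appear in the payload.

    ASSUMPTION: payload["search_results"] is a list of dicts carrying a "url".
    Entries without a URL are skipped.
    """
    domains = []
    for item in payload.get("search_results", []):
        url = item.get("url")
        if not url:
            continue
        host = urlparse(url).hostname or ""  # hostname is lowercased by urlparse
        # Treat www.example.com and example.com as the same domain.
        if host.startswith("www."):
            host = host[4:]
        domains.append(host)
    return domains
```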

Two practices are non‑negotiable: snapshot every answer (so the citations and source list are visible) and record the exact timestamp. Without these, you can’t defend changes or audit anomalies later.
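A snapshot only defends a change if you can show when it was taken and that it has not been altered since. This sketch pairs a timezone-aware UTC timestamp with a SHA-256 digest of the saved screenshot or answer bytes, plus whatever run settings you log. The function and field names are hypothetical.

```python
import hashlib
from datetime import datetime, timezone


def evidence_record(prompt_id: str, snapshot_bytes: bytes,
                    snapshot_path: str, settings: dict) -> dict:
    """Build an audit record for one saved answer.

    Call this immediately after saving the snapshot so that "captured_at"
    reflects capture time. The SHA-256 digest lets a reviewer confirm later
    that the stored file still matches what was logged.
    """
    return {
        **settings,  # tier, mode, model, etc. (hypothetical keys); fixed fields below win
        "prompt_id": prompt_id,
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "snapshot_path": snapshot_path,
        "snapshot_sha256": hashlib.sha256(snapshot_bytes).hexdigest(),
    }
```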

Competitive Comparison and Change Detection (The Centerpiece)

Executives don’t just need visibility—they need explanations. Who gained or lost ground this week, and why? Here’s a pragmatic workflow that keeps competitive change detection front and center:

Build a prompt library mapped to buying stages (e.g., 25 “money prompts”). Fix your tier, model, and retrieval/source settings for consistency.

Run weekly scans. For each prompt, log brand presence, citation count, position‑weighted score, recommendation class, and the cited URLs.

Produce a delta log. For each prompt, record who replaced whom since last period and where (answer citations vs. source list). Annotate likely drivers: competitor page refreshes, new studies, PR hits.

Investigate anomalies with a checklist and evidence. Verify the cited pages, note any homepage/mirror link substitutions, and capture snapshots.

Triangulate with content operations. When you lose visibility, inspect competitor pages: freshness, structured data, clear methods, first‑party studies. Prioritize counter‑actions (refresh your page, add FAQs, implement schema, publish validated data assets).

Report monthly. Show trend charts for AI SOV and position‑weighted scores, before/after snapshots for key prompts, and a narrative of “what changed and why,” alongside prioritized next actions.
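The delta log step comes down to comparing one prompt's ordered source list across two periods. This sketch takes two lists of domains as input (how you collect them is up to your tooling). It reports which domains were gained, lost, or moved, and which positions changed hands. Annotating the likely drivers remains a human step.

```python
from itertools import zip_longest


def first_positions(domains: list[str]) -> dict[str, int]:
    """Map each domain to its first (best) 1-based position."""
    positions: dict[str, int] = {}
    for i, d in enumerate(domains, start=1):
        positions.setdefault(d, i)
    return positions


def delta_log(previous: list[str], current: list[str]) -> dict:
    """Summarize how one prompt's source list changed between two scans."""
    prev_pos, curr_pos = first_positions(previous), first_positions(current)
    return {
        "gained": [d for d in curr_pos if d not in prev_pos],
        "lost": [d for d in prev_pos if d not in curr_pos],
        "moved": {d: (prev_pos[d], curr_pos[d]) for d in curr_pos
                  if d in prev_pos and prev_pos[d] != curr_pos[d]},
        # (position, old occupant, new occupant); None marks an empty slot.
        "replacements": [(pos, old, new) for pos, (old, new)
                         in enumerate(zip_longest(previous, current), start=1)
                         if old != new],
    }
```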

Anomaly checklist (use this to separate signal from noise):

Prompt drift: Did phrasing or intent change?

Mode/tier/model drift: Did your settings change (or quotas force a mode switch)?

Source restriction toggles: Did “Choose sources” limit or expand the pool?

Time‑based variance: Did a new study or major page update enter the corpus?

Citation reliability: Are any citations wrong, paywalled, or substituted with mirrors?
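Several checklist items can be checked mechanically before anyone interprets a shift: prompt drift, mode, tier, and model drift, and source toggles. This sketch compares the logged settings of two runs. The field names are hypothetical and should match whatever your run log actually records.

```python
# Hypothetical field names; align them with your own run log.
CONTROLLED_FIELDS = (
    "tier", "model", "mode", "deep_research", "source_constraint", "prompt_version",
)


def settings_drift(previous_run: dict, current_run: dict,
                   fields: tuple = CONTROLLED_FIELDS) -> dict:
    """Return {field: (previous, current)} for every controlled setting that changed.

    A missing field reads as None, so a field logged in only one run counts as drift.
    """
    drift = {}
    for name in fields:
        before, after = previous_run.get(name), current_run.get(name)
        if before != after:
            drift[name] = (before, after)
    return drift
```

An empty result means the settings held steady, so any citation shift is more likely a time-based or content change.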

Why the extra rigor? Independent evaluations show Perplexity is transparent but not infallible. A comparative study by the Tow Center for Digital Journalism, published in Columbia Journalism Review, found that Perplexity produced fewer incorrect news answers than peer tools but was still wrong in 37% of tested cases, so verification is essential. Treat citations as auditable leads, not ground truth.

Governance and Reporting That Leaders Can Trust

Assign clear ownership for prompt libraries, weekly scans, and monthly executive rollups. Establish KPIs (appearance rate, position‑weighted visibility, AI SOV, recommendation class distribution, sentiment where relevant) and define confidence bands based on observed variance. Maintain an evidence repository with snapshots, timestamps, and cited URLs for every run.
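One simple way to set bands from observed variance is a control-chart style rule. Under this rule, a week is flagged only when its score falls outside the historical mean plus or minus k sample standard deviations. This is a heuristic sketch, not a formal statistical confidence interval. The default k = 2 is an assumption, and the rule needs at least two past observations.

```python
from statistics import mean, stdev


def variance_band(history: list[float], k: float = 2.0) -> tuple[float, float]:
    """Return (low, high) = mean ± k * sample standard deviation of past scores."""
    if len(history) < 2:
        raise ValueError("need at least two past observations")
    center = mean(history)
    spread = stdev(history)
    return center - k * spread, center + k * spread


def is_outside_band(value: float, history: list[float], k: float = 2.0) -> bool:
    """True when a new weekly score falls outside the band built from history."""
    low, high = variance_band(history, k)
    return value < low or value > high
```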

For governance patterns and an audit‑friendly cadence, see the executive guide on AEO/GEO best practices and the how‑to on performing an AI visibility audit.

Practical Example: Turning Weekly Scans into Decisions

Imagine you track 25 commercial-intent prompts weekly. Over two months, your appearance rate holds steady, but your position‑weighted visibility drops five points in three prompts where a competitor’s fresh comparison page is now the first cited source. The delta log shows they published a methods section and linked supporting data.

Disclosure: Geneo is our product. In this scenario, Geneo can be used to centralize Perplexity prompt libraries, log weekly scans, and surface competitive change alerts (e.g., “Competitor X replaced your citation at Prompt Y”). The workflow remains the same whether you use a platform or a spreadsheet: you still validate citations, capture snapshots, and translate shifts into content actions. Keep the playbook pragmatic—refresh your comparison page, add structured FAQs, and publish a lightweight data explainer that Perplexity can cite.

Prefer a manual baseline? Use a structured sheet with columns for prompt, tier/mode/model, timestamp, brand presence, citation count, position‑weighted score, recommendation class, cited URLs, and a link to the screenshot. Run weekly for 8–12 weeks to establish variance bands before making high‑stakes decisions.
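The manual sheet can be produced with the standard library alone. This sketch writes the columns listed above to CSV. Two details are assumptions: the exact column names, and the split of tier/mode/model into three columns.

```python
import csv
import io

# Column names are assumptions based on the fields listed in the text.
COLUMNS = [
    "prompt", "tier", "mode", "model", "timestamp", "brand_present",
    "citation_count", "position_weighted_score", "recommendation_class",
    "cited_urls", "screenshot_link",
]


def write_sheet(rows: list[dict], stream: io.TextIOBase) -> None:
    """Write one CSV row per prompt run; unknown keys raise ValueError."""
    writer = csv.DictWriter(stream, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        out = dict(row)
        # Keep each run on one row by joining multiple cited URLs into one cell.
        if isinstance(out.get("cited_urls"), list):
            out["cited_urls"] = " ".join(out["cited_urls"])
        writer.writerow(out)
```

When writing to a file, open it with `newline=""`, as the `csv` module documentation recommends, for example `with open("perplexity_scans.csv", "w", newline="") as f: write_sheet(rows, f)`. The file name here is hypothetical.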

Improving Your Odds of Being Cited in Perplexity

You can’t “optimize for positions,” but you can improve your likelihood of being cited.

Publish answer‑ready pages: clear definitions, scannable sections, methods/data, and a direct response to the prompt’s intent.

Strengthen provenance: add bylines, references, and links to primary data; avoid thin or derivative content.

Implement structured data and clean markup: make it easier for retrieval systems to parse your pages and recognize your topical authority.

Maintain freshness: update with recent studies, examples, and clarified guidance.

Earn credible mentions: digital PR and expert quotes on reputable domains increase your footprint in the corpus.
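For the structured-data item, FAQ content can be expressed as JSON-LD using the schema.org `FAQPage` type. This sketch only generates the markup. Whether and how Perplexity's retrieval uses it is an open assumption, so treat it as a hygiene step rather than a citation guarantee.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, indent=2, ensure_ascii=False)
```

Embed the output in a `<script type="application/ld+json">` tag on the page whose visible FAQ text it mirrors. The markup should describe content readers can actually see.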

For a deeper playbook tailored to AI citation models, see how to optimize content for AI citations.

Alternatives, Limits, and What Not to Overpromise

Manual tracking is slow but auditable; automation scales but still requires human verification. Don’t promise precision beyond your evidence. Establish confidence bands for each KPI based on observed variance and note when prompt intent may have shifted. Remember, Perplexity’s citations are transparent yet occasionally wrong or substituted—so always click through.

If your leadership asks, “Can we get back to position #1?” the answer is: there is no #1 in Perplexity. There is citation prominence and recommendation quality. The most reliable path is disciplined tracking, competitive change detection, and content that deserves to be cited.

Next Steps

If you manage a brand or agency program and want executive‑ready reporting without building everything from scratch, consider neutral, auditable workflows and tools that support weekly scans, delta logs, and white‑label dashboards. For agencies, see Geneo’s white‑label reporting overview. Then, set your cadence, define your prompt library, and start logging. The sooner you capture changes, the sooner you can explain—and improve—them.