Optimizing SaaS Content for ChatGPT, Perplexity, and Google AI

Pro strategies for SaaS content optimization on ChatGPT, Perplexity, and Google AI. Evidence-based best practices and tracking for agency professionals.

The AI answer layer isn’t a sideshow anymore; it’s where many SaaS buyers first see (or don’t see) your brand. Exposure is uneven and volatile, but it’s measurable. Multiple large‑scale studies in 2025 reported that when Google’s AI experiences trigger, traditional organic CTR can drop for those queries, with declines ranging from roughly one‑third to well over half depending on dataset and timeframe. For example, Semrush’s late‑2025 analysis found month‑to‑month variability in AI Overviews prevalence, with notable CTR declines for impacted queries, while Search Engine Land covered research estimating much steeper CTR drops in certain cohorts. For scope, methodology, and caveats, see Semrush on AIO prevalence and CTR impact (Dec 2025) and Search Engine Land on CTR drops (Nov 2025).

If you run content for a SaaS brand or an agency, the mandate is clear: design pages that are easy for AI systems to cite, summarize, and trust—without abandoning the fundamentals of search.

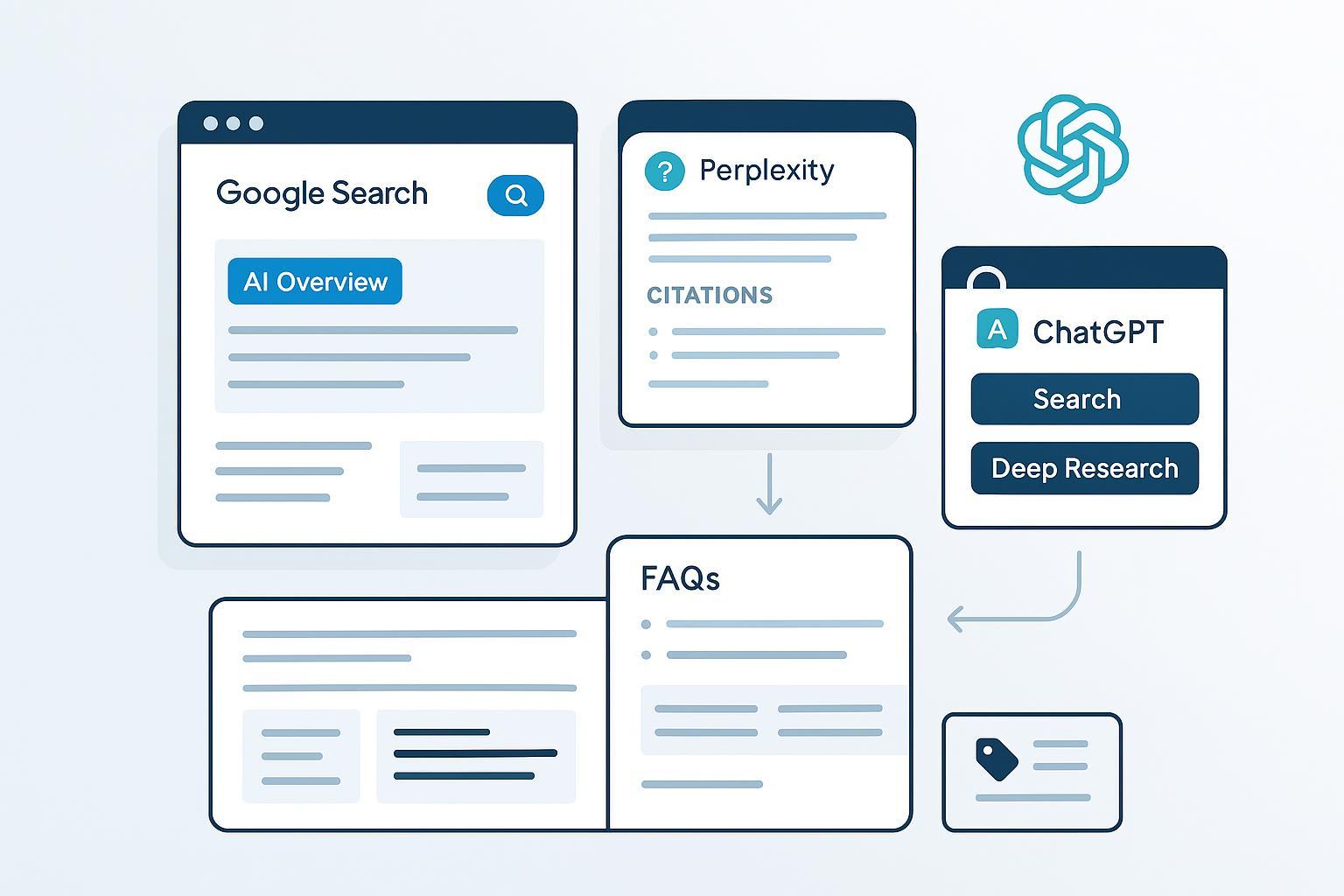

What’s different about ChatGPT, Perplexity, and Google AI

All three surfaces present synthesized answers, but their expectations feel different from a publisher’s perspective.

Google AI (AI Overviews and AI Mode) draws from the open web and links sources so users can “explore more on the web.” Google’s own guidance emphasizes that there’s no special markup for eligibility; success rests on standard Search best practices and people‑first content, as explained in AI features and your website (2025).

Perplexity centers transparency. In deeper modes, it aggregates many sources and displays citations inline and in a bibliography‑like list. That pushes your content toward crisp claims and verifiable facts; their product explainer for Deep Research outlines how multi‑query synthesis works in Introducing Deep Research.

ChatGPT’s Search and Deep Research can surface external sources and show steps/sources used, but OpenAI doesn’t publish hard rules for when links appear. Practically, you need statements that are unambiguous and citable, with clear anchors; see Introducing ChatGPT Search for capability context.

Implication: structure trumps fluff. The same SaaS page should present short, answer‑first passages; cite authoritative references; and segment supporting details so an AI system can lift what it needs without misreading the page.

Build a SaaS content architecture that travels across AI surfaces

Think of each priority page as a bundle of citation‑ready modules. Lead with a 40–60‑word answer block for each key sub‑question. Treat it like a quotable executive summary for that slice of the problem. Support each block with specific steps, examples, and a clear rationale. Where appropriate, reference primary data or authoritative docs in‑line. Cover the whole cluster—Google describes a “fan‑out” behavior that broadens the supporting sites and subtopics surfaced; your cluster should preempt those angles with comparisons, alternatives, pricing nuances, and implementation notes. Finally, make freshness obvious. Include “last updated” and change notes on living docs and implementation guides; fast‑moving platforms reward recent, trustworthy information.
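The 40–60‑word target for answer blocks is easy to check mechanically. The following is a minimal sketch, assuming a simple whitespace-based word count; the function names `word_count` and `check_answer_block` are hypothetical helpers, not part of any tool mentioned here.

```python
import re


def word_count(text: str) -> int:
    """Count whitespace-separated tokens that contain a letter or digit."""
    return sum(1 for token in text.split() if re.search(r"\w", token))


def check_answer_block(text: str, low: int = 40, high: int = 60) -> tuple[bool, int]:
    """Return (within_range, count) for a candidate answer block.

    The 40-60 default mirrors the range suggested in the text; adjust as needed.
    """
    count = word_count(text)
    return (low <= count <= high, count)


if __name__ == "__main__":
    draft = "Our API rate limit is 100 requests per minute on the Starter plan."
    ok, count = check_answer_block(draft)
    print(f"{count} words, within range: {ok}")
```

Running a check like this across the lead paragraph of each section gives a quick list of blocks that are too thin to quote or too long to lift cleanly.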

If you need a systematic way to benchmark your current exposure first, use an audit framework. This walkthrough lays out a practical starting point: How to perform an AI visibility audit. For hands‑on on‑page patterns that earn citations, this guide provides concrete formats: Optimize content for AI citations.

Platform playbooks: what to change and where it matters most

Google AI (AI Overviews and AI Mode)

Start with the pages that answer real tasks and comparisons: integration guides and implementation checklists; product‑vs‑competitor comparisons and “best for” use cases; and straight answers on pricing, limits, and security/compliance. On‑page, insert short answer boxes above the fold for top sub‑questions, and keep them self‑contained, factual, and source‑aware. Clarify entities by using consistent product and company names; add sameAs links and clean Organization/Product (or SoftwareApplication) schema where the page intent warrants it. Google states there’s no AIO‑specific markup; stick to Search fundamentals per AI features and your website. Expect uneven exposure and plan for the math: AI experiences don’t fire on every query, and CTR impacts vary. Industry tracking in 2025 showed wide swings by month and dataset; use these findings directionally, not as absolutes. Semrush’s late‑year report and Search Engine Land’s CTR impact coverage summarize the range.

Perplexity (Quick, Pro, Deep)

Perplexity rewards “show your work” content. Publish primary data (benchmarks, teardown analyses) with methods and dates. Use scannable headings, and add brief numbered steps only where a process needs them; include links to source evidence inside the sentence, not as dangling footnotes. Maintain a crisp summary at the top; Perplexity can cite that directly while also pulling deeper detail in Pro/Deep modes. For how its deeper mode works, see Introducing Deep Research. Where to focus first: integration pages and best‑practice guides that contain verifiable settings, code, or benchmarks. If your SaaS has an API, publish short, citable explanations next to code blocks: think “what this endpoint does in one sentence,” then details.

ChatGPT (Search and Deep Research)

Because there’s no formal, public rulebook for when ChatGPT links to the open web, reduce ambiguity in the content itself. Place decisive, quotable claims near the top of sections, with dates and citations where appropriate. Ensure canonicalization and internal anchors (for example, #implementation-steps) so snippets resolve to the right spot when referenced. Write documentation and comparison pages that can be summarized in a few sentences without losing accuracy. For feature context, see Introducing ChatGPT Search.
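Stable internal anchors are easier to maintain when they are derived from headings by one consistent rule. Here is a minimal sketch, assuming a plain lowercase-and-hyphenate convention; `heading_to_anchor` and `unique_anchors` are hypothetical helpers, and your CMS may already generate IDs differently.

```python
import re
import unicodedata


def heading_to_anchor(heading: str) -> str:
    """Turn a heading into a lowercase, hyphen-separated anchor id."""
    normalized = unicodedata.normalize("NFKD", heading)
    ascii_only = normalized.encode("ascii", "ignore").decode("ascii")
    return re.sub(r"[^a-z0-9]+", "-", ascii_only.lower()).strip("-")


def unique_anchors(headings: list[str]) -> list[str]:
    """Generate anchors for a page, suffixing repeats so each id is unique."""
    seen: dict[str, int] = {}
    anchors = []
    for heading in headings:
        base = heading_to_anchor(heading) or "section"
        seen[base] = seen.get(base, 0) + 1
        anchors.append(base if seen[base] == 1 else f"{base}-{seen[base]}")
    return anchors


if __name__ == "__main__":
    print(heading_to_anchor("Implementation steps"))  # implementation-steps
```

Whatever rule you choose, keep it fixed once pages are live; renaming anchors breaks deep links that assistants or other sites may already reference.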

Technical signals and schema that actually help SaaS content

There’s no AIO‑only switch to flip, but semantic clarity still matters. Use Organization and Article schema for thought leadership, and Product or SoftwareApplication when the page’s primary purpose is product information. Keep markup aligned to the page’s visible content. Employ FAQPage only when you show real Q&A. Google restricted some rich result displays in recent years (FAQ rich results, for example, were limited to a narrow set of sites in 2023), but Q&A formatting can still improve extractability for AI systems and user scanning. Validate in the Rich Results Test and avoid over‑marking. Keep entity references consistent (logo, brand, sameAs). Follow Search Central updates, because features change; Google adjusted structured data displays and guidance over 2024–2025, for example. A helpful mental model: schema isn’t a ranking cheat code; it’s a clarity layer that reduces guesswork when assistants parse your page.
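To make the schema guidance concrete, here is a minimal sketch that builds a SoftwareApplication JSON‑LD object with a nested Organization publisher and sameAs links. The function name, the example product, and all URLs are hypothetical; the property names come from schema.org. It does not attempt to satisfy the required properties of any specific rich result, so validate the output before shipping.

```python
import json


def software_application_jsonld(
    name: str,
    description: str,
    url: str,
    publisher_name: str,
    same_as: list[str],
    category: str = "BusinessApplication",
) -> str:
    """Build a JSON-LD string describing a software product and its publisher."""
    data = {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "description": description,
        "url": url,
        "applicationCategory": category,
        "publisher": {
            "@type": "Organization",
            "name": publisher_name,
            # sameAs lists other official profiles for the same entity.
            "sameAs": list(same_as),
        },
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Hypothetical product and profile URLs, for illustration only.
    print(
        software_application_jsonld(
            name="ExampleApp",
            description="Invoicing software for small teams.",
            url="https://example.com/product",
            publisher_name="Example Inc.",
            same_as=["https://www.linkedin.com/company/example-inc"],
        )
    )
```

The output would go inside a `<script type="application/ld+json">` tag, and every value should match what the page visibly says.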

Internationalization: make your clusters travel, not just translate

AI experiences now span many countries and languages, and their coverage expanded through 2025. Google announced broader availability of AI Overviews in 200+ countries/territories and 40+ languages across the year; see Google’s May 2025 expansion update for scope. For SaaS teams, localize priority clusters first—pricing, integrations, and compliance—using market‑specific examples and screenshots. Implement hreflang across language‑region pairs and keep entities and product names aligned to avoid fragmentation. Localize policies, data residency notes, and security certifications; these sections are frequently summarized by AI systems for buyers. For a broader answer‑engine strategy, this executive guide offers patterns you can adapt: AEO best practices for 2025.
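The hreflang step can be sketched as a small generator that emits one alternate link per language‑region version. This is an illustrative example under stated assumptions: `hreflang_links` is a hypothetical helper and the URLs are placeholders. Each localized page should carry the full set, including a link to itself.

```python
from html import escape
from typing import Optional


def hreflang_links(alternates: dict[str, str], x_default: Optional[str] = None) -> list[str]:
    """Return <link rel="alternate"> tags for each language-region version.

    `alternates` maps an hreflang code (for example "en-us") to the page URL.
    """
    links = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in sorted(alternates.items())
    ]
    if x_default:
        # x-default marks the fallback page for unmatched languages or regions.
        links.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />'
        )
    return links


if __name__ == "__main__":
    # Placeholder URLs for a localized pricing page.
    tags = hreflang_links(
        {
            "en-us": "https://example.com/pricing",
            "de-de": "https://example.com/de/pricing",
        },
        x_default="https://example.com/pricing",
    )
    print("\n".join(tags))
```

Generating the tags from a single mapping keeps every localized page in the cluster pointing at the same set of alternates.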

Measurement and iteration for agencies

Disclosure: Geneo is our product.

You can’t manage what you don’t measure, so build an AI visibility layer into your reporting stack. Track AI Mentions or citations: how often your pages are referenced across ChatGPT (when sources are shown), Perplexity, and Google AI features. Calculate Share of Voice within target clusters: your mentions divided by all mentions across a defined competitive set. Monitor the platform mix and any sentiment signals, and record Time‑to‑Citation, the lag from a content change to observable inclusion, so you can tune refresh cadence.
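Both metrics reduce to simple arithmetic once mentions are logged. The sketch below assumes Share of Voice is a brand’s mentions divided by all mentions in the competitive set, and that Time‑to‑Citation is measured in whole days; the function names and brand labels are hypothetical, and a given tool may define these metrics differently.

```python
from collections import Counter
from datetime import date
from typing import Iterable, Optional


def share_of_voice(mentions: Iterable[str], brand: str) -> float:
    """Fraction of logged mentions that belong to `brand` (0.0 if none logged)."""
    counts = Counter(mentions)
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


def time_to_citation_days(changed_on: date, first_cited_on: Optional[date]) -> Optional[int]:
    """Days between a content change and the first observed citation.

    Returns None when no citation has been observed yet.
    """
    if first_cited_on is None:
        return None
    return (first_cited_on - changed_on).days


if __name__ == "__main__":
    # Hypothetical log: one entry per observed brand mention in a cluster.
    observed = ["acme", "acme", "rival", "other"]
    print(share_of_voice(observed, "acme"))  # 0.5
    print(time_to_citation_days(date(2025, 3, 1), date(2025, 3, 15)))  # 14
```

Computing both per cluster and per platform makes it easier to see where a refresh actually moved the numbers.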

In practice, agencies often rely on a dedicated AI visibility tool to centralize this telemetry. For example, Geneo provides white‑label dashboards that monitor ChatGPT, Perplexity, and Google AI for brand mentions, daily history, and aggregated metrics like Share of Voice and AI Mentions. It’s useful when you need a client‑facing portal without stitching together screenshots or one‑off tests. Keep the narrative neutral: the goal is to explain what changed, what the numbers mean, and which page updates you’ll ship next. If you prefer a homegrown approach, standardize prompts for periodic checks, log them with timestamps, and corroborate with analytics events when AI surfaces drive traffic.
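For the homegrown route, a timestamped log can be as simple as one JSON line per manual check. This is a minimal sketch under stated assumptions: the record fields and the `log_check` helper are hypothetical, and the cited URLs are whatever you copied from the answer by hand; nothing here calls any platform’s API.

```python
import io
import json
from datetime import datetime, timezone
from typing import Optional
from urllib.parse import urlparse


def is_brand_url(url: str, brand_domain: str) -> bool:
    """True when the URL's host is the brand domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == brand_domain or host.endswith("." + brand_domain)


def log_check(stream, platform: str, prompt: str, cited_urls: list[str],
              brand_domain: str, checked_at: Optional[datetime] = None) -> dict:
    """Append one JSON line describing a manual prompt check to `stream`."""
    checked_at = checked_at or datetime.now(timezone.utc)
    record = {
        "checked_at": checked_at.isoformat(),
        "platform": platform,
        "prompt": prompt,
        "cited_urls": list(cited_urls),
        "brand_cited": any(is_brand_url(u, brand_domain) for u in cited_urls),
    }
    stream.write(json.dumps(record) + "\n")
    return record


if __name__ == "__main__":
    # In practice `stream` would be a file opened in append mode.
    buffer = io.StringIO()
    log_check(
        buffer,
        platform="perplexity",
        prompt="best invoicing software for small teams",
        cited_urls=["https://docs.example.com/pricing", "https://other.test/review"],
        brand_domain="example.com",
    )
    print(buffer.getvalue())
```

Keeping the prompt text identical between runs is what makes the log comparable over time.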

Timelines, volatility, and sprint planning

How quickly do changes reflect? It varies. Routine updates can surface within days to a few weeks; around core or spam updates, meaningful shifts can take 4–8+ weeks, and full recalibration may take longer. Google’s guidance on core updates is the best north star for expectations: Core updates overview. Treat these as ranges, not SLAs.

A three‑month sprint plan built around those ranges might look like this:

- Month 1: Ship answer‑first refactors to the top ten pages in two clusters; add missing schema; tighten citations; publish a fresh comparison page.

- Month 2: Review AI mentions and time‑to‑citation logs; refresh laggards; expand FAQs and add short “how it works” blocks on integration pages.

- Month 3: Internationalize one high‑intent cluster; launch a primary‑data benchmark post; reassess Share of Voice and platform mix; feed learnings back into the backlog.

Pitfalls to avoid (quick QA)

- Vague headings with no quotable answer underneath

- Over‑marking pages with schema that doesn’t match visible content

- Stale “last updated” dates on changing docs

- Long comparison pages without 40–60‑word section summaries

- Missing anchors and canonical issues that make snippets resolve poorly
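Some of these checks are easy to automate. As one example, the stale‑date pitfall can be caught with a short script. This is a sketch only: the 90‑day threshold is an arbitrary assumption, and `stale_pages` and the page paths are hypothetical.

```python
from datetime import date


def stale_pages(pages: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return URLs whose "last updated" date is older than `max_age_days`.

    `pages` maps a URL to the date shown in its "last updated" note.
    The 90-day default is an assumption; pick a cadence that fits each doc type.
    """
    return sorted(
        url
        for url, last_updated in pages.items()
        if (today - last_updated).days > max_age_days
    )


if __name__ == "__main__":
    # Hypothetical inventory of living docs.
    inventory = {
        "/docs/integrations/slack": date(2025, 1, 10),
        "/docs/pricing-limits": date(2025, 5, 20),
    }
    print(stale_pages(inventory, today=date(2025, 6, 1)))
```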

A brief vignette: a B2B SaaS team refactored its “Vendor alternatives” pages by adding 50‑word section answers, tightening citations to primary sources, and standardizing schema. Within six weeks, the team logged recurring inclusion in AI experiences for several comparison queries and observed steadier referral patterns on days when those queries spiked. They didn’t change everything—just the parts assistants quote first.

Where to go next

Pick one high‑intent cluster and refactor the top three pages into answer‑first modules this week. Add dates, anchors, and citation‑friendly phrasing to your implementation and comparison pages. Stand up a simple measurement loop—whether through an AI visibility platform or a repeatable manual log—and review it every month. If you’re already tracking the basics, your next edge is original data. Publish a small benchmark with a clear method and date. It’s the kind of artifact Perplexity, ChatGPT, and Google can cite without hesitation.