How to Build a GEO-Optimized Content Hub (2025 Best Practices)

Learn 2025's best practices for building a GEO-optimized content hub. Master AI search visibility, schema, E-E-A-T, and actionable analytics for digital brands.

If your content doesn’t show up inside AI answers, your audience may never see it. A GEO‑optimized content hub gives AI systems clean structure, credible identities, and quotable sections—so your brand gets cited when it matters. Below is a practitioner playbook to design, ship, and iterate a hub that performs across answer engines without chasing myths.

What a GEO‑optimized content hub means in 2025

A GEO hub is a topic center built for both human clarity and machine parsing. It organizes a core subject into a hub page (overview) and spokes (deep dives), each written as modular, answer‑ready blocks and backed by consistent entities and schema. Google states that inclusion in its AI experiences follows standard Search eligibility (indexable pages, helpful content, and clear signals), not a special tag. According to Google’s own documentation, you succeed by being eligible for Search and publishing reliable, people‑first content, not by gaming markup; see “AI features and your website” (Google, 2025) and the companion post “Succeeding in AI search experiences” (Google Search Blog, 2025).

In practice, winning hubs demonstrate topical depth, consistent internal linking, and entity clarity. For a grounded blueprint of GEO principles, review our primer on building long‑term GEO foundations.

Map your hub‑and‑spoke plan (before you write a word)

Start by selecting one high‑impact topic your brand can cover with authority and sustain for 6–12 months or longer. Then map user intents into discrete spokes and sub‑spokes. Think in tasks and questions, not just keywords.

- Hub (overview/Collection): defines the scope, links to all spokes, and summarizes key answers in short, citable blocks.

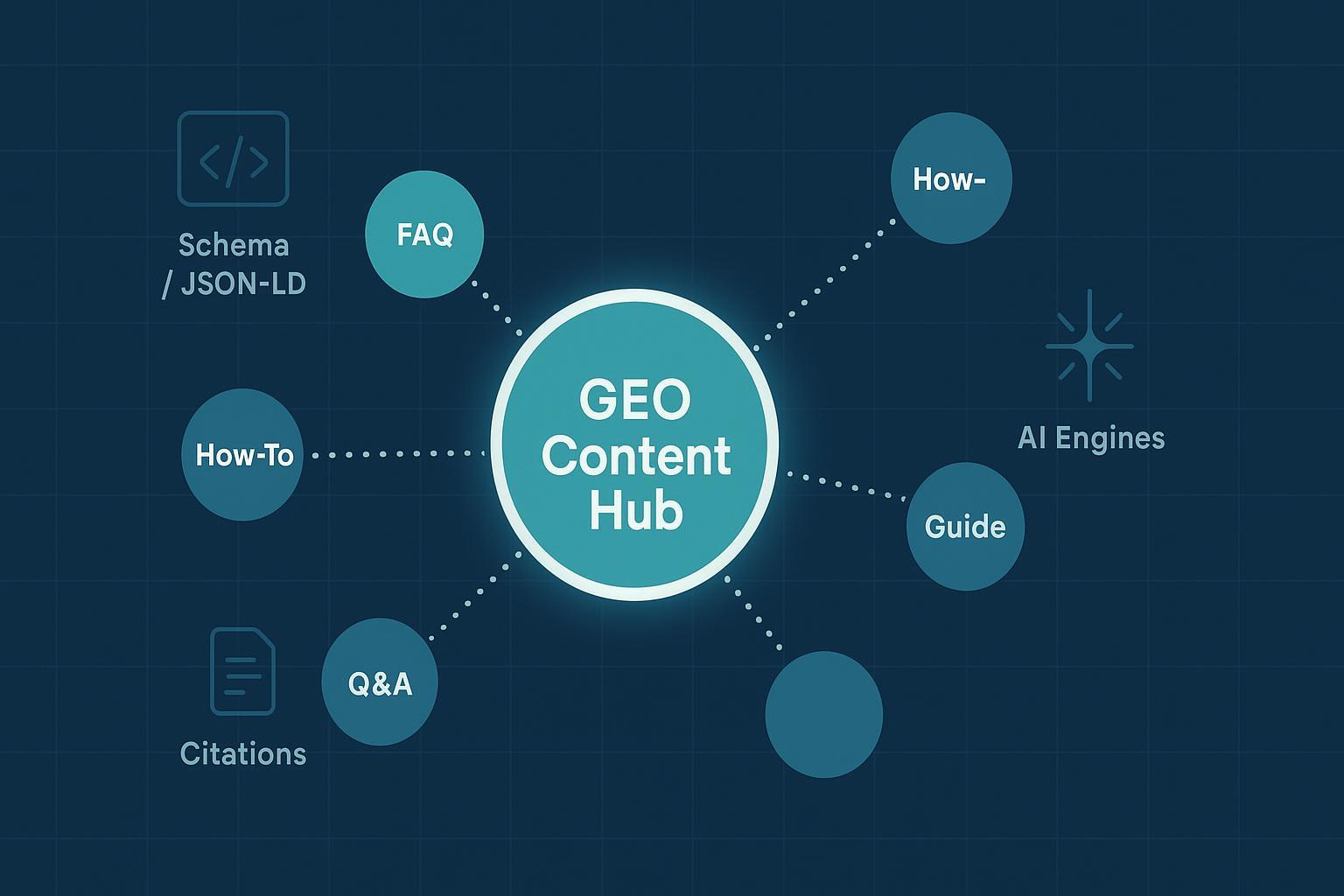

- Spokes: mix formats to match intent—Guides/Articles (in‑depth explanations), How‑To (stepwise), FAQPage (consolidated Q&A), Comparison (A vs. B), and Troubleshooting.

- Cross‑links: every spoke links back to the hub and laterally to adjacent intents; navigation is obvious to humans and machines.

Two quick sanity checks: Can a new reader find every spoke from the hub in two clicks? And can an AI agent extract a 40–80‑word definition or step summary from each page without losing context?
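You can script the first check, along with the "every spoke links back" rule, once you have an internal-link map. Below is a minimal Python sketch. It assumes you have already crawled your site into a dictionary that maps each page to its outgoing links; the `LINKS` data and the `/geo-hub/...` paths are hypothetical. It runs a breadth-first search from the hub to compute click depth, then flags spokes that sit too deep or never link back to the hub.

```python
from collections import deque

# Hypothetical internal-link graph: page path -> pages it links to.
# In practice you would build this from a crawl of your own site.
LINKS: dict[str, set[str]] = {
    "/geo-hub/": {"/geo-hub/schema/", "/geo-hub/faq/", "/geo-hub/measurement/"},
    "/geo-hub/schema/": {"/geo-hub/", "/geo-hub/schema/faqpage/", "/geo-hub/faq/"},
    "/geo-hub/schema/faqpage/": {"/geo-hub/", "/geo-hub/schema/", "/geo-hub/schema/faqpage/errors/"},
    "/geo-hub/schema/faqpage/errors/": {"/geo-hub/"},
    "/geo-hub/faq/": {"/geo-hub/schema/"},  # forgets to link back to the hub
    "/geo-hub/measurement/": {"/geo-hub/", "/geo-hub/faq/"},
}


def click_depths(links: dict[str, set[str]], start: str) -> dict[str, int]:
    """Breadth-first search: fewest clicks from `start` to every reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, set()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_hub(links: dict[str, set[str]], hub: str, max_clicks: int = 2) -> dict[str, list[str]]:
    """Flag spokes that are too deep (or unreachable) and spokes missing a link back to the hub."""
    depths = click_depths(links, hub)
    spokes = [page for page in links if page != hub]
    return {
        "too_deep": sorted(p for p in spokes if depths.get(p, max_clicks + 1) > max_clicks),
        "no_backlink": sorted(p for p in spokes if hub not in links[p]),
    }


print(audit_hub(LINKS, "/geo-hub/"))
# {'too_deep': ['/geo-hub/schema/faqpage/errors/'], 'no_backlink': ['/geo-hub/faq/']}
```

Unreachable spokes get a depth larger than `max_clicks`, so orphaned pages show up in `too_deep` instead of disappearing from the report.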

IA and internal links that AI can parse

AI systems tend to parse a site more reliably when its information architecture mirrors how people think. Use a stable URL pattern, a clear hierarchy, and breadcrumbs. The hub should behave like a curated collection: it orients readers to the topic, shows how its subtopics relate, and routes each reader to the best answer unit.

I recommend:

- A top‑level path such as “/topic/” (or “/hubs/topic/”) for the hub, with spokes nested beneath it as “/topic/subtopic/”.

- A visible breadcrumb trail on every page, paired with BreadcrumbList schema.

- Intent‑based navigation on the hub: How‑to, FAQs, Comparisons, and “related tasks.”

Write answer‑ready content blocks

Here’s the deal: AI answer engines prefer concise, self‑contained statements they can quote without rewriting the whole page. Structure each page so the “quotable unit” is unambiguous.

- Open with a 40–80‑word definition or summary that a model can cite verbatim without distorting meaning.

- Use question‑based H2/H3s that map to intents (“What is…?”, “How does…?”, “Which is better for…?”).

- Add short, verifiable stats with links to primary sources inside the text. Keep claims precise and dated.

- Include a short FAQ block on relevant spokes rather than scattering Q&A across multiple pages.
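You can lint part of this checklist before publishing. The sketch below assumes you have already extracted a page's opening summary and its H2/H3 text; that extraction step is out of scope. It checks the 40–80-word window and flags headings that neither end in a question mark nor start with a common question word. The word list is a hypothetical heuristic, not a standard.

```python
QUESTION_WORDS = {"what", "how", "which", "why", "when", "who", "where", "can", "should", "is", "does"}


def lint_answer_blocks(summary: str, headings: list[str], min_words: int = 40, max_words: int = 80) -> list[str]:
    """Return human-readable problems; an empty list means the page passes these checks."""
    problems = []
    words = len(summary.split())
    if not min_words <= words <= max_words:
        problems.append(f"opening summary has {words} words (target {min_words}-{max_words})")
    for heading in headings:
        tokens = heading.split()
        first = tokens[0].lower() if tokens else ""
        # Accept "What is GEO?" as well as "Which format is better for FAQs".
        if not heading.strip().endswith("?") and first not in QUESTION_WORDS:
            problems.append(f"heading is not phrased as a question: {heading!r}")
    return problems


print(lint_answer_blocks("GEO makes pages easy for AI engines to cite.", ["What is GEO?", "Schema tips"]))
# ['opening summary has 9 words (target 40-80)', "heading is not phrased as a question: 'Schema tips'"]
```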

For platform‑specific guidance on tailoring sections to ChatGPT, Perplexity, and Claude, review our practical playbook for optimizing content for AI answer engines.

Schema and entity setup that travels well across models

Robust, consistent JSON‑LD helps machines confirm who you are, what the page covers, and how pieces connect. Use a single @graph per page that includes Organization/Person identity, WebPage/CollectionPage, BreadcrumbList, and the appropriate content type (Article, FAQPage, HowTo). Validate with Google’s Rich Results Test and the Schema.org validator. For a pragmatic overview of implementation patterns, Brian Dean’s Schema Markup Guide (Backlinko, 2025) is a dependable reference, and we maintain a practitioner‑focused explainer in our structured data best practices for AI search.

A compact hub example you can adapt:

```json
{
  "@context": "https://schema.org",
  "@graph": [
    {
      "@type": "Organization",
      "@id": "https://example.com/#org",
      "name": "Example Brand",
      "url": "https://example.com",
      "logo": "https://example.com/logo.png",
      "sameAs": [
        "https://www.wikidata.org/entity/Q123456",
        "https://www.linkedin.com/company/example"
      ]
    },
    {
      "@type": "Person",
      "@id": "https://example.com/#author-jane",
      "name": "Jane Doe",
      "url": "https://example.com/authors/jane-doe",
      "affiliation": {"@id": "https://example.com/#org"}
    },
    {
      "@type": "WebPage",
      "@id": "https://example.com/geo-hub#page",
      "url": "https://example.com/geo-hub",
      "name": "GEO Content Hub",
      "breadcrumb": {"@id": "https://example.com/geo-hub#breadcrumb"},
      "author": {"@id": "https://example.com/#author-jane"},
      "publisher": {"@id": "https://example.com/#org"}
    },
    {
      "@type": "BreadcrumbList",
      "@id": "https://example.com/geo-hub#breadcrumb",
      "itemListElement": [
        {"@type": "ListItem", "position": 1, "name": "Home", "item": "https://example.com"},
        {"@type": "ListItem", "position": 2, "name": "GEO Content Hub", "item": "https://example.com/geo-hub"}
      ]
    },
    {
      "@type": "FAQPage",
      "@id": "https://example.com/geo-hub#faq",
      "mainEntity": [
        {
          "@type": "Question",
          "name": "What is GEO?",
          "acceptedAnswer": {
            "@type": "Answer",
            "text": "GEO optimizes content to be cited in AI answers."
          }
        }
      ]
    }
  ]
}
```

Use stable @id values to bind entities across your site, and sameAs to connect to authoritative profiles (Wikidata, LinkedIn, etc.). Keep schema in sync with on‑page content; mismatches erode trust signals.
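A broken @id reference quietly unbinds entities, so it is worth checking automatically. This sketch walks a page's @graph and reports every `{"@id": ...}` reference that no node in the same graph defines. It assumes single-page scope. If you deliberately reference nodes defined elsewhere on your site (for example, an Organization declared only on the homepage), those references will be flagged too. Check them against a site-wide set of @id values instead.

```python
import json


def dangling_ids(doc: dict) -> set[str]:
    """Return @id references that no node in this document's @graph defines."""
    graph = doc.get("@graph", [])
    defined = {node["@id"] for node in graph if "@id" in node}
    referenced: set[str] = set()

    def walk(value) -> None:
        if isinstance(value, dict):
            if set(value) == {"@id"}:  # {"@id": ...} alone is a reference, not a definition
                referenced.add(value["@id"])
            for child in value.values():
                walk(child)
        elif isinstance(value, list):
            for child in value:
                walk(child)

    for node in graph:
        walk(node)
    return referenced - defined


page_jsonld = """{
  "@context": "https://schema.org",
  "@graph": [
    {"@type": "Organization", "@id": "https://example.com/#org"},
    {"@type": "WebPage", "@id": "https://example.com/geo-hub#page",
     "publisher": {"@id": "https://example.com/#org"},
     "breadcrumb": {"@id": "https://example.com/geo-hub#breadcrumb"}}
  ]
}"""
print(dangling_ids(json.loads(page_jsonld)))
# {'https://example.com/geo-hub#breadcrumb'}
```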

E‑E‑A‑T you can demonstrate

E‑E‑A‑T matters here because Google’s AI features are tuned to surface helpful, reliable content. Google’s 2025 guidance emphasizes experience and trust signals (author credentials, transparent sourcing, and editorial standards) over tricks. See the Google Search blog note on AI experiences (2025) for what “people‑first” looks like. For how raters evaluate authenticity and deceptive signals, read coverage of the January 2025 Search Quality Rater Guidelines update, summarized by Search Engine Journal in “Updated Raters’ Guidelines target fake E‑E‑A‑T content” (SEJ, 2025).

Operationally, do three things consistently:

- Attribute pages to qualified authors with bios that list relevant experience and link to real profiles.

- Document editorial standards and a corrections policy; add visible last‑updated dates.

- Cite primary sources for data and definitions; avoid vague claims.
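You can also express two of these practices in markup. Here is a minimal sketch. It assumes the Person and Organization @id values from the hub example and a hypothetical editorial-standards URL. It builds an Article node with `author`, `datePublished`/`dateModified`, and `citation`, and sets the schema.org `publishingPrinciples` property on the Organization. The markup only helps if it mirrors what readers see, so the visible last-updated date should match `dateModified`.

```python
import json
from datetime import date


def article_node(url: str, headline: str, author_id: str, org_id: str,
                 published: date, modified: date, sources: list[str]) -> dict:
    """Article node tying a page to a named author, publisher, dates, and cited sources."""
    if modified < published:
        raise ValueError("dateModified must not precede datePublished")
    return {
        "@type": "Article",
        "@id": f"{url}#article",
        "headline": headline,
        "author": {"@id": author_id},          # Person node with the author's bio URL
        "publisher": {"@id": org_id},          # Organization node
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),  # should match the visible "last updated" date
        "citation": sources,                   # primary sources cited in the text
    }


organization = {
    "@type": "Organization",
    "@id": "https://example.com/#org",
    "name": "Example Brand",
    # Hypothetical URL of the page documenting editorial standards and corrections.
    "publishingPrinciples": "https://example.com/editorial-standards",
}

article = article_node(
    "https://example.com/geo-hub/schema-basics/",
    "How to add FAQPage schema to a hub",
    "https://example.com/#author-jane",
    "https://example.com/#org",
    published=date(2025, 1, 10),
    modified=date(2025, 3, 2),
    sources=["https://schema.org/FAQPage"],
)
print(json.dumps({"@context": "https://schema.org", "@graph": [organization, article]}, indent=2))
```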

Technical access that keeps you eligible

For Google, there’s no separate “AI‑only” crawler you need to unlock. Eligibility for its AI experiences aligns with standard Search inclusion: indexable pages, semantic HTML, mobile performance, and no blocked resources. Confirm the basics against the “AI features and your website” documentation (Google, 2025). Then maintain XML sitemaps with accurate lastmod values, avoid over‑blocking in robots.txt, and keep Core Web Vitals healthy. Other answer engines run their own crawlers (OpenAI’s OAI-SearchBot for ChatGPT search, for example), so check that your robots.txt rules don’t shut them out unintentionally. Don’t cloak or hide content; what you want cited should be present in the DOM and readable.
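Robots and sitemap hygiene are easy to check in CI. This sketch uses Python's standard `urllib.robotparser` to report which hub URLs a given user agent would be blocked from. It uses `xml.etree.ElementTree` to emit a minimal sitemap with `lastmod`. The robots.txt content, URLs, and dates are hypothetical. `Googlebot` and `OAI-SearchBot` are real crawler tokens, used here only as examples.

One caveat: `urllib.robotparser` applies the first matching prefix rule. It does not implement Google's wildcard or longest-match semantics, so treat its answer as a smoke test, not a guarantee of how Googlebot reads a complex file.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that accidentally blocks the hub's assets folder.
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/
Disallow: /geo-hub/assets/
"""

# Hypothetical hub URLs mapped to their last substantive edit (W3C date format).
HUB_PAGES = {
    "https://example.com/geo-hub/": "2025-03-02",
    "https://example.com/geo-hub/schema-basics/": "2025-02-18",
}


def blocked_urls(robots_txt: str, urls: list[str],
                 agents: tuple[str, ...] = ("Googlebot", "OAI-SearchBot")) -> list[tuple[str, str]]:
    """Return (agent, url) pairs that robots.txt disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [(agent, url) for agent in agents for url in urls if not parser.can_fetch(agent, url)]


def sitemap_xml(pages: dict[str, str]) -> str:
    """Serialize a minimal XML sitemap with one <url> per page and its lastmod."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for loc, lastmod in sorted(pages.items()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


to_check = list(HUB_PAGES) + ["https://example.com/geo-hub/assets/diagram.svg"]
print(blocked_urls(ROBOTS_TXT, to_check))
print(sitemap_xml(HUB_PAGES))
```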

Measure what matters (and iterate)

Because some answer engines send little or no referral traffic, you need a blended KPI view: track citations and coverage, not only clicks.

KPIs to establish and review monthly:

| KPI | What it tells you | Tools/Notes |
|---|---|---|
| AI citations/mentions per hub/spoke | Whether engines use your pages as sources | Manual sampling + GEO monitors; annotate changes |
| Hub coverage (spokes live, schema validated) | Execution completeness | Rich Results Test; Schema.org Validator |
| Entity graph completeness | Disambiguation strength | sameAs/@id audit; brand profile coverage |
| GSC impressions/clicks for hub/spokes | Search eligibility and trend | Google Search Console |
| On‑page engagement (scroll depth, time) | A rough proxy for content quality | GA4; consider session replays |
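Two of the execution KPIs in the table, hub coverage and entity graph completeness, reduce to simple ratios once you keep an inventory. Here is a minimal sketch with hypothetical field names. `schema_valid` is whatever result you recorded from the validators; this code does not call them.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass
class Spoke:
    url: str
    live: bool
    schema_valid: bool  # outcome you recorded from the Rich Results Test / Schema.org validator


def hub_coverage(spokes: list[Spoke]) -> float:
    """Share of planned spokes that are both live and schema-validated."""
    if not spokes:
        return 0.0
    return sum(s.live and s.schema_valid for s in spokes) / len(spokes)


def entity_completeness(same_as: list[str], target_hosts: list[str]) -> float:
    """Share of the profile hosts you care about that the Organization's sameAs links to."""
    if not target_hosts:
        return 0.0
    linked = {urlsplit(u).netloc for u in same_as}
    return sum(host in linked for host in target_hosts) / len(target_hosts)


plan = [
    Spoke("https://example.com/geo-hub/schema-basics/", live=True, schema_valid=True),
    Spoke("https://example.com/geo-hub/faq/", live=True, schema_valid=False),
    Spoke("https://example.com/geo-hub/measurement/", live=True, schema_valid=True),
    Spoke("https://example.com/geo-hub/comparisons/", live=False, schema_valid=False),
]
sameas = ["https://www.wikidata.org/entity/Q123456", "https://www.linkedin.com/company/example"]
targets = ["www.wikidata.org", "www.linkedin.com", "www.crunchbase.com"]  # hypothetical target list

print(f"hub coverage: {hub_coverage(plan):.0%}")                        # hub coverage: 50%
print(f"entity completeness: {entity_completeness(sameas, targets):.0%}")  # entity completeness: 67%
```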

Disclosure: Geneo is our product. One practical workflow is maintaining a rolling “AI citations log” for your hub: run weekly scripted checks and manual prompts for target queries, record which models cite which pages, and flag gaps. A GEO monitoring suite can speed this up; for example, Geneo tracks brand mentions and citations across AI answer engines, pairs them with sentiment analysis, and preserves historical query snapshots so you can compare before/after changes. Use any stack you prefer—the key is consistent, auditable tracking so your iteration cycles are data‑led.
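Whatever tool you use, the log itself can be a flat list of checks. The sketch below assumes a hypothetical record shape (date, engine label, query, cited URLs) that you fill from manual prompts or exported monitoring data; it is not Geneo's format or API. It counts citations per hub page and records which engines cite which pages. It also flags two kinds of gaps: pages that were never cited, and queries where no engine cited any hub page.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CitationCheck:
    """One logged answer: which engine, which query, and which URLs it cited."""
    checked_on: date
    engine: str                  # label you record, e.g. "Perplexity"
    query: str
    cited_urls: tuple[str, ...]  # empty tuple if the answer cited nothing


def citation_report(log: list[CitationCheck], hub_pages: list[str]) -> dict:
    pages = set(hub_pages)
    per_page: Counter = Counter()
    per_engine: dict[str, set[str]] = {}
    queries_with_hit: set[str] = set()
    for check in log:
        hits = pages.intersection(check.cited_urls)
        per_page.update(hits)
        per_engine.setdefault(check.engine, set()).update(hits)
        if hits:
            queries_with_hit.add(check.query)
    return {
        "per_page": per_page,
        "per_engine": per_engine,
        "gaps": [p for p in hub_pages if per_page[p] == 0],                    # hub pages never cited
        "uncited_queries": sorted({c.query for c in log} - queries_with_hit),  # no hub page cited
    }


log = [
    CitationCheck(date(2025, 3, 3), "Perplexity", "what is geo",
                  ("https://example.com/geo-hub/", "https://other.example/geo")),
    CitationCheck(date(2025, 3, 3), "ChatGPT", "what is geo", ()),
    CitationCheck(date(2025, 3, 3), "ChatGPT", "faq schema for geo",
                  ("https://example.com/geo-hub/schema-basics/",)),
    CitationCheck(date(2025, 3, 10), "Perplexity", "geo kpis", ()),
]
hub = ["https://example.com/geo-hub/", "https://example.com/geo-hub/schema-basics/",
       "https://example.com/geo-hub/measurement/"]
report = citation_report(log, hub)
print(report["gaps"], report["uncited_queries"])
```

Keeping the raw checks instead of only the totals makes the log auditable. You can recompute any view, such as by week or by engine, after the fact.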

For context on shaping content that lands in AI summaries, see our best practices to optimize your brand for AI summaries.

Freshness cadence: a lightweight operating rhythm

A hub is a program, not a post. Establish a rhythm that keeps signals fresh without creating thrash. My go‑to cadence: weekly micro‑updates (facts, FAQs), monthly spoke expansions (new comparisons, how‑tos), and quarterly hub audits (schema validation, entity/sameAs review, internal link tuning). Maintain a change log and annotate it in your analytics so performance shifts tie back to edits. When a spoke gains traction, consider adding a companion format (FAQ or How‑To) to reinforce intent coverage.
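The cadence and change log can live in a spreadsheet or a few lines of code. Here is a minimal sketch. It approximates the weekly, monthly, and quarterly intervals as 7, 30, and 91 days, and uses a hypothetical `ChangeLogEntry` record. `due_tasks` lists which routines are overdue. `edits_before_shift` pulls a page's edits from the two weeks before a performance change so you can annotate it.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical encoding of the cadence (weekly, roughly monthly, roughly quarterly).
CADENCE = {
    "micro_update": timedelta(weeks=1),
    "spoke_expansion": timedelta(days=30),
    "hub_audit": timedelta(days=91),
}


@dataclass(frozen=True)
class ChangeLogEntry:
    day: date
    url: str
    change: str


def due_tasks(last_done: dict[str, date], today: date) -> list[str]:
    """Tasks whose interval has elapsed since they were last done (never done counts as due)."""
    return sorted(task for task, interval in CADENCE.items()
                  if today - last_done.get(task, date.min) >= interval)


def edits_before_shift(log: list[ChangeLogEntry], url: str, shift_day: date,
                       window_days: int = 14) -> list[ChangeLogEntry]:
    """Edits to `url` in the window leading up to a performance shift, for annotation."""
    start = shift_day - timedelta(days=window_days)
    return [e for e in log if e.url == url and start <= e.day <= shift_day]


last = {"micro_update": date(2025, 3, 1), "spoke_expansion": date(2025, 2, 1), "hub_audit": date(2025, 1, 15)}
changes = [
    ChangeLogEntry(date(2025, 3, 1), "/geo-hub/faq/", "Added three FAQs"),
    ChangeLogEntry(date(2025, 1, 5), "/geo-hub/faq/", "Corrected a dated stat"),
    ChangeLogEntry(date(2025, 3, 2), "/geo-hub/", "Rewrote the opening summary"),
]
print(due_tasks(last, date(2025, 3, 5)))  # ['spoke_expansion']
print(edits_before_shift(changes, "/geo-hub/faq/", date(2025, 3, 10)))
```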

Putting it all together

If you remember nothing else, remember this: AI answer engines reward hubs that are easy to parse, easy to trust, and easy to quote. Map the cluster before you draft, write in modular answer blocks, ship clean schema with real identities, keep technical access wide open, and measure citations like a product metric. What’s the single spoke you can ship this month that would unlock the rest of the cluster? Build that first, then scale the hub around it.