How to Optimize Your Brand for AI Summaries

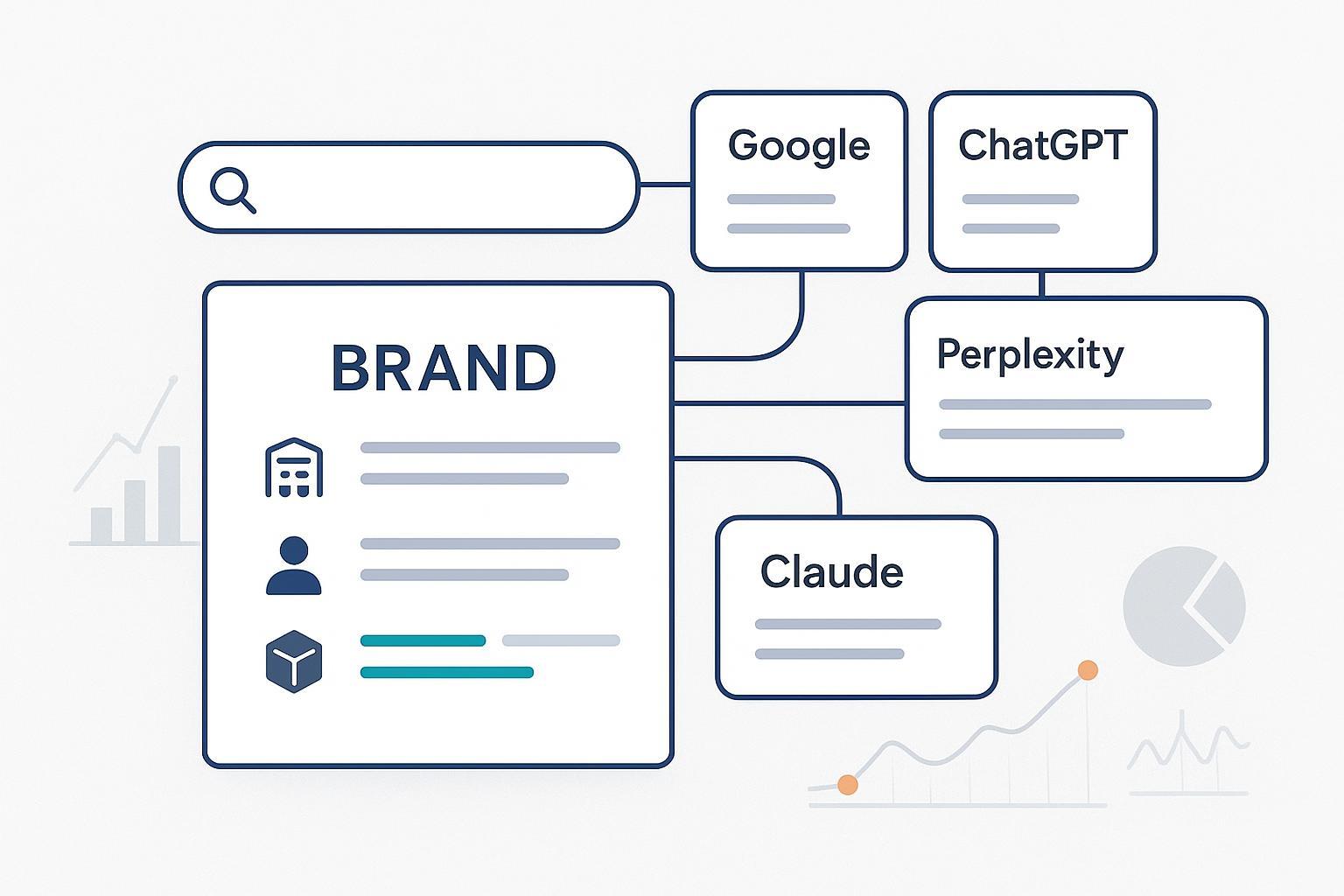

Discover proven 2025 best practices for optimizing brand visibility and accuracy in Google AI Overviews, ChatGPT, Perplexity, and Claude. Actionable steps for marketers and SEO pros.

If a customer asks an AI assistant about your category tomorrow, will your brand be cited—accurately, and in the first screen? Here’s the field-tested playbook I use to make brands “answerable” across Google AI Overviews, ChatGPT/SearchGPT, Perplexity, and Claude, without chasing fads or fragile hacks.

How AI summaries decide whom to cite

AI summaries are built from crawlable, machine-readable pages that look authoritative and easy to quote. At a minimum, you need:

- Crawl and snippet eligibility. If a page is set to noindex or nosnippet, it won’t be excerpted in Google’s AI Overviews. Google’s official guidance confirms there’s no special AI Overviews (AIO) opt-out; standard indexing and snippet rules apply. See Google’s guidance in “AI features and your website” and its product help for AI Overviews.

- Respect for publisher controls varies by platform. OpenAI’s GPTBot honors robots.txt for public crawling, per OpenAI’s GPTBot documentation. Reports in 2025 describe cases where Perplexity’s crawlers may bypass robots.txt; Cloudflare advises using network-level controls where strict blocking is required, as detailed in its analysis of non-compliant crawlers (Aug 4, 2025). Anthropic describes a transparency-first posture; see the Anthropic Transparency Hub.

- Clear entities and E-E-A-T. Summaries cite pages that present a credible voice: real author bios, transparent ownership, and original or well-cited facts. Google’s raters use E‑E‑A‑T as a quality lens; it’s not a single ranking factor, but it shapes how systems approximate trust.

If you want visibility in AI results, optimize for machine readability and credibility at the same time. Think of it like stocking a well-labeled pantry: the clearer your labels and ingredients, the easier it is for AI to assemble a reliable “meal.”
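The crawl and snippet checks above can be sketched in a few lines of Python. This is a minimal illustration, not an audit tool: the robots.txt content, the URLs, and the helper names `crawl_access` and `snippet_eligible` are hypothetical, and the snippet check only looks for the two directives named above (noindex and nosnippet).

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; replace with your site's real file.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /guides/
Disallow: /

User-agent: *
Disallow: /private/
"""


def crawl_access(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Return, per user agent, whether robots.txt permits fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


def snippet_eligible(meta_robots: str) -> bool:
    """Return False if a robots meta value contains noindex or nosnippet."""
    directives = {d.strip().lower() for d in meta_robots.split(",")}
    return not ({"noindex", "nosnippet"} & directives)


access = crawl_access(
    ROBOTS_TXT,
    ["GPTBot", "PerplexityBot", "Googlebot"],
    "https://www.example.com/guides/ai-summaries",
)
print(access)
print(snippet_eligible("index, follow"))
```

Keep in mind that this only reports what robots.txt requests. As noted above, it says nothing about whether a given crawler actually complies.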

The on-page blueprint for extractable answers

Design each target page so an AI can lift a concise, correct snippet without guessing. Use this repeatable pattern:

- Lead with a 50–70 word direct answer paragraph. It should stand alone and echo the query’s wording where that reads naturally.

- Follow with scannable sections mapped to question-style H2/H3s (What is it? How does it work? Pros/cons? Steps?). Keep paragraphs tight and self-contained.

- Add a compact FAQ with adjacent intents (2–4 entries). Keep answers under 75 words each.

- Use tables for quick comparisons and clear variable labels for any formulas or definitions. Always pair images with descriptive alt text.

- Show real authorship: a byline, short bio, credentials, and links to authoritative profiles.

- Keep the page fast, mobile-friendly, and accessible. Important content should be server-rendered HTML, not hidden in heavy JS.
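The length targets in this blueprint are easy to lint before publishing. The sketch below assumes you can pull the lead paragraph and FAQ answers out of your CMS as plain strings; `check_page` is a hypothetical helper, and counting whitespace-separated tokens is only a rough stand-in for a real word count.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated tokens as words (a rough approximation)."""
    return len(text.split())


def check_page(lead: str, faqs: dict[str, str]) -> list[str]:
    """Return a list of blueprint violations; an empty list means the page passes."""
    issues = []

    lead_words = word_count(lead)
    if not 50 <= lead_words <= 70:
        issues.append(f"lead answer has {lead_words} words (target 50-70)")

    if not 2 <= len(faqs) <= 4:
        issues.append(f"FAQ has {len(faqs)} entries (target 2-4)")

    for question, answer in faqs.items():
        answer_words = word_count(answer)
        if answer_words >= 75:
            issues.append(f"FAQ answer to {question!r} has {answer_words} words (target under 75)")

    return issues


# Example with placeholder copy: a 60-word lead and two short FAQ answers.
sample_lead = " ".join(["word"] * 60)
sample_faqs = {"What is it?": "A short answer.", "How does it work?": "Another short answer."}
print(check_page(sample_lead, sample_faqs))
```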

For ongoing perspectives on AI visibility and Generative Engine Optimization (GEO), along with related posts, see the Geneo blog.

Entities and schema that reduce confusion

Ambiguous entities cause misattribution in summaries. Formalize who you are (Organization), who writes your content (Person), and what you sell (Product) using JSON-LD that mirrors visible content. Where applicable, connect to canonical profiles (sameAs: Wikipedia, Wikidata, LinkedIn, Crunchbase) and use global identifiers for products.

Here is a compact Organization + Person example you can adapt:

```json
{
  "@context": "https://schema.org",
  "@type": "Organization",
  "name": "Example Brand",
  "url": "https://www.example.com",
  "logo": "https://www.example.com/logo.png",
  "sameAs": [
    "https://www.wikidata.org/wiki/Q123456",
    "https://www.linkedin.com/company/example-brand/"
  ],
  "founder": {
    "@type": "Person",
    "name": "Alex Rivera",
    "jobTitle": "CEO",
    "sameAs": [
      "https://www.linkedin.com/in/alex-rivera/"
    ]
  }
}
```
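For the Product side, where global identifiers matter, a similar block can be generated rather than hand-written. The sketch below is one possible approach under stated assumptions: the product name, brand, and URL are placeholders, `product_jsonld` is a hypothetical helper, and the GTIN-13 shown is a commonly used sample barcode rather than anything from this article. It validates the GTIN-13 check digit before emitting markup, so a mistyped identifier fails loudly instead of shipping.

```python
import json


def gtin13_is_valid(gtin: str) -> bool:
    """Validate a GTIN-13 using the standard alternating 1/3 weight check digit."""
    if len(gtin) != 13 or not (gtin.isascii() and gtin.isdigit()):
        return False
    total = sum(int(d) * (3 if i % 2 else 1) for i, d in enumerate(gtin[:12]))
    return (10 - total % 10) % 10 == int(gtin[12])


def product_jsonld(name: str, brand: str, gtin13: str, url: str) -> str:
    """Build a Product JSON-LD script tag; raise if the GTIN-13 is malformed."""
    if not gtin13_is_valid(gtin13):
        raise ValueError(f"invalid GTIN-13: {gtin13!r}")
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "brand": {"@type": "Brand", "name": brand},
        "gtin13": gtin13,
        "url": url,
    }
    # Escape "</" so the JSON cannot close the surrounding script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values for illustration only.
markup = product_jsonld(
    "Example Widget", "Example Brand", "4006381333931", "https://www.example.com/widget"
)
print(markup)
```

As with the Organization example, the values in the markup should mirror what is visible on the page.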

For a deeper dive into structured content patterns used in GEO and AI search, see Geneo’s article on tooling and content patterns: RankScale AI review and takeaways.

Platform-specific quick wins (2025)

Two guiding principles apply everywhere: make your answer easy to quote, and make your identity easy to resolve. Then tailor to each surface:

| Platform | Quick wins | Access notes |
|---|---|---|
| Google AI Overviews | Start pages with a definitive 50–70 word answer; align H2/H3s to common queries; add FAQPage markup where valid; reinforce author bios and external citations. | Ensure indexable pages and allow snippets. No special AIO opt-out; standard controls apply, per Google’s AI features and your website. |
| ChatGPT/SearchGPT | Create self-contained explainers with TL;DR and FAQs; add clear anchors and structured data; link out to canonical definitions. | Allow GPTBot for the sections you want cited, per OpenAI GPTBot. |
| Perplexity | Provide short, quotable definitions and current sources; keep comparison tables fresh; maintain author pages. | Official PerplexityBot exists, but see Cloudflare’s crawler evasion analysis for blocking/monitoring considerations. |
| Claude | Use question-led headings, numbered steps, and compact paragraphs; cite authoritative, recent sources. | Treat robots.txt as respected; see Anthropic Transparency for posture. |
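Where the table mentions FAQPage markup, the structure is regular enough to generate from the same question and answer pairs that appear on the page. This is a minimal sketch: `faq_jsonld` and the sample questions are hypothetical, and whether the markup is valid for a given page still depends on the platform's own eligibility rules.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


# Placeholder questions; use the exact text shown in the page's visible FAQ.
faq = faq_jsonld(
    [
        ("What is Example Brand?", "Example Brand is a placeholder company used for illustration."),
        ("Where is Example Brand based?", "This answer is placeholder text."),
    ]
)
print(json.dumps(faq, indent=2))
```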

Monitor what matters—and adjust

You can’t improve what you don’t measure. Define a tight set of AI visibility KPIs and revisit them monthly:

- AI citation share: The percent of strategic prompts where your domain appears as a cited source across Google, ChatGPT/SearchGPT, Perplexity, and Claude.

- Sentiment and accuracy: Track polarity (positive/neutral/negative) and whether summaries state your facts correctly (pricing, specs, leadership). Log issues and their fixes.

- Coverage breadth: Count unique prompts, entities, and topics where you’re visible; note platform and locale splits.

- Velocity: Watch the rate of change following content updates or PR wins.

- Downstream lift: Where possible, correlate visibility gains with branded search or direct traffic increases.
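To make the first two KPIs concrete, here is one way they could be computed from a log of prompt checks. The `Observation` record, its fields, and the sample rows are assumptions made for illustration; they are not a Geneo data format, and the numbers are invented.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Observation:
    prompt: str
    platform: str
    cited: bool                      # did the answer cite your domain?
    accurate: Optional[bool] = None  # None when accuracy was not reviewed


def citation_share(observations: list[Observation]) -> dict[str, float]:
    """Percent of logged prompts per platform where the domain was cited."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for obs in observations:
        totals[obs.platform] += 1
        hits[obs.platform] += obs.cited
    return {platform: 100 * hits[platform] / totals[platform] for platform in totals}


def accuracy_rate(observations: list[Observation]) -> float:
    """Percent of reviewed, cited answers that stated the brand's facts correctly."""
    reviewed = [o for o in observations if o.cited and o.accurate is not None]
    if not reviewed:
        return 0.0
    return 100 * sum(o.accurate for o in reviewed) / len(reviewed)


# Invented sample log for illustration.
observations = [
    Observation("best crm for startups", "Perplexity", cited=True, accurate=True),
    Observation("best crm for startups", "ChatGPT", cited=False),
    Observation("example brand pricing", "Perplexity", cited=True, accurate=False),
    Observation("example brand pricing", "ChatGPT", cited=True, accurate=True),
]
share = citation_share(observations)
print(share)
print(round(accuracy_rate(observations), 1))
```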

Disclosure: Geneo is our product.

A practical workflow many teams use

- Create a monthly “AI Prompts Basket” of your 30–50 most important tasks and brand/category questions.

- Run the basket across core engines and capture citations, sentiment, and accuracy.

- Prioritize fixes by impact and effort, then update content (answer boxes, FAQs, schema, citations) and log the date.

- Re-query the same basket two weeks later and compare.
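The re-query step boils down to diffing two snapshots of the same basket. The sketch below assumes each run is stored as a mapping from (prompt, platform) to whether your domain was cited; `compare_runs` and the sample data are hypothetical.

```python
Run = dict[tuple[str, str], bool]  # (prompt, platform) -> cited?


def compare_runs(before: Run, after: Run) -> dict[str, list[tuple[str, str]]]:
    """List prompt/platform pairs where citation status changed between two runs."""
    shared = before.keys() & after.keys()  # only compare pairs present in both runs
    return {
        "gained": sorted(k for k in shared if not before[k] and after[k]),
        "lost": sorted(k for k in shared if before[k] and not after[k]),
    }


# Invented snapshots for illustration.
baseline: Run = {
    ("best crm for startups", "ChatGPT"): False,
    ("best crm for startups", "Perplexity"): True,
    ("example brand pricing", "Claude"): True,
}
recheck: Run = {
    ("best crm for startups", "ChatGPT"): True,
    ("best crm for startups", "Perplexity"): True,
    ("example brand pricing", "Claude"): False,
}
changes = compare_runs(baseline, recheck)
print(changes)
```

Comparing only the pairs present in both runs keeps the diff honest when the basket itself changes between cycles.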

With Geneo, this process is centralized: it supports multi-platform monitoring of AI citations, sentiment tracking, and historical prompt archives so you can see before/after movement and share changes with stakeholders. For a broader overview of how Geneo frames AI visibility and GEO, start at the Geneo homepage.

Troubleshooting: fix what AI gets wrong

When an AI answer is inaccurate or unfavorable, move fast but stay factual:

- Hallucinations or factual errors: add a clearly labeled correction on your page with citations to primary sources, reinforce entity clarity (Organization/Person schema and sameAs links), tighten the 50–70 word answer at the top, use platform feedback channels, and schedule a re-check in 2–6 weeks.

- Negative or unbalanced sentiment: address the critique head-on with verifiable statements (changelogs, certifications, customer data), link to neutral third-party validations, and add a short FAQ entry that acknowledges the issue and clarifies current status.

- Omission from category answers: broaden topical coverage, interlink a focused cluster, add neutral comparison tables that include your brand, and pursue digital PR to earn independent mentions that seed future AI citations.

For a wider view of ecosystem shifts and KPI frameworks that inform this triage, see Geneo’s late-2025 analysis: Google algorithm update (October 2025): what changed.

International and multilingual notes

Localize like you mean it. Use hreflang for each locale, reflect the local legal entity name and address in Organization markup, convert units and currencies in the visible copy, and cite country-specific sources where appropriate. Maintain a separate, locale-aware FAQ when intents differ by market. Small detail, big trust gains.
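Hreflang annotations are repetitive enough to generate from a single locale map. The sketch below is illustrative: the locale codes and URLs are placeholders, `hreflang_links` is a hypothetical helper, and the `x-default` fallback line is an addition beyond what this section describes.

```python
from html import escape


def hreflang_links(locale_urls: dict[str, str], default_locale: str) -> list[str]:
    """Build <link rel="alternate"> tags for each locale plus an x-default fallback."""
    links = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in sorted(locale_urls.items())
    ]
    fallback = escape(locale_urls[default_locale])
    links.append(f'<link rel="alternate" hreflang="x-default" href="{fallback}" />')
    return links


# Placeholder locales and URLs for illustration.
tags = hreflang_links(
    {
        "en-us": "https://www.example.com/us/guide",
        "de-de": "https://www.example.com/de/leitfaden",
    },
    default_locale="en-us",
)
print("\n".join(tags))
```

Every locale version of the page should carry the same full set of tags, including a reference to itself.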

What didn’t work in our tests

- Overloading pages with FAQs that don’t match real questions. Keep it surgical.

- Hiding key copy in carousels or accordions that aren’t rendered on load.

- Treating llms.txt as a control surface. It’s a community signal, not a reliable enforcement mechanism across platforms.
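The second failure above, key copy that is not in the initial HTML, can be spot-checked without a browser. This sketch assumes you already have the raw server response as a string; `missing_from_initial_html` is a hypothetical helper, and it only tests whether a phrase appears in the markup's text outside script, style, template, and noscript elements. It does not evaluate CSS visibility.

```python
from html.parser import HTMLParser

SKIPPED_TAGS = {"script", "style", "template", "noscript"}


class _TextExtractor(HTMLParser):
    """Collect text nodes that sit outside the skipped elements."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_initial_html(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in the server-delivered text."""
    extractor = _TextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    text = " ".join(" ".join(extractor.chunks).split()).lower()
    return [p for p in key_phrases if " ".join(p.split()).lower() not in text]


# Placeholder markup: one phrase is in the HTML, the other only inside a script payload.
raw = """
<html><body>
  <h1>What is Example Widget?</h1>
  <p>Example Widget is a <b>placeholder product</b> used for illustration.</p>
  <script>window.__DATA__ = {"pricing": "Plans start at ten dollars"};</script>
</body></html>
"""
missing = missing_from_initial_html(raw, ["placeholder product", "Plans start at ten dollars"])
print(missing)
```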

Next steps

- Pick one high-value page and implement the on-page blueprint this week. Measure for two cycles, then scale to the rest of the cluster. If you want a single place to track cross-engine citations and sentiment while you iterate, consider trying Geneo’s monitoring workflow on your “Prompts Basket.”

If you’re building your GEO and AI visibility practice, the Geneo blog regularly covers patterns, tools, and experiments you can adapt. Ready to see your citations move? Let’s make your next answer easy to quote.