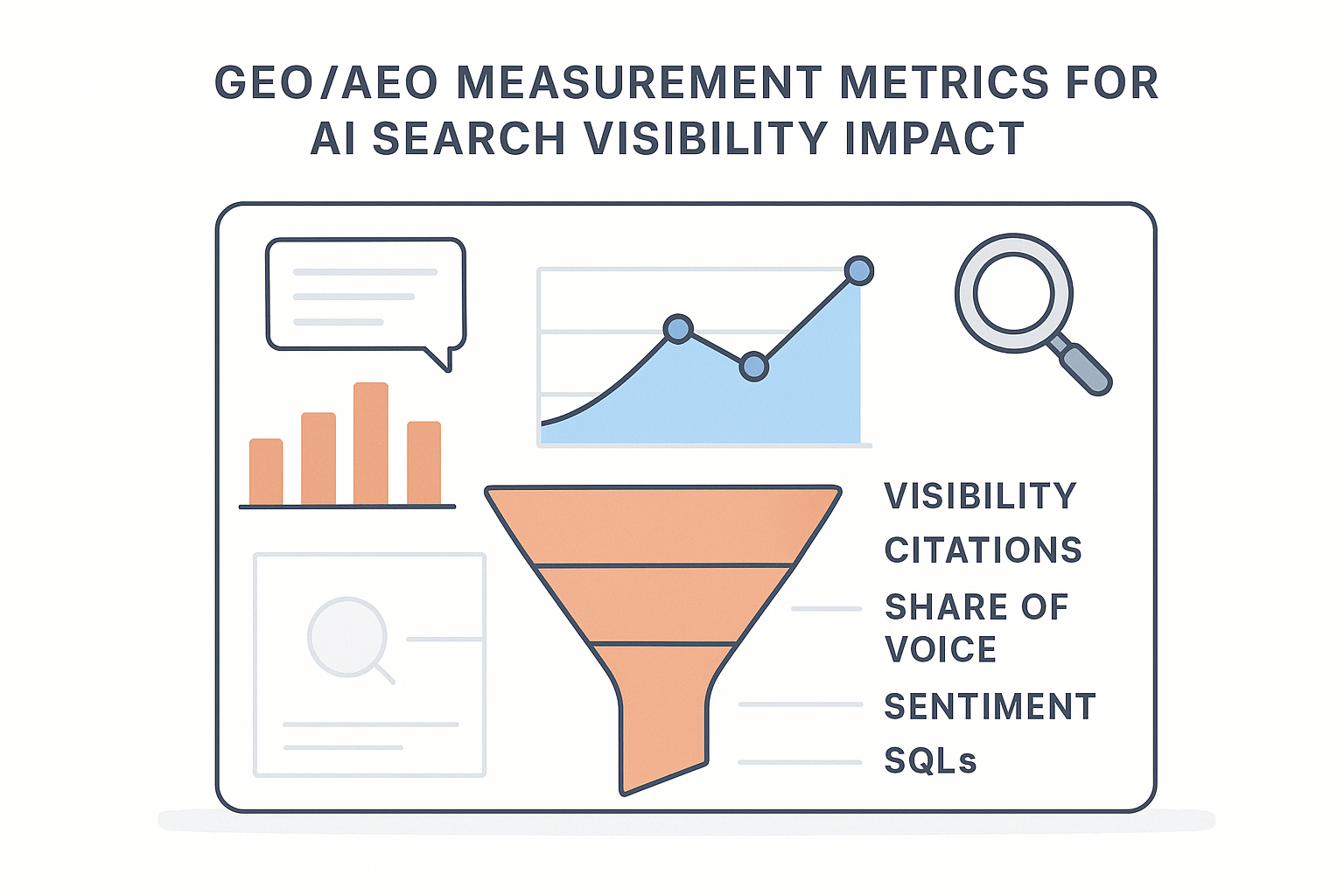

GEO/AEO measurement metrics for AI search visibility impact

Define GEO/AEO KPIs, formulas, dashboards, and SQL methods to measure AI visibility and connect it to pipeline—without vendor bias.

AI search visibility is measurable—but only if you’re honest about what you can and can’t observe.

Traditional SEO measurement assumes a click happens. Generative answers often don’t. Google AI Overviews can satisfy intent without a visit; ChatGPT-style tools can recommend vendors without linking at all. Meanwhile, the outputs are volatile: the same prompt can produce different sources, different language, and different “winners” depending on time, location, and model changes.

So the goal isn’t to recreate the old keyword-rankings dashboard with new labels. The goal is to build a defensible system that:

tracks where and how often you appear in AI answers

separates mentions from citations (and treats them differently)

ties AI visibility to business impact using controlled comparisons, not wishful thinking

This post lays out best practices for defining KPIs, writing formulas, building dashboards, and running SQL-based correlation checks—without vendor bias.

Definitions you need before you measure anything

GEO vs AEO (in measurement terms)

GEO (Generative Engine Optimization) measurement focuses on how often and how well your brand/content appears in generative answers across engines (Google AI Overviews, Perplexity, ChatGPT-style experiences, etc.).

AEO (Answer Engine Optimization) measurement focuses on whether your content is selected as the answer (and how it’s framed) for question-style queries.

In practice, measurement overlaps. The difference is in your query set and success criteria.

Mention vs citation (and why you must separate them)

A mention is when your brand is named in the answer.

A citation is when the answer includes a source link to a page on your site (or directly attributes your content).

Mentions can influence consideration. Citations can drive traffic, and they’re easier to audit. Treating them as the same metric creates a reporting mess.

Visibility ≠ clicks

Your first KPI layer is exposure. Your second layer is outcomes. If you skip the exposure layer, you’ll spend months arguing about attribution.

Best practice 1: Use a three-layer KPI stack (so you don’t confuse activity with impact)

A defensible measurement system separates leading indicators from lagging indicators.

Why this matters

If you try to prove revenue impact with only one metric (e.g., “AI visibility score”), you’ll get destroyed in a stakeholder review—because the model is opaque and the buyer journey is multi-touch.

How to implement

Use this stack:

Visibility KPIs (Leading): Are we appearing?

Engagement KPIs (Bridge): Are we getting attention or visits when we appear?

Business KPIs (Lagging): Are we generating qualified leads and pipeline?

Failure mode

You over-celebrate a visibility lift while pipeline is flat—or you call the program a failure because traffic didn’t move, even though your brand is now recommended in AI answers.

Example

Your QBR shows:

Visibility: +12 points (good)

Clicks: flat (expected in zero-click environments)

SQLs (sales-qualified leads): up slightly with a 2–4 week lag (worth investigating)

That’s a coherent story. Without the stack, it’s noise.

Best practice 2: Build a prompt universe with bias controls (or your metrics won’t be credible)

Why this matters

Prompt design changes the result. If your tracked prompts include your brand name, your “visibility rate” will inflate automatically.

Moz demonstrated this bias effect in its experiment on brand bias in LLM prompts: branded prompts produce dramatically more brand mentions than non-brand prompts, and even “soft-brand” prompts can naturally elicit brand lists.

How to implement

Create three prompt buckets and report them separately:

Brand prompts (explicit): “Is Geneo a good tool for AI Overviews tracking?”

Soft-brand prompts (category-biased): “Best AI visibility tracking tools for agencies”

Non-brand prompts (problem-first): “How do I measure AI Overviews impact on organic pipeline?”

Then add structure:

Prompt clusters: group prompts by intent (definitions, evaluation, implementation, troubleshooting) and by topic (AIO, citations, brand reputation, etc.).

Sampling rules: keep a stable “core set” (e.g., 30–50 prompts) and allow a rotating set for exploration.

Controls: use consistent geo/device settings and a consistent run cadence.
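One way to keep the buckets, clusters, and core/rotating split explicit is to store each prompt as a small record. The sketch below is illustrative only: the `Prompt` class, its field names, and the `validate` helper are assumptions made for this example, not part of any tool.

```python
from collections import Counter
from dataclasses import dataclass

# Assumed bucket labels; "non_brand" matches the value used in the SQL later in this post.
PROMPT_TYPES = ("brand", "soft_brand", "non_brand")

@dataclass(frozen=True)
class Prompt:
    prompt_id: str
    text: str
    prompt_type: str  # one of PROMPT_TYPES
    cluster: str      # intent or topic group, e.g. "evaluation"
    is_core: bool     # True = stable core set, False = rotating exploration set

def validate(prompts):
    """Raise on an unknown bucket; return how many prompts fall in each bucket."""
    for p in prompts:
        if p.prompt_type not in PROMPT_TYPES:
            raise ValueError(f"unknown prompt_type: {p.prompt_type!r}")
    return Counter(p.prompt_type for p in prompts)

prompts = [
    Prompt("p1", "Is Geneo a good tool for AI Overviews tracking?", "brand", "evaluation", True),
    Prompt("p2", "Best AI visibility tracking tools for agencies", "soft_brand", "evaluation", True),
    Prompt("p3", "How do I measure AI Overviews impact on organic pipeline?", "non_brand", "implementation", True),
]
bucket_counts = validate(prompts)
```

Storing the bucket on the prompt itself means the "how many prompts had our brand name in them?" question can be answered from the data rather than from memory.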

Failure mode

You claim “40% visibility” and a smart stakeholder asks, “How many prompts had our brand name in them?” If you can’t answer immediately, your dashboard loses credibility.

Example

Your dashboard shows:

Brand prompts: 92% mention rate

Soft-brand prompts: 28% mention rate

Non-brand prompts: 9% mention rate

That distribution is normal, and actionable.

This is also where you’ll want to track citations vs mentions separately, because a program that increases mentions but not citations can change perception without changing traffic.
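The per-bucket split in the example can be computed from a simple run log. This is a minimal sketch that assumes each run is reduced to a `(prompt_type, brand_mentioned)` pair; the function name and sample data are made up for illustration.

```python
from collections import defaultdict

def mention_rate_by_type(runs):
    """runs: iterable of (prompt_type, brand_mentioned) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for prompt_type, mentioned in runs:
        totals[prompt_type] += 1
        hits[prompt_type] += int(mentioned)
    return {t: hits[t] / totals[t] for t in totals}

# Made-up sample log: 2 brand, 2 soft-brand, 4 non-brand runs.
runs = [
    ("brand", True), ("brand", True),
    ("soft_brand", True), ("soft_brand", False),
    ("non_brand", False), ("non_brand", False), ("non_brand", True), ("non_brand", False),
]
rates = mention_rate_by_type(runs)
```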

Best practice 3: GEO/AEO measurement metrics: define a metric dictionary with formulas

Why this matters

Most teams “track AI visibility” without agreeing on definitions. That’s how you end up with three dashboards and four numbers for the same KPI.

How to implement

Write the dictionary once. Store it in your analytics repo/wiki. Every metric below is definable from a prompt-run log.

The minimum viable AI search visibility KPIs

1) Answer inclusion rate (AIR)

What it tells you: “Do we show up at all?”

Formula:

AIR = (number_of_prompts_with_any_brand_mention) / (total_prompts)

2) Citation rate (CR)

What it tells you: “When we’re mentioned, are we actually sourced?”

Formula:

CR = (number_of_answers_with_a_citation_to_our_domain) / (number_of_answers_with_any_brand_mention)

For Google AI Overviews specifically, many teams compute presence/citation rates by capturing SERP feature data outside of Search Console because GSC doesn’t isolate AIO impressions. Search Engine Journal’s walkthrough of manual vs API tracking is a solid reference.
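As a rough sketch, AIR and CR can be computed from the same list of answers. The dictionary keys mirror the `brand_mentioned` and `brand_cited` columns used in the schema later in this post; the helper names are hypothetical.

```python
def safe_ratio(numerator, denominator):
    """Return None instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else None

def air_and_cr(answers):
    """answers: list of dicts with boolean 'brand_mentioned' and 'brand_cited'."""
    total = len(answers)
    mentioned = sum(a["brand_mentioned"] for a in answers)
    cited = sum(a["brand_cited"] for a in answers)
    air = safe_ratio(mentioned, total)   # mentioned answers / all prompts run
    cr = safe_ratio(cited, mentioned)    # cited answers / mentioned answers
    return air, cr

answers = [
    {"brand_mentioned": True, "brand_cited": True},
    {"brand_mentioned": True, "brand_cited": False},
    {"brand_mentioned": False, "brand_cited": False},
    {"brand_mentioned": False, "brand_cited": False},
]
air, cr = air_and_cr(answers)
```

One thing to decide in your metric dictionary: an answer can cite your domain without naming your brand, so with the formula as written CR is not strictly capped at 1. If you want a bounded rate, count only answers that are both mentioned and cited in the numerator.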

3) Share of voice in answers (SOV-A)

What it tells you: “How often are we the brand that gets named compared to competitors?”

Formula (per prompt cluster):

SOV-A = (our_mentions) / (all_brand_mentions_in_cluster)

4) Citation share (SOV-C)

What it tells you: “How often does our domain earn the source slot vs others?”

Formula:

SOV-C = (citations_to_our_domain) / (all_citations_in_cluster)
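Both share metrics are the same calculation applied to different counts. A minimal sketch, assuming you have already tallied brand mentions and cited domains for one prompt cluster (all names and numbers below are invented):

```python
from collections import Counter

def share(counts, key):
    """Fraction of all counted items that belong to `key`; None if nothing was counted."""
    total = sum(counts.values())
    return counts[key] / total if total else None

# Invented tallies for a single prompt cluster.
brand_mentions = Counter({"our_brand": 6, "competitor_a": 9, "competitor_b": 5})
cited_domains = Counter({"ourdomain.example": 3, "competitor-a.example": 7, "thirdparty.example": 10})

sov_a = share(brand_mentions, "our_brand")         # 6 of 20 mentions
sov_c = share(cited_domains, "ourdomain.example")  # 3 of 20 citations
```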

5) Citation position score (CPS)

What it tells you: “Are we a primary source or a footnote?”

Implementation note: AI engines list sources in an order. Capture that rank when possible.

Formula (one simple version):

CPS = AVG(1 / citation_rank)

A first-position citation contributes 1.0; a second-position citation contributes 0.5; a third contributes about 0.33.
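A small sketch of the reciprocal-rank version, assuming uncited answers are stored with a rank of `None` and skipped (which matches how `AVG` ignores NULLs in the SQL later in this post):

```python
def citation_position_score(ranks):
    """ranks: citation ranks (1 = first source); None means not cited and is skipped."""
    scored = [1.0 / r for r in ranks if r is not None]
    return sum(scored) / len(scored) if scored else None

# Ranks 1, 2, and 4 contribute 1.0, 0.5, and 0.25; the uncited answer is ignored.
cps = citation_position_score([1, 2, None, 4])
```

Because uncited answers are skipped, this score describes position when you are cited. Read it alongside CR, not instead of it.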

6) Representation accuracy rate (RAR)

What it tells you: “When we appear, are we described correctly?”

Formula:

RAR = (number_of_mentions_scored_accurate) / (total_mentions_scored)

Implementation: create a small rubric (accurate / partially accurate / inaccurate) and sample-review.
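A sketch of how the rubric could feed the formula. The label strings and the `partial_credit` parameter are assumptions: by default only "accurate" counts toward the numerator, as in the formula above, and partial credit is an optional variation you would need to document.

```python
def representation_accuracy_rate(labels, partial_credit=0.0):
    """labels: 'accurate', 'partial', 'inaccurate', or None for unreviewed mentions."""
    weights = {"accurate": 1.0, "partial": partial_credit, "inaccurate": 0.0}
    scored = [weights[label] for label in labels if label is not None]
    return sum(scored) / len(scored) if scored else None

sample = ["accurate", "accurate", "partial", "inaccurate", None]
rar_strict = representation_accuracy_rate(sample)                       # 2 of 4 reviewed
rar_lenient = representation_accuracy_rate(sample, partial_credit=0.5)  # 2.5 of 4 reviewed
```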

7) Sentiment balance (SB)

What it tells you: “Are mentions positive/neutral/negative?”

One defensible score:

SB = (positive_mentions - negative_mentions) / total_mentions
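For illustration, here is the same score in code, assuming every reviewed mention carries exactly one of three labels (the label strings are an assumption):

```python
from collections import Counter

def sentiment_balance(labels):
    """labels: iterable of 'positive', 'neutral', or 'negative'."""
    counts = Counter(labels)
    total = sum(counts.values())
    return (counts["positive"] - counts["negative"]) / total if total else None

sb = sentiment_balance(["positive", "positive", "neutral", "negative"])  # (2 - 1) / 4
```

The score ranges from -1 (all negative) to +1 (all positive), and neutral mentions pull it toward zero.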

Failure mode

You adopt a single “visibility score” with no transparency. The first time results dip, everyone loses trust because they can’t see what changed.

Example

A weekly scorecard (per cluster):

AIR (non-brand prompts)

CR

SOV-A vs top 3 competitors

RAR (sampled)

That’s enough to run the program.

Best practice 4: Instrument Google AI Overviews separately from “LLM answers”

Google AI Overviews behave like a SERP feature. LLM answer engines behave like conversations. Don’t mash them into one feed without keeping the dimension.

Pro Tip: Create a single field called surface with values like google_aio, chatgpt, perplexity, and other_llm, so every metric can be segmented without rebuilding your model.

Why this matters

AIO can change organic CTR patterns even when your rankings don’t move. If you only watch rankings, you’ll miss the real shift.

How to implement

Track AIO with a dedicated table that captures:

keyword

date

location

device

AIO present (Y/N)

AIO expanded (Y/N)

cited domains + URLs

(optional) screenshot URL for audit

You can do this manually for a small set, or automate with a SERP API. The implementation pattern is supported by SERP API docs such as SerpApi’s AI Overview Results API documentation.
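Whichever collection method you use, the rows can share one shape. The record below is a hypothetical sketch of the fields listed above; the class and field names are assumptions and do not reflect any SERP API's response format.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional
from urllib.parse import urlparse

@dataclass
class AioObservation:
    keyword: str
    observed_on: date
    location: str
    device: str
    aio_present: bool
    aio_expanded: bool
    cited_urls: List[str] = field(default_factory=list)
    screenshot_url: Optional[str] = None  # optional audit evidence

    def cited_domains(self):
        """Unique hostnames of the cited URLs, sorted for stable output."""
        return sorted({urlparse(u).netloc.lower() for u in self.cited_urls})

def presence_rate(observations):
    """Share of observations where an AI Overview appeared."""
    if not observations:
        return None
    return sum(o.aio_present for o in observations) / len(observations)

o1 = AioObservation("ai visibility tracking", date(2025, 1, 6), "US", "desktop", True, False,
                    ["https://example.com/guide", "https://competitor.example/post"])
o2 = AioObservation("geo metrics", date(2025, 1, 6), "US", "desktop", False, False)
rate = presence_rate([o1, o2])
```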

Failure mode

You attribute an organic CTR drop to “content quality” when the real reason is that AIO started triggering on your highest-impression queries.

Example

You segment your weekly GSC query export into two groups:

queries that trigger AIO (from your AIO tracking table)

queries that do not

Now CTR movement has context.
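A sketch of that segmentation, assuming a GSC export reduced to `query`, `clicks`, and `impressions`, plus a set of queries your AIO tracking has seen with an AI Overview (function name and numbers are illustrative):

```python
def ctr_by_segment(gsc_rows, aio_queries):
    """Aggregate clicks and impressions per segment, then compute CTR for each."""
    totals = {"aio": [0, 0], "no_aio": [0, 0]}  # segment -> [clicks, impressions]
    for row in gsc_rows:
        segment = "aio" if row["query"] in aio_queries else "no_aio"
        totals[segment][0] += row["clicks"]
        totals[segment][1] += row["impressions"]
    return {seg: (c / i if i else None) for seg, (c, i) in totals.items()}

gsc_rows = [
    {"query": "what is geo", "clicks": 10, "impressions": 1000},
    {"query": "geo pricing", "clicks": 30, "impressions": 1000},
    {"query": "aeo vs seo", "clicks": 5, "impressions": 500},
]
aio_queries = {"what is geo", "aeo vs seo"}
ctr = ctr_by_segment(gsc_rows, aio_queries)
```

CTR is computed from summed clicks and impressions rather than by averaging per-query CTRs, so high-impression queries carry their real weight.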

Best practice 5: Build two dashboards (executive + operator) so your team can act

Why this matters

Executives want trends and risk. Operators need diagnostics.

If you give an executive a diagnostic dashboard, they won’t use it. If you give an operator a single blended score, they can’t fix anything.

How to implement

Dashboard A: Executive scorecard (10-minute view)

Include:

AIR trend (split by prompt type: brand/soft-brand/non-brand)

LLM brand share of voice trend vs top competitors (SOV-A)

Citation rate trend

AI Overviews presence rate trend (for priority keywords)

“Top changes this week” (largest lifts/drops by cluster)

Add a note on data limitations (volatility, sampling) so the dashboard doesn’t over-promise.

Dashboard B: Operator cockpit (diagnostic view)

Include:

drill-down by engine (AIO vs Perplexity vs ChatGPT-style)

prompt cluster performance

citation sources table (which URLs get cited)

“accuracy exceptions” queue (mentions flagged inaccurate)

change log (what content changes happened in the period)

Failure mode

You see a visibility drop but can’t answer: which engine, which cluster, which competitor, which source URL changed?

Example

An operator sees:

AIR down in non-brand prompts

drop concentrated in “implementation” cluster

citations moved from your guide to a competitor’s updated page

That points to a content refresh, not panic.

Best practice 6: Correlate visibility to pipeline with guardrails (and show your work)

Why this matters

You can’t credibly claim causation from a single correlation chart. But you can show evidence that AI visibility moves with downstream indicators, and you can design tests that reduce ambiguity.

How to implement

Use three approaches, in increasing rigor:

Approach 1: Time-series correlation with lag windows

Pick a lag (e.g., 14–28 days) that matches your sales cycle.

compute weekly AIR/CR/SOV-A by cluster

compute weekly organic leads / MQL / SQL

test correlations with lags

Guardrail: include controls (overall organic clicks, branded search volume) to avoid confusing general SEO lift with AI-specific lift.
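To make the lag test concrete, here is a plain-Python sketch that pairs visibility in week t with the outcome in week t + lag and computes a Pearson correlation. It covers only the lag mechanics, not the control variables from the guardrail, and the sample series are fabricated so that the 3-week relationship is exact.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation; None if there are too few points or no variance."""
    n = len(xs)
    if n < 3:
        return None
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return None
    return cov / sqrt(vx * vy)

def lagged_corr(visibility, outcome, lag_weeks):
    """Correlate visibility[t] with outcome[t + lag_weeks] over aligned weekly series."""
    n = min(len(visibility), len(outcome))
    return pearson(visibility[: n - lag_weeks], outcome[lag_weeks:n])

# Fabricated weekly series: SQLs three weeks later track AIR exactly.
air = [0.10, 0.12, 0.15, 0.14, 0.18, 0.20, 0.22, 0.21]
sqls = [5, 6, 4, 10, 12, 15, 14, 18]
corr_lag3 = lagged_corr(air, sqls, 3)
```

With only a handful of weekly points, any coefficient is fragile. Treat the output as a pointer for where to investigate, and try several lags rather than reporting the best one.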

Approach 2: Difference-in-differences content experiments

Treatment group: refresh a set of pages targeting a prompt cluster

Control group: similar pages untouched

Compare visibility lift and lead lift between groups over the same period

This is the cleanest way to get closer to causation without claiming certainty.
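The arithmetic behind a difference-in-differences estimate is short. A minimal sketch with invented AIR values:

```python
def diff_in_diff(treat_before, treat_after, control_before, control_after):
    """Change in the treatment group minus change in the control group."""
    return (treat_after - treat_before) - (control_after - control_before)

# Invented example: refreshed pages went from 0.10 to 0.22 AIR, untouched pages from 0.11 to 0.14.
did_air = diff_in_diff(0.10, 0.22, 0.11, 0.14)  # 0.12 lift minus 0.03 background drift
```

The control group's change stands in for what would have happened anyway (model updates, seasonality), which is why the two groups need to be similar before the refresh.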

Approach 3: Source-path attribution (when available)

If you can identify AI referrals (some platforms pass referrers; sometimes you’ll see it in server logs), segment:

sessions from AI tools

conversion rates from AI sessions vs other channels
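Where referrers are present, a lookup table is enough to start. In the sketch below, the hostname-to-tool mapping is an illustrative assumption: check your own logs for the referrers you actually receive, since many AI sessions arrive with no referrer at all.

```python
from urllib.parse import urlparse

# Illustrative mapping only; confirm against the referrers in your own logs.
AI_REFERRER_HOSTS = {"chatgpt.com": "chatgpt", "perplexity.ai": "perplexity"}

def classify_referrer(referrer):
    """Map a referrer URL to an AI tool label, or 'other' if unknown or missing."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host, "other")

def conversion_rate_by_group(sessions):
    """sessions: dicts with 'referrer' (str) and 'converted' (bool)."""
    totals = {}
    for s in sessions:
        group = "ai" if classify_referrer(s["referrer"]) != "other" else "other"
        n, c = totals.get(group, (0, 0))
        totals[group] = (n + 1, c + int(s["converted"]))
    return {g: c / n for g, (n, c) in totals.items()}

sessions = [
    {"referrer": "https://chatgpt.com/", "converted": True},
    {"referrer": "https://www.perplexity.ai/search?q=x", "converted": False},
    {"referrer": "https://www.google.com/", "converted": False},
    {"referrer": "", "converted": True},
    {"referrer": "https://news.example/", "converted": False},
]
conv = conversion_rate_by_group(sessions)
```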

Failure mode

You build a dashboard that implies “visibility caused revenue” with no disclaimer, and the first skeptical question stalls the program.

Example SQL: minimal viable data model

A simple schema you can implement in a warehouse:

prompt_runs (one row per prompt per run): run_date, engine, prompt_id, prompt_type, cluster_id, brand_mentioned (boolean), brand_cited (boolean), citation_rank (integer, nullable), sentiment_label (nullable), accuracy_label (nullable)

web_events (from GA4 or logs): event_date, landing_page, source, medium, sessions, leads

crm_facts (weekly rollups): week_start, mqls, sqls, pipeline_amount

1) Weekly KPI rollup

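```sql
-- Weekly rollup of visibility KPIs per prompt type and cluster (BigQuery-style syntax).
WITH weekly AS (
  SELECT
    DATE_TRUNC(run_date, WEEK) AS week_start,
    prompt_type,
    cluster_id,
    -- AIR: share of prompt runs where the brand was mentioned
    AVG(CASE WHEN brand_mentioned THEN 1 ELSE 0 END) AS air,
    -- CR: cited runs over mentioned runs; SAFE_DIVIDE returns NULL on a zero denominator
    SAFE_DIVIDE(
      SUM(CASE WHEN brand_cited THEN 1 ELSE 0 END),
      SUM(CASE WHEN brand_mentioned THEN 1 ELSE 0 END)
    ) AS citation_rate,
    -- CPS: mean reciprocal rank over cited runs (AVG ignores NULL ranks)
    AVG(CASE WHEN citation_rank IS NULL THEN NULL ELSE 1.0 / citation_rank END) AS citation_position_score
  FROM prompt_runs
  GROUP BY 1, 2, 3
)
SELECT * FROM weekly;
```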

2) Lagged correlation to SQLs (cluster-level)

```sql
-- Weekly non-brand visibility per cluster.
WITH weekly_vis AS (
  SELECT
    DATE_TRUNC(run_date, WEEK) AS week_start,
    cluster_id,
    AVG(CASE WHEN brand_mentioned THEN 1 ELSE 0 END) AS air_nonbrand,
    SAFE_DIVIDE(
      SUM(CASE WHEN brand_cited THEN 1 ELSE 0 END),
      SUM(CASE WHEN brand_mentioned THEN 1 ELSE 0 END)
    ) AS citation_rate
  FROM prompt_runs
  WHERE prompt_type = 'non_brand'
  GROUP BY 1, 2
),
-- crm_facts has no cluster dimension, so every cluster is compared with the same total SQLs.
weekly_sql AS (
  SELECT week_start, sqls
  FROM crm_facts
)
SELECT
  v.cluster_id,
  CORR(v.air_nonbrand, s.sqls) AS corr_air_vs_sql,
  CORR(v.citation_rate, s.sqls) AS corr_citation_vs_sql
FROM weekly_vis v
JOIN weekly_sql s
  -- Assumes crm_facts.week_start uses the same week boundary as DATE_TRUNC(run_date, WEEK).
  ON s.week_start = DATE_ADD(v.week_start, INTERVAL 21 DAY) -- example 3-week lag
GROUP BY 1;
```

Correlation doesn’t prove causation. But done carefully—with segmentation, lags, and controls—it tells you where to look.

Best practice 7: Add governance so your metrics don’t drift

Why this matters

AI platforms change. Your prompt set will drift. Your definitions will get “interpreted” by different people.

How to implement

Change log: track when prompts are added/removed, when clusters change, and when scoring rubrics change.

QA cadence: sample-review accuracy and sentiment weekly (small sample is fine).

Volatility policy: require a change to persist across two consecutive runs before declaring a trend.
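A volatility policy is easy to encode so nobody has to argue about it. The sketch below takes one possible reading of "two consecutive runs": the last two run-over-run changes must point in the same direction and each exceed a threshold. The function name and the `min_change` parameter are assumptions.

```python
def confirmed_trend(values, min_change=0.0):
    """Return 'up', 'down', or None based on the last two run-over-run changes."""
    if len(values) < 3:
        return None  # not enough runs to see two consecutive changes
    first_change = values[-2] - values[-3]
    second_change = values[-1] - values[-2]
    if first_change > min_change and second_change > min_change:
        return "up"
    if first_change < -min_change and second_change < -min_change:
        return "down"
    return None

trend = confirmed_trend([0.20, 0.18, 0.15])  # two drops in a row
```

Setting `min_change` above zero (for example, a couple of percentage points) keeps small wobbles from being reported as trends.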

Failure mode

A metric jumps and nobody knows whether it’s a real change or a measurement change.

Example

A weekly note in the operator dashboard:

“Added 5 prompts to the ‘implementation’ cluster; AIR not comparable week-over-week; treat as a reset.”

A minimal “measurement starter kit” (what to build in your first 30 days)

If you’re a small team, start here:

Define prompt clusters and prompt types (brand / soft-brand / non-brand)

Establish baseline AIR + citation rate weekly

Track Google AI Overviews presence for 50–100 priority queries (manual or API)

Ship the executive scorecard

Add one correlation view with an explicit lag and a disclaimer

Everything else is iteration.

Where Geneo fits (without turning this into a pitch)

If you want a single workspace to organize prompt tracking, visibility KPIs, and AI Overviews monitoring, you can explore Geneo and use the templates above to evaluate any approach consistently.

FAQ: GEO/AEO measurement

What’s the single best KPI for AI search visibility?

There isn’t one. The minimum defensible set is: answer inclusion rate (split by prompt type), share of voice vs competitors, and citation rate. If you’re not separating brand vs non-brand prompts, your “best KPI” will be misleading.

Can I measure AI Overviews in Google Search Console?

Not directly. Search Console doesn’t currently provide a clean dimension for “AI Overview present” or “cited in AI Overview,” so teams pair GSC with separate AIO tracking, as described in Search Engine Journal’s guide to tracking Google AI Overviews.

How often should I run prompt tracking?

Weekly is a practical starting point for SMB teams. AI outputs are volatile, so you should require at least two consecutive runs before declaring a trend.

How do I avoid making decisions on noisy data?

Use prompt clusters, stabilize your core prompt set, segment branded vs non-branded prompts, and implement a volatility policy (e.g., two-run confirmation). Also track changes to your measurement system in a log.

Does higher AI visibility always reduce website traffic?

Not always. Some queries become more zero-click; others still drive clicks through citations or follow-up research behavior. That’s why you track visibility and engagement separately.