Client‑Specific Prompts & Keyword Sets for AI Exposure Tracking

Learn how to define, standardize, and measure client‑specific prompts & keyword sets for effective AI exposure tracking across major answer engines.

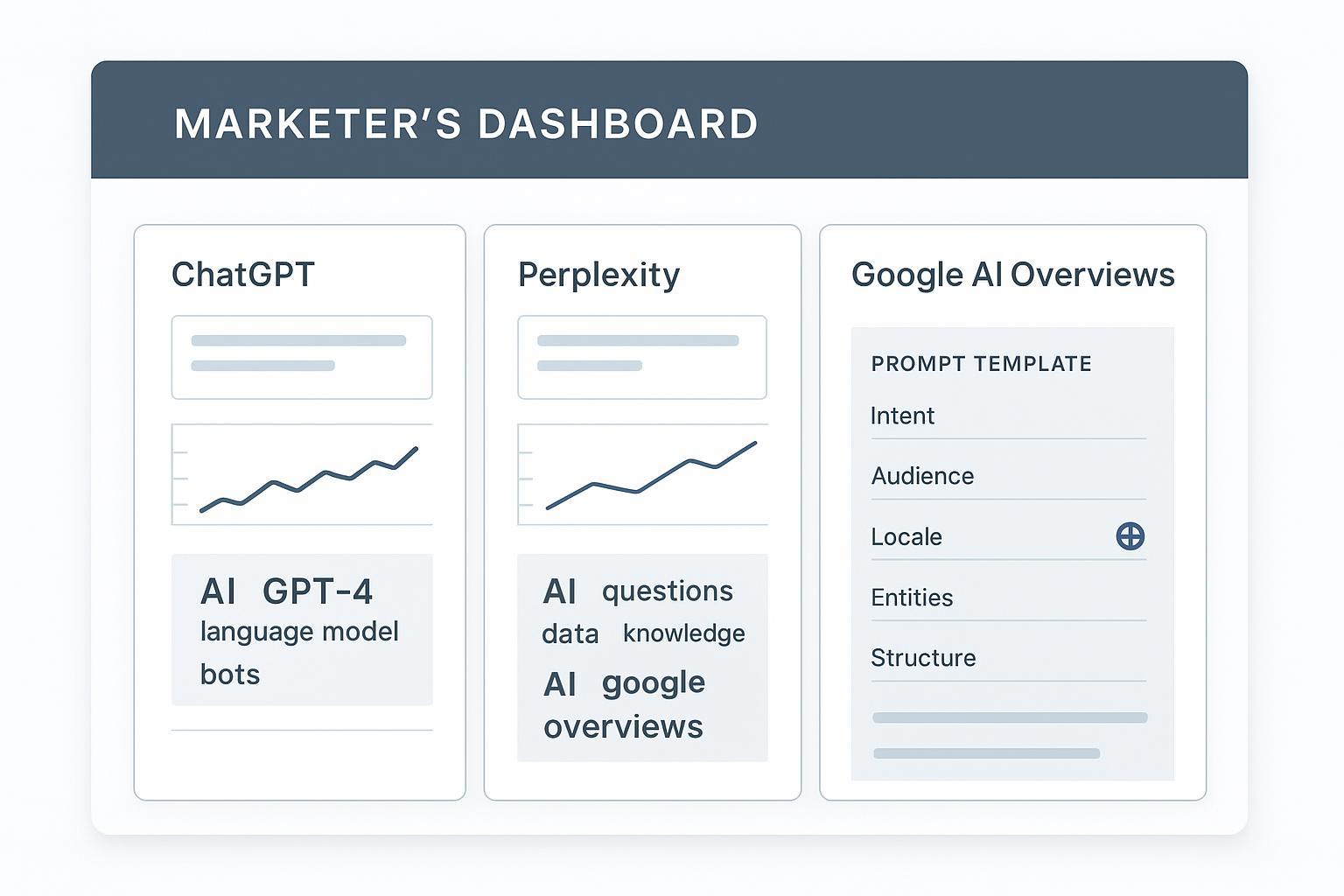

How do you design prompts and keyword clusters that reliably reflect a client’s goals—and then measure whether those inputs actually move visibility across AI answers? This guide defines the core terminology, lays out a reproducible agency workflow, and shows how to report citations, mentions, and Share of Voice across ChatGPT, Perplexity, and Google AI Overviews.

Definitions: The building blocks

A client‑specific prompt is a reproducible text input that encodes user intent (informational, commercial, transactional), audience notes, locale, structure expectations, and explicit semantic/brand entities so outputs remain comparable across time and platforms. Think of it as a mini content brief. Practitioners formalize this with user intent, semantic keywords, and content structure—principles documented in Page One Power’s prompt optimization framework (2025).
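
To make the "mini content brief" idea concrete, here is a minimal sketch of a versioned prompt record. The class name, field names, and rendered wording are illustrative assumptions, not a format prescribed by any platform or by the framework cited above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class PromptTemplate:
    """Hypothetical versioned prompt record; all field names are illustrative."""
    version: str
    intent: str              # "informational", "commercial", or "transactional"
    audience: str
    locale: str              # e.g. "en-US"
    primary_keyword: str
    entities: List[str] = field(default_factory=list)
    structure: str = "short answer followed by a bulleted list"

    def render(self) -> str:
        """Build the prompt text from the fields, so equal fields give equal text."""
        entity_part = ", ".join(self.entities) if self.entities else "none specified"
        return (
            f"[{self.intent} | {self.locale} | audience: {self.audience}] "
            f"Answer a question about '{self.primary_keyword}'. "
            f"Relevant entities: {entity_part}. "
            f"Format: {self.structure}."
        )


# Example with a made-up brand entity
template = PromptTemplate(
    version="v1",
    intent="commercial",
    audience="IT managers",
    locale="en-US",
    primary_keyword="crm software",
    entities=["Acme CRM"],
)
prompt_text = template.render()
```

Because the text is derived only from the stored fields, two runs with the same template version send the same input, which is what keeps outputs comparable over time.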

A keyword set (cluster) is layered around a topic/prompt and includes four parts: primary keyword(s), semantic/entity variants, natural question/long‑tail forms, and branded phrases/product names plus canonical URLs for citation detection. Topic clusters help establish topical authority and machine interpretability for AI‑driven SEO, as outlined by SMAMarketing’s guidance on topic clusters for AI search (2025).
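
The four layers can be held in one small structure. The sketch below is one possible shape, assuming a simple case-insensitive substring match for branded phrases; the class, its fields, and the sample brand are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class KeywordCluster:
    """Hypothetical container for one layered keyword set."""
    topic: str
    primary: List[str]
    semantic_variants: List[str] = field(default_factory=list)
    questions: List[str] = field(default_factory=list)
    branded: List[str] = field(default_factory=list)
    canonical_urls: List[str] = field(default_factory=list)

    def branded_hits(self, answer_text: str) -> List[str]:
        """Return branded phrases found in the answer (case-insensitive substring match)."""
        lowered = answer_text.lower()
        return [phrase for phrase in self.branded if phrase.lower() in lowered]


# Example with made-up values
cluster = KeywordCluster(
    topic="crm software",
    primary=["crm software"],
    semantic_variants=["customer relationship management tool"],
    questions=["what is the best crm for small teams?"],
    branded=["Acme CRM"],
    canonical_urls=["https://example.com/crm"],
)
hits = cluster.branded_hits("Popular options include acme crm and others.")
```

Substring matching is deliberately naive; a production setup would likely need an entity dictionary to handle abbreviations and misspellings.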

AI exposure is the presence and prominence of your brand, pages, or entities inside AI‑generated answers, measured via explicit citations/links, text snippet matches, and visibility signals in the response. For a broader primer on why this matters, see AI visibility defined.

Why standardize and map to intent and locale

Standardization isn’t busywork—it’s how you make results comparable and trustworthy. Prompts should be versioned, templated, and mapped to funnel intents and locales. GEO (Generative Engine Optimization) borrows SEO fundamentals but adapts for AI answer engines, advocating people‑first content and strong crawlability and schema; see Siddharth Bharath’s overview of GEO (2025). Templates ensure that when models change or platforms update citation behavior, your inputs stay consistent enough to attribute changes to real factors, not noise.

Platform nuances you must account for

Different engines handle sources and citations differently. Understanding these mechanics makes your tracking more credible.

| Platform | Citation display | How sources are selected | Notes |
|---|---|---|---|
| Google AI Overviews / AI Mode | Overviews show helpful links near synthesized answers; AI Mode surfaces sources in a sidebar | Gemini models fan out sub‑queries; prioritizes indexed, crawlable, policy‑compliant content | Guidance emphasizes helpful, reliable content; controls via robots/noindex/snippet. See Google Search Central’s AI features docs (2025). |
| Perplexity | Numbered, clickable citations embedded in answers | Real‑time web search and summarization from authoritative sources; Deep Research can refine selection | Citations are core trust signals; see Perplexity’s docs and Deep Research announcement (2025). |
| ChatGPT (OpenAI) | With web access, responses can include links and a globe icon indicating consulted sources | ChatGPT Search/Atlas retrieves web sources; citation formatting is variable | See OpenAI’s pages on ChatGPT Search and Atlas (2024–2025) for behavior details. |

Additional comparative insight: Semrush analyzed citation patterns across AI Modes and found domain reputation often outweighs exact organic rank, with Reddit appearing frequently; Google AI Mode aligns more with ChatGPT than with AI Overviews. See Semrush’s AI Mode comparison study (2025).

Operational workflow: An agency playbook

- Define versioned prompt templates
  - Include fields: intent, audience, locale, primary keyword, semantic/entity tokens, required structure, and an instruction to include source URLs when supported (Perplexity/AI Overviews).
- Build layered keyword clusters per prompt
  - For each topic: primary keywords; semantic/entity variants; natural questions; branded phrases and canonical URLs to aid citation detection.
- Map prompts to funnel intents and locales
  - Separate informational vs commercial vs transactional; set language/country to mirror market coverage and campaign strategy.
- Run cross‑engine checks on a schedule
  - Weekly runs across ChatGPT (with web access), Perplexity, and AI Overviews; record timestamp and model/mode.
- Capture outputs and metadata
  - Store full text, citations/links, brand mentions (including sentiment), platform/mode, locale, prompt version; repeat runs to smooth out the effect of non‑deterministic outputs.
- Normalize and store consistently
  - Use clear SOPs: consistent position weighting, locale codes, entity dictionaries, and canonical URL lists.
- Govern and audit
  - Adhere to platform terms of service, respect rate limits, and document any prompt/version changes for comparability.
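
The capture and normalization steps can be sketched as a small record type plus a URL normalizer. This is a minimal illustration under stated assumptions: the record fields, the helper names, and the normalization rule (drop scheme, `www.`, query, fragment, and trailing slash) are hypothetical choices, and cited URLs are assumed to include a scheme such as `https://`.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List
from urllib.parse import urlsplit


def normalize_url(url: str) -> str:
    """Reduce a URL to host + path so matching ignores scheme, 'www.', query, fragment, and trailing slash."""
    parts = urlsplit(url.strip())
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return f"{host}{parts.path.rstrip('/')}"


@dataclass
class RunRecord:
    """Hypothetical stored result of one prompt run on one engine."""
    platform: str
    mode: str
    locale: str
    prompt_version: str
    answer_text: str
    cited_urls: List[str]
    captured_at: str  # ISO 8601 timestamp in UTC


def make_record(platform: str, mode: str, locale: str, prompt_version: str,
                answer_text: str, cited_urls: List[str]) -> RunRecord:
    """Attach a UTC timestamp and store citations in normalized form."""
    return RunRecord(
        platform=platform,
        mode=mode,
        locale=locale,
        prompt_version=prompt_version,
        answer_text=answer_text,
        cited_urls=[normalize_url(u) for u in cited_urls],
        captured_at=datetime.now(timezone.utc).isoformat(),
    )
```

Storing the normalized form alongside the prompt version means a later comparison against the canonical URL list does not depend on how each engine happened to format its links.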

Measurement and reporting: KPIs that hold up

Share of Voice (SOV) in AI answers

- Practical definition: the percentage of a brand’s mentions—weighted by position and prompt volume—relative to all tracked brands in AI responses. Semrush’s materials discuss AI visibility and SOV as mention share in AI answers; disclose your weighting method. See Semrush’s AI visibility overview (2025).

- Example formula (disclose as methodology): SOV = (Σ weighted_mentions_brand / Σ weighted_mentions_all_brands) × 100, where weighted_mentions = frequency × position_weight × prompt_volume_weight.
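
The example formula translates directly into a few lines of code. In this sketch the `Mention` fields mirror the formula's terms; the specific weight values in the example are made up, and how you derive position and prompt-volume weights remains a methodology choice to disclose.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple


class Mention(NamedTuple):
    brand: str
    frequency: int               # times the brand appears in one response
    position_weight: float       # assumption: higher for earlier placement
    prompt_volume_weight: float  # assumption: higher for higher-volume prompts


def share_of_voice(mentions: Iterable[Mention]) -> Dict[str, float]:
    """SOV per brand = brand's weighted mentions / all brands' weighted mentions x 100."""
    totals: Dict[str, float] = defaultdict(float)
    for m in mentions:
        totals[m.brand] += m.frequency * m.position_weight * m.prompt_volume_weight
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {brand: 0.0 for brand in totals}
    return {brand: 100.0 * weight / grand_total for brand, weight in totals.items()}


# Example with made-up brands and weights
sov = share_of_voice([
    Mention("BrandA", 2, 1.0, 1.0),  # weighted: 2.0
    Mention("BrandA", 1, 0.5, 2.0),  # weighted: 1.0
    Mention("BrandB", 1, 1.0, 1.0),  # weighted: 1.0
])
# sov == {"BrandA": 75.0, "BrandB": 25.0}
```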

AI Citation Rate (custom metric)

- No industry standard exists yet. A practical, clearly labeled approach: AI Citation Rate = (Number of responses that include at least one valid citation to your site or brand entity / Total responses) × 100. Define validity (e.g., canonical URL match or domain + entity match) and disclose the method.
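
A minimal sketch of this calculation follows. It assumes a simplified validity rule, namely that a citation counts when its domain matches one of the client's domains; a stricter canonical URL or domain + entity rule could be swapped in. The function names are hypothetical.

```python
from typing import List, Set
from urllib.parse import urlsplit


def _domain(url: str) -> str:
    """Lowercased host without a leading 'www.'; empty string if the URL has no host."""
    host = (urlsplit(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def ai_citation_rate(citations_per_response: List[List[str]], client_domains: Set[str]) -> float:
    """Percent of responses with at least one citation whose domain is in client_domains."""
    if not citations_per_response:
        return 0.0
    cited = sum(
        1
        for urls in citations_per_response
        if any(_domain(u) in client_domains for u in urls)
    )
    return 100.0 * cited / len(citations_per_response)


# Example: one of four responses cites the (made-up) client domain
rate = ai_citation_rate(
    [
        ["https://www.example.com/guide", "https://other.org/post"],
        ["https://other.org/post"],
        [],
        ["https://another.net/"],
    ],
    {"example.com"},
)
# rate == 25.0
```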

Prominence Score (custom metric)

- Combine position, snippet length, and whether a citation to your domain is present; normalize to [0,1]. Again, methodology disclosure is key. For improving prominence via content quality and schema, see Optimize for AI citations: step‑by‑step guide.
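
One way to combine the three signals is a weighted average of components that each lie in [0, 1]. Everything numeric in this sketch is an assumption for illustration: the linear position decay, the 300-character snippet cap, and the 0.5/0.3/0.2 weights.

```python
from typing import Tuple


def prominence_score(
    position: int,
    total_positions: int,
    snippet_chars: int,
    has_citation: bool,
    max_snippet_chars: int = 300,                        # assumed cap
    weights: Tuple[float, float, float] = (0.5, 0.3, 0.2),  # assumed weights
) -> float:
    """Weighted average of position, snippet length, and citation presence, in [0, 1]."""
    if position < 1 or position > total_positions:
        raise ValueError("position must be between 1 and total_positions")

    # Position: 1.0 for the first slot, decreasing linearly to 0.0 for the last.
    if total_positions == 1:
        position_component = 1.0
    else:
        position_component = 1.0 - (position - 1) / (total_positions - 1)

    # Snippet length: clamped to [0, max_snippet_chars], then scaled to [0, 1].
    clamped = min(max(snippet_chars, 0), max_snippet_chars)
    length_component = clamped / max_snippet_chars

    citation_component = 1.0 if has_citation else 0.0

    w_pos, w_len, w_cit = weights
    weighted = (
        w_pos * position_component
        + w_len * length_component
        + w_cit * citation_component
    )
    return weighted / (w_pos + w_len + w_cit)
```

Dividing by the sum of the weights keeps the result in [0, 1] even if the weights are later changed to values that do not sum to 1.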

Volatility and coverage

- Track week‑over‑week changes by platform and locale (volatility) and the percent of prompts where your brand appears (coverage). For setting baselines, see Run an AI visibility audit. For logging locales and prompt versions, see AEO Best Practices 2025.
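
Both measures reduce to simple arithmetic once runs are stored consistently. The sketch below assumes coverage is computed from one boolean per tracked prompt and that week-over-week change is expressed in percentage points per (platform, locale) pair; the function names and sample values are hypothetical.

```python
from typing import Dict, List, Tuple

Key = Tuple[str, str]  # (platform, locale)


def coverage(brand_appeared: List[bool]) -> float:
    """Percent of tracked prompts where the brand appears."""
    if not brand_appeared:
        return 0.0
    return 100.0 * sum(brand_appeared) / len(brand_appeared)


def week_over_week(previous: Dict[Key, float], current: Dict[Key, float]) -> Dict[Key, float]:
    """Percentage-point change for each (platform, locale) present in both weeks."""
    return {key: current[key] - previous[key] for key in current if key in previous}


# Example with made-up values
this_week = coverage([True, False, True, True])  # 75.0
changes = week_over_week(
    {("perplexity", "en-US"): 50.0},
    {("perplexity", "en-US"): 62.5, ("chatgpt", "en-US"): 10.0},
)
# changes == {("perplexity", "en-US"): 12.5}
```

Pairs that appear in only one of the two weeks are skipped, since a change cannot be computed without a baseline.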

Troubleshooting low exposure

If mentions or citations are sparse, start with content and entities:

- Ensure crawlability, clarity, and helpfulness; align with people‑first guidance from Google and platform docs.

- Strengthen entity signals: consistent names, schema markup, and canonical URLs.

- Expand your cluster: answer natural questions and long‑tails; add supporting pages that reinforce topical authority.

- Review citation‑friendly formatting: publish original data, how‑tos, and references that engines prefer to cite.

Practical micro‑example (with disclosure)

An agency can log prompt versions and locale settings, then run weekly checks across ChatGPT, Perplexity, and AI Overviews using a monitoring platform that supports citation capture and SOV rollups. Disclosure: Geneo (Agency) is our product. Geneo can be used to store prompt templates, track AI mentions/citations, and compute Share of Voice over time. For a neutral overview of dashboard‑level rollups and metrics, see Geneo (Agency) product overview.

Ethics and governance

Respect each platform’s terms of service, avoid heavy automation that risks exceeding rate limits, and document every change to prompts, locales, and versions. Reproducibility matters: without a clear audit trail, trends are hard to trust.

Ready to put this into practice? Start by drafting one versioned prompt template per core topic, build its layered keyword set, and run a small weekly panel across the three engines. What will you learn about your clients’ visibility in the first four weeks?