Best Generative Engine Optimization Tools for 2026

Discover 7 top Generative Engine Optimization tools for 2026. Compare AI visibility tracking and content impact. See rankings and use cases.

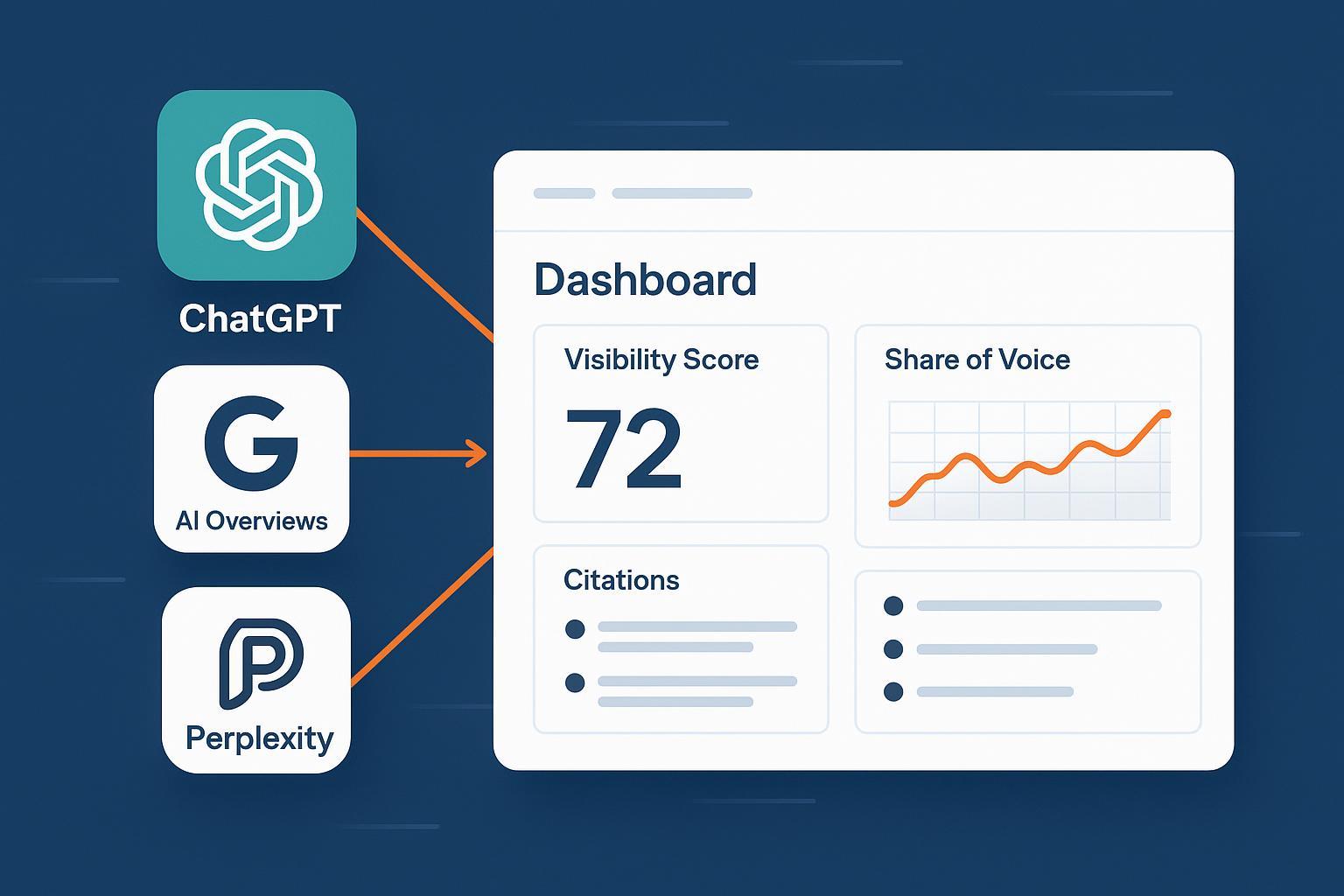

Generative Engine Optimization (GEO) is about earning visibility and recommendations inside AI answer engines—ChatGPT, Google AI Overviews/AI Mode, Perplexity, Copilot, Gemini, and others. Unlike SEO, which targets web search rankings, GEO focuses on how large language models cite sources, mention brands, and recommend products in synthesized answers. If your buyers start their research in AI assistants, you need to measure your presence there and act on it.

Key takeaways

Separate “Trackers” (measure AI visibility across engines) from “Optimizers” (improve content/technical signals to raise visibility).

Rank tools primarily on two criteria: the accuracy and coverage of their AI visibility tracking, and the content performance impact they enable.

Enterprise teams should weigh compliance and reporting; agencies need exports, white-label reporting, and competitive benchmarking.

Start with an AI visibility audit, then prioritize queries and sources shaping AI answers before scaling content experiments.

How we ranked (methodology)

We evaluated tools using weighted criteria: 40% accuracy and coverage of AI visibility tracking; 35% content performance impact tied to GEO outcomes; 10% reporting quality; 10% integrations (CRM/MAP/analytics); 5% compliance/governance. We grouped tools into two segments: Trackers (multi-engine visibility monitoring) and Optimizers (content workflows that support GEO results). For context on definitions, see the primer on AI visibility and brand exposure in AI search (2026).
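To make the weighting concrete, here is a minimal sketch of how the five weights combine into a single score. The 0–10 rating scale, the criterion keys, and the example ratings are assumptions for illustration only; they are not our actual evaluation data.

```python
# Weights as stated in the methodology; they sum to 1.0.
WEIGHTS = {
    "tracking_accuracy_coverage": 0.40,
    "content_performance_impact": 0.35,
    "reporting_quality": 0.10,
    "integrations": 0.10,
    "compliance_governance": 0.05,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 0-10 scale) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Hypothetical ratings for an imaginary tool (not real evaluation data).
example_ratings = {
    "tracking_accuracy_coverage": 8.0,
    "content_performance_impact": 6.0,
    "reporting_quality": 7.0,
    "integrations": 5.0,
    "compliance_governance": 9.0,
}

score = weighted_score(example_ratings)
print(round(score, 2))  # 3.2 + 2.1 + 0.7 + 0.5 + 0.45 = 6.95
```

Because tracking and content impact together carry 75% of the weight, a tool with strong reporting or compliance but weak tracking cannot rank highly under this scheme.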

Disclosure: Geneo is our product.

Trackers: Multi-engine AI visibility monitoring

Profound

Positioning: Enterprise AI visibility platform with multi-engine tracking, prompt-volume datasets, and compliance features.

Capabilities: Tracks 10+ answer engines (e.g., ChatGPT, Google AI Overviews/Mode, Gemini, Copilot, Perplexity, Grok) with front-end monitoring designed to reflect real user experiences. Dashboards include visibility score, share of voice, citations, sentiment, and historical trends. Prompt Volumes help identify high-intent queries; enterprise controls include SOC 2 Type II and SSO/SAML.

Best for: Regulated enterprises and teams that need audited methods and cross-engine benchmarking.

Pros: Wide engine coverage; strong datasets on prompts; compliance posture for larger orgs.

Cons: Pricing is custom and may be higher than self-serve tools; the platform's complexity requires onboarding.

Pricing (subject to change): Enterprise/custom.

Evidence: Engine coverage and approach in front-end vs API monitoring articles (2025), Grok support in product updates (2025), and compliance in SOC 2 announcement (2025).

Geneo

Positioning: GEO platform for monitoring brand visibility across ChatGPT, Google AI Overviews, and Perplexity, with competitive analysis and agency-ready reporting.

Capabilities: Multi-engine monitoring with scheduled queries, a Brand Visibility Score aggregating mentions/citations and sentiment, competitor benchmarking, and white-label reporting on custom domains for agencies.

Best for: Agencies needing executive-ready visibility reports and brands looking to validate share of voice and citation gains.

Pros: Focused multi-engine tracking (ChatGPT, Perplexity, AI Overviews) and competitive benchmarking; white-label dashboards for client delivery.

Cons: Coverage is centered on leading engines; advanced governance features are lighter than enterprise-first platforms.

Pricing (subject to change): From ~$129/month (Starter), ~$249/month (Growth), ~$499/month (Scale).

Evidence: Overview and pricing in the Geneo review: AI search visibility tracking (2025) and the agency portal explainer (2026). Methodology context: how to run an audit in step-by-step AI visibility audit for agencies (2026).

SE Ranking

Positioning: Broad SEO suite with AI Overviews and cross-engine visibility tracking embedded in familiar workflows.

Capabilities: The AI Overviews/AI Results Tracker monitors AIO presence, sources, volatility, and competitor comparisons. The AI Visibility Tracker (SE Visible) expands to ChatGPT, Gemini, Perplexity, and AI Mode/Overviews with dashboards for mentions, links, sentiment, positions, and history, plus exports.

Best for: Marketing teams wanting AIO plus multi-engine visibility inside an SEO platform they already use.

Pros: Accessible dashboards and exports; ties into rank tracking and GSC analyses; good documentation.

Cons: Coverage depth varies by engine; advanced compliance features are limited compared to enterprise platforms.

Pricing (subject to change): SE Visible from ~$189/month (Core) with prompt and brand limits; AI add-ons from ~$89/month; main SE Ranking plans from ~$65/month.

Evidence: Official pages for AI Visibility Tracker and SE Visible (2025–2026); AIO methodology in AI Overviews analysis guides (2025).

Semrush

Positioning: AI Visibility Toolkit and Enterprise AIO paired with deep search datasets and AIO research.

Capabilities: Visibility monitoring (mentions, citations, prompts, share of voice, sentiment) and position tracking with daily change detection; free AI Search Visibility Checker for quick checks. Semrush’s 2025 study analyzing 10M+ keywords provides context on AIO incidence and click behavior.

Best for: Teams that want visibility monitoring integrated with industry-leading SEO data and analysis.

Pros: Strong research foundation; wide ecosystem and integrations; enterprise modules available.

Cons: Toolkit features may be add-ons; enterprise features require demos/custom pricing.

Pricing (subject to change): AI Visibility Toolkit ~$99/month as an add-on; Enterprise AIO custom.

Evidence: The Semrush AI Overviews study of 10M+ keywords (2025) and AI Visibility Toolkit documentation.

Peec

Positioning: AI search analytics focused on mapping which sources shape AI answers and how to drive visibility growth.

Capabilities: Tracks mentions/citations, visibility vs competitors, sentiment, and the domains that shape LLM outputs. Documentation references coverage of ChatGPT, Perplexity, Gemini, Claude, Grok, and others; Looker Studio connector supports reporting.

Best for: Marketing teams prioritizing source analysis to decide where to win citations next.

Pros: Emphasis on sources and actionable insights; reporting connectors.

Cons: Coverage details are spread across docs/blogs; pricing specifics are less transparent.

Pricing (subject to change): Public tiers on the site; plan specifics vary by prompts/brands.

Evidence: Funding and focus covered by TechCrunch’s report on Peec’s $21M raise (2025); features in docs overviews (2025).

Optimizers: Content workflows supporting GEO outcomes

Surfer

Positioning: SEO content optimization suite with an AI Tracker add-on for cross-engine visibility monitoring.

Capabilities: AI Tracker supports ChatGPT, Google AI Overviews/Mode, Gemini, and Perplexity, with daily refreshes and prompt-level insights. Visibility metrics include mention rate, average position, and visibility score; Compare lets you benchmark competitors. Paired with Content Editor and internal linking, it helps teams act on insights.

Best for: Content-focused teams seeking lightweight visibility monitoring inside their optimization stack.

Pros: Integrated optimization workflow; approachable dashboards; frequent product updates.

Cons: Coverage depth and governance are lighter than enterprise trackers; pricing varies by tier/add-ons.

Pricing (subject to change): Plans at surferseo.com/pricing; public ranges historically ~$79–$175/month.

Evidence: AI Tracker pages and updates: Surfer AI Tracker (2025–2026).

QuickCreator

Positioning: AI-driven content and site creation platform for scaling SEO-friendly blogs and landing pages.

Capabilities: Long-form AI blog generation with headings/meta/internal links, multilingual support, block-based editor, real-time SEO analysis, and one-click publishing. Templates help launch landing pages without code; basic analytics track performance.

Best for: Small teams needing to scale content output and simple sites fast.

Pros: Fast content production; multilingual; accessible templates and publishing.

Cons: Not an AI visibility tracker; analytics are more site-level than LLM-specific.

Pricing (subject to change): Tiers include Free, Starter, Pro, and Enterprise; confirm the latest rates on the QuickCreator pricing page.

Evidence: Feature and pricing references on QuickCreator’s official pages (2025–2026).

FAQ: Trackers vs optimizers, and how to start

When should you choose a tracker over an optimizer? If you can’t quantify your brand’s presence in AI answers across engines, start with a tracker to establish baselines—mentions, citations, share of voice, and source domains. Then use optimizers to improve content targeting the queries and sources that matter.

How do you start an AI visibility audit? Define target queries by buyer journey stage, run neutral prompts across engines, capture mentions/citations, and compare against competitors. For a step-by-step walkthrough, see how to perform an AI visibility audit for your brand (2026). To measure downstream outcomes, pair visibility tracking with analytics—see best practices for tracking and analyzing AI traffic (2025).
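If you capture answers by hand before adopting a tool, the audit arithmetic can be sketched in a few lines. This is a rough illustration, not how any tool listed here computes its metrics: the `CapturedAnswer` record, the brand names, the `.example` domains, and the case-insensitive substring matching are all assumptions, and real answers would need more careful brand matching.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class CapturedAnswer:
    """One AI answer recorded during the audit (hypothetical structure)."""
    engine: str
    prompt: str
    text: str
    cited_domains: list[str] = field(default_factory=list)


def mention_counts(answers, brands):
    """Count answers that mention each brand (naive substring match)."""
    counts = Counter({brand: 0 for brand in brands})
    for answer in answers:
        lowered = answer.text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    return counts


def mention_rate(counts, total_answers):
    """Fraction of all captured answers that mention each brand."""
    return {b: (n / total_answers if total_answers else 0.0) for b, n in counts.items()}


def share_of_voice(counts):
    """Each brand's share of all brand mentions across the answer set."""
    total = sum(counts.values())
    return {b: (n / total if total else 0.0) for b, n in counts.items()}


def citation_counts(answers):
    """Count how often each domain is cited across answers."""
    return Counter(d for a in answers for d in a.cited_domains)


# Hypothetical captured answers; brands and domains are made up.
answers = [
    CapturedAnswer("engine-a", "best crm for startups", "Acme and Globex are both popular.", ["acme.example"]),
    CapturedAnswer("engine-a", "crm with email sync", "Many teams choose Globex.", ["globex.example", "reviews.example"]),
    CapturedAnswer("engine-b", "best crm for startups", "There are several options to consider."),
    CapturedAnswer("engine-b", "crm with email sync", "Globex is a common pick.", ["reviews.example"]),
    CapturedAnswer("engine-c", "best crm for startups", "It depends on your budget."),
]

counts = mention_counts(answers, ["Acme", "Globex"])
rates = mention_rate(counts, len(answers))
sov = share_of_voice(counts)
citations = citation_counts(answers)

print(rates)                     # Acme in 1 of 5 answers, Globex in 3 of 5
print(sov)                       # Acme 0.25, Globex 0.75
print(citations.most_common(1))  # reviews.example is cited twice
```

The cited-domain tally is often the most actionable output at this stage, since it shows which third-party sources shape the answers and where earning a citation might matter most.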

Next steps

If your team needs multi-engine visibility tracking with competitive benchmarking and agency-ready reporting, consider starting a pilot with Geneo to run your first 60–90 day GEO program—set baselines, prioritize engines and queries, and quantify impact on content performance. Then expand to enterprise trackers or optimizer workflows as your needs evolve.