Best Answer Engine Optimization for AI (2025): Expert Guide

Discover 2025’s authoritative AEO best practices—expert frameworks, real data, and actionable tactics for optimizing brands in AI answers across Google, ChatGPT, and Perplexity.

The way people get answers has changed faster than most teams have updated their playbooks. Organizational AI usage climbed sharply in 2024, with 78% of organizations reporting AI adoption and 71% using generative AI in at least one function, according to the Stanford HAI AI Index (2025). At the same time, the open web is receiving fewer clicks from search. SparkToro’s 2024 zero-click study observed only 360 clicks to the open web per 1,000 U.S. Google searches (374 per 1,000 in the EU). And when an AI summary appears, users are even less likely to click: Pew Research (2025) found that users clicked a traditional result link on 8% of visits with an AI summary vs. 15% of visits without one, and clicked a link inside the AI summary itself on only about 1% of visits. If your content strategy still measures success by blue-link rankings alone, you’re flying blind.

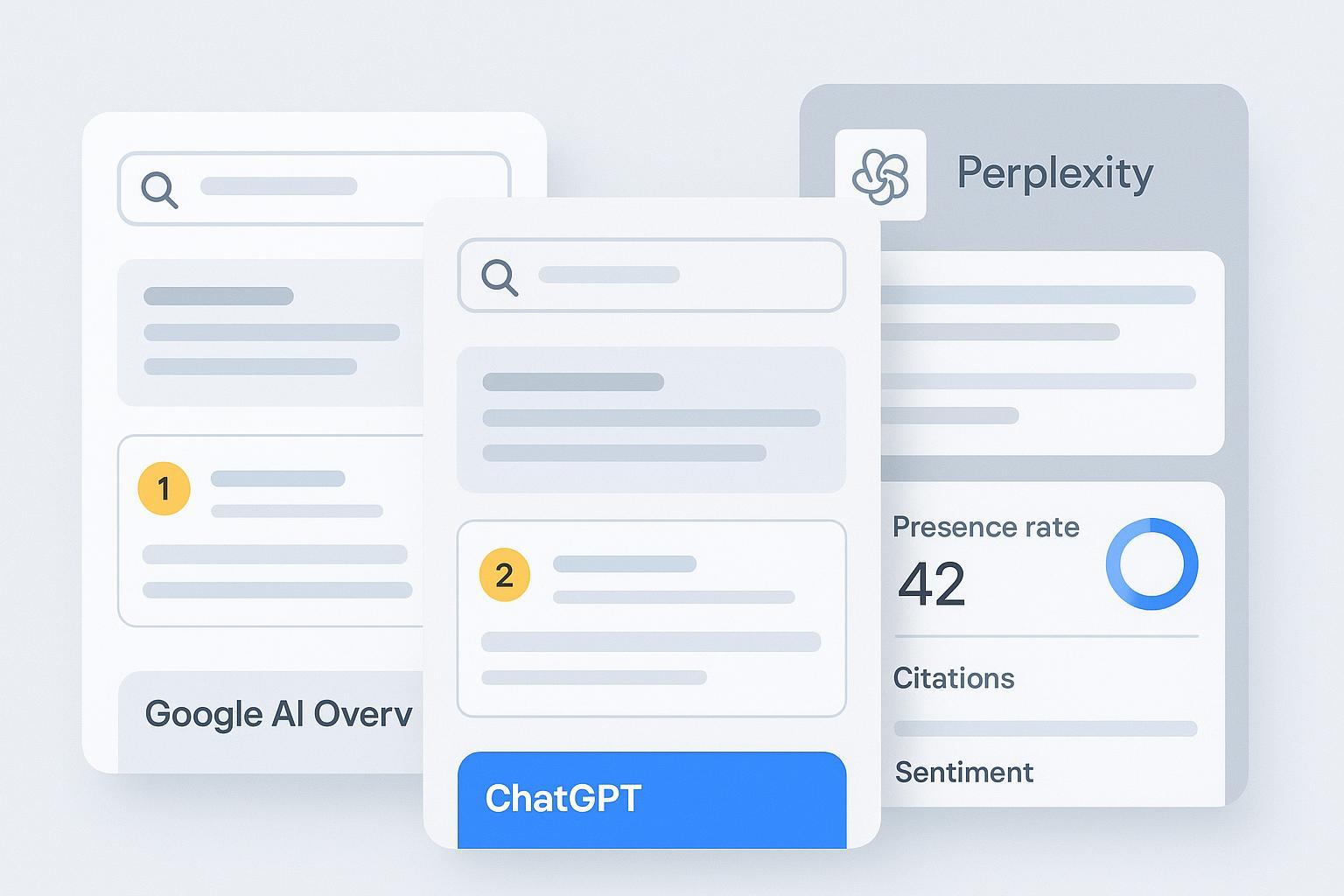

Here’s the thesis: Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO) are not cosmetic rebrands of SEO. They’re a shift in objectives—from ranking to being included, cited, and correctly represented inside AI answers across Google AI Overviews/AI Mode, ChatGPT Search, and Perplexity.

AEO vs. SEO: What actually changes

Traditional SEO optimizes for rankings and click‑through. AEO/GEO optimizes for:

Inclusion as a supporting source in AI answers

Correct brand mentions and link attribution

Favorable, accurate framing of your expertise

Share of voice across engines compared with competitors

This KPI shift is grounded in user behavior. The SparkToro 2024 study quantified the zero-click reality, and Pew’s 2025 analysis showed that AI summaries reduce clicks on traditional results while the links inside the summary itself are rarely clicked. So your dashboard should elevate presence rate, citation count, attribution accuracy, and sentiment, not just impressions and CTR.

Platforms also behave differently. Google says there are no special technical requirements to appear in AI features beyond standard Search eligibility. You should still align with people‑first content and page experience, and you can control exposure with snippet directives (nosnippet, data‑nosnippet, max‑snippet) or noindex if you truly need to opt out. OpenAI’s ChatGPT Search presents explicit source attributions and has content partnerships with major publishers in news categories. Perplexity includes numbered citations for each answer and supports real‑time retrieval. These differences matter when you craft and measure content.

For a step-by-step walkthrough of how to make content citable, including extractable structure and schema, see the post on optimizing content for AI citations.

A cross‑engine best‑practice blueprint

Use this blueprint as your default operating system. Think of it as designing crisp answer blocks that both humans and LLMs can extract with minimal friction.

Answer-first formatting: Lead with a 1–2 sentence direct answer (inverted pyramid), then expand. Use question-based H2/H3s, short paragraphs, and occasional lists/tables. Comparative tests reported by Search Engine Land (2025) suggest succinct, well-labeled passages are more likely to be cited.

Structured data aligned to visible content: Implement JSON-LD (Article with author/org, FAQPage, HowTo) that reflects what’s on the page. Google’s AI features guidance doesn’t claim schema guarantees inclusion, but structured data helps systems parse content and represent it appropriately.

Technical readiness: Ensure indexability, canonicalization, mobile-first UX, and strong Core Web Vitals. Avoid blocking snippets if you want eligibility in AI summaries; snippet controls can reduce or remove exposure.

Authority and verifiability: Add expert bylines and bios, cite 1–2 high-quality sources for major claims, and include first-party data where possible. Engines favor content that can be traced and verified.

Freshness cadence: Update time-sensitive pages on a predictable schedule and surface update notes. Real-time engines (like Perplexity) tend to reflect updates faster than traditional SEO cycles.

Measurement loop: Build a prompt library that covers core intents across engines. Log answers with evidence (citations, mentions, links, and tone) so you can track presence rate, citation accuracy, and share of voice. Iterate content based on what engines actually show.
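To make the prompt library concrete, here is a minimal sketch of one way to store each prompt once and expand it into a run sheet with identical wording per engine. The names `Prompt`, `ENGINES`, and `build_run_sheet` are hypothetical and chosen for illustration only; they do not come from any tool mentioned in this guide.

```python
from dataclasses import dataclass
from itertools import product

# Engines covered in this guide; the identifier strings are illustrative.
ENGINES = ("google_ai_overviews", "chatgpt_search", "perplexity")


@dataclass(frozen=True)
class Prompt:
    theme: str   # e.g. "aeo-basics"
    intent: str  # "commercial", "informational", or "how-to"
    text: str    # identical wording is used for every engine


def build_run_sheet(prompts, engines=ENGINES):
    """Return one row per (prompt, engine) pair, ready to be filled in by an audit."""
    return [
        {"engine": engine, "theme": p.theme, "intent": p.intent, "prompt": p.text}
        for p, engine in product(prompts, engines)
    ]


library = [
    Prompt("aeo-basics", "informational", "What is answer engine optimization?"),
    Prompt("aeo-tools", "commercial", "Best tools for tracking AI citations"),
]
run_sheet = build_run_sheet(library)
print(len(run_sheet))  # 2 prompts x 3 engines = 6 rows
```

Keeping the wording in one place is what makes week-over-week and engine-to-engine comparisons meaningful.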

For a deeper look at how user behavior should change your KPIs, see the analysis in AI Search User Behavior 2025.

How engines differ (in brief)

| Engine | How attribution appears | Freshness behavior | Controls & eligibility | Official guidance |
|---|---|---|---|---|
| Google AI Overviews / AI Mode | Supporting links beneath the summary; not all passages link | Varies; draws from indexed content; appears more on informational, multi-word queries | Standard Search eligibility; snippet directives can limit/opt out | See Google’s AI features and your website (2025) |
| ChatGPT Search | Explicit source cards/links; “Sources” button | Incorporates recent web sources; partnerships influence some news surfacing | Ensure crawlable, authoritative pages; partnerships affect certain categories | See OpenAI’s Introducing ChatGPT Search (2024) |
| Perplexity | Numbered citations linking to originals | Real-time retrieval and synthesis | Accessible content; benefits from scannable structure; deep links help | See Perplexity search quickstart docs |

Engine‑specific tactics that work in 2025

Google AI Overviews / AI Mode

Eligibility mirrors standard Search: helpful, reliable, people‑first content with solid page experience. Google’s documentation clarifies there are no extra technical requirements for AI features beyond Search eligibility, and you may use snippet controls to manage exposure.

Structure content in Q&A form for queries that are likely to trigger summaries—informational and multi‑step questions are common. Keep the answer block tight (1–3 sentences) before expanding.

Use structured data where it matches the visible content (FAQPage, HowTo, Article). Treat schema as supportive, not as a switch.

Maintain author details, citations, and update notes to reinforce E‑E‑A‑T signals engines can parse.
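As an illustration of schema that mirrors visible content, the sketch below builds Article and FAQPage JSON-LD from the same strings a page would display. It uses standard schema.org types and properties; the function names and all sample values (headline, author, organization, date) are placeholders, and nothing here implies that markup guarantees inclusion in AI features.

```python
import json


def article_jsonld(headline, author_name, org_name, date_modified, faqs):
    """Build Article and FAQPage JSON-LD from content that is visible on the page."""
    article = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": org_name},
        "dateModified": date_modified,
    }
    faq_page = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return [article, faq_page]


def to_script_tag(data):
    """Serialize one JSON-LD object into the script tag placed in the page HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so text in the data cannot close the script element early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values; in practice these come from the rendered page content.
blocks = article_jsonld(
    "What is answer engine optimization?",
    "Jane Doe",
    "Example Co",
    "2025-01-15",
    [("What is AEO?", "AEO is the practice of earning inclusion in AI answers.")],
)
for block in blocks:
    print(to_script_tag(block))
```

Generating the markup from the same source as the visible copy is one way to keep the two from drifting apart after updates.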

Useful background: Google’s current guidance is summarized in AI features and your website (Search Central, 2025).

ChatGPT Search

Expect explicit attribution. Optimize for passages that read well in isolation. Use descriptive subheads that mirror user intents (“What is…,” “How to…,” “Pros and cons,” etc.).

In news categories, OpenAI’s publisher partnerships (e.g., The Guardian, The Washington Post, Financial Times) can influence what’s surfaced, but non‑partners can still earn citations with high‑quality, timely, citable content. See OpenAI’s Introducing ChatGPT Search (2024) for how sources are presented.

Use high‑quality outbound citations to anchor claims. LLMs often reward verifiable, well‑sourced passages.

Keep crawlability open; avoid heavy interstitials or dynamic regions that hide your best answer blocks from bots.

Perplexity

Perplexity shows numbered citations and performs real‑time retrieval. Write modular sections with IDs on H2/H3 so deep links can point precisely to the relevant passage.

Add concise tables and lists where appropriate. These give Perplexity neat objects to cite.

Maintain topical clusters so the engine can frame you as an authority across related questions, not a one‑off answer.

Ensure your content is reachable by real‑time crawlers and that your canonicalization doesn’t fragment authority.
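Heading IDs are straightforward to generate at build time. The following is a minimal sketch, assuming headings are plain `<h2>`/`<h3>` tags without existing attributes; a regex is used only to keep the example short, and a real pipeline would more likely use an HTML parser or the CMS's own heading-anchor feature. The function names are hypothetical.

```python
import re


def slugify(text):
    """Lowercase, replace runs of non-alphanumerics with hyphens, trim hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


# Matches only attribute-free h2/h3 elements (a deliberate simplification).
HEADING = re.compile(r"<(h[23])>(.*?)</\1>", re.IGNORECASE | re.DOTALL)


def add_heading_ids(html):
    """Add an id to each attribute-free <h2>/<h3>, numbering repeated slugs."""
    seen = {}

    def replace(match):
        tag, inner = match.group(1), match.group(2)
        plain = re.sub(r"<[^>]+>", "", inner)  # drop inline tags before slugging
        base = slugify(plain) or "section"
        seen[base] = seen.get(base, 0) + 1
        slug = base if seen[base] == 1 else f"{base}-{seen[base]}"
        return f'<{tag} id="{slug}">{inner}</{tag}>'

    return HEADING.sub(replace, html)


page = "<h2>What is AEO?</h2><p>...</p><h3>How to measure it</h3><h2>What is AEO?</h2>"
print(add_heading_ids(page))
```

With IDs in place, a URL such as `/guide#how-to-measure-it` points directly at the passage you want cited.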

For a field guide to diagnosing low mentions or missing citations in ChatGPT—and how to fix them—see How to diagnose and fix low brand mentions in ChatGPT.

Measuring what matters (and where Geneo fits)

Disclosure: Geneo is our product.

AEO succeeds when you can verify presence and improve it deliberately. That means logging evidence at the prompt level across engines, then iterating content based on what the logs show. A practical loop looks like this:

Build a prompt library organized by themes and intents (commercial, informational, how‑to). For each engine, standardize phrasing so comparisons are apples‑to‑apples.

Run prompts on a cadence (e.g., weekly). Capture screenshots or structured evidence: source links shown, brand mentions, sentiment/tone, and whether the landing page you want is cited.

Track KPIs beyond clicks: presence rate (how often you appear), citation count, correct attribution rate, and share of voice vs. key competitors. Over time, correlate these with assisted conversions.

Update pages when evidence shows gaps: add answer‑first sections, clarify claims with sources, improve schema alignment, or refresh stale data.

You can do this in spreadsheets and shared drives, but it’s easy to lose the thread. Platforms like Geneo centralize multi‑engine evidence logs, mention/link visibility, and share‑of‑voice reporting, with white‑label outputs for agencies. For an overview of what such tracking looks like in practice, see Geneo Review 2025 and the article on LLMO metrics for measuring answer quality.

A realistic 30–60–90‑day operating plan

In the first 30 days, pick 10–20 high‑intent queries across your core themes and build answer‑first pages or sections that target them. Add author bios, cite external authorities for key claims, and implement matching structured data where it fits. Establish your prompt library and run baseline audits in ChatGPT Search, Perplexity, and Google AI Overviews/AI Mode. You’ll likely discover jarring mismatches—missing citations, outdated claims, or vague passages that don’t extract well.

By day 60, you should have a weekly audit rhythm and an evidence log that shows presence and attribution trends. Use the data to prioritize updates: tighten summary blocks, add supporting data points, and fix internal linking so your topical clusters are clear. For Google, review snippet controls and ensure nothing unintentionally suppresses exposure. For Perplexity, add IDs to headings and refine tables for clarity. For ChatGPT, strengthen sections that map to common intent frames.
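For the snippet-control review, a small audit script can flag directives that limit exposure. This is a sketch under stated assumptions: it inspects only the page HTML (robots or googlebot meta tags and `data-nosnippet` attributes), it does not check the `X-Robots-Tag` HTTP header, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class SnippetAudit(HTMLParser):
    """Collect robots meta directives and count elements marked data-nosnippet."""

    def __init__(self):
        super().__init__()
        self.directives = []
        self.data_nosnippet_count = 0

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        name = (attr_map.get("name") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot"):
            content = attr_map.get("content") or ""
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]
        if "data-nosnippet" in attr_map:
            self.data_nosnippet_count += 1


def audit_snippet_controls(html):
    """Return the directives found that restrict indexing or snippet display."""
    parser = SnippetAudit()
    parser.feed(html)
    findings = []
    for directive in parser.directives:
        if directive in ("noindex", "nosnippet"):
            findings.append(directive)
        elif directive.startswith("max-snippet:"):
            limit = directive.split(":", 1)[1].strip()
            if limit != "-1":  # -1 means no snippet length limit
                findings.append(f"max-snippet:{limit}")
    if parser.data_nosnippet_count:
        findings.append(f"data-nosnippet on {parser.data_nosnippet_count} element(s)")
    return findings


page = (
    '<head><meta name="robots" content="max-snippet:50, noindex"></head>'
    '<body><div data-nosnippet>Internal note</div><p>Direct answer.</p></body>'
)
print(audit_snippet_controls(page))
```

An empty list means none of these controls were found in the markup; any finding is a prompt to confirm the restriction is intentional.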

By day 90, begin comparative reporting against 2–3 competitors on your highest‑value intents. Tie presence and citation accuracy to down‑funnel outcomes (newsletter signups, demos, lead quality). Where you see persistent absence, run structured tests—add a FAQ segment, insert a concise how‑to step list, or publish a fresh explainer with stronger sourcing—and log the impact over two to four weeks.
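Logging the impact of a structured test can be as simple as comparing presence before and after the change date. The sketch below assumes one weekly run per prompt and engine, recorded as a date plus a yes/no for whether the brand appeared; the function name and the sample data are illustrative, and with so few runs the result is a directional signal rather than a statistically reliable one.

```python
from datetime import date


def presence_delta(runs, change_date):
    """Change in presence rate after a content change.

    runs: list of (run_date, brand_present) tuples for one prompt on one engine.
    Returns None when there is no data on one side of the change date.
    """
    before = [present for day, present in runs if day < change_date]
    after = [present for day, present in runs if day >= change_date]
    if not before or not after:
        return None
    return sum(after) / len(after) - sum(before) / len(before)


# Illustrative weekly runs: four before the change, four after.
runs = [
    (date(2025, 3, 3), False), (date(2025, 3, 10), False),
    (date(2025, 3, 17), True), (date(2025, 3, 24), False),
    (date(2025, 3, 31), True), (date(2025, 4, 7), False),
    (date(2025, 4, 14), True), (date(2025, 4, 21), True),
]
print(presence_delta(runs, change_date=date(2025, 3, 31)))  # 0.75 - 0.25 = 0.5
```

Changing one thing per test and keeping prompts fixed makes it easier to attribute a shift to the edit rather than to engine variability.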

References and why they matter

According to Google’s documentation, there are no extra technical requirements to appear in AI features beyond Search eligibility; snippet directives can limit exposure. See AI features and your website (2025).

OpenAI’s Introducing ChatGPT Search (2024) details explicit source attribution and the overall design of results.

Perplexity’s search quickstart outlines real‑time retrieval and citation behaviors.

Stanford HAI’s AI Index 2025 documents the surge in organizational AI usage (78% of organizations reported AI adoption in 2024; 71% used generative AI in at least one function).

SparkToro’s 2024 zero‑click search study and Pew Research Center’s 2025 study on AI summaries and clicks quantify the behavioral shift that makes AEO/GEO KPIs indispensable.

The bottom line

AEO/GEO is how you win visibility inside answers—not just around them. Design extractable, verifiable content; match schema to what’s visible; keep your site technically clean; maintain a steady freshness cadence; and measure presence, attribution, and sentiment across engines. Do this consistently, and you’ll earn more citations and more accurate representation over time.

If you want a purpose‑built workflow for tracking and improving multi‑engine AI visibility—and to keep your evidence organized—apply for a free Geneo trial to pressure‑test your AEO program on real queries.