AI Search Optimization Techniques for 2025: Ultimate Step-by-Step Guide

Master 2025 AI search optimization with this ultimate, evidence-based step-by-step guide. Learn measurable AEO/GEO strategies, real KPIs, and migration tactics now!

In 2025, answer engines compress the click path. When Google’s AI Overviews or AI Mode appears, classic rank-to-CTR relationships wobble: a large-scale Ahrefs study found that the #1 organic result’s CTR is about 34.5% lower on informational queries when an AI Overview is present. See the evidence and methodology in the Ahrefs write-up, “AI Overviews reduce clicks” (2025). According to a synthesis on Search Engine Land (2024–2025), multiple cohorts report double‑digit CTR declines when AI Overviews render. The takeaway isn’t panic; it’s that the old SEO playbook no longer covers the whole field.

What do you do when your best pages answer the question but the engine answers it for you? That’s the migration problem this article solves with a step-by-step operational playbook.

What changed—and what to measure now

Traditional SEO optimized for ranking and snippets, while AI search experiences optimize for answerability and citation. In practice, your content must be easy to quote in a synthesized answer, your brand needs visibility in the answer panel itself (not only the blue links below it), and your reporting must capture citations, mentions, and downstream engagement—not just position and CTR.

If you’re new to this lens, start with the terminology: what “AI visibility” actually entails and how it differs from classic SERP share. For a practical primer that treats AI visibility as brand exposure inside AI answers, see Geneo’s resource: AI visibility definition and brand exposure in AI search.

The 7-step operational playbook for 2025

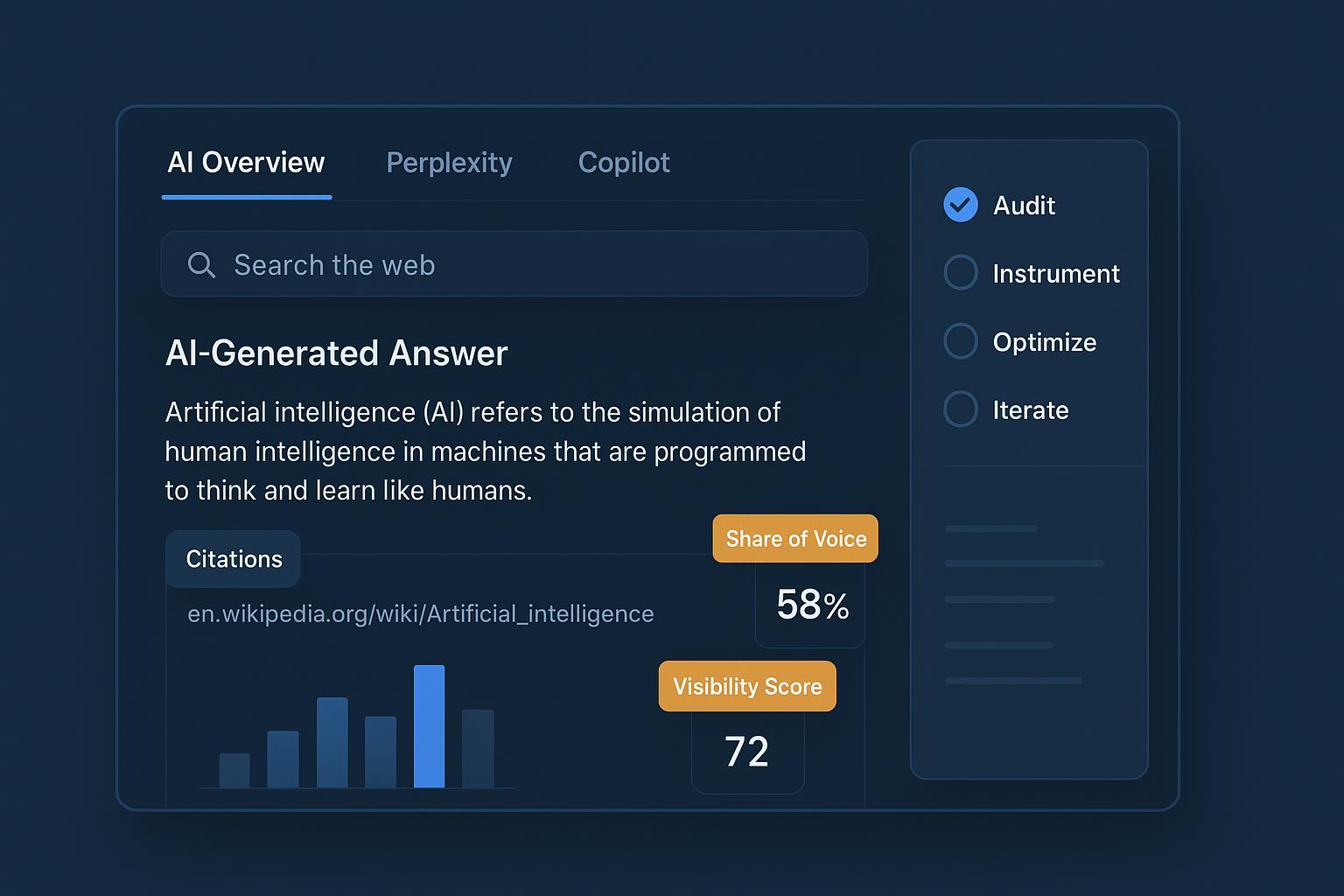

Step 1 — Audit your AI visibility baseline

You can’t fix what you can’t see. Build a reproducible baseline across Google’s AI Overviews/AI Mode, Perplexity, Bing Copilot Search, and ChatGPT with browsing/search enabled. Start by defining a seed set of high‑intent questions that mixes informational and commercial queries for your top topics. For each engine, check whether your brand is cited, mentioned, or omitted; log the URLs and precise passages used, and capture the competitors cited alongside you. Repeat weekly for four weeks so that run-to-run variation in the generated answers averages out.
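
One way to keep the baseline reproducible is to log every check in the same shape. The sketch below is a minimal, tool-agnostic example: the `Observation` fields, the status vocabulary, and the engine labels are illustrative assumptions, not a standard format.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields

# Assumed vocabulary mirroring the cited / mentioned / omitted check.
STATUSES = {"cited", "mentioned", "omitted"}


@dataclass
class Observation:
    run_date: str          # ISO date of the weekly run, e.g. "2025-01-06"
    engine: str            # your own label, e.g. "perplexity"
    question: str          # the seed question that was asked
    status: str            # one of STATUSES
    cited_url: str = ""    # your URL, if the engine linked to it
    passage: str = ""      # the sentence(s) the engine lifted
    competitors: str = ""  # semicolon-separated domains cited alongside you

    def __post_init__(self):
        # Reject typos early so later aggregation stays clean.
        if self.status not in STATUSES:
            raise ValueError(f"unknown status: {self.status!r}")


def write_log(observations, handle):
    """Write observations as CSV with a header row."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(Observation)])
    writer.writeheader()
    for obs in observations:
        writer.writerow(asdict(obs))


# Usage: write one observation to an in-memory buffer (swap in a real file).
buffer = io.StringIO()
write_log(
    [Observation("2025-01-06", "perplexity", "What is AI visibility?", "cited",
                 cited_url="https://example.com/ai-visibility",
                 competitors="rival.com")],
    buffer,
)
print(buffer.getvalue())
```

Appending to the same file each week gives the four-week history a consistent shape, which makes later scoring straightforward.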

For a ready-made approach, see this audit workflow: How to perform an AI visibility audit for your brand.

Step 2 — Build a KPI scorecard you can actually report

Classic rank-based KPIs don’t map cleanly to AI answers. Instrument a minimal but robust scorecard you can trend over time.

| KPI | What it means | Why it matters |
|---|---|---|
| Citation Frequency (per engine) | How often your pages are cited in AI answers | Direct evidence your content powers answers |
| Share of Citations (topic-set) | Your citations divided by total citations for the same questions | Competitive share-of-voice inside answers |
| Attribution Rate | Percent of AI answers that explicitly link to you | Visibility and click eligibility inside the panel |
| Answer Coverage | Number of distinct questions your content helps answer | Topic footprint inside AI surfaces |
| Downstream Engagement | Visits/conversions from AI-linked sessions (approximated) | Business impact beyond visibility |
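
The first four KPIs can be computed from a simple log of sampled answers. The sketch below assumes a hypothetical record shape (one dictionary per engine and question, with the list of domains the answer linked to) and treats every linked domain as one citation; adjust those assumptions to match how you actually log results. Downstream Engagement is left out because it comes from analytics, not from the answer log.

```python
from collections import Counter


def scorecard(answers, our_domain):
    """Compute citation KPIs from sampled AI answers.

    answers: list of dicts with keys "engine", "question", "cited_domains"
             (assumed shape; "cited_domains" may repeat a domain).
    """
    per_engine = Counter()
    ours = total = attributed = 0
    covered_questions = set()

    for answer in answers:
        hits = answer["cited_domains"].count(our_domain)
        total += len(answer["cited_domains"])
        ours += hits
        per_engine[answer["engine"]] += hits
        if hits:
            attributed += 1
            covered_questions.add(answer["question"])

    return {
        # Citations of our domain, per engine.
        "citation_frequency": dict(per_engine),
        # Our citations divided by all citations for the same question set.
        "share_of_citations": ours / total if total else 0.0,
        # Fraction of answers that link to us at least once.
        "attribution_rate": attributed / len(answers) if answers else 0.0,
        # Distinct questions where we were cited on any engine.
        "answer_coverage": len(covered_questions),
    }


answers = [
    {"engine": "perplexity", "question": "q1", "cited_domains": ["example.com", "rival.com"]},
    {"engine": "perplexity", "question": "q2", "cited_domains": ["rival.com"]},
    {"engine": "copilot", "question": "q1", "cited_domains": ["example.com", "example.com", "other.org"]},
    {"engine": "copilot", "question": "q2", "cited_domains": []},
]
card = scorecard(answers, "example.com")
print(card)
# share_of_citations is 0.5 (3 of 6 citations); attribution_rate is 0.5 (2 of 4 answers)
```

Document whichever counting rules you pick (for example, whether repeated links in one answer count once or several times) so month-over-month trends stay comparable.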

Want a deeper, step-by-step focus on earning citations? Consider this neutral, tactical walkthrough: How to optimize content for AI citations.

Step 3 — Instrument GA4/GTM for AI‑influenced traffic (with known limits)

You won’t get a clean “AI Overview” referrer in GA4 today. But you can approximate and trend. Segment known referrers such as chatgpt.com (formerly chat.openai.com), perplexity.ai, copilot.microsoft.com, and bing.com/copilot, then create a custom channel called “AI / LLM” using referrer and UTM rules. Consider server‑side GTM to persist an ai_source dimension on links you control (for example, from your own chat tools or demo bots). Finally, triangulate with Search Console impression deltas and server logs.
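
The same referrer logic can be prototyped outside GA4, for example against server logs, before you commit to channel rules. This is a rough sketch: the host list and the `AI_UTM_SOURCES` set are assumptions to verify against your own referral data, and `classify_referrer` is a hypothetical helper, not part of any analytics SDK.

```python
from urllib.parse import urlparse

CHANNEL = "AI / LLM"

# (host, required path prefix). "" means any path on that host counts.
# Assumed list; confirm each entry in your own referral report.
AI_REFERRER_RULES = [
    ("chat.openai.com", ""),
    ("chatgpt.com", ""),
    ("perplexity.ai", ""),
    ("copilot.microsoft.com", ""),
    # Browsers often send only the origin cross-site, so this path rule may rarely match.
    ("bing.com", "/copilot"),
]

# Hypothetical UTM values; check what actually shows up in your landing URLs.
AI_UTM_SOURCES = {"chatgpt.com"}


def classify_referrer(referrer: str, utm_source: str = "") -> str:
    """Return "AI / LLM" when the referrer or utm_source matches a rule, else "Other"."""
    if utm_source.strip().lower() in AI_UTM_SOURCES:
        return CHANNEL

    # Accept bare hosts ("perplexity.ai/search") as well as full URLs.
    parsed = urlparse(referrer if "://" in referrer else "//" + referrer)
    host = (parsed.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]

    for rule_host, path_prefix in AI_REFERRER_RULES:
        # Match the host itself or any subdomain, never a lookalike suffix.
        host_ok = host == rule_host or host.endswith("." + rule_host)
        if host_ok and parsed.path.startswith(path_prefix):
            return CHANNEL
    return "Other"


for ref in ["https://www.perplexity.ai/search?q=x", "https://www.bing.com/search?q=x"]:
    print(ref, "->", classify_referrer(ref))
```

Matching on exact hosts and subdomains rather than substrings avoids counting lookalike domains, which keeps the channel conservative.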

A practical walkthrough with caveats is outlined in Search Engine Land’s guide: see the step‑by‑step on segmenting LLM traffic in GA4 (2024).

Step 4 — Restructure content and ship schema the engines can quote

Answer engines “lift and link” from clear, extractable blocks. Think in chunks: short, precise answers near the top; structured sections; and valid JSON‑LD for eligible formats.

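Two useful JSON‑LD starters follow. Validate the syntax with the Schema Markup Validator (validator.schema.org) and make sure the marked-up text matches the visible on‑page content. Google restricted FAQ rich results and retired HowTo rich results in 2023, so treat this markup as machine-readable structure, not as a route to rich results: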

{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [
    {
      "@type": "Question",
      "name": "What is AI visibility?",
      "acceptedAnswer": {
        "@type": "Answer",
        "text": "AI visibility is how often and how prominently your brand is cited or referenced inside AI-generated answers across search and chat surfaces."
      }
    },
    {
      "@type": "Question",
      "name": "Does JSON-LD help with AI citations?",
      "acceptedAnswer": {
        "@type": "Answer",
        "text": "JSON-LD doesn’t guarantee citations, but clear structure improves machine understanding and eligibility for rich results."
      }
    }
  ]
}

{
  "@context": "https://schema.org",
  "@type": "HowTo",
  "name": "Set up an AI / LLM channel in GA4",
  "description": "Create a custom channel to approximate AI-influenced traffic.",
  "step": [
    {"@type": "HowToStep", "text": "Open Admin → Data display → Channel groups."},
    {"@type": "HowToStep", "text": "Add rules for referrers (perplexity.ai, chat.openai.com, bing.com/copilot)."},
    {"@type": "HowToStep", "text": "Publish and test with Explorations to validate sessions."}
  ]
}
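
If you maintain many FAQ blocks, generating the markup from the same question-and-answer pairs that render on the page helps keep the two in sync. The sketch below is one possible approach using only the standard library; `faq_jsonld` and `missing_from_page` are hypothetical helper names, and the verbatim-match check is a deliberately strict assumption.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def missing_from_page(pairs, page_text):
    """Return questions whose answer text does not appear verbatim on the page.

    Whitespace is collapsed on both sides before comparing.
    """
    normalized_page = " ".join(page_text.split())
    return [q for q, a in pairs if " ".join(a.split()) not in normalized_page]


pairs = [
    ("What is AI visibility?",
     "AI visibility is how often and how prominently your brand is cited "
     "or referenced inside AI-generated answers."),
]
print(json.dumps(faq_jsonld(pairs), indent=2, ensure_ascii=False))
print(missing_from_page(pairs, "Some unrelated page copy."))
```

Running the mismatch check in a build step is one way to catch markup that has drifted from the visible copy after an edit.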

Google’s official 2025 guidance underscores that you can’t “opt into” AI Mode; eligibility stems from quality, clarity, and helpfulness. See Google’s ‘Top ways to succeed in AI search experiences’ (May 21, 2025) for the principles and control hints.

Step 5 — Cluster entities and reinforce E‑E‑A‑T

Answer engines ground on entities and relationships. Map your topics to named entities (people, brands, products, places), then build clusters around them. Create pillar pages that define the entity and its relationships in plain language, and link to supporting subtopics that clarify attributes, alternatives, and how‑tos. Add bylines and affiliations; cite primary sources for key claims. Update cadence matters—recency bias shows up in several analyses of AI Overviews. Ask yourself: if a model clipped 60 words from your page, would it still be accurate, current, and attributable to you?

Step 6 — Platform‑specific moves that compound

Engines behave differently; tailor your tactics. Perplexity uses inline, claim‑level citations; make it easy to lift clean sentences and data points. Independent researchers have noted distinct citation patterns and a relatively broad source mix; see the 2025 pattern summary by Profound in their platform citation patterns article. Bing Copilot Search often surfaces links tied to the exact grounding passage, so clear headings, strong factual anchors, and crawlability help. ChatGPT with browsing/search can be nudged toward source attribution when your content provides concise, citable summaries and up‑to‑date references.

Step 7 — Monitor, benchmark, and iterate

Treat this as a continuous program, not a one‑off project. Track your KPI scorecard monthly by engine and topic cluster, then compare your share of citations to two or three direct competitors. Run structured experiments—change answer blocks, update facts, adjust headings—and re‑check citation outcomes after each change. Report estimates clearly and document your rules, limitations, and known gaps.

Practical workflow example (neutral, tool‑agnostic pattern)

Disclosure: Geneo is our product.

Here’s a replicable workflow you can implement with a dashboard or a disciplined spreadsheet:

Scan & sample

Build a list of 50–100 questions across your top five topics. For each engine (Google AI Overviews/Mode, Perplexity, Copilot, ChatGPT with browsing), record whether you’re cited, merely mentioned, or absent. Log competitor citations too.

Analyze & score

Calculate Citation Frequency and Share of Citations by topic. Note which specific passages the engines lift (quote the sentences). Flag content gaps and out‑of‑date claims.

Optimize & ship

Rewrite answer blocks for clarity and brevity; move them higher on the page. Add or fix JSON‑LD (FAQPage/HowTo where appropriate). Update stats with the latest year and sources.

Re‑check & iterate

Re‑run your question set. Look for movement in citations and attribution rate. Keep a changelog so you can correlate edits with outcomes. A multi‑engine monitor—whether a commercial tool or your own tracker—can help reduce missed changes.

This sequence mirrors what many teams do in practice: a monitor → analyze → optimize → validate loop that keeps improving AI answer eligibility.
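
To correlate changelog entries with outcomes, you can compare how often a page was cited before and after a given edit. The sketch below assumes a hypothetical check record (`date`, `page`, `cited`) and a single change date per page. It shows correlation only, and small samples will be noisy.

```python
from datetime import date


def citation_rate(checks):
    """Fraction of checks in which the page was cited (0.0 for no checks)."""
    if not checks:
        return 0.0
    return sum(1 for c in checks if c["cited"]) / len(checks)


def before_after(checks, page, change_date):
    """Return (rate before the change, rate on or after the change) for one page."""
    relevant = [c for c in checks if c["page"] == page]
    before = [c for c in relevant if c["date"] < change_date]
    after = [c for c in relevant if c["date"] >= change_date]
    return citation_rate(before), citation_rate(after)


# Illustrative data: weekly checks around an edit shipped on 2025-02-03.
checks = [
    {"date": date(2025, 1, 6), "page": "/ai-visibility", "cited": False},
    {"date": date(2025, 1, 13), "page": "/ai-visibility", "cited": True},
    {"date": date(2025, 1, 20), "page": "/ai-visibility", "cited": False},
    {"date": date(2025, 1, 27), "page": "/ai-visibility", "cited": False},
    {"date": date(2025, 2, 3), "page": "/ai-visibility", "cited": True},
    {"date": date(2025, 2, 10), "page": "/ai-visibility", "cited": True},
    {"date": date(2025, 2, 17), "page": "/ai-visibility", "cited": False},
    {"date": date(2025, 2, 24), "page": "/ai-visibility", "cited": True},
]
print(before_after(checks, "/ai-visibility", date(2025, 2, 3)))  # (0.25, 0.75)
```

Keeping an unchanged page as a comparison helps separate the effect of your edit from engine-wide shifts during the same weeks.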

Case lessons from 2024–2025: why teams win (or don’t)

Evidence from multiple sources paints a consistent picture: AI answers depress classic CTR, but you can still win visibility and qualified visits if you earn the citations.

Large‑scale analysis from Ahrefs indicates a roughly 34.5% CTR drop for position #1 on informational queries when AI Overviews render; see the 2025 edition of Ahrefs’ “AI Overviews reduce clicks” analysis for methods and caveats.

Industry rollups on Search Engine Land’s coverage compiled results from agencies and tool vendors showing significant CTR reductions on AIO queries.

Google’s own guidance emphasizes there is no direct “opt-in” to AI Mode; focus on helpful content, clarity, and eligibility signals rather than gaming a switch, per Google’s AI search experiences post (May 21, 2025).

Common success patterns

Short, precise answer blocks near the top of the page (40–60 words), with a supporting paragraph that can be lifted intact.

Fresh citations and updated stats (year in anchor text where material). Engines show recency bias.

Entity‑centered clusters, making it easy to understand who/what/where you’re talking about.

Valid, visible structured data that matches the on‑page content.
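
The 40–60 word target for answer blocks is easy to check mechanically. This is a small sketch that counts whitespace-separated words per paragraph. The blank-line paragraph split and the default bounds are assumptions, and word count says nothing about whether the answer is accurate or current.

```python
import re


def check_answer_block(text, low=40, high=60):
    """Return (word_count, verdict) for one candidate answer block."""
    count = len(text.split())
    if count < low:
        return count, "too short"
    if count > high:
        return count, "too long"
    return count, "ok"


def audit_paragraphs(page_text, low=40, high=60):
    """Check every blank-line-separated paragraph on a page."""
    paragraphs = [p for p in re.split(r"\n\s*\n", page_text.strip()) if p.strip()]
    return [check_answer_block(p, low, high) for p in paragraphs]


page = "AI visibility explained\n\n" + " ".join(["word"] * 45)
print(audit_paragraphs(page))  # [(3, 'too short'), (45, 'ok')]
```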

Failure patterns

Burying the answer under fluff or gating critical details.

Out‑of‑date numbers and missing primary sources.

Over‑fitting for one engine and ignoring the others.

Reporting without acknowledging GA4/attribution limits, which erodes trust.

What to do this quarter

Week 1: Run a baseline audit for 50–100 questions across engines. Create your KPI scorecard and GA4 “AI / LLM” channel.

Week 2: Rewrite the top 10 pages with answer‑first blocks; ship FAQPage/HowTo JSON‑LD where eligible.

Week 3: Update stale facts across 20 pages; add citations to original sources. Map entity clusters and internal links.

Week 4: Re‑check citations, document movement, and plan the next iteration.

If you operate as an agency and need client‑ready reporting, consider white‑label outputs so stakeholders can track AI visibility alongside classic SEO. Here’s a neutral overview of the approach: white‑label AI visibility reporting for agencies.

References and further reading

Google Search Central: Top ways to succeed in AI search experiences (May 21, 2025)

Ahrefs Research: AI Overviews reduce clicks (2025 update)

Search Engine Land: Segmenting LLM traffic in GA4 (2024 guide)

Profound: AI platform citation patterns (2025)

Google Developers: AI features and your website (documentation)