Tracking Keyword Rankings on Perplexity: Executive Best Practices

Discover why legacy keyword tracking fails on Perplexity and how to measure AI visibility, citation share, and executive KPIs for competitive leadership.

If you still treat Perplexity like Google, your measurement will mislead your board. Perplexity is an answer engine, not a ranked list. It selects sources, synthesizes a response, and shows inline citations. In this world, “position 3” is irrelevant; what matters is whether your brand is cited—how often, in which contexts, and versus whom.

Perplexity’s Answer Model Isn’t Built on Ranks

Perplexity performs real-time searches, reasons across multiple sources, and displays clickable citations inside the answer. Its team underscores transparency: every response includes source links users can verify. See the platform’s explanation in “How does Perplexity work?” (Help Center) and the newer Deep Research mode, which “performs dozens of searches, reads hundreds of sources, and reasons through the material,” as described in “Introducing Perplexity Deep Research” (2024–2025).

What does that mean for measurement? Traditional SERP rank trackers tell you your page sits at #5. Perplexity instead gives the user a synthesized answer that cites a handful of sources. So the KPI shifts from "rank" to "selection and attribution": was your content chosen as a source, and was your brand credited?

Why Legacy Keyword Ranking Fails on Perplexity

No ordered list to track. Perplexity doesn’t present a 1–10 list; it presents an answer with citations.

Source selection is dynamic. The engine can change which sources it pulls depending on query intent, freshness, and context.

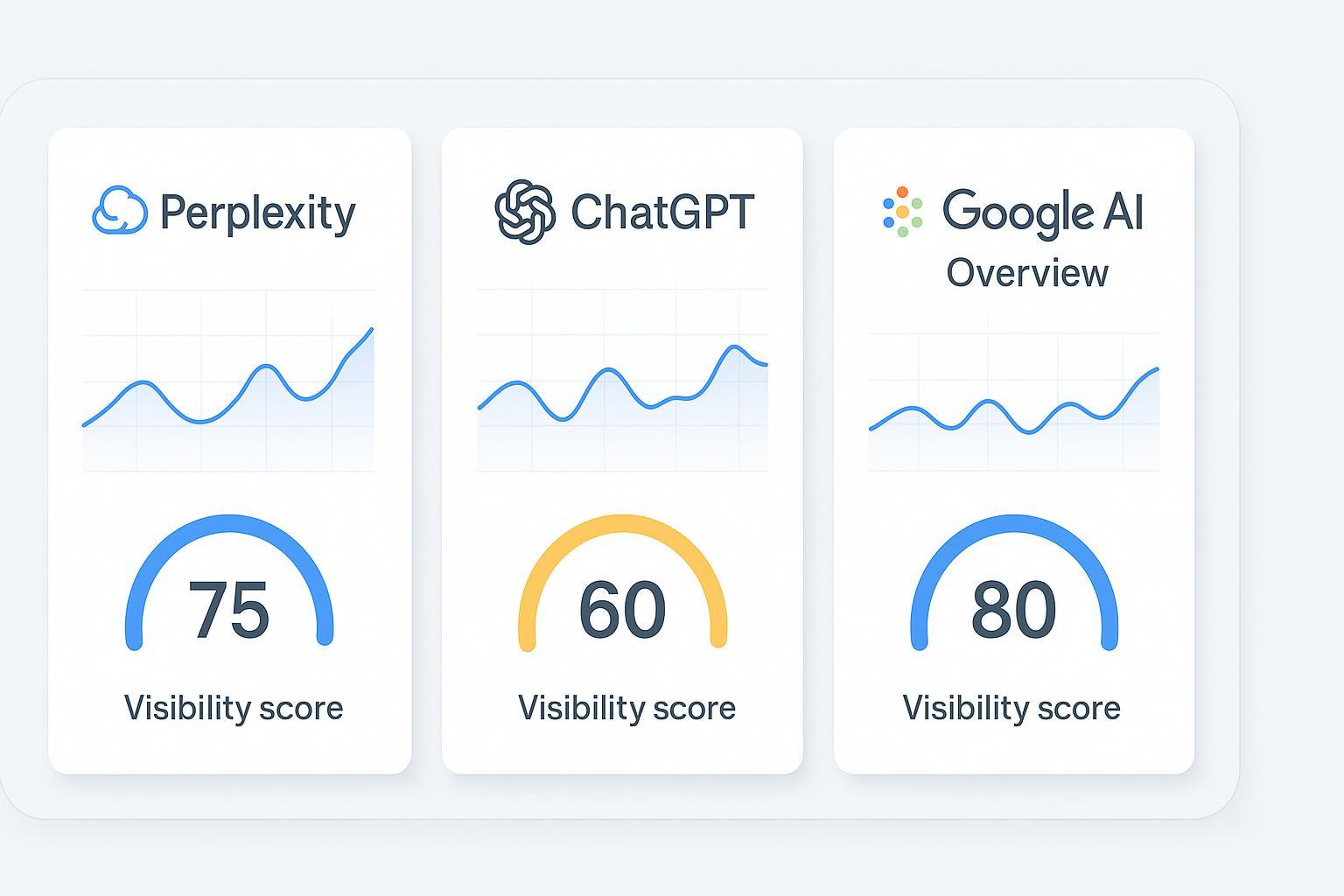

Cross-engine reality. The same query cluster can yield different citation behavior on Perplexity vs. ChatGPT vs. Google AI Overviews.

Independent comparisons show Perplexity frequently cites diverse domains—including sources beyond traditional top SERPs—supporting the need to measure citations rather than rank positions, as noted in SE Ranking’s platform comparison research (2024–2025).

Measure What Matters: Executive KPIs for Answer Engines

Start by reframing the KPIs to match Perplexity’s behavior and your board’s need for clarity.

Share of Answer: The proportion of tracked queries (prompt clusters and semantic variants) where your brand is mentioned or cited in the AI answer. Useful for platform presence and trend monitoring. For definitions and context, see Geneo’s explainer on AI visibility and brand exposure in AI search.

Citation Rate / Link Attribution: The percentage of answers that link to your own domain, compared with answers that cite only third-party sites. This indicates referral opportunity and perceived authority.

Brand Visibility Score (Aggregate): A directional score combining mentions, sentiment, and relative prominence in answers. Because individual AI answers fluctuate, it is meant to be read as a trend rather than a precise measurement. Learn how GEO differs from traditional SEO in this comparison for marketers.

Competitive Displacement: Who is being cited instead of you—and how that changes month to month.
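The KPI definitions above reduce to simple ratios once each answer is logged. The sketch below is a minimal illustration, assuming a hypothetical log where each record stores the prompt, its cluster, whether the brand was named, the cited domains, and analyst-assigned sentiment (-1 to 1) and prominence (0 to 1) values. The domains `ourbrand.com` and `rivalcrm.com`, the record schema, and the Brand Visibility Score weights are placeholders, not a standard formula or any vendor's method.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One logged AI answer for a tracked prompt (hypothetical schema)."""
    prompt: str
    cluster: str
    brand_mentioned: bool                                   # brand named in the answer text
    cited_domains: list[str] = field(default_factory=list)  # domains linked as citations
    sentiment: float = 0.0    # analyst-assigned, -1 (negative) to 1 (positive)
    prominence: float = 0.0   # analyst-assigned, 0 (passing mention) to 1 (lead source)


def _visible(record: AnswerRecord, our_domain: str) -> bool:
    # The brand "appears" if it is named or its domain is cited.
    return record.brand_mentioned or our_domain in record.cited_domains


def share_of_answer(records: list[AnswerRecord], our_domain: str) -> float:
    """Fraction of tracked answers that mention or cite the brand."""
    if not records:
        return 0.0
    return sum(_visible(r, our_domain) for r in records) / len(records)


def citation_rate(records: list[AnswerRecord], our_domain: str) -> float:
    """Fraction of tracked answers that link to the brand's own domain."""
    if not records:
        return 0.0
    return sum(our_domain in r.cited_domains for r in records) / len(records)


def competitive_displacement(records: list[AnswerRecord], our_domain: str,
                             competitors: set[str]) -> Counter:
    """Count competitor citations in answers that do not cite our domain."""
    displaced = Counter()
    for r in records:
        if our_domain in r.cited_domains:
            continue
        for domain in set(r.cited_domains) & competitors:
            displaced[domain] += 1
    return displaced


# Placeholder weights; a real score needs weights agreed with stakeholders.
WEIGHTS = {"mention": 0.5, "sentiment": 0.25, "prominence": 0.25}


def brand_visibility_score(records: list[AnswerRecord], our_domain: str,
                           weights: dict[str, float] = WEIGHTS) -> float:
    """Directional 0-100 score: weighted mix of mention rate, sentiment, prominence."""
    if not records:
        return 0.0
    visible = [r for r in records if _visible(r, our_domain)]
    mention = len(visible) / len(records)
    if visible:
        # Rescale sentiment from [-1, 1] to [0, 1] so every component shares a range.
        sentiment = sum((r.sentiment + 1) / 2 for r in visible) / len(visible)
        prominence = sum(r.prominence for r in visible) / len(visible)
    else:
        sentiment = prominence = 0.0
    score = (weights["mention"] * mention
             + weights["sentiment"] * sentiment
             + weights["prominence"] * prominence)
    return round(100 * score, 1)


# Illustrative sample log (invented prompts and domains).
sample = [
    AnswerRecord("best crm for startups", "crm-selection", True,
                 ["ourbrand.com", "g2.com"], sentiment=0.6, prominence=1.0),
    AnswerRecord("top crm tools", "crm-selection", True,
                 ["rivalcrm.com"], sentiment=0.0, prominence=0.5),
    AnswerRecord("crm pricing comparison", "crm-pricing", False,
                 ["rivalcrm.com", "capterra.com"]),
    AnswerRecord("is ourbrand worth it", "crm-pricing", True,
                 ["ourbrand.com"], sentiment=0.2, prominence=1.0),
]

print(share_of_answer(sample, "ourbrand.com"))         # 0.75
print(citation_rate(sample, "ourbrand.com"))           # 0.5
print(competitive_displacement(sample, "ourbrand.com", {"rivalcrm.com"}))
print(brand_visibility_score(sample, "ourbrand.com"))  # 74.2
```

With this sample, Share of Answer (0.75) runs ahead of Citation Rate (0.5): the brand is named more often than it is linked, which is exactly the gap between presence and referral opportunity. The weights are the most subjective part of the sketch; changing them shifts the score's level, so compare scores only over time under the same weights.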

If you need a quick analogy, think of Perplexity as a panel moderator choosing quotes from the most relevant experts. Your aim isn't "seat 3"; it's "get quoted often, and in the right segments."

Cross‑Engine Reality: One Question, Three Behaviors

Execs shouldn’t evaluate Perplexity in isolation. Different engines have different sourcing tendencies and transparency levels. Studies show that platforms differ in how often they cite certain types of sources (e.g., Wikipedia, brand-managed sites, media outlets).

Ahrefs’ 2025 AI SEO statistics show notable differences in Wikipedia citation rates across engines, highlighting platform‑specific sourcing patterns; see their analysis of AI citation tendencies.

Yext’s 2025 research estimates that a large majority of AI citations come from brand‑managed sources, reinforcing the value of owned content and listings for visibility: “86% of AI citations come from brand‑managed sources” (2025).

What to monitor across engines

| KPI | Perplexity | ChatGPT | Google AI Overviews |
|---|---|---|---|
| Share of Answer | High variability by intent; citations are visible | Mentions may be implicit; citations vary by mode | Answer visibility depends on overview trigger; sources shown |
| Citation Rate | Inline links in most answers | Often limited unless browsing/tools enabled | Links to sources when an overview appears |
| Competitive Displacement | Clear via cited domains | Harder without explicit links | Clear when overview lists sources |
| Freshness Sensitivity | Strong (Deep Research emphasizes recent material) | Moderate | Moderate–high depending on query |

For a deeper primer on cross‑engine monitoring, see Geneo’s comparison guide for ChatGPT, Perplexity, Gemini, and Bing.

A Practical Workflow Your Team Can Run

This is a board‑to‑team loop you can adopt across quarters. It’s intentionally simple so it sticks.

Define prompt clusters. Map strategic topics to clusters and identify semantic variants people actually ask.

Track presence and attribution. Measure Share of Answer and Citation Rate (link attribution) across Perplexity, ChatGPT, and Google AI Overviews.

Benchmark competitors. Build monthly tables comparing platform presence and who’s cited instead of you. Note drift and volatility.

Investigate and act. Publish fresh, definitive answers; add data, tables, and original research; strengthen author credentials and E‑E‑A‑T indicators.

Report to the board. Show cross‑engine coverage, citation trends, key wins/losses, and a clear next‑quarter plan.
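Defining clusters, tracking presence, and benchmarking over time can be prototyped with very little code before committing to a tool. The sketch below assumes a hypothetical observation log of `(month, engine, prompt, brand_present)` tuples and a hand-maintained `CLUSTERS` mapping of topics to the phrasings people ask; the cluster names, engine labels, and the 0.2 drift threshold are illustrative placeholders. It rolls observations up to Share of Answer per cluster, engine, and month, then flags month-over-month swings large enough to annotate.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical prompt clusters: strategic topic -> phrasings people actually ask.
CLUSTERS = {
    "crm-selection": ["best crm for startups", "top crm tools",
                      "which crm should a small team use"],
    "crm-pricing": ["crm pricing comparison", "cheapest crm with automation"],
}


def cluster_of(prompt: str) -> Optional[str]:
    """Return the cluster a prompt belongs to, or None if it is untracked."""
    for cluster, variants in CLUSTERS.items():
        if prompt in variants:
            return cluster
    return None


def monthly_share(observations):
    """Share of Answer keyed by (cluster, engine, month).

    Each observation is (month "YYYY-MM", engine, prompt, brand_present).
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for month, engine, prompt, present in observations:
        cluster = cluster_of(prompt)
        if cluster is None:
            continue  # ignore prompts outside the tracked clusters
        key = (cluster, engine, month)
        totals[key] += 1
        hits[key] += int(present)
    return {key: hits[key] / totals[key] for key in totals}


def flag_drift(shares, threshold=0.2):
    """List month-over-month changes whose absolute size meets the threshold."""
    series = defaultdict(dict)
    for (cluster, engine, month), value in shares.items():
        series[(cluster, engine)][month] = value
    flags = []
    for (cluster, engine), by_month in sorted(series.items()):
        months = sorted(by_month)  # "YYYY-MM" strings sort chronologically
        for prev, cur in zip(months, months[1:]):
            delta = by_month[cur] - by_month[prev]
            if abs(delta) >= threshold:
                flags.append((cluster, engine, prev, cur, round(delta, 2)))
    return flags


# Illustrative observations (invented data).
log = [
    ("2025-01", "perplexity", "best crm for startups", True),
    ("2025-01", "perplexity", "top crm tools", True),
    ("2025-02", "perplexity", "best crm for startups", True),
    ("2025-02", "perplexity", "top crm tools", False),
    ("2025-01", "chatgpt", "crm pricing comparison", False),
    ("2025-02", "chatgpt", "crm pricing comparison", False),
]

shares = monthly_share(log)
print(flag_drift(shares))
# [('crm-selection', 'perplexity', '2025-01', '2025-02', -0.5)]
```

Because answers vary from run to run, a flag is a prompt to investigate and annotate the trend line rather than proof of a lasting change; sampling each prompt several times per month makes the monthly share less noisy.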

Example: Neutral use of Geneo for multi‑engine visibility

Geneo provides cross‑engine monitoring that tracks brand mentions, citations, sentiment, and competitive benchmarks across Perplexity, ChatGPT, and Google AI Overviews. In practice, teams use it to log Share of Answer and Citation Rate by prompt cluster, observe where competitors displace them, and summarize findings in executive‑ready reports. See the agency overview for context: Geneo for agencies.

Pitfalls and Guardrails

Freshness is non‑negotiable. Perplexity’s Deep Research feature emphasizes recent sources. If your content hasn't been refreshed recently, expect fewer citations.

Evidence beats opinion. Lead with data, dates, and concrete answers. Tables and original research get cited more often.

Monitor volatility. Source selection can drift. Keep monthly trend lines and annotate changes.

Governance matters. Align PR, content, and SEO/GEO teams on topics, authorship, and refresh cadence.

Getting Started: A Short Executive Checklist

Choose 5–7 strategic prompt clusters with clear business impact.

Define KPIs: Share of Answer, Citation Rate, Competitive Displacement, and an aggregate Brand Visibility Score.

Establish a monthly cadence for monitoring and reporting across Perplexity, ChatGPT, and Google AI Overviews.

Prioritize updates: publish definitive, data‑backed answers with clear citations, tables, and expert bios.

Set governance: assign owners, refresh schedules, and escalation paths for visibility losses.

Ready to evaluate a cross‑engine visibility approach? Book a Geneo demo to see how executive‑level AI visibility scoring and citation tracking come together in one workflow.