How to Rank Higher in AI-Generated Lists: 2025 Best Practices

Essential 2025 best practices for ranking higher in AI-generated lists. Actionable steps for AI SEO, content structure, platform authority, and advanced measurement.

If your brand isn’t getting cited or listed in AI answers, you’re losing visibility where decisions increasingly start. The twist? You’re not “optimizing for AI” in a vacuum—you’re optimizing for how AI systems read, interpret, and cite the web. Want to move up from a buried mention to a visible, first-citation placement?

What AI systems actually reward now

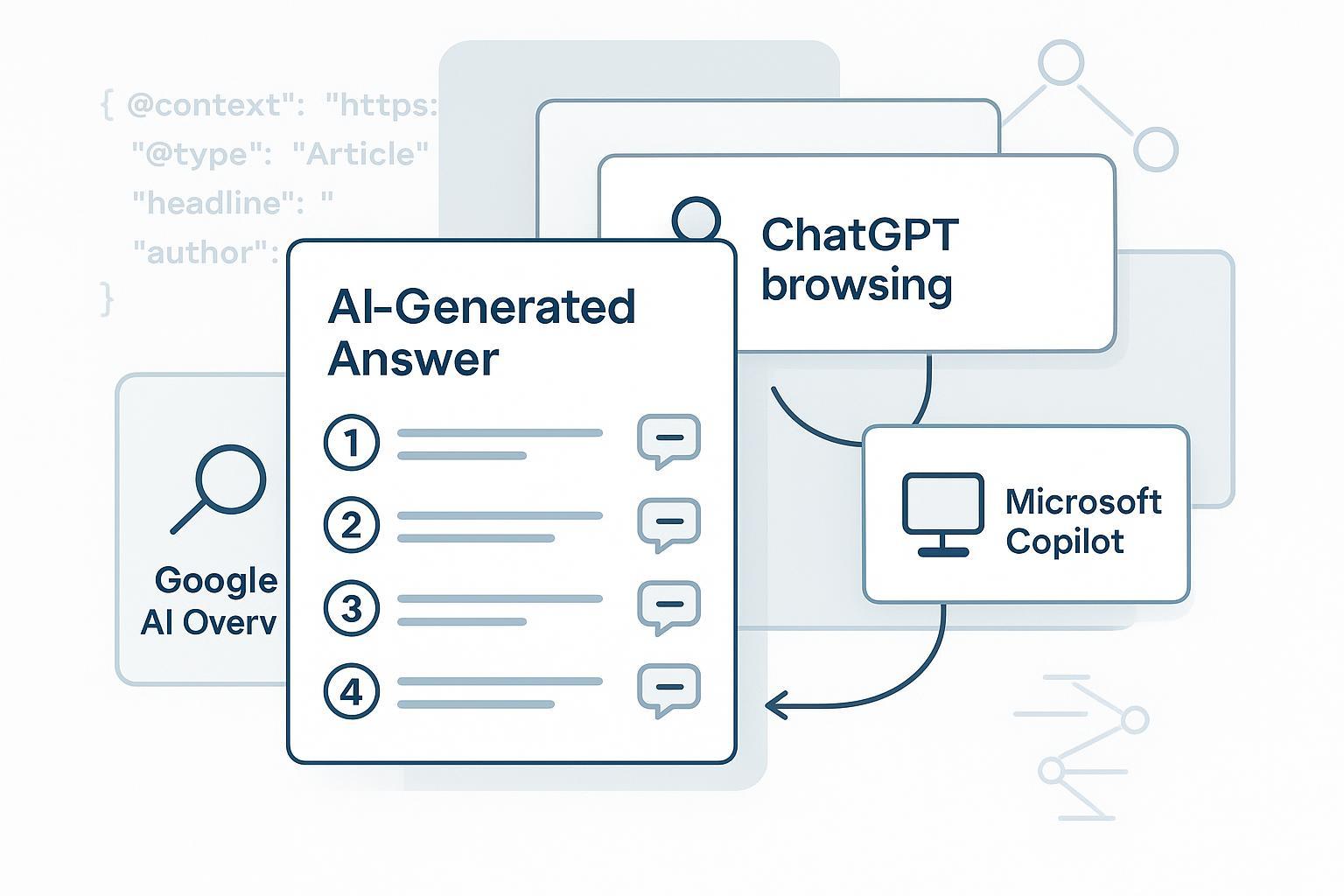

Across Google AI Overviews and AI Mode (GAIO), Microsoft Copilot, ChatGPT with browsing, and Perplexity, a consistent pattern tends to show up: pages that are clear to parse, factually dense, and entity‑consistent are more likely to be cited or listed. Google says its AI features rely on the same underlying Search systems and surface links when a generative answer adds value. To be eligible as a supporting link, a page must be indexed and eligible to appear in Search with a snippet; Google lists no additional technical requirements. See Google’s guidance for site owners in “AI features and your website” (Google Search Central, 2025).

Three signals stand out:

- Extractability: Can an engine cleanly lift a definition, list, or process from your page without confusion?

- Entity clarity: Are your brand, authors, products, and topics unambiguously represented—and consistent across the web?

- Authority and provenance: Do you pair claims with credible sources, provide expert bylines, and maintain a trustworthy editorial footprint?

Think of it this way: if your page is a tidy toolkit—definitions upfront, lists labeled, data sourced—LLMs can reuse it with less risk. If it’s a wall of prose with no sources, you’re asking them to guess.

Make your pages citation‑ready

You don’t need a rewrite of your entire site. You do need to reshape your highest-opportunity pages so they’re easy to quote and list.

- Put a TL;DR or “Direct Answer” paragraph near the top that cleanly answers the core query.

- Convert dense explanations into short lists or compact tables where appropriate.

- Add an FAQ section that covers specific sub‑questions the engines tend to answer.

- Pair every critical claim or stat with a source in the same paragraph.

- Align visible content and JSON‑LD: Article, Organization, Person (author), Product/Review, FAQPage or HowTo (only where on‑page Q&A/how‑to truly exist).
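One way to keep JSON‑LD aligned with visible content is to generate both from the same source data. The Python sketch below assumes a hypothetical `PageContent` record that your templates also render. The schema.org types and properties it emits (`Article`, `Person`, `Organization`, `FAQPage`, `mainEntity`, `acceptedAnswer`) are standard vocabulary, but check Google’s structured data documentation for which properties each feature actually reads.

```python
import json
from dataclasses import dataclass, field


@dataclass
class PageContent:
    """Hypothetical container holding the same values your template renders visibly."""
    url: str
    headline: str
    author_name: str
    author_url: str
    org_name: str
    org_url: str
    date_modified: str  # ISO 8601 date, e.g. "2025-03-01"
    # (question, answer) pairs that are actually shown on the page
    faqs: list[tuple[str, str]] = field(default_factory=list)


def build_json_ld(page: PageContent) -> str:
    """Return a JSON-LD @graph that mirrors the visible page content."""
    article = {
        "@type": "Article",
        "@id": page.url + "#article",
        "headline": page.headline,
        "dateModified": page.date_modified,
        "author": {"@type": "Person", "name": page.author_name, "url": page.author_url},
        "publisher": {"@type": "Organization", "name": page.org_name, "url": page.org_url},
    }
    graph = [article]
    # Only emit FAQPage when Q&As are really rendered on the page.
    if page.faqs:
        graph.append({
            "@type": "FAQPage",
            "@id": page.url + "#faq",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in page.faqs
            ],
        })
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)
```

The output goes inside a `<script type="application/ld+json">` tag. Because `FAQPage` is only emitted when the page renders Q&As, the markup cannot claim content users don’t see.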

If you need a refresher on the concepts behind AI visibility, start with this explainer on AI visibility and brand exposure. For technical implementation, map your markup to content using our structured data for AI search guide.

Quick reference: how engines handle sources and monitoring

| Engine | How it gathers and shows sources | What to prioritize | How to monitor |
|---|---|---|---|
| Google AI Overviews/AI Mode | Uses standard Search systems; generative answers surface supporting links when helpful. See Google’s AI features guide (2025). | Indexability, extractable sections, strong entity/author clarity, up‑to‑date data with citations. | Google Search Console (Web data); third‑party AIO trackers for inclusion and cited URLs. |
| Microsoft Copilot | Grounded via Bing; emphasizes clickable citations and provenance (per Microsoft documentation). | Bing indexability, IndexNow for freshness, fast pages, clear summaries. | Bing Webmaster Tools; session tools like Clarity; third‑party monitors. |
| Perplexity | Returns answers with numbered, clickable sources; API exposes citation metadata. See Perplexity Quickstart and guides (2025). | Clean, canonical URLs; concise, source‑backed sections; original data helps. | Manual sampling; specialized trackers; compare referral patterns over time. |
| ChatGPT (Browse) | No official publisher criteria; browsing typically relies on web results and summarizes with links. Inference‑based best practices apply. | Visibility in the upstream engine(s), extractability, recent, sourced content. | Sampled prompts; third‑party monitors; compare changes after content updates. |

Platform‑by‑platform mini playbooks

Google AI Overviews/AI Mode

- Technical health first: clean crawl/index signals, stable canonical URLs, valid sitemaps, and JSON‑LD that mirrors on‑page content. Google states that its AI features build on Search, so follow the same fundamentals outlined in its guidance for site owners.

- Content format: Top‑of‑page direct answers, labeled lists/tables, and FAQs that align with query sub‑intents.

- Authority: Expert bylines, transparent editorial standards, and references. Avoid “FAQPage” unless the Q&As are visible and genuine.

- Monitoring: In Search Console’s Performance report (Web search type), track impressions and clicks for queries known to trigger Overviews, then validate inclusion with a reputable tracker.
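To catch misaligned `FAQPage` markup before it ships, you can compare the questions in your JSON‑LD against the page’s visible text. This is a minimal, standard‑library sketch under simplifying assumptions: it reads server‑rendered HTML only, matches questions by normalized exact text, and ignores nodes whose `@type` is a list. The function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class _PageParser(HTMLParser):
    """Collects visible text and the raw contents of JSON-LD <script> blocks."""

    def __init__(self):
        super().__init__()
        self.text_parts: list[str] = []
        self.json_ld_blocks: list[str] = []
        self._skip_depth = 0   # > 0 while inside <script> or <style>
        self._json_buf = None  # list of chunks while inside a JSON-LD <script>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
            if tag == "script" and dict(attrs).get("type") == "application/ld+json":
                self._json_buf = []

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1
            if self._json_buf is not None:
                self.json_ld_blocks.append("".join(self._json_buf))
                self._json_buf = None

    def handle_data(self, data):
        if self._json_buf is not None:
            self._json_buf.append(data)
        elif not self._skip_depth:
            self.text_parts.append(data)


def _normalize(text: str) -> str:
    # Collapse whitespace and lowercase so formatting differences don't matter.
    return " ".join(text.split()).lower()


def faq_schema_mismatches(html: str) -> list[str]:
    """Return FAQPage questions declared in JSON-LD but absent from the visible text."""
    parser = _PageParser()
    parser.feed(html)
    parser.close()
    visible = _normalize(" ".join(parser.text_parts))
    missing = []
    for block in parser.json_ld_blocks:
        data = json.loads(block)
        # Accept a single node, a top-level array, or an @graph wrapper.
        nodes = data if isinstance(data, list) else data.get("@graph", [data])
        for node in nodes:
            if node.get("@type") != "FAQPage":
                continue
            for question in node.get("mainEntity", []):
                name = question.get("name", "")
                if _normalize(name) not in visible:
                    missing.append(name)
    return missing
```

An empty result means every declared question is visible; anything returned is a candidate for either adding the Q&A to the page or removing it from the markup.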

Microsoft Copilot

- Grounding and provenance: Copilot emphasizes citations. Ensure Bing discoverability, validate rendering for JS content, and adopt IndexNow for rapid updates.

- Content format: Same extractability patterns; make definitions and steps scannable.

- Monitoring: Track indexing in Bing Webmaster Tools and on‑site behavior in session tools such as Clarity; use third‑party trackers for systematic checks of Copilot citations.
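IndexNow is a simple protocol: you host a key file on your domain and POST a JSON list of changed URLs. The sketch below builds such a request with Python’s standard library but does not send it. The `api.indexnow.org` endpoint and the `host`/`key`/`keyLocation`/`urlList` fields follow the public IndexNow protocol; the single‑host check and the function name are this example’s own choices, so confirm details against the current protocol documentation.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Shared IndexNow endpoint; participating engines share submissions.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(key: str, key_location: str, urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) an IndexNow bulk submission for URLs on one host."""
    hosts = {urlparse(u).netloc for u in urls}
    if len(hosts) != 1:
        raise ValueError("submit URLs for exactly one host per request")
    body = {
        "host": hosts.pop(),
        "key": key,                  # must match the contents of the hosted key file
        "keyLocation": key_location, # public URL of that key file
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
```

Sending is one more call (`urllib.request.urlopen(req)`); check the response status, and Bing Webmaster Tools, to confirm submissions are being accepted.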

Perplexity

- Treat Perplexity as a meticulous summarizer: it blends community and authoritative sources, often showing multiple perspectives. Its docs note citation metadata in responses.

- Content format: Short, precise sections that cite sources, with updated facts. Original research can earn outsized inclusion.

- Monitoring: Run regular prompt sets and log cited URLs; a third‑party tracker or internal sampling framework helps build trendlines.
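If you sample Perplexity through its API, logging citation order is mostly a parsing job. This sketch works on an already‑decoded response dict and assumes the cited sources appear either as a top‑level `citations` list of URL strings or as `search_results` objects with a `url` key; field names have changed between API versions, so verify them against Perplexity’s current API reference. The helper names are hypothetical.

```python
from typing import Optional
from urllib.parse import urlparse


def cited_urls(response: dict) -> list[str]:
    """Return cited URLs in the order the answer lists them.

    Assumption: sources appear either as `search_results` (objects with a
    "url" key) or as `citations` (plain URL strings). Check current docs.
    """
    results = response.get("search_results")
    if results:
        return [r["url"] for r in results if isinstance(r, dict) and "url" in r]
    return [u for u in response.get("citations", []) if isinstance(u, str)]


def citation_position(urls: list[str], domain: str) -> Optional[int]:
    """1-based position of the first URL on `domain` or a subdomain, else None."""
    domain = domain.lower()
    for position, url in enumerate(urls, start=1):
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return position
    return None
```

Logging `citation_position` per prompt, rather than a simple yes/no, is what lets you track first‑citation placement over time.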

ChatGPT (Browse)

- There’s no official, publisher‑side rulebook. Optimize for visibility in the search results that browsing draws on, and for clarity: label your answers, cite your sources, and keep data fresh.

- Monitoring: Sample consistent prompts and track whether your domain appears among linked sources. Triangulate with third‑party trackers and analytics.

Pitfalls that still tank inclusion in 2025

- Over‑marking with schema that doesn’t match visible content (misaligned FAQPage/HowTo blocks).

- Walls of text without direct answers, lists, or tables.

- Claims without sources or out‑of‑date statistics.

- Inconsistent entity naming across your site, profiles, and directories.

- JS‑dependent content that fails to render for bots; unstable canonicalization.

- Chasing speculative “AI‑SEO tricks” instead of strengthening extractability and authority.
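Two of these pitfalls, unstable canonicals and content that only exists after JavaScript runs, can be spotted by inspecting the raw HTML your server returns. This sketch parses that HTML without executing scripts (roughly what a non‑rendering fetch sees) and flags missing, conflicting, or relative `rel="canonical"` links; Google recommends absolute canonical URLs. It is a first‑pass check under those assumptions, not a substitute for URL Inspection in Search Console, and the function names are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class _CanonicalParser(HTMLParser):
    """Collects href values of <link rel="canonical"> tags in the raw HTML."""

    def __init__(self):
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def canonical_issues(html: str) -> list[str]:
    """Flag common canonical problems in server-rendered HTML (no JS executed)."""
    parser = _CanonicalParser()
    parser.feed(html)
    parser.close()
    issues = []
    unique = set(parser.canonicals)
    if not unique:
        issues.append("missing canonical")
    elif len(unique) > 1:
        issues.append("conflicting canonicals: " + ", ".join(sorted(unique)))
    for href in sorted(unique):
        if not urlparse(href).scheme:
            issues.append("relative canonical: " + href)
    return issues
```

A canonical that only appears after client‑side rendering will show up here as "missing canonical", which is exactly the signal you want when auditing for JS dependence.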

A 90‑day action plan to move up the list

Days 1–30: Technical and entity foundations

Audit crawlability and indexability for both Google and Bing; fix status codes, canonical conflicts, and blocked resources. Submit healthy sitemaps. Add or validate JSON‑LD for Organization, Person (authors), and Article/Product/Review as applicable—always mirroring what users see. Create or refresh entity pages for your brand, key people, and flagship offerings; align naming and references across the web. If your leadership team is active on social or professional networks, ensure profiles consistently reference the same entities and URLs.
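For the robots.txt part of the crawlability audit, Python’s standard `urllib.robotparser` gives a quick first pass per crawler. One caveat: it applies the first matching rule in a group, while Google documents a most‑specific‑rule approach, so overlapping `Allow`/`Disallow` lines can disagree. Confirm edge cases with Search Console’s robots.txt report and Bing Webmaster Tools. The function name is hypothetical.

```python
from urllib.robotparser import RobotFileParser

CRAWLERS = ("Googlebot", "Bingbot")


def blocked_urls(robots_txt: str, urls: list[str], agents=CRAWLERS) -> dict[str, list[str]]:
    """Return, per crawler, the URLs that this robots.txt disallows.

    Note: RobotFileParser uses first-match semantics within a group, which
    can differ from Google's most-specific-match rule on overlapping rules.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: [u for u in urls if not parser.can_fetch(agent, u)] for agent in agents}
```

Run it against your live robots.txt and a list of priority URLs (including CSS and JS assets) to catch resources that are blocked for one engine but not the other.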

Days 31–60: Reformatting and topical hubs

Add TL;DR blocks to your top 25 opportunity pages. Turn dense sections into concise lists or a compact table where it helps extractability. Add FAQ sections that reflect real sub‑queries; interlink within topic clusters to reinforce topical authority. For every statistic, add a nearby source link. Update timestamps when you genuinely refresh content and add figure captions for diagrams. If long‑term resilience is on your roadmap, consider these strategic diagnostics in our 2028 traffic planning guide.
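A rough way to enforce “a nearby source for every statistic” is to scan drafts for paragraphs that contain a number‑like claim but no link. The patterns below are heuristic assumptions for Markdown or plain‑text drafts (percentages, “x” multipliers, millions and billions); tune them to your content and expect some false positives. The function name is hypothetical.

```python
import re

# Heuristic patterns (assumptions; adjust for your content and style guide).
STAT_PATTERN = re.compile(r"\d+(?:\.\d+)?\s?(?:%|percent\b|x\b|million\b|billion\b)", re.IGNORECASE)
LINK_PATTERN = re.compile(r"https?://|\]\(")  # bare URL or Markdown link


def unsourced_stats(markdown_text: str) -> list[str]:
    """Return paragraphs that contain a statistic but no link in the same paragraph."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", markdown_text) if p.strip()]
    return [p for p in paragraphs if STAT_PATTERN.search(p) and not LINK_PATTERN.search(p)]
```

Because the check is per paragraph, it also nudges writers toward the claim–source pairing the section recommends, rather than a reference list at the end.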

Days 61–90: Research publishing and monitoring

Publish one piece of original research (or a unique dataset) in your niche and promote it to credible communities and publications. Establish a weekly monitoring cadence: track Web performance in Google Search Console, use Bing Webmaster Tools, and adopt an AI Overview monitor that can detect inclusion and extract cited URLs. A well‑maintained sampling spreadsheet of priority queries (and prompts) across GAIO, Copilot, Perplexity, and ChatGPT can start to show trends within a few weeks.
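The sampling spreadsheet works best with a fixed schema so weeks stay comparable. This sketch serializes one row per query, engine, and market check to CSV; the `Sample` fields are an illustrative layout, not a standard, and you fill them from whatever tracker or manual review you use.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class Sample:
    """One monitoring observation; the field layout is illustrative, not a standard."""
    sampled_on: str          # ISO date of the check, e.g. "2025-01-06"
    engine: str              # "GAIO", "Copilot", "Perplexity", or "ChatGPT"
    market: str              # locale or country the check ran in
    query: str               # exact query/prompt text, kept constant week to week
    cited: bool              # did any cited source belong to our domain?
    position: Optional[int]  # 1-based rank of our first citation; None if not cited
    cited_url: str = ""      # which of our URLs was cited, if any


def samples_to_csv(samples: list[Sample], include_header: bool = True) -> str:
    """Serialize samples to CSV text (None becomes an empty cell)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Sample)], lineterminator="\n")
    if include_header:
        writer.writeheader()
    for sample in samples:
        writer.writerow(asdict(sample))
    return buffer.getvalue()
```

To keep a running log, append the returned text to a file opened in append mode with `newline=""` (as the `csv` module recommends), and pass `include_header=False` once the file already has a header row.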

Disclosure: Geneo is our product. Example workflow (neutral, reproducible): After reformatting five hub pages with TL;DR sections, lists, and FAQ blocks, a team schedules weekly monitoring for 50 priority queries across GAIO (choosing queries that reliably trigger an Overview), Copilot, Perplexity, and ChatGPT (Browse). They log when their domain is cited and track first‑citation share: the fraction of sampled answers in which their domain is the first cited source. In parallel, they compare GSC “Web” impressions for the affected queries against a control set with similar intent but no reformatting. In week three, a tracker shows two hubs cited in GAIO across three markets; week four adds a Perplexity citation on a comparison query. Using these signals, the team updates related subpages and publishes a short data note to deepen topical authority. The point isn’t a proprietary trick; it’s a measurement‑driven loop you can replicate with any monitoring stack.
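The two numbers in this workflow are simple to compute once the log exists. Here first‑citation share is the fraction of sampled answers in which your domain is the first cited source, and lift versus control is the treated set’s percent change in GSC impressions minus the control set’s. This is a rough difference‑in‑differences signal that assumes both query sets face the same seasonality and algorithm changes; it is not a significance test, and the function names are hypothetical.

```python
from typing import Optional, Sequence


def first_citation_share(positions: Sequence[Optional[int]]) -> float:
    """Fraction of sampled answers where our domain was the first cited source.

    Each entry is the 1-based rank of our first citation in one sampled
    answer, or None if we were not cited in that answer.
    """
    if not positions:
        return 0.0
    return sum(1 for p in positions if p == 1) / len(positions)


def percent_change(before: float, after: float) -> float:
    """Percent change from a positive baseline."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (after - before) * 100.0 / before


def lift_vs_control(treated_before: float, treated_after: float,
                    control_before: float, control_after: float) -> float:
    """Treated percent change minus control percent change, in percentage points."""
    return (percent_change(treated_before, treated_after)
            - percent_change(control_before, control_after))
```

For example, if treated impressions rose from 1,000 to 1,300 while the control rose from 1,000 to 1,100, the lift is 20 percentage points.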

Measure, interpret, iterate

Reading the tea leaves requires discipline. Google states that AI feature appearances are counted under the Web search type in Search Console; use that view, then validate inclusion with a specialized tracker. For Microsoft’s ecosystem, shorten the feedback loop by submitting changed URLs through IndexNow and following the grounding guidance in Microsoft’s Bing documentation. For Perplexity, its Quickstart documents citation metadata in API responses, which helps when you sample outputs programmatically or manually. To complement native consoles, use a reputable third‑party tracker that detects AI Overview presence and extracts cited URLs; an industry roundup like thruuu’s AIO tracker overview (2025) can help you shortlist options.
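To pull Web search type data programmatically, the Search Console API’s Search Analytics query accepts a JSON body. This sketch only builds the endpoint and body (authentication and sending are left out). It assumes the API’s `type: "web"` field, its `includingRegex` query filter (RE2 syntax), and a 25,000‑row limit; the helper names are hypothetical, so verify field names against the current API reference.

```python
from urllib.parse import quote

API_ROOT = "https://www.googleapis.com/webmasters/v3/sites"
_RE2_SPECIAL = set("\\.+*?()|[]{}^$")


def _re2_escape(text: str) -> str:
    # Backslash-escape regex metacharacters so each query matches literally.
    return "".join("\\" + c if c in _RE2_SPECIAL else c for c in text)


def search_analytics_request(site_url: str, start_date: str, end_date: str,
                             queries: list[str]) -> tuple[str, dict]:
    """Build (endpoint, body) for a Search Analytics query; auth and sending omitted."""
    endpoint = f"{API_ROOT}/{quote(site_url, safe='')}/searchAnalytics/query"
    # Filters inside one group are ANDed, so one regex covers the tracked query set.
    pattern = "^(" + "|".join(_re2_escape(q) for q in queries) + ")$"
    body = {
        "startDate": start_date,  # YYYY-MM-DD
        "endDate": end_date,
        "type": "web",            # search type where AI feature appearances are counted
        "dimensions": ["query", "date"],
        "dimensionFilterGroups": [{
            "groupType": "and",
            "filters": [{
                "dimension": "query",
                "operator": "includingRegex",
                "expression": pattern,
            }],
        }],
        "rowLimit": 25000,
    }
    return endpoint, body
```

With the Google API Python client, the same body would be passed to `searchanalytics().query(siteUrl=..., body=...)`. For a Domain property, `site_url` takes the form `sc-domain:example.com`.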

What should you change first when inclusion is sporadic? Start with extractability: add or sharpen a TL;DR, restructure steps into a list, and insert claim–source pairs near key facts. If that doesn’t move the needle in 2–4 weeks, address entity clarity and author signals, then expand the topical hub with deeper sub‑pages. One more question to sanity‑check your plan: if your page were stripped to raw text, would an unfamiliar reader still find a clear definition, a simple list of steps, and a cited statistic?
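You can partly automate the “stripped to raw text” test. This sketch extracts the text a non‑rendering fetch would see (skipping `<script>` and `<style>`) and reports which must‑have phrases, such as your definition sentence or step labels, are missing. The phrase list is yours to choose; case‑insensitive exact matching is a simplifying assumption, and the function names are hypothetical.

```python
from html.parser import HTMLParser


class _VisibleText(HTMLParser):
    """Collects text outside <script>/<style>; no JavaScript is executed."""

    def __init__(self):
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def raw_text(html: str) -> str:
    """Return whitespace-normalized text from server-rendered HTML."""
    parser = _VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.parts).split())


def missing_phrases(html: str, phrases: list[str]) -> list[str]:
    """Return the must-have phrases that do not appear in the raw text (case-insensitive)."""
    text = raw_text(html).lower()
    return [p for p in phrases if " ".join(p.split()).lower() not in text]
```

Anything reported missing is either absent from the page or injected by JavaScript; both are worth fixing before you revisit entity and author signals.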

A last word

AI engines reward clarity, provenance, and consistency. Make your pages easy to quote, your entities impossible to confuse, and your evidence hard to dispute. Do that, measure relentlessly, and you’ll climb from an occasional mention to a reliable, top‑of‑answer citation—without chasing ghosts.