How to Quantify Tone Inconsistency Impact on AI Visibility (2025)

Learn proven 2025 methods to measure tone inconsistency with the Tone Consistency Score and map AI Visibility Impact (AVII) across ChatGPT, Perplexity, Google AIO. Includes audit steps, benchmarks, and real-case evidence.

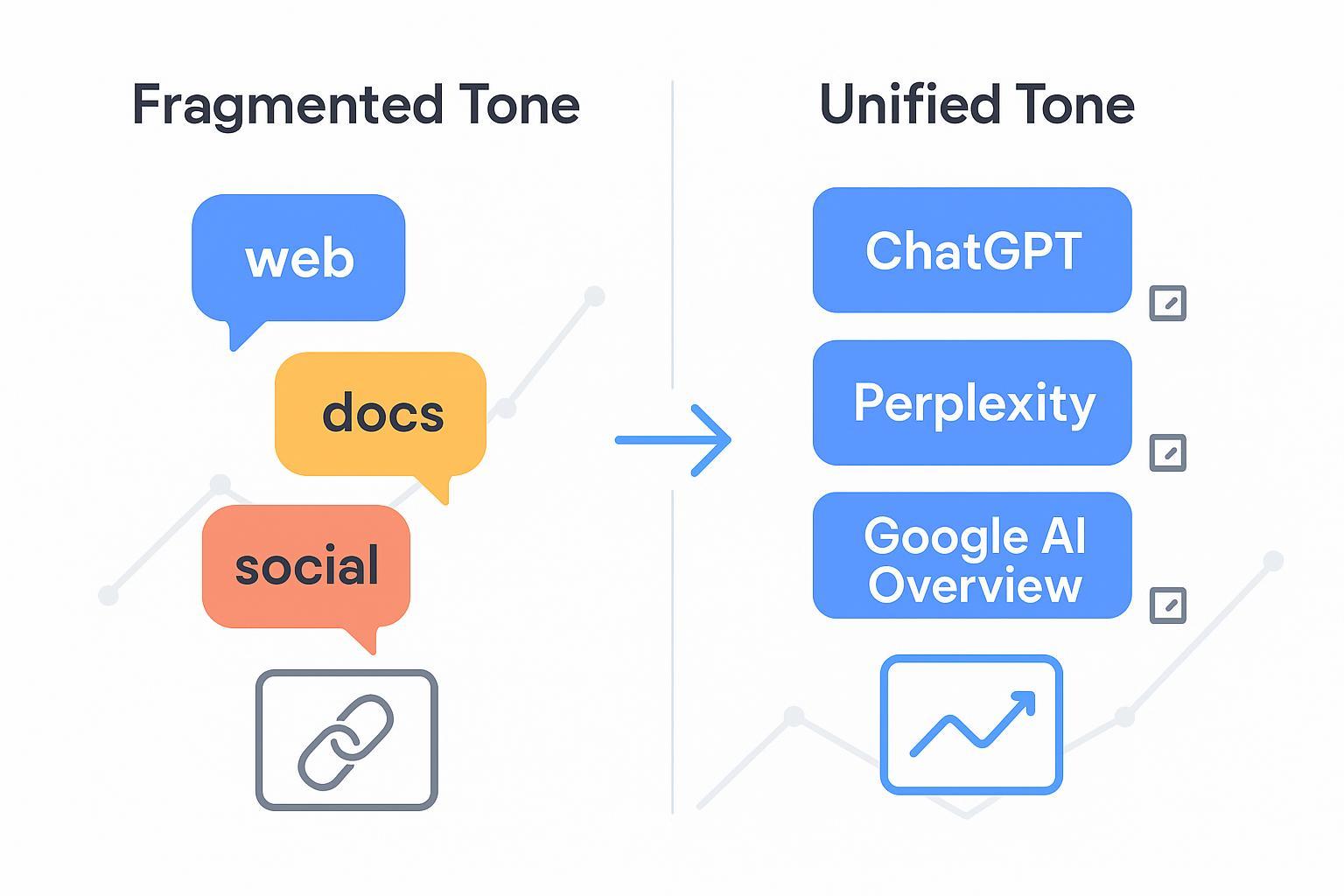

When AI systems summarize the web, they don’t just parse facts—they also pick up tone. If your brand sounds polished on documentation but hype-heavy on social, or empathetic in support but dry in press, those mismatches can dilute trust. And trust shapes selection: which sources get cited and how prominently they appear in AI answers. This best-practices guide explains the centerpiece we use to quantify tone—the Tone Consistency Score (TCS)—and how we connect it to an AI Visibility Impact Index (AVII) across ChatGPT, Perplexity, and Google AI Overview. Expect clear definitions, citable evidence, anonymized micro-cases, and a practical path to action.

What “tone inconsistency” means—and why it matters

Tone inconsistency is the measurable divergence of voice, emotional intensity, and style across your key surfaces—docs, product pages, help center, PR, social, and third-party reviews. The consequences are subtle but real. AI engines favor sources that feel authoritative, stable, and aligned; inconsistent tone can suggest fragmented governance, which erodes perceived reliability even when your facts are correct.

Several public studies help frame the visibility context. Across large query cohorts, AI summaries often change user behavior and citation exposure. For example, an Ahrefs analysis of 300,000 keywords found that when Google’s AI Overview (AIO) appeared, the typical position‑1 CTR dropped by roughly a third in 2025, highlighting how exposure is redistributed within summaries rather than classic blue links; see the methodology in the Ahrefs study on AIO’s impact (2025). Semrush tracked AI Overview trigger rates and visibility shifts across millions of keywords in 2025, offering engine‑level baselines marketers can use to evaluate performance windows; review their core findings in Semrush’s AI Overviews study (2025).

The Tone Consistency Score (TCS)

TCS is a 0–100 composite score that quantifies how consistently your brand’s tone shows up across channels. It blends four dimensions drawn from established UX/content frameworks and practical measurement signals.

How TCS is built

Dimensions and signals

Style alignment: Persona/voice match against your brand guide; consistent terminology and point of view.

Emotional intensity: Checks for mismatched hype or urgency; keeps clarity, neutrality, and empathy within defined bounds.

Structure & readability: Sentence length variance, passive‑voice ratio, clarity of scannable anchors.

Cross‑channel adherence: Conformity across web/docs/help/social/PR; alignment with governance checklists.

Measurement inputs

UX tone rubrics: We adopt the four measurable tone‑of‑voice dimensions defined by NNGroup (humor, formality, respectfulness, enthusiasm), and validate with user testing; see NNGroup’s tone‑of‑voice dimensions.

Readability signals: Flesch‑Kincaid indices, sentence variance, passive voice ratio.

Sentiment & consistency: Owned/earned channel sentiment and terminology consistency checks.
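The readability signals above can be approximated in a few lines of Python. This is a minimal sketch under stated assumptions, not a production scorer: the function names are hypothetical, the syllable counter is a crude vowel-group heuristic, and the passive-voice pattern (a form of "to be" followed by a word ending in -ed or -en) will miss irregular participles and raise some false positives. The Flesch-Kincaid grade formula is the standard published one.

```python
import re
from statistics import pvariance

# Heuristic passive-voice pattern (assumption): "to be" form + word ending in -ed/-en.
PASSIVE = re.compile(r"\b(?:is|are|was|were|be|been|being)\s+\w+(?:ed|en)\b", re.IGNORECASE)


def split_sentences(text):
    """Split on whitespace that follows ., ! or ? (a rough sentence splitter)."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def words(sentence):
    """Return alphabetic tokens (apostrophes allowed) from a sentence."""
    return re.findall(r"[A-Za-z']+", sentence)


def count_syllables(word):
    """Naive syllable estimate: number of vowel groups, at least 1."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def sentence_length_variance(text):
    """Population variance of words-per-sentence; 0.0 for empty text."""
    lengths = [len(words(s)) for s in split_sentences(text)]
    return pvariance(lengths) if lengths else 0.0


def passive_voice_ratio(text):
    """Share of sentences that match the heuristic passive pattern."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    return sum(1 for s in sentences if PASSIVE.search(s)) / len(sentences)


def flesch_kincaid_grade(text):
    """Flesch-Kincaid grade level using the naive syllable counter."""
    sentences = split_sentences(text)
    all_words = [w for s in sentences for w in words(s)]
    if not sentences or not all_words:
        return 0.0
    syllables = sum(count_syllables(w) for w in all_words)
    return 0.39 * len(all_words) / len(sentences) + 11.8 * syllables / len(all_words) - 15.59
```

Running these per channel (docs, help, social, PR) and comparing the results side by side is one way to spot where structure and readability diverge.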

Normalization and weighting

Scores are normalized as per‑channel z‑scores, subject to coverage thresholds; authoritative surfaces (docs, support articles, product pages) receive higher weights.

Confidence bands narrow as sample size and cross‑channel coverage grow; volatility is tracked monthly.

| TCS Dimension | Key Signals | Primary Inputs | Weighting Guide |
|---|---|---|---|
| Style alignment | Persona fidelity; terminology consistency | Brand guide, lexicon checks | High for docs, product |
| Emotional intensity | Empathy, clarity, hype bounds | Sentiment scores, rubric ratings | Medium, all channels |
| Structure & readability | Sentence variance; passive voice; scannability | Readability indices; anchor checks | Medium‑high |
| Cross‑channel adherence | Same voice across web/docs/help/social/PR | Governance checklist; coverage ratio | High for owned surfaces |

Practically, think of TCS as your “quality control dial” for tone. A score in the 60s typically indicates noticeable divergence between surfaces; 80+ suggests mature governance and consistent voice.
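To make the normalization and weighting step concrete, here is a minimal sketch in Python. Everything specific in it is an assumption for illustration: the channel names, the weight values, and the choice to use z-scores only for flagging outlier channels while the composite is a plain weighted average of per-channel scores on a 0–100 scale. The exact way z-scores feed the composite is not specified above, so treat this as one plausible reading.

```python
from statistics import mean, pstdev

# Hypothetical weights: heavier for authoritative surfaces (docs, help, product pages).
CHANNEL_WEIGHTS = {"docs": 3.0, "help": 3.0, "product": 3.0, "pr": 1.5, "social": 1.0}


def channel_z_scores(scores):
    """Z-score of each channel's tone score relative to the brand-wide mean.

    Large absolute values flag channels whose tone diverges from the rest.
    """
    values = list(scores.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        # All channels scored identically, so nothing diverges.
        return {channel: 0.0 for channel in scores}
    return {channel: (value - mu) / sigma for channel, value in scores.items()}


def weighted_tcs(scores, weights=CHANNEL_WEIGHTS):
    """Weighted average of per-channel scores (each assumed to be on 0-100)."""
    total_weight = sum(weights[channel] for channel in scores)
    return sum(scores[channel] * weights[channel] for channel in scores) / total_weight


if __name__ == "__main__":
    example = {"docs": 90.0, "social": 50.0}
    print(weighted_tcs(example))      # docs counts three times as much as social
    print(channel_z_scores(example))  # social sits below the brand mean
```

With these illustrative weights, a strong docs score pulls the composite up more than a weak social score pulls it down, which mirrors the higher weighting for authoritative surfaces.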

Mapping TCS to the AI Visibility Impact Index (AVII)

AVII translates tone consistency into directional visibility expectations across major engines. It doesn’t claim hard causality; rather, it models the correlation between TCS and visibility proxies while controlling for authority signals (entity clarity, E‑E‑A‑T, link profiles).
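One simple way to express "correlation while controlling for authority" is a first-order partial correlation. The sketch below is an assumption about how such a control could be implemented, not a description of the AVII model itself: it collapses the authority signals into a single hypothetical `authority` score per brand and uses the standard partial-correlation formula built from three Pearson coefficients.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


def partial_correlation(tcs, visibility, authority):
    """Correlation of tcs and visibility with authority held constant."""
    r_tv = pearson(tcs, visibility)
    r_ta = pearson(tcs, authority)
    r_va = pearson(visibility, authority)
    denominator = sqrt((1 - r_ta ** 2) * (1 - r_va ** 2))
    if denominator == 0:
        raise ValueError("authority perfectly predicts one of the variables")
    return (r_tv - r_ta * r_va) / denominator
```

If the partial correlation stays clearly positive after the control, tone consistency is carrying signal beyond what authority alone explains; if it collapses toward zero, the raw correlation was mostly an authority effect. With several separate authority signals, a multiple-regression approach would be the natural extension.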

Outcome proxies

Share of voice in AI answers per engine.

Citation presence counts and first‑mention rate.

Sentiment‑adjusted prominence of mentions.
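The first two kinds of proxy can be computed from a simple log of AI answers. The sketch below assumes a hypothetical `Answer` record that stores the brands cited in each answer in order of appearance, and it uses working definitions that are assumptions: citation presence is the share of answers citing the brand at least once, first-mention rate is the share of answers where the brand is cited first, and share of voice is the brand's citations divided by all citations. Sentiment-adjusted prominence is left out because it needs a sentiment model.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One AI answer for one query (hypothetical record shape)."""
    engine: str
    cited_brands: list = field(default_factory=list)  # in order of appearance


def visibility_proxies(answers, brand):
    """Return citation presence, first-mention rate, and share of voice for a brand."""
    total = len(answers)
    if total == 0:
        return {"citation_presence": 0.0, "first_mention_rate": 0.0, "share_of_voice": 0.0}
    present = sum(1 for a in answers if brand in a.cited_brands)
    first = sum(1 for a in answers if a.cited_brands and a.cited_brands[0] == brand)
    all_citations = sum(len(a.cited_brands) for a in answers)
    brand_citations = sum(a.cited_brands.count(brand) for a in answers)
    return {
        "citation_presence": present / total,
        "first_mention_rate": first / total,
        "share_of_voice": brand_citations / all_citations if all_citations else 0.0,
    }
```

Filtering the `answers` list by `engine` before calling the function gives the per-engine view used throughout this guide.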

Per‑engine differences

Google AI Overview tends to cite multiple sources within compact summaries and may diversify citations compared with top organic results; summary trigger rates and citation breadth have been documented in 2025 cohort studies like Search Engine Land’s AI Overviews analyses (2025).

Perplexity embeds clickable citations in every answer and offers “Focus” filters that shift source selection; review platform behavior in Perplexity’s Pro Search quickstart.

ChatGPT’s Deep Research exports include linked citations; enterprise connectors enhance source attribution for integrated content; see OpenAI’s Deep Research introduction (2025).

Lag effects and confidence

Tone remediations usually require a 30–90‑day window to reflect in visibility proxies as engines crawl and retrain. Confidence intervals widen when sample sizes are small or query classes are mixed.

Evidence, windows, and thresholds

To keep measurement credible, we set explicit audit windows, sample sizes, and thresholds.

Coverage window: 8–12 weeks per assessment cycle, aligned to major engine updates.

Sample size: At least 250 queries per engine per brand, balanced across informational, navigational, and brand‑intent classes.

TCS thresholds: Below 70 often indicates governance gaps; 80–90 indicates mature consistency; above 90 is rare and suggests tight editorial control.

Confidence intervals: Reported at the 90–95% level when per‑engine sample sizes reach at least 250 queries and per‑channel coverage is broad.

External baselines: For context on user behavior and trigger rates, review large‑sample analyses such as Pew Research’s 2025 study on AI summaries and click behavior.
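To see why a sample of roughly 250 queries per engine is a sensible floor, it helps to compute the interval around a rate-style proxy such as citation presence. The sketch below uses the Wilson score interval, which is our choice for illustration rather than a method prescribed above; `z=1.96` corresponds to a 95% level and `z=1.645` to 90%.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (e.g. answers that cite the brand)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denominator = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denominator
    half_width = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denominator
    return center - half_width, center + half_width


if __name__ == "__main__":
    # Brand cited in 125 of 250 answers: the 95% interval spans roughly +/- 6 points.
    low, high = wilson_interval(125, 250)
    print(f"{low:.3f} to {high:.3f}")
```

At 250 queries the interval around a 50% presence rate is about six percentage points either side, so single-digit changes between audit cycles should be read as directional unless the sample is larger.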

Anonymized micro‑cases

Case A (B2B documentation‑led brand)

Situation: TCS 68. Docs were neutral and clear; social posts used hype‑heavy phrasing; PR alternated between technical and salesy tone.

Intervention: Harmonized lexicon, tightened hype bounds, standardized structure and scannable anchors across docs/help/social.

Window & sample: 12 weeks; 300 queries per engine; weighted toward informational and brand‑intent queries.

Outcome: AVII indicated directional uplift: +14–22% citation presence in Perplexity; +9–13% first‑mention rate in ChatGPT Deep Research exports; +6–10% share‑of‑voice gains within Google AIO summaries. Confidence: 90% with balanced coverage.

Case B (Consumer brand with heavy earned media)

Situation: TCS 62. Owned channels were tidy; third‑party reviews and UGC mixed sarcasm with promotional language.

Intervention: Reviewer outreach, clarified brand voice guidelines, updated help center tone and terminology.

Window & sample: 10 weeks; 260 queries per engine; skewed toward informational intent.

Outcome: AVII indicated gains of +8–15% citation presence in AIO, +10–16% prominence in Perplexity (top‑row citations), and a modest +4–7% first‑mention rate lift in ChatGPT. Confidence: 90%.

These ranges reflect directional changes under controlled conditions; they are not guarantees and vary by industry, authority, and query class.

Practitioner workflow (example)

Brands often ask: how do we operationalize this without derailing the content team? A practical sequence is:

Baseline visibility and tone audit across engines and channels.

Harmonize voice and terminology; set hype/urgency bounds; update governance checklists.

Track visibility proxies and tone metrics monthly; adjust per‑engine strategies.

If you want a deeper primer on definitions and tracking methods, see Geneo’s overview of AI visibility and brand exposure in AI search and the platform’s Generative Engine Optimization (GEO) pages. These resources explain how multi‑engine monitoring and query‑level visibility reporting support audits without forcing a wholesale CMS rebuild.

What good looks like—and common pitfalls

Guardrails

Keep tone neutral‑empathetic on docs/help; reserve enthusiasm for announcements.

Maintain consistent terminology and POV across surfaces; avoid abrupt persona shifts.

Monitor passive‑voice ratio and sentence variance; clarity wins.

Pitfalls

Over‑correcting into sterile copy that erodes brand personality.

Ignoring earned/UGC tone, which AI summaries increasingly ingest.

Treating tone as a one‑time project instead of ongoing governance.

Request your free Tone Consistency & AI Visibility Audit

Want a data‑driven diagnosis of where tone inconsistencies are suppressing your AI exposure? Request a no‑obligation audit and get an engine‑by‑engine impact profile, including your current TCS, AVII trends, and prioritized remediation steps.

A consistent voice doesn’t mean bland—it means intentional. If your brand’s story sounds like the same trusted expert across every surface, why wouldn’t AI choose to cite you first?