How to Track Keyword Rankings on Perplexity: The Ultimate Guide

Master Perplexity keyword ranking and Share of Voice tracking. Learn actionable workflows, competitive insights, and the best tools to boost your brand’s AI visibility.

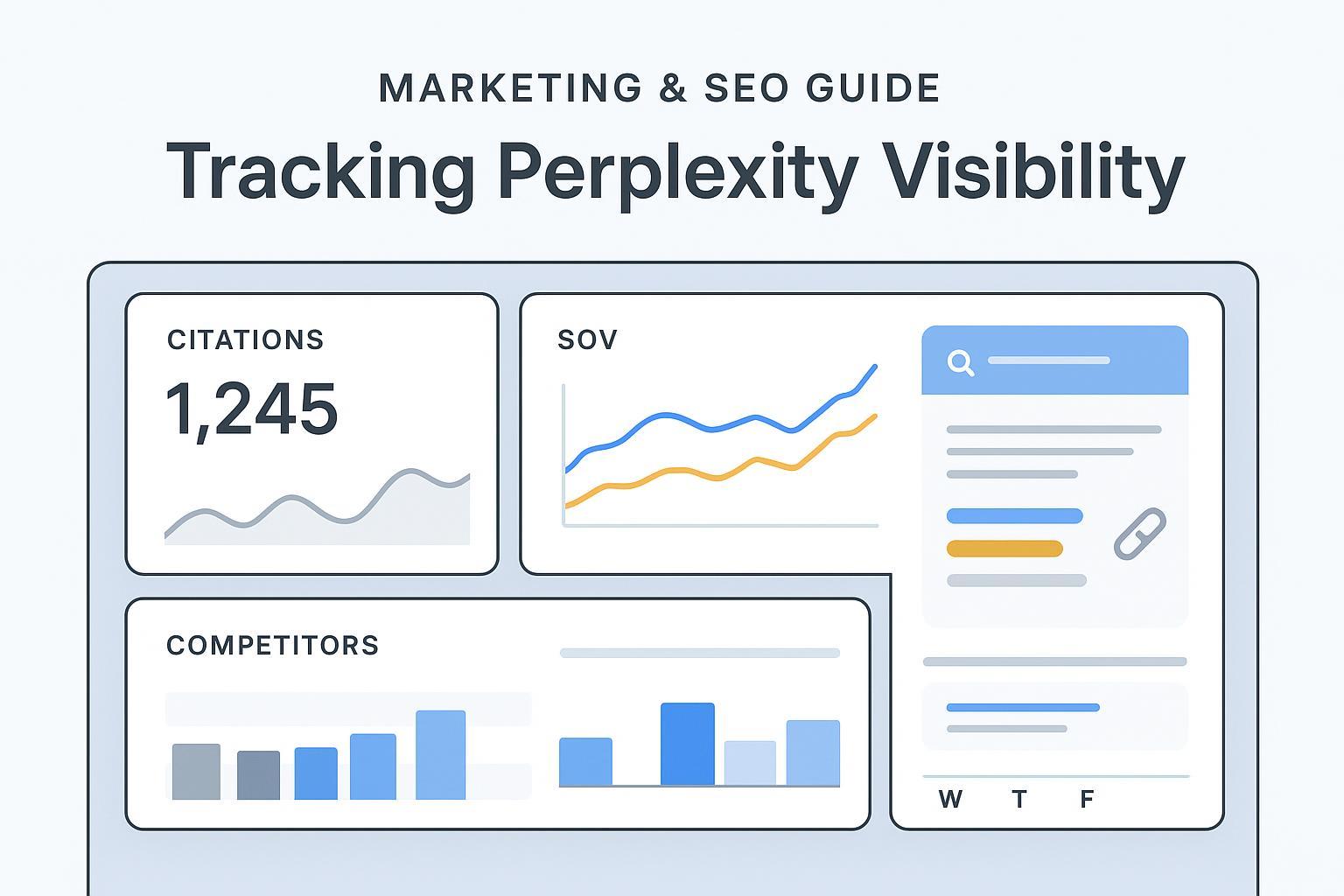

If you’re trying to “track keyword rankings” on Perplexity, here’s the deal: Perplexity doesn’t expose classic positions like Google. Visibility lives in its linked citations, how often your domain appears across relevant prompts, and how your presence compares to competitors over time. In 2025, the most reliable way to measure progress is Competitive Share of Voice (SOV) across a defined prompt set.

According to independent research into Perplexity’s pipeline, the engine employs entity-centric reranking and authority boosts that shape which sources get cited in answers. See the analysis in Search Engine Land’s 2025 report, How Perplexity ranks content: research uncovers core ranking logic and signals (Aug 2025). Perplexity’s own guidance emphasizes accurate, trustworthy, and fresh sources; review the AI Search Ranking Guide (Perplexity, Oct 2024).

If you come from traditional SEO, think of GEO (Generative Engine Optimization) and answer engines as a parallel track where SOV replaces rank position as your north-star KPI. For a primer, see Traditional SEO vs GEO: 2025 Marketer’s Guide (Geneo).

What “Ranking” Really Means on Perplexity

On Perplexity, “ranking” is best framed as citation-based visibility across a prompt library. The key question isn’t “Are we in position three?” but rather “Are we cited in answers for our topics, how frequently do we appear across the cluster, how prominent are our citations (e.g., among the first few sources), and what share do we hold versus competitors week to week?” Because Perplexity synthesizes answers and then surfaces sources that support those claims, early or repeated citations can be a proxy for authority within a topic. You won’t get “position 3 for keyword X,” but you can get “our domain appears in 62% of answers across 40 decision-intent prompts, with top-3 placement in 41% of those answers.”

The Metrics That Matter: From Citations to SOV

For reliable Perplexity monitoring, three metrics are foundational: citation frequency and coverage (how often your domain appears across the prompt set), citation prominence (whether your source is among the first citations as a simple proxy for emphasis), and link attribution rate (in brand-specific claims, whether Perplexity points to your official domain). Roll those up into SOV — your share of answers citing your domain versus competitors within a defined cluster and time window. A practical (unweighted) formula:

SOV = Number of answers citing your domain / Total answers across the tracked prompts

You may add weights (e.g., +1.0 for top-3 citations; +0.5 for later citations) and even prompt-level importance weighting if some queries matter more to the business. If you need a broader measurement framework (platform presence, citation coverage, attribution), see LLMO Metrics: Measure Accuracy, Relevance, Personalization (Geneo, 2025).
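As a minimal sketch of the unweighted formula and the top-3 weighting described above, the calculation could look like the following. The function name `share_of_voice`, its parameters, and the sample ranks are hypothetical; the weights (1.0 for top-3, 0.5 for later citations) are the ones suggested in the text.

```python
from typing import Optional, Sequence


def share_of_voice(
    citation_ranks: Sequence[Optional[int]],
    top_n: int = 3,
    top_weight: float = 1.0,
    later_weight: float = 0.5,
    weighted: bool = True,
) -> float:
    """Return SOV for one domain over a set of sampled answers.

    citation_ranks holds one entry per answer: the 1-based position of the
    domain's first citation in that answer, or None if it was not cited.
    """
    if not citation_ranks:
        return 0.0
    score = 0.0
    for rank in citation_ranks:
        if rank is None:
            continue  # this answer did not cite the domain
        if not weighted:
            score += 1.0
        elif rank <= top_n:
            score += top_weight
        else:
            score += later_weight
    return score / len(citation_ranks)


# Illustrative data: ten answers, cited in six, four of those in the top three.
ranks = [1, 3, None, 5, 2, None, 7, None, 1, None]
unweighted_sov = share_of_voice(ranks, weighted=False)  # 6 / 10
weighted_sov = share_of_voice(ranks)                    # (4*1.0 + 2*0.5) / 10
```

Note that weighted scores for several domains need not sum to 1, because a single answer can cite more than one domain.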

Tracking outcomes in analytics is just as important. Perplexity typically passes referrer information, which lets you segment sessions in GA4. Guides like How to track Perplexity referrals in GA4 (Rankshift.ai, Sep 2025) and Can you see traffic from ChatGPT/Perplexity in GA4? Here’s how (Hedgehog Marketing, Sep 2025) explain how to filter on the Source/Medium value “perplexity.ai / referral” or build regex-based segments.
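To illustrate the regex-based approach, here is a small sketch that tests a candidate pattern against session source values before you paste it into a segment. The pattern and the helper `is_perplexity_source` are assumptions for illustration; GA4's own regex matching may behave differently, so treat this as a starting point and verify it in your property.

```python
import re

# Hypothetical pattern: matches perplexity.ai and any subdomain of it,
# but not look-alike hosts such as notperplexity.ai.
PERPLEXITY_SOURCE = re.compile(r"^(?:[a-z0-9-]+\.)*perplexity\.ai$", re.IGNORECASE)


def is_perplexity_source(session_source: str) -> bool:
    """Return True if a session source value looks like a Perplexity referral."""
    return bool(PERPLEXITY_SOURCE.match(session_source.strip()))


# Illustrative source values, not real analytics data.
sources = [
    "perplexity.ai",
    "www.perplexity.ai",
    "google",
    "notperplexity.ai",
    "perplexity.ai.example.com",
]
matched = [s for s in sources if is_perplexity_source(s)]
```

Anchoring the pattern at both ends is the design choice that matters here: an unanchored "perplexity" match would also catch unrelated hosts that merely contain the word.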

Build Your Prompt Library (and Sampling Cadence)

Design an intent-based prompt library that mirrors how buyers actually ask questions across awareness (category definitions and use cases), consideration (comparisons, alternatives, pros/cons), decision (pricing, features, integrations, ROI), and post-purchase (implementation steps, troubleshooting). Include conversational follow-ups such as “What are the downsides?” or “Show me recent data,” because Perplexity’s citations can change across multi-turn sessions. For cadence, run weekly audits on your core decision and comparison prompts; bi-weekly or monthly for broader awareness sets. Volatility is normal — annotate notable changes, such as competitor launches or a major content refresh. For operational context across AI search behavior, this overview helps: AI Search User Behavior 2025 (Geneo).
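One way to keep the library and its cadence explicit is a small data structure like the sketch below. The `Prompt` class, the `CADENCE_WEEKS` mapping, and the placeholder prompts are all hypothetical; the weekly cadence for decision and comparison prompts and the bi-weekly cadence for awareness follow the guidance above, while the monthly cadence for post-purchase prompts is an assumption.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Sampling interval in weeks per funnel stage (assumed mapping).
CADENCE_WEEKS = {
    "decision": 1,
    "consideration": 1,
    "awareness": 2,
    "post-purchase": 4,
}


@dataclass(frozen=True)
class Prompt:
    text: str
    stage: str
    follow_ups: Tuple[str, ...] = ()  # conversational follow-ups to replay


def prompts_due(library: List[Prompt], week_number: int) -> List[Prompt]:
    """Return the prompts whose cadence falls on the given week number."""
    return [p for p in library if week_number % CADENCE_WEEKS[p.stage] == 0]


# Placeholder prompts; replace the bracketed parts with your own terms.
library = [
    Prompt("What is <category>?", "awareness"),
    Prompt("<brand> vs <competitor>: which is better?", "consideration",
           ("What are the downsides?",)),
    Prompt("How much does <brand> cost?", "decision", ("Show me recent data",)),
    Prompt("How do I set up <brand>?", "post-purchase"),
]
due_week_1 = [p.stage for p in prompts_due(library, 1)]
due_week_4 = [p.stage for p in prompts_due(library, 4)]
```

In this sketch, week 1 samples only the consideration and decision prompts, while week 4 samples every stage.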

Step-by-Step Workflow: Auditing Perplexity Visibility

1. Define your prompt clusters and competitor set. Use 25–75 prompts grouped by funnel stage; include brand and category variants.

2. Run Perplexity queries and capture the answer and citations. Save the answer card and record cited domains, citation order, and URLs.

3. Log data in a sheet or database. Columns: prompt, date/time, cited (Y/N), citation order, official domain attribution (Y/N), competitor cited (which?), notes.

4. Compute SOV and trend lines. Per cluster, calculate your SOV and competitors’ SOV; chart weekly/monthly trends.

5. Annotate changes. Note content updates, PR hits, product releases, or technical fixes coinciding with swings.

6. Tie to analytics and outcomes. Segment GA4 by perplexity.ai referrals; track engagement and conversions for the monitored topics.
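The logging and SOV steps of this workflow can be sketched with a plain CSV log. The column names follow the list above, with an added `cluster` column and a semicolon-separated `competitors_cited` field as assumptions; the rows and domains are made-up sample data, and `sov_by_cluster` is a hypothetical helper.

```python
import csv
import io
from collections import defaultdict

# Made-up audit rows; in practice this would be your sheet export.
SAMPLE_LOG = """\
prompt,cluster,timestamp,cited,citation_order,official_attribution,competitors_cited,notes
prompt A,decision,2025-01-06T09:00,Y,2,Y,competitor-a.example,
prompt B,decision,2025-01-06T09:05,N,,N,competitor-a.example;competitor-b.example,
prompt C,decision,2025-01-06T09:10,Y,5,N,,third-party profile cited
prompt D,awareness,2025-01-06T09:15,N,,N,competitor-b.example,
"""


def sov_by_cluster(rows):
    """Return {cluster: {domain: unweighted SOV}}; your own domain is keyed 'own'."""
    totals = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for row in rows:
        cluster = row["cluster"]
        totals[cluster] += 1
        if row["cited"] == "Y":
            hits[cluster]["own"] += 1
        for domain in (d.strip() for d in row["competitors_cited"].split(";")):
            if domain:  # skip empty entries when no competitor was cited
                hits[cluster][domain] += 1
    return {c: {d: n / totals[c] for d, n in hits[c].items()} for c in totals}


rows = list(csv.DictReader(io.StringIO(SAMPLE_LOG)))
result = sov_by_cluster(rows)
```

With the sample rows, the decision cluster has three answers: your domain and competitor A are each cited in two of them, and competitor B in one.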

Troubleshooting — common failure modes include stale content (answers bias toward fresher sources; update last-modified dates and add current data), weak evidence (thin pages get skipped; add citations, tables, and scannable summaries), and poor attribution (Perplexity links to third-party profiles for your brand facts; strengthen your official pages with clear, verifiable claims).

Practical Example: Manual vs Tool-Assisted Setup (Disclosure)

Manual (sheet + GA4), a quick proof of concept: create a Google Sheet with prompts, citations, and attribution columns; audit weekly; calculate SOV with simple formulas; and chart trends. In GA4, build a segment for Perplexity referrals and track conversions tied to your monitored pages.

Tool-assisted, for consolidating SOV and competitor views (disclosure: Geneo is our product): in practice, teams use Geneo to define a Perplexity prompt set, log citations across sessions, and read SOV trend lines versus competitors in one place. Because Geneo is multi-engine, you can also compare Perplexity to ChatGPT or Google AI Overview without stitching separate reports. The aim is operational clarity: one dashboard, consistent sampling, and exportable weekly/monthly views.

If you prefer to evaluate alternatives, ensure they cover Perplexity citations reliably, expose competitor comparisons, and offer exports or APIs.

Tools & Alternatives: How to Choose

When selecting a tracker, prioritize depth of Perplexity prompt coverage (including multi-turn answer logging), competitor benchmarking with explicit SOV calculations, trend granularity with weekly or monthly views and annotations, and robust exports or APIs with Looker Studio connectors. If you operate across multiple engines, assess whether the tool offers cross-engine monitoring for Perplexity, ChatGPT, and Google AI Overview in one place. Representative resources include Keyword.com’s practitioner content — Perplexity AI ranking factors: a guide for SEOs (Keyword.com, Oct 2025) and Track your brand mentions in Perplexity AI results (Keyword.com, Aug 2025) — and SE Ranking’s broader context via AI traffic research study (SE Ranking, Aug 2025).

Keep in mind: capabilities evolve quickly; confirm Perplexity-specific features and SOV outputs at purchase time.

Advanced: Automation, APIs, and Cross-Engine View

Once your workflow is stable, automate sampling and reporting. Schedule weekly jobs to capture answers and citations for your core prompts, use your tool’s API or CSV exports to feed a warehouse, and build Looker Studio dashboards for SOV and trend overlays. Many teams track Perplexity alongside ChatGPT and Google AI Overview to spot platform-specific shifts and identify content or PR signals moving the needle across engines. For a high-level overview of multi-engine monitoring, see ChatGPT vs Perplexity vs Gemini vs Bing: AI search monitoring comparison (Geneo, Aug 2025).
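The aggregation half of such a scheduled job might look like the sketch below. It assumes the capture step has already produced dated samples of whether your domain was cited; how you query Perplexity or your tool's API is out of scope here. The function `weekly_trend` and the sample dates are hypothetical.

```python
from collections import defaultdict
from datetime import date


def weekly_trend(samples):
    """Group (date, cited) samples by ISO week.

    Returns a list of (week_label, sov, change_vs_previous_week) tuples,
    sorted chronologically; the first week has no change value.
    """
    buckets = defaultdict(list)
    for day, cited in samples:
        iso = day.isocalendar()  # (ISO year, ISO week, ISO weekday)
        buckets[(iso[0], iso[1])].append(bool(cited))

    trend = []
    previous = None
    for year, week in sorted(buckets):
        flags = buckets[(year, week)]
        sov = sum(flags) / len(flags)
        delta = None if previous is None else round(sov - previous, 3)
        trend.append((f"{year}-W{week:02d}", round(sov, 3), delta))
        previous = sov
    return trend


# Made-up samples: four answers in each of two consecutive weeks.
samples = [
    (date(2025, 1, 6), True), (date(2025, 1, 6), False),
    (date(2025, 1, 7), False), (date(2025, 1, 8), True),
    (date(2025, 1, 13), True), (date(2025, 1, 13), True),
    (date(2025, 1, 14), False), (date(2025, 1, 15), True),
]
trend = weekly_trend(samples)
```

The resulting rows can be written to CSV or loaded into a warehouse table; the week-over-week change column is a convenient place to attach annotations when a swing appears.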

Think of it this way: Perplexity is one pane of glass in the broader AI search picture. Unified reporting helps you attribute changes to strategy rather than guesswork.

Real-World Notes: Volatility, Freshness, and Authority Signals

Perplexity iterates frequently; citation behavior can shift with product updates that favor recency, peer-reviewed sources, or trusted domains. Track changes against platform updates via the Perplexity changelog (2025) and annotate your trend charts when you notice swings. Practical observations: freshness bias means newly updated pages tend to surface more often for time-sensitive prompts; authority scaffolding — clear evidence, transparent sourcing, and structured summaries — improves citation likelihood; and PR or reputation signals often lift inclusion rates. For why certain brands get cited more, see Why ChatGPT mentions certain brands (Geneo, Dec 2025).

SOV Example Table (Weekly Trend)

Below is a simple SOV snapshot for a decision-intent prompt cluster over three weeks. Top-3 citations are weighted +1.0 and later citations +0.5.

| Week | Total Answers | Your Domain (Top-3 / Later) | Your Weighted SOV | Competitor A Weighted SOV | Competitor B Weighted SOV |
|---|---|---|---|---|---|
| W1 | 40 | 12 / 6 | (12×1.0 + 6×0.5)/40 = 0.375 | 0.32 | 0.23 |
| W2 | 40 | 14 / 4 | (14×1.0 + 4×0.5)/40 = 0.40 | 0.28 | 0.27 |
| W3 | 40 | 16 / 5 | (16×1.0 + 5×0.5)/40 ≈ 0.46 | 0.26 | 0.25 |

Interpretation: Your weighted SOV rose each week (0.375, then 0.40, then about 0.46), primarily via more early citations. Annotate these weeks with any content updates or PR events.

FAQ and Quick Answers

Does Perplexity have classic keyword positions? No. Visibility is measured via citations across prompts, prominence, and competitor SOV.

Should we track every prompt daily? Not necessary. Weekly on core decision/comparison prompts is sufficient, plus bi-weekly/monthly for broader sets.

How do location or personalization affect results? Perplexity can vary citations with recency and context; keep sampling consistent and note any location-specific patterns relevant to your audience.

What counts as success? Sustained SOV gains, higher top-3 citation rates, improved link attribution to your official pages, and meaningful referral engagement in analytics.

Next Steps

Ready to operationalize this? Start a trial and run your first Perplexity visibility tracking project this week. Build a 40–60 prompt set, audit citations, compute SOV, and tie outcomes to GA4 referrals. Keep it simple at first, then automate once trends are stable.