How LLM Reasoning Affects GEO

Learn how LLM reasoning (self-consistency, retrieval, tool-use, o3 planning) impacts Generative Engine Optimization (GEO) and drives brand citations in AI Overviews.

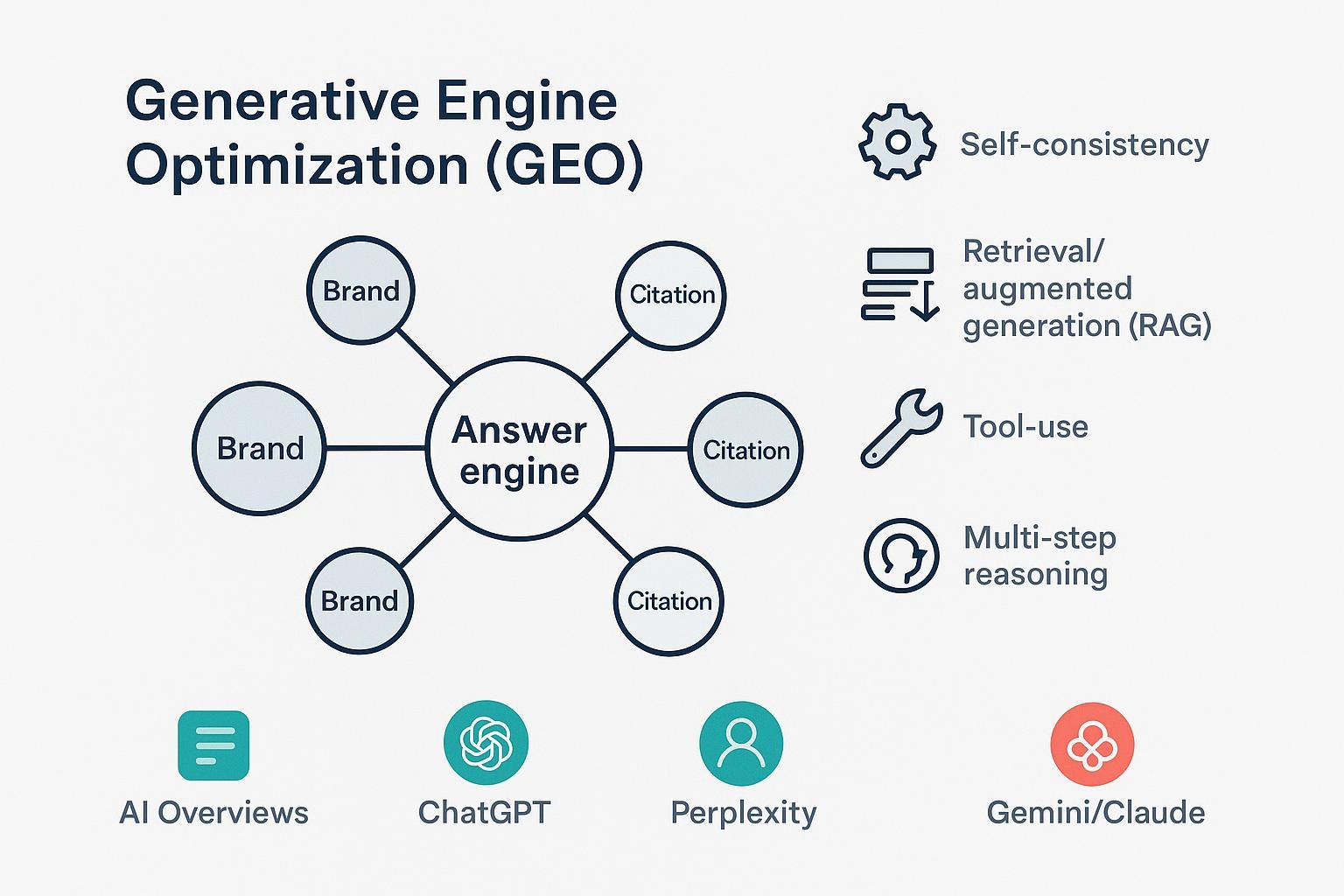

Generative Engine Optimization (GEO) is no longer a niche conversation. As AI Overviews and answer engines synthesize results instead of listing ten blue links, visibility depends on how models read, reason, and verify. If AI is doing the reading, what makes it trust and cite you?

This article explains GEO in practical terms and maps the biggest reasoning upgrades—self-consistency, retrieval/RAG, tool-use, and o3-style multi-step planning—to concrete moves you can make to increase citations and accurate brand mentions across AI Overviews, ChatGPT Search, Perplexity, Gemini, and Claude.

What GEO is (and how it differs from SEO/AEO)

GEO is the practice of shaping your content, structure, and entity signals so AI-driven answer systems can confidently include and cite you in their synthesized responses. That means optimizing for how models assemble answers, not just how search engines rank pages. A widely cited definition frames GEO as optimizing visibility in AI-driven search that prioritizes synthesis over ranked lists, as outlined in Search Engine Land’s “What is generative engine optimization (GEO)?” (2024).

Traditional SEO targets rankings and click-through from link lists. AEO (Answer Engine Optimization) overlaps with featured snippets and answer boxes inside SERPs. GEO spans those surfaces but focuses on multi-source synthesis across engines—and on being cited, named, and accurately represented inside an AI-composed answer.

Google documents how its AI features select and display links to support synthesized results, which helps site owners understand eligibility and display mechanics; see Google Search Central’s guidance on AI features. ChatGPT Search shows inline citations when it goes to the web, per OpenAI’s Help Center article on ChatGPT Search citations (2024). Perplexity emphasizes citations in every answer as part of its trust model, which it describes in Perplexity’s Publishers Program post.

If you’re wondering why certain brands appear in model answers more often than others, we summarized common selection dynamics and entity signals in our explainer on why certain brands get mentioned by ChatGPT.

The reasoning stack in modern LLMs

Think of answer engines as debate moderators. They gather viewpoints, weigh evidence, discard shaky claims, and settle on a position they can support with links. Four reasoning capabilities matter most for GEO:

- Self-consistency: sampling multiple reasoning paths and selecting the answer that is most consistent across them. This approach improves reliability across tasks, as shown by Wang et al. in Self-Consistency Improves Chain of Thought Reasoning in LLMs (2022).

- Retrieval/RAG: conditioning generation on retrieved documents so outputs are grounded in external evidence. Lewis et al. introduced Retrieval-Augmented Generation (RAG) (2020), which remains foundational in modern browsing and retrieval pipelines.

- Tool-use: orchestrating external tools—browsers, calculators, data stores—to check facts or fetch additional context before answering.

- o3-style multi-step planning: models trained to “think longer before responding” and plan tool usage and verification steps tend to favor multi-source corroboration and explicit evidence, as described in OpenAI’s o3-mini overview (2025).

These behaviors shape which pages get retrieved, which claims get quoted, and which brands get named.

How reasoning changes the GEO levers

Reasoning upgrades don’t change your audience’s questions—but they change what the engines need from your pages to confidently cite you.

- Content structure and parsability. Short, verifiable statements; clear headings; Q&A blocks; and tables make it easy to extract precise passages for synthesis. Engines that retrieve passages do better with modular, scannable sections.

- Schema and structured data. Add schema.org types (Article, Product, FAQ, HowTo, Organization, Person) with canonical identifiers. For GEO, schema is like a nutrition label—explicit facts in predictable fields help disambiguate entities and align claims with citations.

- Entity clarity. Use consistent names for your brand, people, and products. Maintain canonical entity pages and cross-links to authoritative profiles to reduce ambiguity.

- Evidence and outbound citations. When your page cites primary sources, it gives answer engines a clean provenance chain to validate your claims against. Self-consistency and multi-step planners prefer claims that are easy to corroborate.

- Freshness and update cadence. Retrieval-heavy systems reward current, stable information. Maintain update notes and visible datelines so engines—and users—can see recency.

A compact matrix: Reasoning mode → practical GEO moves

| Reasoning mode | What the engine tries to do | What helps your brand get cited |

|---|---|---|

| Self-consistency | Compare multiple internal reasoning paths and converge on the most coherent answer | Clear, unambiguous claims; consistent terminology; explicit definitions; on-page citations to authoritative sources |

| Retrieval/RAG | Ground responses in external documents and passages | Semantic headings; FAQs; tables; updated stats with dates; schema to disambiguate entities; stable canonical URLs |

| Tool-use | Call browsers/calculators/APIs to verify and fetch | Evidence blocks with links; step-by-step explanations; measurable data; machine-readable snippets (e.g., JSON-LD) |

| o3-style multi-step planning | Plan tasks, cross-check sources, and reconcile conflicts | Multi-source corroboration on-page; links to primary data; author bios and credentials; coverage of edge cases and exceptions |

Measurement and monitoring across engines

What gets measured gets improved. Track inclusion where answers actually form, not only on traditional SERPs.

Start with inclusion and citation presence. For ChatGPT Search, look for inline citations and the Sources sidebar behavior described in the Help Center. For AI Overviews, monitor when your pages appear as linked sources and which passages get pulled. Perplexity exposes citations in-line, making it easier to audit.

Add sentiment and accuracy checks. Are brand mentions positive, neutral, or negative? Are product descriptions precise? Keep a log with examples and date stamps. For a concrete view of cross-engine tracking, here’s a sample AI Visibility Report tracking cross-engine citations across multiple queries.

Compare AI answers to classic SEO metrics. Watch impressions and clicks in Search Console for AI-related surfaces (where available) alongside your standard SERP positions. Then contrast with AI answer inclusion to see gaps between ranking and synthesis.

When you focus specifically on Google’s AI Overviews, you may want operational tips and tooling comparisons; we compiled practical considerations in our guide to AIO tracking tools and workflow choices.

Practical example (with disclosure): monitoring cross-engine citations and sentiment

Disclosure: Geneo is our product. When teams need a consolidated view of whether and how they’re cited across ChatGPT Search, Perplexity, and Google’s AI features, Geneo can be used to:

- capture inclusion events (citations, links, named mentions) by query and engine,

- classify sentiment in answers mentioning your brand, and

- compare historical snapshots to see how updates affected inclusion.

This kind of neutral monitoring supports GEO experiments without guessing at hidden heuristics. It also helps you spot entity confusion early and prioritize fixes.

Risks, safeguards, and ethical guardrails

- Overfitting to one engine. Formats that work in one UI can underperform elsewhere. Keep your content robust across engines and devices.

- Evidence hygiene. Avoid speculating about undisclosed ranking or citation rules. Anchor claims to primary sources and explain your methods.

- YMYL discipline. In health, finance, or legal contexts, add expert review and conservative language. Link to recognized authorities.

- UI vs. API differences. Some APIs don’t expose citations the way UIs do. Don’t assume parity when you’re measuring.

A working playbook: steps you can run this quarter

- Define your entity fundamentals. Ship or update Organization, Person, and Product schema with consistent names, sameAs links, and canonical IDs. Align bios and product specs on-site and on major profiles.

- Refactor one core topic hub for parsability. Add a top-level summary, Q&A blocks for high-intent questions, and a compact table of the most referenced facts (with dates). Keep sections linkable.

- Bind every key claim to evidence. Add 1–2 authoritative outbound references per section. Prefer primary data or official documentation. You’ll make verification easier for self-consistency and multi-step planners.

- Add freshness signals. Include visible updated-on notes and a short changelog. Update stale stats and link to the underlying data set or methods page.

- Run cross-engine measurements. For your top 10 queries, capture whether you’re cited in AI Overviews, ChatGPT Search, and Perplexity. Note sentiment and any inaccuracies. Repeat monthly to see movement.

- Close entity gaps. Where engines misname your products or attribute competitors’ features to you, tighten naming, add disambiguation lines on-page, and link to canonical references.

- Plan an experiment per engine. For Google’s AI Overviews, test adding an FAQ and a dated methods section; for ChatGPT Search, add a concise “evidence” box with primary sources; for Perplexity, add a table that summarizes the answer-ready facts.

Further reading and next steps

- Definitions and scope: Search Engine Land offers a clear primer in “What is generative engine optimization (GEO)?”.

- Platform behavior: Google summarizes how AI features select and display links in Search Central’s guidance; ChatGPT Search explains inline citations in OpenAI’s Help Center; Perplexity discusses its citation-first approach in its Publishers Program post.

- Reasoning mechanics: See Wang et al.’s Self-Consistency in LLMs (2022), Lewis et al.’s RAG paper (2020), and OpenAI’s o3-mini overview.

Are you measuring inclusion where it actually happens—in the answers people read? If you need a consolidated view of AI citations and sentiment to run your GEO experiments, you can try Geneo. It supports cross-engine tracking and helps you compare progress over time without over-optimizing for a single platform.