How to Track Multi-Platform AI Awareness: Complete Guide

Actionable guide to measuring AI awareness across ChatGPT, Perplexity, Google AI Overview, and Bing Copilot. Includes workflow, metrics, and practical tools.

When AI answer engines summarize the web, your brand can win or lose attention before anyone clicks. Tracking that “pre‑click” presence across ChatGPT, Perplexity, Google’s AI Overview/AI Mode, and Bing Copilot isn’t guesswork—you can measure it. This guide shows you how to quantify visibility, citations, placement, and sentiment, then turn observations into weekly, repeatable reporting.

What Counts as AI Awareness (and Why It Matters)

Think of AI awareness as your brand’s share of the narrative inside AI answers. It’s upstream of traffic and conversions, but it strongly signals future demand. Practically, you’ll track:

- Citations and source links: Are your pages linked or named as sources inside answers? Visibility-first metrics like citations, placement, and prominence are becoming core KPIs as clicks decline in AI-mediated results. Search Engine Journal discusses this shift in “Pragmatic Approach to AI Search Visibility” (2025).

- Placement and prominence: Where do AI answers appear on the page? If you’re cited, how visible is that citation (inline, hover card, “show all” list)? For example, observers have noted Google testing inline, hover-style citation links in AI Mode, which affects how users notice sources; see Search Engine Roundtable’s report on hover-style links (2025).

- Mention frequency/share of voice: How often your brand is named versus competitors within answers for a representative query set.

- Sentiment polarity and subjectivity: Whether the surrounding text frames your brand positively/negatively and how opinionated it is.

- Query coverage and entity presence: If your brand/product entities are recognized and show up for key intents (branded, category, comparisons).

- Recency/freshness: Whether answers surface current sources and reflect your latest updates.

How the Major Platforms Behave (Field Notes)

A successful measurement program respects each engine’s quirks. Here’s what to watch.

Google AI Overview and AI Mode

- Citation UI is in flux. Tests of inline, hover-style citation links/cards in AI Mode have been reported; log that UI behavior alongside your screenshots so you can judge prominence. For context, Google Search Console currently rolls AI Mode traffic into standard Web performance data without a dedicated AI filter, so you can’t isolate AI Mode or AI Overview impressions natively; see Search Engine Land on AI Mode data in GSC (2025).

- Practical implication: Treat Google data as blended. Use manual snapshots to track AIO presence, whether you’re cited, and where those citations sit within the answer. When you see hover-revealed sources, capture both the visible answer and the opened citation card; Search Engine Roundtable’s hover-link coverage (2025) offers a reference point for what to expect.

Bing Copilot

Microsoft emphasizes transparent, clickable citations directly in responses, often with a “Show all” view listing sources. This design makes counting and validating your link presence more straightforward; Microsoft details these transparency choices in its official notes—see Microsoft’s Transparency Note for Copilot.

Perplexity

Perplexity displays inline, clickable citations and grounds its answers in multiple sources, especially in Deep Research mode. Structured, scannable content with clear claims tends to be favored for sourcing and display. Perplexity’s “Introducing Deep Research” announcement describes this multi‑source grounding.

ChatGPT (Search mode)

OpenAI’s ChatGPT Search surfaces source‑linked results adjacent to answers, which makes it possible to monitor whether your content or third‑party mentions appear as evidence; see OpenAI’s “Introducing ChatGPT Search”. Always log whether you were cited directly, named without a link, or omitted.
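The cited / named / omitted distinction is easier to log consistently with a small helper. This is a hypothetical sketch. `classify_presence` is not part of any platform API. It assumes you have already copied the answer text and the cited URLs out of your capture. Its plain substring match on the brand name will miss misspellings and can match unrelated words that contain the name, so review edge cases by hand.

```python
from urllib.parse import urlparse


def classify_presence(answer_text, cited_urls, brand_name, brand_domain):
    """Classify one captured answer as 'cited', 'named', or 'omitted'.

    cited:   at least one cited URL is on brand_domain or one of its subdomains
    named:   no such link, but the brand name appears in the answer text
    omitted: neither
    """
    domain = brand_domain.lower()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        # Exact domain or a true subdomain; "notacme.com" will not match "acme.com".
        if host == domain or host.endswith("." + domain):
            return "cited"
    # Naive case-insensitive substring match (hypothetical heuristic).
    if brand_name.lower() in answer_text.lower():
        return "named"
    return "omitted"


# Example with a placeholder brand and domain:
# classify_presence("Acme is a popular option.", ["https://www.acme.com/pricing"], "Acme", "acme.com")
```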

A Replicable Weekly Workflow (Manual Starter Program)

Set up a standing weekly audit that any analyst can run in 60–90 minutes.

- Build a query set of 20–30 prompts that represent branded, category, comparison, and informational intents. Keep the text stable week to week.

- For each query, collect answers from four engines: ChatGPT (Search), Perplexity, Google AI (Overviews/AI Mode), and Bing Copilot. Use the same locale and an unpersonalized session when possible.

- Capture full-page screenshots and, if available, the citation panels/cards. Save to a timestamped folder.

- Log whether your brand is mentioned, whether a link to your site appears, citation position/visibility, competitor mentions, and a sentiment score for the surrounding text.

- Review changes over time: mention rate, citation frequency and placement, and sentiment trends. Flag any sharp swings for qualitative review.
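The “flag sharp swings” step can be scripted once you have weekly rates. Here is a minimal sketch, assuming you have already computed a mention or citation rate (0 to 1) per platform per week. The function name `flag_swings` and the 15‑point default threshold are illustrative choices, not an established standard.

```python
def flag_swings(weekly_rates, threshold=0.15):
    """Return (platform, week_index, delta) for each week-over-week change
    whose absolute size meets or exceeds `threshold`.

    weekly_rates maps platform name -> list of weekly rates (0..1), oldest first.
    """
    flags = []
    for platform, rates in weekly_rates.items():
        for i in range(1, len(rates)):
            delta = rates[i] - rates[i - 1]
            if abs(delta) >= threshold:
                # Rounded only for readability in reports.
                flags.append((platform, i, round(delta, 3)))
    return flags


if __name__ == "__main__":
    rates = {
        "Perplexity": [0.40, 0.42, 0.20],
        "Bing Copilot": [0.30, 0.31, 0.33],
    }
    for platform, week, delta in flag_swings(rates):
        print(f"{platform}: week {week} changed by {delta:+.2f}")
```

Flagged rows are prompts for qualitative review, not conclusions. A single flagged week may be noise.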

Below is a compact schema you can copy into a spreadsheet or database.

| timestamp | platform | locale | mode | query | brand_mentioned (Y/N) | brand_linked (Y/N) | citation_position | competitors_mentioned | sentiment_polarity (-1..+1) | subjectivity (0..1) | screenshot_url | notes |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 2025-12-01 09:00 | Google | US-en | AI Mode | best [product] | Y | Y | inline hover card, first paragraph | A, B | +0.3 | 0.4 | https://... | hover reveals source card |
| 2025-12-01 09:05 | Perplexity | US-en | default | what is [brand] | Y | Y | inline citation, top | A | +0.1 | 0.2 | https://... | deep research off |
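If you log to CSV rather than a spreadsheet, a typed record helps keep the columns consistent week to week. Below is a minimal sketch using only the Python standard library. `AuditRow` and `append_row` are hypothetical names. The fields follow the schema above, with the answer-mode column named `mode`. Booleans are written back as Y/N to match the spreadsheet convention.

```python
import csv
import os
from dataclasses import asdict, dataclass, fields


@dataclass
class AuditRow:
    """One row of the weekly audit log (hypothetical structure)."""
    timestamp: str              # e.g. "2025-12-01 09:00"
    platform: str               # e.g. "Perplexity"
    locale: str                 # e.g. "US-en"
    mode: str                   # e.g. "AI Mode", "default"
    query: str
    brand_mentioned: bool
    brand_linked: bool
    citation_position: str
    competitors_mentioned: str  # comma-separated competitor names
    sentiment_polarity: float   # -1.0 .. +1.0
    subjectivity: float         # 0.0 .. 1.0
    screenshot_url: str
    notes: str = ""

    def __post_init__(self):
        # Reject scores outside the rubric's ranges before they reach the log.
        if not -1.0 <= self.sentiment_polarity <= 1.0:
            raise ValueError("sentiment_polarity must be in [-1, 1]")
        if not 0.0 <= self.subjectivity <= 1.0:
            raise ValueError("subjectivity must be in [0, 1]")


def append_row(path, row):
    """Append one AuditRow to a CSV file, writing the header if the file is new."""
    names = [f.name for f in fields(AuditRow)]
    is_new = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=names)
        if is_new:
            writer.writeheader()
        record = asdict(row)
        # Store booleans as Y/N to match the spreadsheet convention.
        for key in ("brand_mentioned", "brand_linked"):
            record[key] = "Y" if record[key] else "N"
        writer.writerow(record)
```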

Quick checklist to keep you honest:

- Use the same time window each week.

- Record the answer mode (e.g., ChatGPT Search vs non‑Search; AIO vs standard SERP).

- Store raw answer text alongside screenshots for auditability.
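Storing raw text with a content hash makes later audits simpler. Anyone can recompute the hash and confirm the text was not edited after capture. The sketch below assumes you paste or export the answer text yourself. The folder layout and the `save_evidence` name are illustrative. Screenshots would go into the same folder, either by hand or through your capture tool.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def save_evidence(root, platform, query, answer_text, captured_at=None):
    """Write raw answer text plus a JSON manifest into a timestamped folder.

    Layout (an assumption; adapt as needed):
    root/YYYY-MM-DD/<platform>/<HHMMSS>_<first 8 hex chars of SHA-256>/
    Returns the folder path.
    """
    captured_at = captured_at or datetime.now(timezone.utc)
    digest = hashlib.sha256(answer_text.encode("utf-8")).hexdigest()
    # Replace spaces and punctuation so platform names are safe as folder names.
    safe_platform = "".join(c if c.isalnum() else "_" for c in platform)
    folder = (Path(root) / captured_at.strftime("%Y-%m-%d") / safe_platform
              / f"{captured_at.strftime('%H%M%S')}_{digest[:8]}")
    folder.mkdir(parents=True, exist_ok=True)
    (folder / "answer.txt").write_text(answer_text, encoding="utf-8")
    manifest = {
        "platform": platform,
        "query": query,
        "captured_at": captured_at.isoformat(),
        "sha256": digest,  # lets auditors confirm the text was not edited later
    }
    (folder / "manifest.json").write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    return folder
```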

Scale Up: Semi‑Automation and Low‑Code Options

As your coverage grows, consider semi‑automated tools and workflows:

- Enterprise monitoring: Configure tools that track AI citations and mentions across engines. Set alerts for new citations or sentiment swings, and export weekly summaries for your dashboard.

- Low‑code orchestration: Use platforms like Zapier, n8n, or Activepieces to schedule queries where permitted, upload screenshots to storage, append rows to your sheet, and email a weekly digest to stakeholders.

- Analytics alignment: Keep AI awareness metrics separate from referral traffic in GA4. Pre‑click visibility is its own KPI set.

One caution: many tools rely on sampling and scraping. Always validate trends with manual spot checks and evidence captures.
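One lightweight way to run those spot checks is to compare a tool’s brand‑mentioned flags with your manual captures for the same queries. This is a hypothetical sketch. It assumes both sources can be reduced to a query‑to‑boolean mapping and compares only the queries present in both.

```python
def spot_check_agreement(tool_flags, manual_flags):
    """Share of spot-checked queries where the tool and the manual check agree.

    Both arguments map query -> bool (brand mentioned?). Only queries present
    in both mappings are compared. Returns (agreement_rate, disagreements).
    """
    shared = sorted(set(tool_flags) & set(manual_flags))
    if not shared:
        raise ValueError("no overlapping queries to compare")
    disagreements = [q for q in shared if tool_flags[q] != manual_flags[q]]
    rate = 1 - len(disagreements) / len(shared)
    return rate, disagreements
```

The disagreement list tells you which captures to re-check before you trust a trend the tool reports.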

Sentiment Scoring That Holds Up

You don’t need a PhD to score sentiment, but you do need a rubric that your team can reproduce. A simple, defensible approach:

- Polarity scale: −1.0 (very negative) to +1.0 (very positive). Treat scores from −0.2 to +0.2 as neutral, below −0.2 as negative, and above +0.2 as positive.

- Subjectivity: 0.0 (fully objective) to 1.0 (highly opinionated). Prioritize human review for high‑subjectivity passages.

- Tools and calibration: Lexicon methods like VADER/TextBlob are decent baselines. Sample 10–15 mentions each month, have two reviewers score them independently, and compute inter‑rater agreement (e.g., Cohen’s kappa). Kappa measures agreement on categories, so first bin each polarity score into negative, neutral, or positive using the thresholds above. Iterate on your rubric until agreement is acceptable (around κ ≥ 0.7 is a practical target). For a concise background on lexicon approaches, see the arXiv overview of sentiment lexicons (2024).
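The calibration step can be sketched in a few lines. The first function bins each reviewer’s polarity score with the ±0.2 neutral band. The second computes Cohen’s kappa from the observed agreement and the agreement expected by chance. The function names are illustrative. If you already use scikit-learn, `sklearn.metrics.cohen_kappa_score` computes the same statistic.

```python
from collections import Counter


def to_label(polarity, neutral_band=0.2):
    """Map a polarity score (-1..+1) to a label; the band edges count as neutral."""
    if polarity < -neutral_band:
        return "negative"
    if polarity > neutral_band:
        return "positive"
    return "neutral"


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two raters' categorical labels on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: fraction of items where both raters chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each rater's label frequencies, summed over labels.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    categories = set(counts_a) | set(counts_b)
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if expected == 1:
        return 1.0  # both raters used the same single label for every item
    return (observed - expected) / (1 - expected)
```

Usage: `cohens_kappa([to_label(s) for s in reviewer_a], [to_label(s) for s in reviewer_b])`, where the two lists hold each reviewer’s scores for the same mentions in the same order.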

Data Normalization, Governance, and Evidence

Raw counts are misleading without normalization and governance.

- Normalize for scale: Express “brand cited” as a percentage of total queries per platform. Track share of voice across your query set rather than raw totals.

- Weight by prominence and sentiment: Consider a composite visibility score that combines “presence” with placement and sentiment. For example, award more weight if your link appears in the first sentence and is framed positively.

- Control for mode and locale: Always log engine mode (e.g., Google AI Mode vs standard results) and locale. Don’t pool data across modes or locales without segmenting it.

- Evidence pack: Maintain timestamped screenshots, raw answer text, and citation URLs in a controlled repository. Keep a change log of your query set and any rubric edits. For governance perspective and traceability practices, see IAPP’s “AI Governance in Practice” overview.

- Reporting cadence: Weekly for operations, monthly for strategy. Trendlines tell the story; single‑week swings can be noise.
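The normalization and weighting ideas above can be sketched as three small functions. The first computes a citation rate per (platform, mode, locale) segment so datasets are never mixed. The second computes share of voice from mention counts. The third computes a hypothetical composite score. The values in `PROMINENCE_WEIGHTS`, the position labels, and the 0.5 sentiment multiplier are illustrative assumptions, not an industry formula. Standardize the labels in your citation_position column and tune the weights to your own rubric.

```python
from collections import defaultdict


def citation_rates(rows):
    """Percentage of queries where the brand was linked, per (platform, mode, locale).

    Each row is a dict with at least: platform, mode, locale, brand_linked (bool).
    """
    totals, linked = defaultdict(int), defaultdict(int)
    for r in rows:
        key = (r["platform"], r["mode"], r["locale"])
        totals[key] += 1
        linked[key] += 1 if r["brand_linked"] else 0
    return {k: 100.0 * linked[k] / totals[k] for k in totals}


def share_of_voice(mention_counts, brand):
    """Brand mentions as a share of all tracked-brand mentions across the query set."""
    total = sum(mention_counts.values())
    return mention_counts.get(brand, 0) / total if total else 0.0


# Illustrative prominence weights (assumptions, not a standard).
PROMINENCE_WEIGHTS = {"first_sentence": 1.0, "inline": 0.7, "show_all_list": 0.3, "none": 0.0}


def visibility_score(position, polarity):
    """Hypothetical composite: prominence weight scaled by sentiment.

    Polarity (-1..+1) becomes a 0.5..1.5 multiplier, so negative framing
    discounts and positive framing boosts the prominence weight.
    Unknown positions score 0.
    """
    return PROMINENCE_WEIGHTS.get(position, 0.0) * (1.0 + 0.5 * polarity)
```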

Troubleshooting Common Pitfalls

- Mode variance: If Google AI Overviews appear in some captures and not others, check whether you are mixing AI Mode, standard results that show an AI Overview, and standard results that don’t. Log the mode explicitly and segment your analysis.

- Geography and personalization: AI answers can vary by locale and user context. Standardize with neutral accounts and consistent location settings; document both.

- Paywalls and access: Sources may be cited behind paywalls. Treat “cited but not readable” differently from “not cited.” Capture the URL either way.

- Rate limits and drift: Platforms and tools enforce rate limits; answers also change frequently. Stagger collection, keep your query set tight, and rely on scheduled snapshots.

- False confidence from single platforms: Don’t extrapolate Perplexity wins to Bing Copilot or vice versa. Each engine has distinct sourcing behavior—your logs should reflect that.
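Where scripted or scheduled collection is permitted, staggering can be as simple as planning captures with randomized gaps and interleaving engines. The sketch below only builds a plan; it does not send any queries. The 20–60 second gap bounds are illustrative assumptions, not documented platform limits. `staggered_schedule` is a hypothetical name.

```python
import random
from datetime import datetime, timedelta


def staggered_schedule(queries, platforms, start, min_gap_s=20, max_gap_s=60, seed=None):
    """Plan (time, platform, query) captures with randomized gaps between them.

    Platforms are cycled inside each query, so with two or more platforms
    consecutive captures always go to different engines.
    """
    if min_gap_s > max_gap_s:
        raise ValueError("min_gap_s must not exceed max_gap_s")
    rng = random.Random(seed)  # pass a seed for a reproducible plan
    schedule, t = [], start
    for query in queries:
        for platform in platforms:
            schedule.append((t, platform, query))
            t += timedelta(seconds=rng.uniform(min_gap_s, max_gap_s))
    return schedule


if __name__ == "__main__":
    plan = staggered_schedule(["best [product]", "what is [brand]"],
                              ["Perplexity", "Bing Copilot"],
                              datetime(2025, 12, 1, 9, 0), seed=1)
    for when, platform, query in plan:
        print(when.strftime("%H:%M:%S"), platform, query)
```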

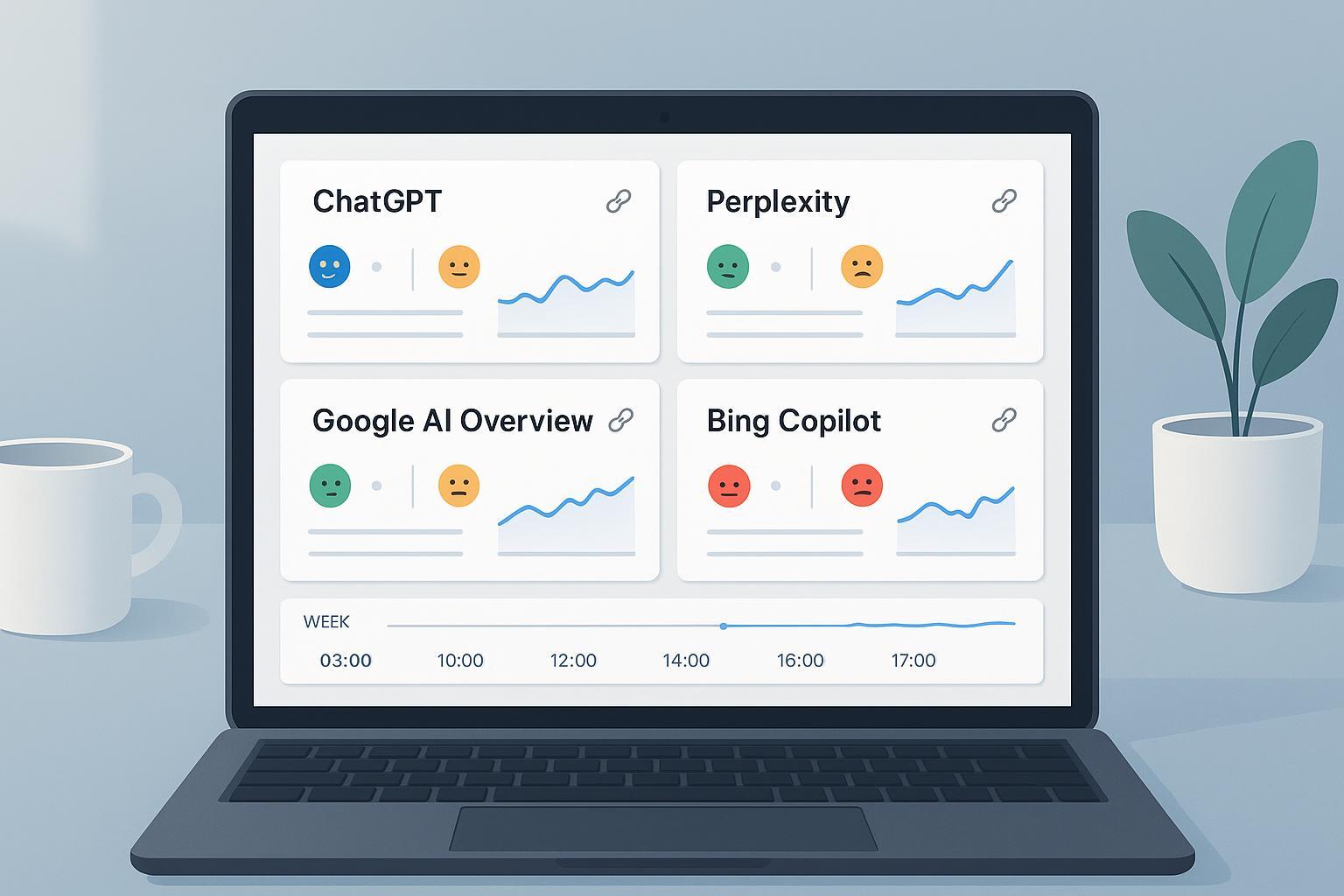

Practical Example: Centralizing Monitoring With Geneo

Disclosure: Geneo is our product.

Once your manual workflow is running smoothly, centralizing it makes scaling easier. With a platform like Geneo, you can configure representative queries across ChatGPT (Search), Perplexity, Google AI Overview/AI Mode, and Bing Copilot; monitor whether your brand is mentioned or cited; capture sentiment trends; and retain historical query records for side‑by‑side comparisons. Use your existing weekly schema as the source of truth, then import or sync into a single view so your team can track mention rate, citation placement, and sentiment trajectory across engines without juggling folders and spreadsheets. Keep your evidence pack (screenshots, raw text) in parallel storage for audits.

Your First 14 Days: An Action Plan

- Days 1–2: Finalize your 20–30 query set and build the logging sheet with the schema above.

- Days 3–4: Run your first cross‑engine audit; capture screenshots and score sentiment. Share a short readout with three observations and one hypothesis for improvement.

- Day 7: Validate any automated components (scheduled captures, storage) and spot‑check tool outputs against manual snapshots.

- Day 14: Report trendlines from two weekly runs. Decide whether to add five more queries or refine sentiment thresholds.

One last thought: if AI summaries are the new front page, your presence inside them is the new billboard. Measure it with the same discipline you’d bring to any performance channel—and build the evidence trail to back your decisions.