How to Build a Generative Engine Optimization (GEO) Monitoring System: Step-by-Step Guide

Learn how to design, implement, and operate a compliant GEO monitoring system to track AI visibility, citations, and sentiment across Google, Perplexity, and ChatGPT.

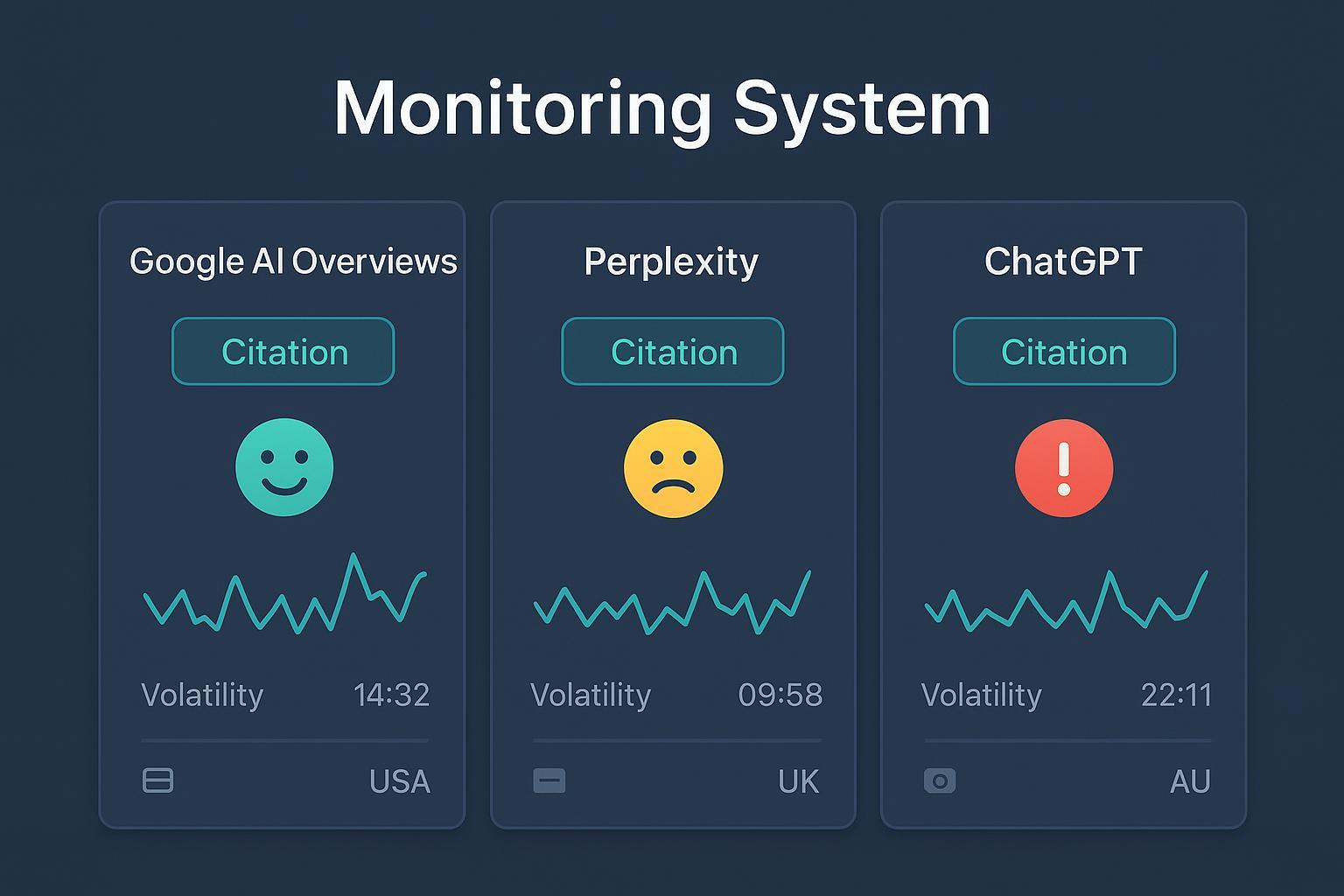

If answer engines are rewriting the front page of the internet, you need a way to see whether your brand shows up—cited, recommended, and represented accurately. This guide walks you through building a compliant GEO monitoring system that tracks visibility, citations, sentiment, and volatility across Google AI Overviews, Perplexity, and ChatGPT, with practical steps you can run today.

We'll treat monitoring as a measurable slice of your broader AI visibility program: evidence-backed observations that inform content, PR, and product marketing.

1) Define scope and your query taxonomy

Start by deciding what—and where—you’ll measure.

- Platforms: Google AI Overviews/AI Mode, Perplexity, and ChatGPT.

- Locales/languages: The regions where you sell and the languages your buyers search in (e.g., English, Spanish). Don't forget device splits (mobile vs. desktop).

- Journeys and roles: Informational, comparative, and transactional queries; buyer roles (practitioner vs. exec).

- Branded/unbranded: Include brand, competitor, and category terms. Favor longer, conversational phrasing that reflects how people actually ask answer engines questions.

- Sampling frame: Plan stratified samples by geography, user state (logged‑in/logged‑out), device, and time window. Capture stratum metadata for every observation.

Why this upfront rigor? Without clear strata and query clusters, you can’t diagnose personalization drift or model updates when results change.
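One way to make the taxonomy and sampling frame concrete is to cross every query with every stratum up front, so each planned observation carries its stratum metadata. The sketch below is a minimal illustration, not a prescribed design: the dimension values, query texts, and the brand "acme" are hypothetical placeholders, and a time-window dimension (e.g., an ISO week label) could be added the same way.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical stratum dimensions; replace with your own markets, devices, and states.
REGIONS = ["us", "uk", "es"]
DEVICES = ["mobile", "desktop"]
USER_STATES = ["logged_out", "logged_in"]


@dataclass(frozen=True)
class QuerySpec:
    query_id: str
    text: str
    intent_type: str   # informational | comparative | transactional
    cluster: str
    buyer_role: str    # e.g. practitioner | exec
    branded: bool


@dataclass(frozen=True)
class Stratum:
    region: str
    device: str
    user_state: str


def build_sampling_frame(queries, regions=REGIONS, devices=DEVICES, user_states=USER_STATES):
    """Pair every query with every stratum so stratum metadata travels with each observation."""
    strata = [Stratum(r, d, u) for r, d, u in product(regions, devices, user_states)]
    return [(q, s) for q in queries for s in strata]


# Hypothetical example queries: one unbranded, one branded.
queries = [
    QuerySpec("q1", "best crm for small nonprofits", "comparative", "crm", "practitioner", False),
    QuerySpec("q2", "is acme crm worth it for a 20-person team", "transactional", "crm", "exec", True),
]
frame = build_sampling_frame(queries)
print(len(frame))  # 2 queries x 3 regions x 2 devices x 2 user states = 24
```

The full cross product grows quickly; in practice you would sample from it rather than observe every cell each week.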

2) ToS‑safe data collection by platform

A reliable GEO program respects platform policies. Here’s the compliant path for each.

Google AI Overviews

Google's AI Overviews (AIO) appear selectively when a synthesized summary adds value. Public guidance explains that AI Overviews draw on ranking systems, the Knowledge Graph, and Gemini capabilities, and that Google is expanding them with AI Mode experiences. See Google's own updates in Generative AI in Search (May 2024) and Expanding AI Overviews & AI Mode (Mar 2025). Implementation notes for site owners are documented in Google Search Central's AI features guidance.

Monitoring reality: Search Console does not provide a dedicated AIO filter; AIO impressions and clicks are counted within the Web search type alongside regular results. Industry coverage confirms this, including Search Engine Roundtable's 2025 note on "no AIO filter".

What to do, safely:

- Manual/authorized checks: Run scheduled queries using neutral accounts across your target regions. Record whether AIO appears, and capture screenshots with timestamps.

- Correlate with GSC: Investigate shifts around position‑1 queries with low CTR, and pair them with your manual observations to infer AIO presence. Keep in mind that GSC reports averaged positions and omits some anonymized queries, so this is a signal, not proof.

- Third‑party trackers: If you consider vendors, validate legal posture and ensure they respect Google policies before procurement.
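The GSC correlation step can be sketched as a simple filter: find queries that rank near position 1 but earn well below an expected CTR, then queue them for a manual AIO check. This is a minimal sketch under stated assumptions: the rows are dicts with hypothetical keys `query`, `clicks`, `impressions`, and `position` (e.g., parsed from a Search Console performance export), and the thresholds are illustrative values, not Google benchmarks.

```python
def flag_possible_aio_queries(rows, max_position=1.5, expected_ctr=0.25, min_impressions=100):
    """Return near-position-1 queries whose CTR falls below an expected baseline.

    The default thresholds are illustrative assumptions; calibrate them on your own data.
    """
    flagged = []
    for row in rows:
        impressions = row["impressions"]
        # Skip low-volume queries (noisy CTR) and queries not ranking near the top.
        if impressions < min_impressions or row["position"] > max_position:
            continue
        ctr = row["clicks"] / impressions
        if ctr < expected_ctr:
            flagged.append({"query": row["query"], "ctr": round(ctr, 3), "position": row["position"]})
    # Lowest CTR first: the strongest candidates for a manual AIO check.
    return sorted(flagged, key=lambda r: r["ctr"])


rows = [
    {"query": "what is geo", "clicks": 40, "impressions": 1000, "position": 1.2},
    {"query": "acme pricing", "clicks": 450, "impressions": 1000, "position": 1.1},
    {"query": "geo vs seo", "clicks": 5, "impressions": 50, "position": 1.0},
    {"query": "geo tools", "clicks": 10, "impressions": 500, "position": 4.3},
    {"query": "ai overview tracking", "clicks": 90, "impressions": 600, "position": 1.4},
]
candidates = flag_possible_aio_queries(rows)
```

A flagged query only tells you where to look; the manual, timestamped observation is what confirms whether an AI Overview actually appeared.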

Perplexity

Of the three platforms, Perplexity is the most programmatically accessible for compliant monitoring. Use its official APIs/SDKs to fetch answers and citation arrays, and tag each response with its model version.

- Start with the Search API quickstart or SDK search guide to ingest structured payloads (titles, URLs, snippets, and citation order).

- For conversational answers, the Chat Completions SDK returns responses with model identifiers and citations.

- Respect the rate limits and usage tiers described in Perplexity's rate limits documentation; implement queues and backoff.
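Backoff is the piece most often skipped. The sketch below shows exponential backoff with full jitter around any API call; it does not implement the queue itself. `RateLimited` is a hypothetical exception standing in for however your client surfaces an HTTP 429, and the delay values are assumptions to tune against your usage tier.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical exception your API client raises on an HTTP 429 response."""


def call_with_backoff(fn, max_retries=5, base_delay=1.0, max_delay=60.0, sleep=time.sleep):
    """Call fn(), retrying on RateLimited with exponential backoff and full jitter."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimited:
            if attempt == max_retries:
                raise  # Out of retries: surface the error to the job queue.
            # The delay ceiling doubles each attempt (capped); a random fraction
            # of it spreads out retries from concurrent workers.
            delay = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, delay))
```

Passing `sleep` as a parameter keeps the function testable without real waiting.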

Store response IDs, text hashes, and full citation lists. That evidence trail supports audits and reproducibility.
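Here is a minimal sketch of turning a chat-completions-style response into the evidence trail described above: a snapshot with a text hash and the raw payload, plus one citation row per URL in order. It assumes the payload exposes `id`, `model`, `choices[0]["message"]["content"]`, and a `citations` list of URLs; verify those field names against Perplexity's current API reference before relying on them.

```python
import hashlib
import json
from datetime import datetime, timezone
from urllib.parse import urlparse


def normalize_perplexity_response(query_id, payload):
    """Return (snapshot, citations) records from one response payload (field names assumed)."""
    text = payload["choices"][0]["message"]["content"]
    snapshot = {
        "answer_id": payload["id"],
        "query_id": query_id,
        "platform_id": "perplexity",
        "model_version": payload.get("model"),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # SHA-256 of the answer text lets later runs detect changes cheaply.
        "hash": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        # Keep the full payload as evidence for audits and reproducibility.
        "raw": json.dumps(payload, sort_keys=True),
    }
    citations = [
        {
            "answer_id": payload["id"],
            "url": url,
            "domain": urlparse(url).netloc.lower().removeprefix("www."),
            "position": i,  # 1-based citation order as returned
        }
        for i, url in enumerate(payload.get("citations", []), start=1)
    ]
    return snapshot, citations
```

`str.removeprefix` requires Python 3.9 or later.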

ChatGPT

OpenAI does not offer a public browsing API that replicates what users see in consumer ChatGPT search. Its policies require that any retrieval or browsing flow use authorized integrations and respect third‑party ToS. Review OpenAI's App Developer Guidelines (2025).

What’s acceptable:

- Observe ChatGPT recommendations through permitted enterprise contexts or approved third‑party platforms.

- Capture screenshots where consent and policy allow; avoid any automation that scrapes the ChatGPT web interface (chatgpt.com, formerly chat.openai.com).
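Manual observations (for ChatGPT here, and equally for the Google AIO spot checks) still benefit from a structured record. This sketch refuses any collection method that is not on an allowlist and requires context notes before it hashes the screenshot. The allowlist values and field names are hypothetical; set them to whatever your legal and policy review actually approved.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical allowlist of collection channels approved by your policy review.
APPROVED_METHODS = {"manual_check", "enterprise_workspace", "approved_vendor"}


@dataclass
class ManualObservation:
    query_id: str
    platform_id: str          # e.g. "chatgpt" or "google_aio"
    collection_method: str
    context_notes: str        # account state, region, device, anything unusual
    screenshot_sha256: str
    observed_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def record_manual_observation(query_id, platform_id, method, notes, screenshot_bytes):
    """Build an evidence record, rejecting unapproved methods or missing context."""
    if method not in APPROVED_METHODS:
        raise ValueError(f"collection method {method!r} is not approved")
    if not notes.strip():
        raise ValueError("context notes are required for manual observations")
    # Hash the image bytes so the stored screenshot can be verified later.
    digest = hashlib.sha256(screenshot_bytes).hexdigest()
    return ManualObservation(query_id, platform_id, method, notes, digest)
```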

3) Normalize and store: a minimal schema

Your monitoring is only as good as your data model. Normalize observations across platforms into a consistent structure.

| Entity | Key fields |
|---|---|
| Query | query_id; text; intent_type; cluster; buyer_role; locale; language |
| Platform | platform_id (google_aio, perplexity, chatgpt); model_version; account_state; region; device |
| Snapshot | answer_id; query_id; platform_id; timestamp; evidence_uri (screenshot/log); hash; answer_type |
| Citation | citation_id; answer_id; domain; url; position; inclusion_type (explicit/generic/exclusion) |
| SentimentEvent | event_id; answer_id; brand_entity; polarity; subjectivity; confidence; rationale_snippet |

Keep screenshots and hashed text per snapshot; log model_version and stratum metadata (geo, device, user state) to diagnose variance.
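As one possible starting point, the schema above maps directly onto relational tables. This sketch uses SQLite purely for illustration; the table names are pluralized (an assumption, to avoid clashing with SQL keywords), and the CHECK constraints simply encode the allowed values listed in the table.

```python
import sqlite3

SCHEMA = """
CREATE TABLE queries (
    query_id     TEXT PRIMARY KEY,
    text         TEXT NOT NULL,
    intent_type  TEXT,
    cluster      TEXT,
    buyer_role   TEXT,
    locale       TEXT,
    language     TEXT
);
CREATE TABLE platforms (
    platform_id   TEXT NOT NULL CHECK (platform_id IN ('google_aio', 'perplexity', 'chatgpt')),
    model_version TEXT,
    account_state TEXT,
    region        TEXT,
    device        TEXT
);
CREATE TABLE snapshots (
    answer_id    TEXT PRIMARY KEY,
    query_id     TEXT NOT NULL REFERENCES queries(query_id),
    platform_id  TEXT NOT NULL,   -- not a foreign key: platforms has one row per stratum
    timestamp    TEXT NOT NULL,   -- ISO 8601, UTC
    evidence_uri TEXT,            -- screenshot or request-log location
    hash         TEXT NOT NULL,   -- SHA-256 of the answer text
    answer_type  TEXT
);
CREATE TABLE citations (
    citation_id    INTEGER PRIMARY KEY,
    answer_id      TEXT NOT NULL REFERENCES snapshots(answer_id),
    domain         TEXT NOT NULL,
    url            TEXT NOT NULL,
    position       INTEGER,
    inclusion_type TEXT CHECK (inclusion_type IN ('explicit', 'generic', 'exclusion'))
);
CREATE TABLE sentiment_events (
    event_id          INTEGER PRIMARY KEY,
    answer_id         TEXT NOT NULL REFERENCES snapshots(answer_id),
    brand_entity      TEXT NOT NULL,
    polarity          TEXT,
    subjectivity      REAL,
    confidence        REAL,
    rationale_snippet TEXT
);
"""


def open_store(path=":memory:"):
    """Open a SQLite database, enforce foreign keys, and create the tables."""
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FK checks off by default
    conn.executescript(SCHEMA)
    return conn
```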

4) Compute KPIs that matter

Measure what changes behavior—content updates, PR actions, and outreach.

- AI Share of Voice: Share of citations by domain across your monitored query set; compute per platform and aggregate.

- Coverage Rate: Percent of queries where your brand is cited or recommended; break down by intent cluster and locale.

- Recommendation Mix: Share of brand mentions that are explicit endorsements, generic inclusions, or exclusions.

- Volatility Index: Rolling variance of appearance and citation order; track week‑over‑week rank deltas.

- Sentiment Polarity and Subjectivity: Polarity (positive/negative/neutral), subjectivity, and classifier confidence for each brand mention; alert on flips and spikes.

- Freshness/Recency: Time since last change in answer text or citation set; note model_version shifts.

- Competitive Overlap: Jaccard overlap of citation domains with competitors; flag displacement events.
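Three of these KPIs reduce to simple set and count arithmetic over normalized citation rows. In this minimal sketch, citations are `(query_id, domain)` pairs for one platform (or the aggregate). Coverage here counts citations only; recommendations would need the `inclusion_type` field as well. For competitive overlap, comparing the domains cited alongside your brand with those cited alongside a competitor is one reasonable interpretation, not the only one. The domains in the usage example are placeholders.

```python
from collections import Counter


def share_of_voice(citations):
    """Fraction of all citations that point at each domain."""
    counts = Counter(domain for _, domain in citations)
    total = sum(counts.values())
    return {d: n / total for d, n in counts.items()} if total else {}


def coverage_rate(citations, brand_domain, query_ids):
    """Percent of monitored queries where the brand domain is cited at least once."""
    covered = {q for q, d in citations if d == brand_domain}
    return 100 * len(covered & set(query_ids)) / len(query_ids) if query_ids else 0.0


def jaccard(domains_a, domains_b):
    """Jaccard overlap |A ∩ B| / |A ∪ B| of two citation-domain sets."""
    a, b = set(domains_a), set(domains_b)
    return len(a & b) / len(a | b) if a | b else 0.0


cits = [("q1", "acme.com"), ("q1", "review.io"), ("q2", "rival.com"),
        ("q3", "acme.com"), ("q3", "rival.com")]
sov = share_of_voice(cits)                                    # acme.com: 0.4, review.io: 0.2, rival.com: 0.4
cov = coverage_rate(cits, "acme.com", ["q1", "q2", "q3", "q4"])  # 50.0
```

Computing these per platform before aggregating keeps one engine's volume from masking another's losses.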

For framework depth and dashboard mapping, see AI search KPIs.
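The Volatility Index can be sketched for a single domain on a single query from its weekly citation position (`None` when it was not cited). This is a minimal illustration under assumptions: appearance is encoded as 1/0, the window defaults to four weeks, and rank deltas are only computed between consecutive weeks where the domain appeared both times. A positive delta means the citation moved further down the list.

```python
from statistics import pvariance


def rolling_variance(values, window=4):
    """Population variance over each trailing window of `window` observations."""
    return [pvariance(values[i - window + 1 : i + 1]) for i in range(window - 1, len(values))]


def volatility_index(positions, window=4):
    """Return (rolling variance of appearance, week-over-week rank deltas)."""
    appearance = [0 if p is None else 1 for p in positions]
    deltas = [
        cur - prev  # positive = cited lower than the week before
        for prev, cur in zip(positions, positions[1:])
        if prev is not None and cur is not None
    ]
    return rolling_variance(appearance, window), deltas


variance, deltas = volatility_index([1, 1, None, 2, 3, None])
```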

5) QA, reproducibility, and governance

Think of QA as your guardrails. Without evidence trails, you’re guessing.

- Evidence packs: Store screenshots, answer text hashes, full citation arrays, and request logs per snapshot.

- Stratified sampling: Tag observations by geography, device, user state, and time window. Use deterministic selection to keep weekly samples reproducible.

- Drift monitoring: Track model_version and hash changes; investigate volatility spikes.

- Red‑team prompts: Probe adversarial phrasings to see boundary behavior; document what you find and mitigate through content improvements or clear, published factual statements.

- Policy hygiene: Never retain PII tied to account states; restrict access and define retention windows.
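Two of these guardrails, deterministic stratified sampling and drift monitoring, are easy to get subtly wrong, so here is a minimal sketch of each. Sampling sorts each stratum's queries by a salted SHA-256 hash of the week label, stratum, and query ID, so the selection looks random yet reproduces exactly on rerun. The drift check compares two snapshots of the same query, platform, and stratum; the snapshot keys (`model_version`, `hash`, `citations` as an ordered URL list) and the salt value are assumptions.

```python
import hashlib


def _sample_key(salt, week, label, qid):
    # Stable across runs and machines, unlike Python's built-in hash().
    return hashlib.sha256(f"{salt}|{week}|{label}|{qid}".encode("utf-8")).hexdigest()


def _pick(qids, k, salt, week, label):
    return sorted(qids, key=lambda q: _sample_key(salt, week, label, q))[:k]


def stratified_weekly_sample(strata, week, k_per_stratum, salt="geo-sample"):
    """Deterministically pick up to k query IDs per stratum for a week label like "2025-W14".

    strata: dict mapping a stratum label (e.g. "us|mobile|logged_out") to query IDs.
    """
    return {label: _pick(qids, k_per_stratum, salt, week, label) for label, qids in strata.items()}


def detect_drift(prev, curr):
    """List which parts of a snapshot changed between two runs."""
    changes = []
    if prev["model_version"] != curr["model_version"]:
        changes.append("model_version")
    if prev["hash"] != curr["hash"]:
        changes.append("answer_text")
    if prev["citations"] != curr["citations"]:  # ordered, so reordering counts
        changes.append("citations")
    return changes
```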

6) Practical workflow (example)

Disclosure: Geneo is our product.

Here's a repeatable workflow for 200 priority queries, run on the weekly sampling cadence used throughout this guide:

- Define your taxonomy by intent and buyer role; include branded/unbranded and regional variants.

- Ingest Perplexity results via its official APIs, capturing answer text, citation arrays, and model versions. Respect rate limits with queued jobs and backoff.

- Sample Google AIO weekly using neutral accounts in three regions; record AIO appearance and citations with timestamps and screenshots.

- Observe ChatGPT recommendations only through authorized integrations; capture permitted screenshots with context notes.

- Normalize all observations into the schema above; attach evidence URIs and hash each answer payload.

- Compute AI Share of Voice, coverage, volatility, sentiment, and competitive overlap. Segment by platform, cluster, and locale.

- Build dashboards and set alerts for citation loss, negative sentiment spikes, and sudden volatility jumps. Retain evidence packs for audits.

Could you do this by hand in spreadsheets? Sure—for a few queries. But once you’re tracking hundreds across regions and devices, you’ll want a system.

7) Reporting and alerting in practice

Operationalize your insights so teams can act.

- Weekly deltas: Show changes in coverage, SoV, and sentiment by platform and cluster.

- Thresholds: Alert when coverage drops below target or sentiment flips negative for high‑value queries.

- Stakeholder views: Executives need high‑level SoV and risk; practitioners need query‑level evidence and next actions.

- Change notes: Annotate dashboards with model updates and major content releases to explain movements.
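Weekly deltas and threshold alerts can start as a few lines of code before you adopt a dashboard tool. In this sketch, each row summarizes one platform and cluster for the current week. The field names, the 40% coverage target, and the convention that sentiment is a mean polarity score in [-1, 1] are all hypothetical; a "flip" here means last week's score was non-negative and this week's is negative.

```python
def weekly_deltas(prev, curr):
    """Week-over-week change for each metric present in both weeks."""
    return {k: curr[k] - prev[k] for k in curr.keys() & prev.keys()}


def check_alerts(rows, coverage_target=40.0):
    """Return alert messages for coverage below target or negative sentiment flips.

    rows: dicts with platform, cluster, coverage (%), prev_sentiment, sentiment, high_value.
    """
    alerts = []
    for r in rows:
        where = f"{r['platform']}/{r['cluster']}"
        if r["coverage"] < coverage_target:
            alerts.append(f"coverage below target on {where}: {r['coverage']:.1f}%")
        # Only high-value clusters page someone on a sentiment flip.
        if r["high_value"] and r["prev_sentiment"] >= 0 > r["sentiment"]:
            alerts.append(f"sentiment flipped negative on {where}")
    return alerts


rows = [
    {"platform": "perplexity", "cluster": "crm", "coverage": 35.0,
     "prev_sentiment": 0.2, "sentiment": -0.1, "high_value": True},
    {"platform": "google_aio", "cluster": "crm", "coverage": 55.0,
     "prev_sentiment": 0.1, "sentiment": 0.3, "high_value": True},
]
alerts = check_alerts(rows)
```

Pairing each alert with the evidence pack for the affected snapshots gives practitioners the query-level context they need to act.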

References and policy anchors

For definitions and context on GEO, see industry coverage such as Search Engine Land’s primer. Platform behaviors and site‑owner guidance appear in Google’s own communications: Generative AI in Search (May 2024), Expanding AI Overviews & AI Mode (Mar 2025), and Search Central documentation on AI features. The absence of a Search Console AIO filter is discussed in Search Engine Roundtable’s 2025 report. For Perplexity ingestion, use the official Search API quickstart and Chat Completions SDK. For policy constraints around ChatGPT browsing/automation, review OpenAI’s App Developer Guidelines.

Next steps

- Choose 100–200 high‑impact queries and design your strata (geo, device, user state).

- Stand up API ingestion for Perplexity and a manual sampling routine for Google AIO; codify evidence retention.

- Build your first dashboard and alerts; schedule weekly deltas and QA checks.

- If you manage multiple brands or clients, consider an agency dashboard for multi‑client monitoring to streamline cadence and reporting.

Let’s make your GEO monitoring not just visible—but verifiable.