GEO vs AEO vs SEO: an agency beginner’s guide

Practical, beginner‑friendly guide to GEO vs AEO vs SEO for agencies — clear definitions, a 1‑week pilot, llms.txt sample, and how to measure citation share.

If you pitch, report, or execute search for clients in 2026, you’re juggling three “surfaces” at once: classic SEO (the blue-link SERP), AEO (AI answers baked into search), and GEO (answers generated inside standalone LLMs). Most agencies I talk to are doing bits of all three already—they just don’t have a clean way to explain it to clients.

So that’s what this is: a plain taxonomy you can reuse, plus a one‑week pilot you can actually run without turning it into a six‑week “initiative.” The pilot ends with one metric you can show on a one‑pager—share of answer (aka citation share)—so you’re not hand‑waving about “AI visibility.”

And yes, there’s overlap. In practice, SEO/AEO/GEO feel less like three separate disciplines and more like three angles on the same job: getting your client referenced when the answer gets written.

Taxonomy at a glance: GEO vs AEO vs SEO

Here’s the cleanest way I’ve found to explain it without melting someone’s brain.

SEO is still the “rank and earn the click” work: make pages discoverable, credible, and technically sound so they show up in web results.

AEO is what happens when the interface shifts from “ten links” to “here’s the answer.” You’re shaping your pages so search‑integrated AI systems can confidently lift an accurate response (and, if you’re lucky, surface your brand alongside it).

GEO is the next layer out: making your brand and content reference‑worthy inside LLMs like ChatGPT and Perplexity, where the user may never see a traditional SERP at all.

A few practical notes before the table:

SEO foundations (technical health, EEAT, internal links) still do a lot of the heavy lifting across all three.

For AEO tactics, I like how CXL’s updated AEO guide (2026) and Semrush’s AEO vs SEO breakdown (2025) draw the line between “ranking” and “answer extraction.”

For GEO, these primers are good starting points: Walker Sands’ GEO explainer (2025) and GoFish Digital’s GEO guide.

| Dimension | SEO | AEO | GEO |
|---|---|---|---|
| Primary surface | Google/Bing SERPs | Google AI Overviews, answer/snippet panels | ChatGPT, Perplexity, other LLM UIs |
| Primary goal | Rankings and clicks | Inclusion in AI answers visible in search | Mentions/citations inside generated answers |
| Key tactics | Technical SEO, EEAT, content & links | Answer‑first content, FAQ/HowTo schema | Entity clarity, fact‑dense pages, citation monitoring |
| Measurement | Rankings, traffic, CTR | Answer/snippet presence, assist clicks | Citation frequency, “share of answer” |

Why agencies should care now

This is the part clients feel before they can articulate it: the brand either shows up in the answer, or it doesn’t. And when it doesn’t, your beautiful #2 ranking can still look like a loss in the Monday dashboard.

AI Overviews can grab attention even when they link out, and LLMs like ChatGPT or Perplexity can summarize a whole category without sending much traffic back. So the job shifts a bit: you’re not only chasing clicks—you’re trying to become the source the answer engine trusts enough to reference.

One grounding detail that matters for expectation-setting: Google has been pretty explicit that there isn’t a magical “AIO-only” playbook. It’s largely helpful content + normal technical eligibility, plus understanding how AI features pull from and link to the web. The canonical references are Google’s AI features guidance (2025) and the Google AI Mode product posts (2025).

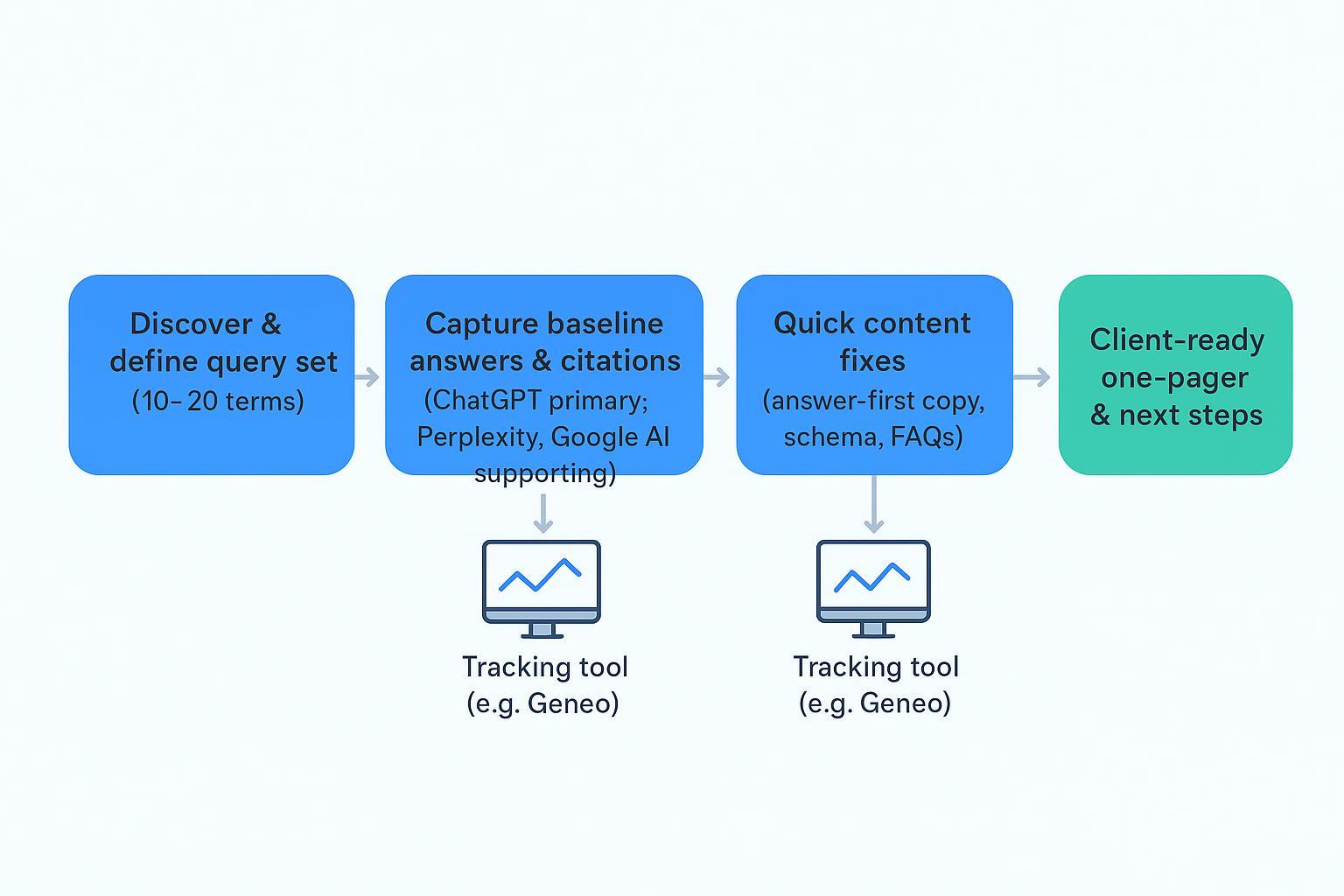

A 1‑week pilot workflow you can run this week

The point of a one-week pilot isn’t perfection—it’s momentum. You’re trying to get a baseline, make two or three obvious fixes, and then re-check the same questions so you can show movement.

Step 1 — Discover a focused query set. Pick 10–20 questions your client genuinely cares about (mix non‑branded and lightly branded). Group by intent if that helps you stay organized, but don’t overthink it. The main thing is stability: you want the same set next week.

Step 2 — Capture baseline answers and citations (ChatGPT primary; Perplexity and Google AI Overviews supporting).

ChatGPT: run each query in a mode that shows sources. Log the model/mode and date, and archive the full output. Future-you will thank you.

Perplexity: capture the answer and the source list.

Google AI Overviews: when an overview appears, screenshot the panel and the cited links.
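If you’d rather script the Step 2 log than keep it only in a spreadsheet, here’s a minimal Python sketch of a capture archive. It assumes you copy each answer and its sources in by hand; the field names and the JSON Lines file are my own choices, not an export format from ChatGPT, Perplexity, Google, or any tracking tool.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class CaptureRecord:
    """One answer captured by hand: a single query run once in one engine."""

    query: str
    engine: str        # e.g. "ChatGPT", "Perplexity", "Google AIO"
    captured_on: str   # ISO date string, e.g. "2026-01-12"
    model_mode: str    # model/mode label exactly as the UI showed it
    answer_text: str   # full answer text, archived verbatim
    cited_urls: list[str] = field(default_factory=list)
    screenshot_path: str = ""  # optional, e.g. for AI Overview panels


def append_capture(path: str, record: CaptureRecord) -> None:
    """Append one record as a JSON line, so the archive grows week over week."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(record), ensure_ascii=False) + "\n")


def load_captures(path: str) -> list[CaptureRecord]:
    """Read the archive back into records (one JSON object per line)."""
    with open(path, encoding="utf-8") as fh:
        return [CaptureRecord(**json.loads(line)) for line in fh if line.strip()]
```

An append-only file keeps the baseline and the re-capture side by side, which is what makes the Step 4 comparison auditable later.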

Disclosure: Geneo is our product. If you’d rather not juggle screenshots, tabs, and spreadsheets, Geneo can be used to monitor multi‑engine visibility and citations across ChatGPT, Perplexity, and AI Overviews; see Geneo docs for the specific capabilities.

Step 3 — Quick content fixes. Move the direct answer higher on the page. Tighten (or add) an FAQ that mirrors the query set. Add schema where it genuinely fits (FAQPage, HowTo, Product, Organization). And make entity details unambiguous—names, locations, product specs, “this is what we do” statements. If you cite data, link to the primary source.

Step 4 — Re‑capture and compare. Repeat the same queries in the same engines with the same modes. Then tally what changed in citation share and jot a few notes on how the answers changed (which page got cited, which paragraph got lifted, whether the brand name appears).
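For the Step 4 notes, it helps to bucket each query/engine pair by how its “cited?” flag moved. A minimal Python sketch, assuming you’ve reduced each week’s captures to a dict keyed by (query, engine) with True when your domain was cited; `compare_weeks` and the bucket names are illustrative, not part of any tool.

```python
Key = tuple[str, str]  # (query, engine)


def compare_weeks(baseline: dict[Key, bool], week_one: dict[Key, bool]) -> dict[str, list[Key]]:
    """Bucket each (query, engine) pair by how its "cited?" flag changed.

    Only pairs present in both weeks are compared, so a query that got no
    answer with sources (or no AI Overview) one week isn't counted as lost.
    """
    buckets: dict[str, list[Key]] = {"gained": [], "lost": [], "kept": [], "still_missing": []}
    for key in sorted(baseline.keys() & week_one.keys()):
        before, after = baseline[key], week_one[key]
        if after and not before:
            buckets["gained"].append(key)
        elif before and not after:
            buckets["lost"].append(key)
        elif before and after:
            buckets["kept"].append(key)
        else:
            buckets["still_missing"].append(key)
    return buckets
```

The “gained” and “lost” lists map directly onto the “what changed” notes, and they give you concrete query-level examples to point at on the one-pager.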

Step 5 — Package a client one‑pager. Show baseline vs week-one “share of answer,” call out what you changed, and list 3–5 next actions. If you keep it simple and visual, clients usually get it immediately.

Core artifacts you’ll use

llms.txt (proposed, non‑standard)

This is one of those things that’s useful even if it never becomes “official.” The idea is simple: you publish a short /llms.txt (or a variant) that points AI crawlers toward the pages you’d most want cited—docs, canonical explainers, FAQs, and your cleanest “here are the facts” pages.

To be clear: it’s not a standard, and no engine is obligated to read it. I treat it as a hint you control, not a guarantee. If you want examples and current convention, see GeoChecker’s llms.txt explainer (2026) and Techwise Insider’s Rank Math overview (2025).

Example llms.txt

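This follows the markdown layout from the llmstxt.org proposal: an H1 title, a one-line blockquote summary, then H2 sections that hold lists of links.

```markdown
# Example

> Placeholder: one-sentence summary of what the company does and who it serves.

## Core product & docs

- [Product overview](https://example.com/product/overview)
- [Getting started](https://example.com/docs/getting-started)

## Authoritative answers & FAQs

- [Industry fundamentals](https://example.com/blog/industry-fundamentals)
- [Support FAQ](https://example.com/support/faq)

## Company/organization facts

- [About](https://example.com/about)
- [Legal](https://example.com/legal)
```

Keep the list short, high-signal, and maintained.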
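If you maintain llms.txt for several clients, generating it from a small config keeps the files consistent. A minimal Python sketch, assuming the markdown layout described in the llmstxt.org proposal (H1 title, blockquote summary, H2 sections of [name](url) links); `render_llms_txt` is a hypothetical helper, and serving the output at /llms.txt is still up to you.

```python
def render_llms_txt(title: str, summary: str,
                    sections: dict[str, list[tuple[str, str]]]) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, then one H2
    section per group containing a markdown list of [name](url) links."""
    lines = [f"# {title}", "", f"> {summary}"]
    for heading, links in sections.items():
        lines += ["", f"## {heading}", ""]
        lines += [f"- [{name}]({url})" for name, url in links]
    return "\n".join(lines) + "\n"
```

For example, `render_llms_txt("Example", "One-line summary.", {"Core product & docs": [("Product overview", "https://example.com/product/overview")]})` returns the file body as a string, ready to write to your web root.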

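Answer‑ready JSON‑LD snippets

These schemas help machines parse questions, answers, and steps. Use them only where they genuinely fit the page and match its visible content. Keep expectations realistic: Google no longer shows HowTo rich results and limits FAQ rich results to well-known government and health sites, so treat this markup as machine-readable structure rather than a route to a rich snippet.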

FAQPage (minimal example)

```json
{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [
    {
      "@type": "Question",
      "name": "What is share of answer?",
      "acceptedAnswer": {
        "@type": "Answer",
        "text": "Share of answer is the percent of tracked queries where your brand is cited in an AI-generated answer."
      }
    }
  ]
}
```
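Hand-editing JSON-LD gets error-prone once an FAQ has more than a few entries. A minimal Python sketch that builds the same FAQPage shape as the example above from (question, answer) pairs and wraps it in a script element of type application/ld+json for embedding. The function names are hypothetical; keep the questions and answers identical to the visible FAQ text, since Google’s structured data guidelines expect markup to reflect what users can see.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data: dict) -> str:
    """Wrap JSON-LD in the script element used to embed it in a page."""
    body = json.dumps(data, ensure_ascii=False, indent=2)
    # "</" can only occur inside JSON strings; "<\/" is an equivalent JSON
    # escape that stops answer text from closing the script element early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

Generating the markup from the same source as the visible FAQ is the simplest way to keep the two from drifting apart.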

HowTo (minimal example)

```json
{
  "@context": "https://schema.org",
  "@type": "HowTo",
  "name": "Calculate share of answer (citation share)",
  "step": [
    {"@type": "HowToStep", "name": "Select queries", "text": "Pick 10–20 representative questions."},
    {"@type": "HowToStep", "name": "Capture answers", "text": "Query ChatGPT, Perplexity, and note AIO appearances."},
    {"@type": "HowToStep", "name": "Tally citations", "text": "Map cited domains per query and engine."},
    {"@type": "HowToStep", "name": "Compute share", "text": "Divide your cited answers by total answers with citations."}
  ]
}
```

Measuring “share of answer” (citation share)

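Definition

Share of answer is the percentage of AI answers to your tracked queries that cite your brand (domain or entity), measured for a given engine or across engines. Because engines don’t always show sources, and Google doesn’t always show an AI Overview, the denominator in this pilot is the answers that include at least one citation. In a pilot, I’d keep it binary: cited or not. You can get fancy later (position weighting, sentiment, etc.), but don’t start there.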
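Written as a formula, with $C_e$ the answers from engine $e$ that cite your domain and $A_e$ the answers from engine $e$ that include any citation:

$$
\text{share}_e = \frac{C_e}{A_e} \times 100\%,
\qquad
\text{share}_{\text{all}} = \frac{\sum_e C_e}{\sum_e A_e} \times 100\%
$$

Pooling is one reasonable reading of “across engines”: it weights each engine by its number of answers with citations, whereas averaging the per-engine shares weights every engine equally. Pick one and keep it fixed week to week.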

Method you can reproduce this week

Build a sheet with columns: Query, Engine, Date/Model, Your Domain Cited? (Y/N), Cited Domains, Notes.

For each query, run ChatGPT first (in a mode with visible sources), then Perplexity, and note whether a Google AI Overview shows up.

Mark Y if your domain is among the cited sources; mark N otherwise.

Compute citation share per engine: Y count ÷ total answers with any citation.

Repeat next week and compare deltas.
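The tally steps above can be scripted once the sheet is exported to CSV. A minimal Python sketch, assuming the column names listed above and a semicolon-separated Cited Domains cell. It re-derives Y/N from the cited domains (your domain or any subdomain, ignoring a leading www.) instead of trusting hand-entered flags. All function names are hypothetical.

```python
import csv
import io
from collections import defaultdict
from urllib.parse import urlparse


def domain_of(url_or_domain: str) -> str:
    """Lower-cased host with any leading 'www.' removed (port and path dropped)."""
    text = url_or_domain.strip()
    if "://" not in text:
        text = "https://" + text  # bare domains need a scheme for urlparse
    host = urlparse(text).hostname or ""
    return host.removeprefix("www.")


def is_cited(client_domain: str, cited: list[str]) -> bool:
    """True if any cited item is the client's domain or one of its subdomains."""
    target = domain_of(client_domain)
    return any(
        d == target or d.endswith("." + target)
        for d in (domain_of(item) for item in cited)
    )


def citation_share(sheet_csv: str, client_domain: str) -> dict[str, float]:
    """Per-engine share (%) = answers citing the client / answers with any citation.

    Rows whose 'Cited Domains' cell is empty (no sources shown, or no AI
    Overview appeared) are left out of the denominator.
    """
    cited: dict[str, int] = defaultdict(int)
    with_any: dict[str, int] = defaultdict(int)
    for row in csv.DictReader(io.StringIO(sheet_csv)):
        cell = row.get("Cited Domains") or ""
        domains = [d.strip() for d in cell.split(";") if d.strip()]
        if not domains:
            continue
        with_any[row["Engine"]] += 1
        if is_cited(client_domain, domains):
            cited[row["Engine"]] += 1
    return {engine: 100 * cited[engine] / n for engine, n in with_any.items()}


def share_deltas(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Percentage-point change for each engine present in both weeks."""
    return {e: after[e] - before[e] for e in before.keys() & after.keys()}
```

Run `citation_share` on the baseline export and on next week’s export, then pass both results to `share_deltas` to get the per-engine change in percentage points.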

Worked micro‑example (10 queries)

| Engine | Your domain cited in answers | Total answers with any citation | Citation share |
|---|---|---|---|
| ChatGPT | 3 | 9 | 33% |
| Perplexity | 5 | 10 | 50% |
| Google AIO | 2 | 6 | 33% |
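A quick check of the worked example’s arithmetic, plus the pooled cross-engine figure the table doesn’t show. The tallies are the table’s own numbers; nothing else is assumed.

```python
# Tallies from the worked example table:
# engine -> (answers citing your domain, answers with any citation)
tallies = {
    "ChatGPT": (3, 9),
    "Perplexity": (5, 10),
    "Google AIO": (2, 6),
}

for engine, (cited, with_any) in tallies.items():
    print(f"{engine}: {cited}/{with_any} = {cited / with_any:.0%}")

# Pooled across engines: sum of numerators over sum of denominators.
total_cited = sum(c for c, _ in tallies.values())
total_any = sum(a for _, a in tallies.values())
print(f"All engines: {total_cited}/{total_any} = {total_cited / total_any:.0%}")
```

Running it prints 33%, 50%, and 33% for the three engines, matching the table, and 40% pooled (10 cited answers out of 25 answers with any citation). An unweighted average of the three shares would be about 39%; pooling comes out slightly higher because Perplexity, the engine with the highest share, contributed the most answers.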

For broader KPI framing (visibility, sentiment, conversion proxies), see this internal perspective: KPI frameworks for AI search.

Evidence notes

Independent roundups compare how ChatGPT and Perplexity handle citations; see SE Ranking’s comparisons (2025) and Nexos.ai’s head‑to‑head (2025). Treat ChatGPT as mode‑dependent for sources; treat Perplexity as consistently transparent with inline citations.

Engine‑specific tips (2026)

ChatGPT (primary)

Capture the model and mode in your log, because citation behavior varies with browsing and other tools. Prompt with a short instruction like “list your sources with canonical URLs.” Archive full outputs so you can replicate later. Why insist? Some tests show that link density varies with settings and question type, and comparative reviews from 2025 document this variability.

Perplexity

Write fact‑dense pages with canonical, high‑authority references; Perplexity tends to show and rank sources in a visible list. Make sure your title and H1 reflect the question being answered so your page looks like a strong candidate source.

Google AI Overviews

You cannot “force” inclusion beyond helpful‑content and technical eligibility, per Google. Formatting your page with answer‑first sections and schema can still make key passages easier to extract. Review Google’s “AI features and your website” guidance, plus neutral industry overviews like Botify’s primer on AI Overviews, for mechanics and expectations.

Bing/Copilot and Claude (briefly)

Monitor them for your client’s space, but for most agencies the first two weeks are better spent on ChatGPT and Perplexity, with AIO captured opportunistically.

For a practical comparison of engine monitoring approaches, see this internal roundup: ChatGPT vs Perplexity vs Gemini vs Bing monitoring.

Where tracking tools fit (and a quick note on Geneo)

Category view

Manual spreadsheets work for a pilot, but ongoing ops benefit from a tracker that captures multi‑engine answers, citations, and trends, plus white‑label views for clients.

Neutral mention

Disclosure: Geneo is our product. Geneo can be used to monitor multi‑engine visibility and citation share across ChatGPT, Perplexity, and Google AI Overviews, with benchmarking and reporting options detailed in the docs and general product overview at geneo.app.

Next steps for your team

Block one week to run the pilot: define queries, capture baseline, ship two quick fixes, and re‑measure.

Keep the sheet simple and repeatable; expand engines or add sentiment later.

If you move beyond manual tracking, evaluate any tool that supports multi‑engine monitoring; Geneo is one option to consider alongside others.

What will your client remember after this week? That you gave them a clear taxonomy and a simple, auditable way to track whether AI answers are finally citing their brand. Let’s get that win on the board and build from there.