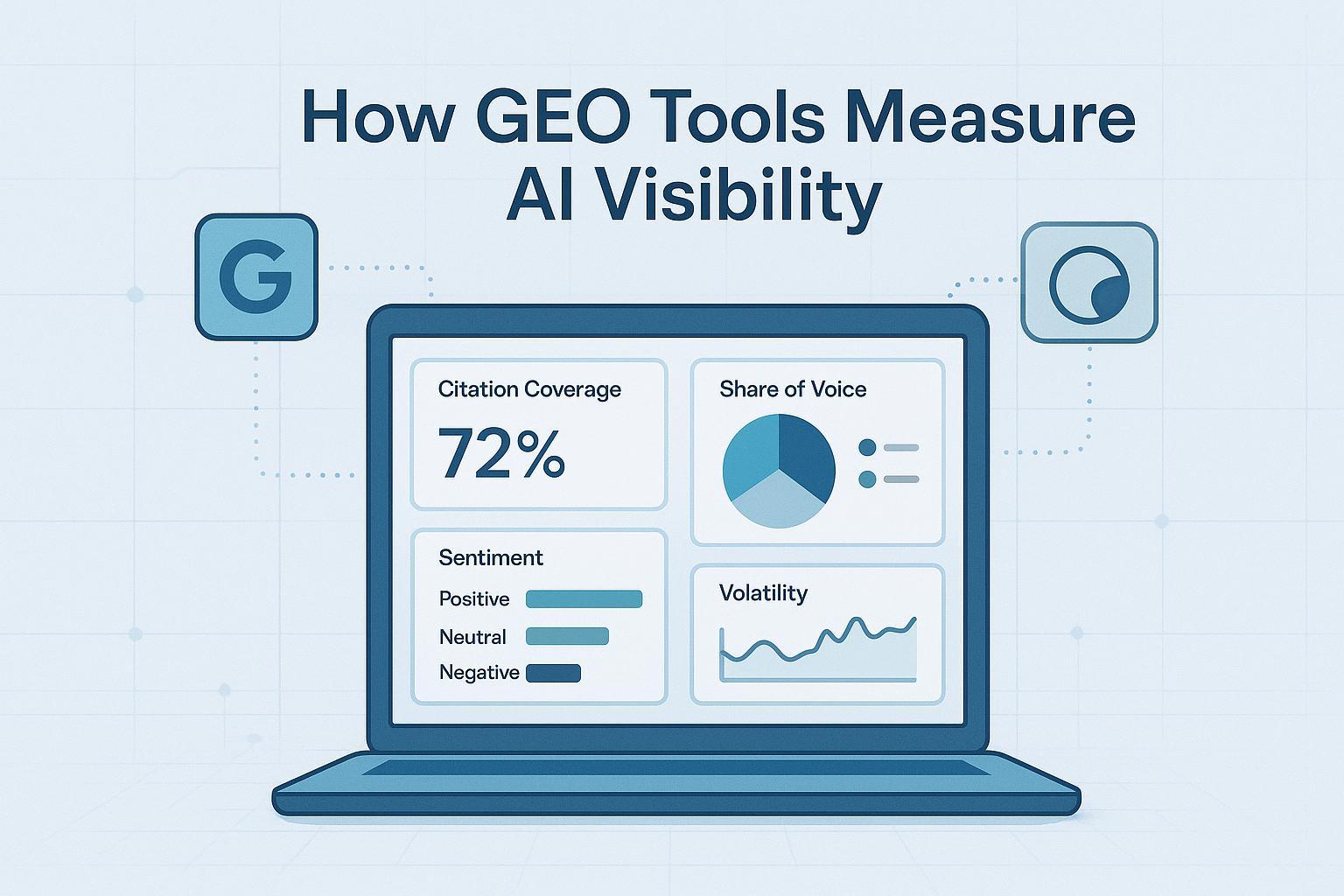

How GEO Tools Measure AI Visibility

Discover how GEO tools measure AI visibility in top AI answer engines. Learn core metrics, tracking workflows, and citation strategies for brands.

If AI answers are the new front door to discovery, how do you know your brand is actually visible there? Generative Engine Optimization (GEO) is the discipline of understanding and improving how your content gets discovered, cited, and represented inside AI answer engines—Google’s AI Overviews/AI Mode, Perplexity, ChatGPT, and others. This explainer shows how GEO tools measure that visibility, which metrics matter, and how to build a repeatable workflow without hype or guesswork.

What “GEO” and “AI visibility” mean

GEO, short for Generative Engine Optimization, focuses on optimizing for systems that generate answers rather than just listing links. AI visibility is the degree to which your brand or domain appears as a cited source or a referenced entity within those answers, and how favorably it’s portrayed.

If you need a quick glossary of adjacent terms (AEO, LLMO, AI SEO), see the plain-language overview in GEO, GSVO, GSO, AIO, LLMO & AI SEO acronyms explained.

How AI engines find and cite sources

- Google’s AI features expand a query into subtopics (a “fan‑out”), blend large‑language‑model reasoning with live retrieval, and surface supporting links beneath the overview. Google treats these AI features as part of Search, with visibility guidance for site owners documented in AI Features and Your Website (Google Search Central).

- Perplexity uses retrieval‑augmented generation with numbered inline citations and source previews by design, making provenance more transparent. See Perplexity Help Center: How does Perplexity work? for an official summary.

- ChatGPT can show citations when search is enabled; in many cases you’ll observe brand mentions in text without clickable attribution. Treat mentions and linked citations as separate measurement categories.

For a practical view of how sources are selected in Google AI Overviews, marketers often reference analyses like White Peak’s review of source selection. It’s directional rather than doctrine, but helpful context alongside Google’s official guidance.

The measurement framework: metrics and formulas

Rather than tracking classic SERP positions, GEO tools quantify visibility with a common set of metrics that you can compute the same way for every engine.

- Citation coverage (%): The percentage of tracked queries where your domain is cited in the AI answer.

  - Formula per engine E over query set Q: coverage_E = cited(Q, E) / |Q|, where cited(Q, E) is the number of queries in Q whose answer in engine E cites your domain.

- Share of citation voice (SOV, %): Your share of citations within an answer and across a query set.

  - Within one answer: SOV_brand = brand_citation_count / total_citations

- Sentiment distribution: The stance of AI answers toward your brand or product (positive/neutral/negative), tracked over time and per engine.

- Accuracy flags: Notes where answers misstate facts or misattribute content; tag by error type (hallucination, outdated info, misattribution).

- Freshness/recency: The age of cited sources and the percentage updated within a time window (e.g., 90‑day freshness rate).

- Volatility: Week‑over‑week changes in coverage or SOV.

  - Example index: Volatility_E = |coverage_{E,t} − coverage_{E,t−1}|

- Referral impact: Sessions and conversions attributable to AI surfaces where referrers are available (e.g., Perplexity), or via standard analytics attribution for Google.

If you’re building a KPI program around these, see the practical templates in AI search KPI frameworks: visibility, sentiment, conversion.
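The coverage, SOV, and volatility formulas above translate almost directly into code. The following is a minimal sketch, not a reference implementation: the function names and the input shapes (a query-to-boolean mapping, a list of cited domains per answer) are assumptions made for illustration.

```python
from typing import Iterable, Mapping


def citation_coverage(cited_by_query: Mapping[str, bool]) -> float:
    """coverage_E = cited(Q, E) / |Q| for a single engine.

    cited_by_query maps each tracked query to True if the engine's
    answer cited your domain (hypothetical input shape).
    """
    if not cited_by_query:
        return 0.0
    return sum(cited_by_query.values()) / len(cited_by_query)


def share_of_voice(cited_domains: Iterable[str], brand_domain: str) -> float:
    """SOV_brand = brand_citation_count / total_citations within one answer."""
    domains = list(cited_domains)
    if not domains:
        return 0.0
    return domains.count(brand_domain) / len(domains)


def volatility(coverage_now: float, coverage_prev: float) -> float:
    """Absolute week-over-week change in coverage for one engine."""
    return abs(coverage_now - coverage_prev)
```

With 100 tracked queries of which 32 are cited, `citation_coverage` returns 0.32; an answer with 8 citations, 2 of them yours, gives a `share_of_voice` of 0.25.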

Quick worked examples

- You test 100 queries in Perplexity and your domain is cited in 32 answers → CoveragePerplexity = 32%.

- A Perplexity answer cites 8 sources and your domain appears twice → SOV = 2/8 = 25%.

- Over a week, 40 AI answers reference your brand: 10 positive, 24 neutral, 6 negative → 25%/60%/15% sentiment distribution.

- Coverage in Google AI Mode shifts from 20% to 26% after a content refresh → week‑over‑week delta = +6 percentage points (volatility index = 6 points).

- Of 50 cited sources, 28 were updated in the past 90 days → 56% freshness rate.
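The sentiment and freshness examples above are simple ratios, and a short sketch shows one way to compute them from logged data. The function names, the three-label sentiment scheme as string values, and the use of a source's last-updated date are assumptions for illustration.

```python
from collections import Counter
from datetime import date, timedelta
from typing import Dict, Iterable, Sequence

SENTIMENTS = ("positive", "neutral", "negative")


def sentiment_distribution(labels: Iterable[str]) -> Dict[str, float]:
    """Share of answers per sentiment label; unknown labels are ignored."""
    counts = Counter(labels)
    total = sum(counts[s] for s in SENTIMENTS)
    return {s: (counts[s] / total if total else 0.0) for s in SENTIMENTS}


def freshness_rate(updated: Sequence[date], as_of: date,
                   window_days: int = 90) -> float:
    """Fraction of cited sources updated within the window ending at as_of."""
    if not updated:
        return 0.0
    cutoff = as_of - timedelta(days=window_days)
    fresh = sum(1 for d in updated if d >= cutoff)
    return fresh / len(updated)
```

Passing `as_of` explicitly, rather than reading today's date inside the function, keeps a re-run of last week's log reproducible.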

When accuracy and relevance are central to your auditing, the rubrics in LLMO metrics: measuring accuracy, relevance, personalization can help standardize scoring.

A cross‑engine weekly workflow (tool‑agnostic)

Here’s a repeatable measurement cycle you can adapt to your stack.

- Fix your query set: Compile 50–200 high‑intent questions by topic cluster and funnel stage; include “how,” “best,” “compare,” and “vs.” patterns.

- Set cadence: Weekly for volatile topics; monthly baseline otherwise. Time‑stamp every run.

- Cover engines and modes: Google Search (check for AI Overviews/AI Mode and capture cited sources), Perplexity (run tracked queries, export citations), ChatGPT/Gemini/Claude/Copilot (manual checks; separate mentions vs linked sources).

- Log evidence: Store raw outputs, screenshots, and JSON where available. Record cited domains, sentiment, accuracy flags, freshness, and volatility.

- Build a dashboard: Table by engine with coverage, SOV, sentiment breakdown, volatility deltas, and notable accuracy notes. Overlay analytics for referral impact by AI source.

- Review and act: Identify gaps (topics where you’re not cited), prioritize content refreshes and structured data, and plan external authority building. Re‑test after major releases or model updates.
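The "log evidence" step is easier to keep consistent if every answer is stored in the same shape. Below is a minimal sketch of one possible record, written out as JSON Lines; the class name, field names, and engine labels are hypothetical and should be adapted to your own stack.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Iterable, List


def _utc_now() -> str:
    # ISO 8601 timestamp in UTC so runs can be compared across time zones.
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AnswerRecord:
    """One AI answer for one tracked query on one engine (hypothetical schema)."""
    query: str
    engine: str                      # e.g. "perplexity", "google_ai_mode"
    cited_domains: List[str]         # linked citations only
    brand_mentioned: bool            # text mention, tracked separately
    sentiment: str = "neutral"
    accuracy_flags: List[str] = field(default_factory=list)
    run_at: str = field(default_factory=_utc_now)


def to_jsonl(records: Iterable[AnswerRecord]) -> str:
    """Serialize records as JSON Lines, one answer per line."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)
```

Keeping `brand_mentioned` apart from `cited_domains` preserves the distinction between mentions and linked citations made earlier in this article.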

Programmatic and manual specifics

- Google AI Mode: You can programmatically pull cited sources and answer objects using services described in SerpApi’s guide to tracking Google’s AI Mode cited sources. Use parameters for language and locale to control sampling. Expect format changes over time.

- Perplexity: Answers include numbered citations by default. Run your tracked queries, capture/export the citation sets, and compute coverage and SOV. The official summary of retrieval behavior is in Perplexity’s Help Center. For practical tips on being cited, practitioners reference guides like Rankshift’s walkthrough.
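Whichever route supplies the citation list, coverage and SOV are computed on domains rather than full URLs, so a normalization step is useful. This sketch uses only Python's standard library; the helper names are illustrative, and it assumes the cited URLs include a scheme such as https://.

```python
from typing import Iterable, List
from urllib.parse import urlsplit


def cited_domain(url: str) -> str:
    """Return the lowercased host of a cited URL without a leading 'www.'.

    Returns an empty string when no host can be parsed
    (for example, when the scheme is missing).
    """
    host = urlsplit(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def cited_domains(urls: Iterable[str]) -> List[str]:
    """Normalize a citation list, dropping entries with no parseable host."""
    return [d for d in (cited_domain(u) for u in urls) if d]
```

Note that this keeps subdomains distinct (blog.example.org is not merged into example.org); collapsing to a registrable domain would need a Public Suffix List lookup, which is out of scope here.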

Pitfalls and how to mitigate them

- Volatility and personalization: AI answers can change by time, context, and user state. Mitigate with consistent sampling windows, time‑stamped runs, and averaging across sessions.

- Opaque signals: Source selection isn’t fully disclosed. Prioritize freshness, authority, clarity, and E‑E‑A‑T; follow Google’s quality guidance in AI Features and Your Website.

- Hallucinations and inaccuracies: Maintain an error taxonomy; remediate with clearer claims, references, and structure. Validate critical facts.

- Measurement blind spots: Google Search Console reports traffic from AI features under the “Web” search type, so AI Overviews and AI Mode clicks can’t be isolated there. Distinguish via analytics where possible, but accept some aggregation.

- Referral undercounting: Not all AI tools pass referrer headers. Use UTM tagging where feasible and triangulate with qualitative logs.
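Where a referrer is passed, sessions can be bucketed by AI source before they reach your dashboard. The sketch below is one way to do that; the hostname-to-label table is an illustrative assumption, not an authoritative list, and should be checked against the referrers that actually appear in your analytics.

```python
from typing import Optional
from urllib.parse import urlsplit

# Assumption: illustrative hostnames only; verify against your own logs.
AI_REFERRER_HOSTS = {
    "perplexity.ai": "perplexity",
    "chatgpt.com": "chatgpt",
    "gemini.google.com": "gemini",
    "copilot.microsoft.com": "copilot",
}


def classify_referrer(referrer: Optional[str]) -> str:
    """Map a Referer header value to an AI source label.

    Returns "unknown" when no referrer was passed and "other"
    when the host does not match the table above.
    """
    if not referrer:
        return "unknown"
    host = urlsplit(referrer).hostname or ""
    if host.startswith("www."):
        host = host[4:]
    for known, label in AI_REFERRER_HOSTS.items():
        if host == known or host.endswith("." + known):
            return label
    return "other"
```

The "unknown" bucket is worth reporting on its own: its size is a rough indicator of how much referral undercounting you may be facing.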

Example micro‑workflow using a monitor

Disclosure: Geneo is our product.

A neutral, practical example to illustrate the measurement cycle:

- Centralize your query set by topic cluster and funnel stage; log each run with timestamps.

- For Google AI Mode, ingest cited source lists programmatically; for Perplexity and ChatGPT, capture citation sets and mentions via screenshots or exports.

- Compute coverage, SOV, and sentiment per engine weekly; flag accuracy issues and freshness.

- Export a weekly report with volatility deltas and referral impacts; share with content and PR owners to prioritize fixes.

Tools that support cross‑engine logging and report exports can reduce manual overhead and standardize your evidence trail.
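For teams without a dedicated tool, the weekly per-engine roll-up can be sketched in a few lines. This is an assumption-laden example: the input is a list of (engine, cited domains) pairs, one per tracked query, and SOV across the query set is pooled (brand citations divided by all citations), which is only one of several reasonable ways to aggregate.

```python
from collections import defaultdict
from typing import Dict, Iterable, Sequence, Tuple


def weekly_summary(answers: Iterable[Tuple[str, Sequence[str]]],
                   brand_domain: str) -> Dict[str, Dict[str, float]]:
    """Per-engine coverage and pooled SOV for one run of the query set."""
    totals = defaultdict(lambda: {"queries": 0, "cited": 0, "brand": 0, "all": 0})
    for engine, domains in answers:
        domains = list(domains)
        hits = domains.count(brand_domain)
        t = totals[engine]
        t["queries"] += 1            # every tracked query counts toward |Q|
        t["cited"] += 1 if hits else 0
        t["brand"] += hits
        t["all"] += len(domains)
    return {
        engine: {
            "coverage": t["cited"] / t["queries"],
            "sov": t["brand"] / t["all"] if t["all"] else 0.0,
        }
        for engine, t in totals.items()
    }
```

Answers with no citations still count as tracked queries, so an engine that shows no sources lowers coverage instead of silently disappearing from the report.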

Optimization levers tied to measurement

Your measurement should inform what to optimize next.

- Topical authority and clarity: Structure content with explicit definitions and direct answers; keep headings scannable.

- E‑E‑A‑T: Display author credentials, cite primary sources, and make claims transparent.

- Freshness: Update high‑velocity topics frequently and note recent changes visibly.

- Structured data: Use FAQPage, HowTo, and Article markup to improve machine interpretability.

- External signals: Earn reviews, community mentions, and third‑party roundups that increase your likelihood of being cited.
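As an illustration of the structured data lever, FAQPage markup can be generated from existing question-and-answer pairs. The sketch below builds schema.org FAQPage JSON-LD; the function name is hypothetical, and embedding the output in a `script` tag of type `application/ld+json` is left to your templates.

```python
import json
from typing import Iterable, Tuple


def faq_jsonld(pairs: Iterable[Tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps non-ASCII text readable in the page source.
    return json.dumps(data, indent=2, ensure_ascii=False)
```

Markup of this kind should mirror content that is visibly on the page; it describes the page to machines and does not by itself guarantee a citation.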

Think of SOV in AI answers as your brand’s “share of conversation.” If you’re barely present in citations today, your next sprint isn’t guesswork—it’s targeted content refreshes, better references, and clearer topical coverage.

GEO metrics at a glance

| Metric | What it measures | Primary data source |
|---|---|---|
| Citation coverage (%) | % of tracked queries where your domain is cited | Engine outputs (AI Overviews/AI Mode; Perplexity citations; ChatGPT Sources) |
| Share of citation voice (%) | Your share of citations within an answer or query set | Per‑answer citation counts across engines |
| Sentiment distribution | Positive/neutral/negative stance toward brand | Answer text + sentiment rubric |
| Accuracy flags | Presence and type of errors/hallucinations | Answer text + error taxonomy |
| Freshness/recency | Age of cited sources; % updated within window | Source publish/update dates |
| Volatility | Week‑over‑week change in coverage/SOV | Time‑series logs per engine |
| Referral impact | Sessions/conversions from AI surfaces | Analytics referrers/UTM; GSC aggregate |

What to do next

GEO measurement is practical and repeatable: define your query set, run a weekly cross‑engine cycle, compute coverage/SOV/sentiment, and act on what the data shows. If you want help centralizing the logging and reporting, try a monitoring tool and benchmark your visibility across engines—start small, then scale to your full topic map.

For deeper background and templates, explore GEO acronyms and adjacent concepts and AI search KPI frameworks for visibility, sentiment, and conversion. And when accuracy scoring becomes the focus, consult LLMO metrics for auditing accuracy and relevance.