GEO for Education Brands: AI Search Best Practices for 2025

Discover 2025's top AI-driven GEO strategies for education brands—crawlability, schema, compliance, and KPI measurement. Practical guides for education SEO pros.

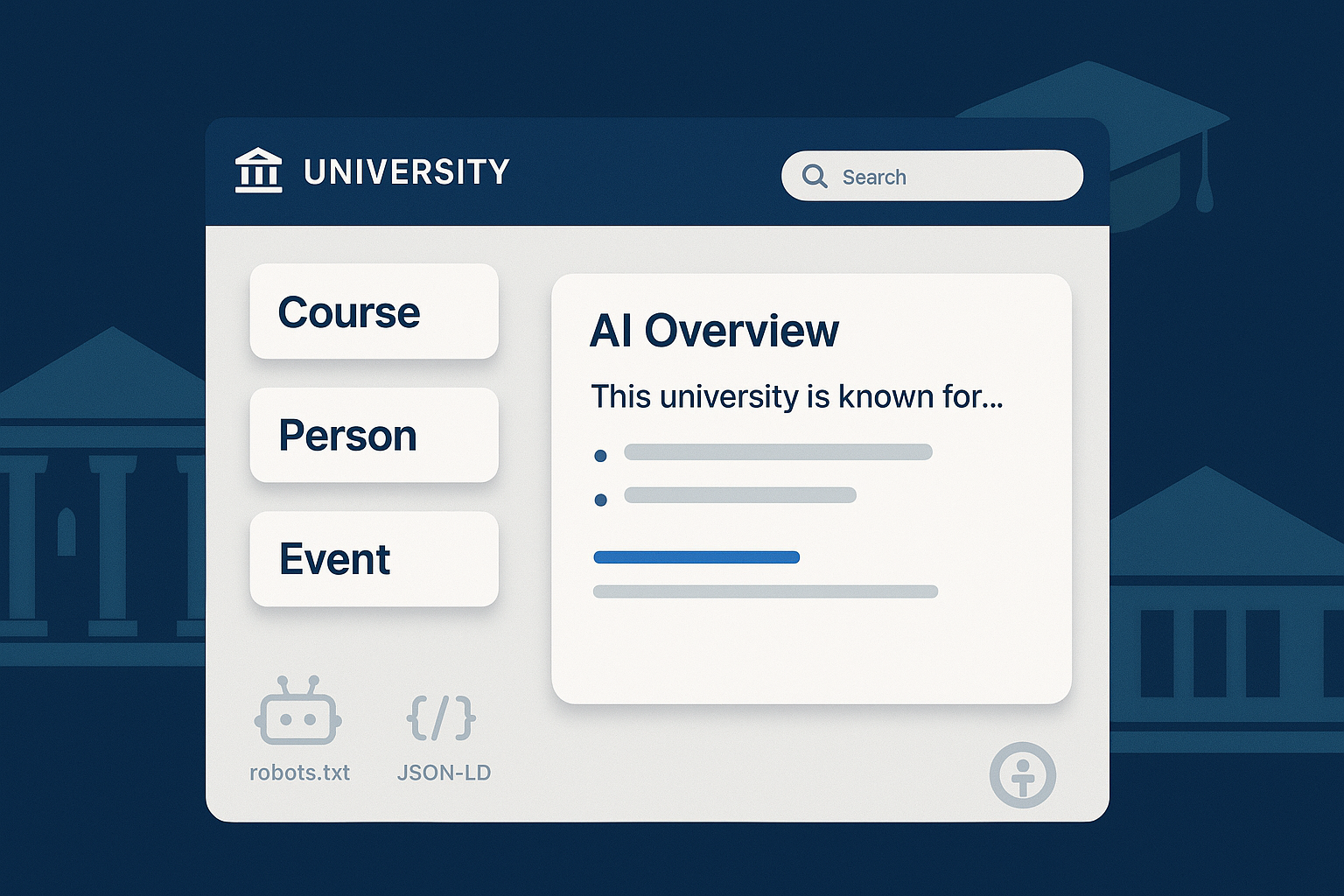

AI-driven search is changing how students, parents, and faculty discover information. For education brands, the question isn’t “Will AI search impact us?” but “How do we make our program pages, admissions content, faculty profiles, and resources reliably show up in answer-style results?” Think of Generative Engine Optimization (GEO) as the practical extension of your SEO program for engines that summarize, cite, and sometimes link—your job is to be the source worth citing.

GEO for EDU: What changed—and what didn’t

Here’s the good news: Google’s AI features don’t require exotic new tags or hidden endpoints. Google states that AI Overviews and AI Mode rely on standard Search eligibility—meaning your pages still need to be crawlable, indexable, and helpful. There are “no additional technical requirements” beyond established best practices. See Google’s guidance in the 2025 docs under AI features and your website and its companion post Succeeding in AI search (2025).

What does that mean in practice for EDU teams?

- Ensure core pages return HTTP 200, avoid blocking critical resources (CSS/JS), and present accurate, people-first content with clear answers.

- Structure content so AI systems can quickly extract definitions, dates, and details (program descriptions, outcomes, deadlines, costs).

- Stay current on presentation changes: Google has simplified some rich result types over time; monitor the docs rather than assuming deprecated features hurt AI eligibility.

A simple litmus test: if your content is easy for a busy student to scan and understand, it’s usually easier for AI systems to ground and cite.
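One way to spot-check the first bullet is a small script that takes an already-fetched response and lists reasons the page might be ineligible. The sketch below is illustrative only: `indexability_issues` and `RobotsMetaParser` are hypothetical names, the check covers just the status code, the X-Robots-Tag header, and meta robots tags, and it assumes you fetch pages with whatever HTTP client you already use.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for part in attr_map.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def indexability_issues(status_code, headers, html):
    """Return a list of reasons a fetched page may be ineligible for Search."""
    issues = []
    if status_code != 200:
        issues.append(f"status {status_code} (expected 200)")

    # HTTP header names are case-insensitive, so compare in lowercase.
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            header_value = value.lower()
    if "noindex" in header_value:
        issues.append("X-Robots-Tag header contains noindex")

    parser = RobotsMetaParser()
    parser.feed(html)
    if "noindex" in parser.directives:
        issues.append("meta robots contains noindex")
    return issues


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
    print(indexability_issues(200, {"Content-Type": "text/html"}, page))
```

An empty list means none of these three blockers were found; it does not prove the page is indexed or helpful, which still needs a Search Console review.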

Your first 90 days: a pragmatic implementation plan

Weeks 0–2: Establish technical and accessibility baselines. Run a crawl/index audit, review robots.txt and page-level indexing/snippet controls for sensitive areas, and apply structured data where it’s missing (Course, Organization, Person, Event, VideoObject). Begin WCAG 2.2 AA triage—caption media, fix headings, and ensure keyboard operability.

Weeks 2–6: Map intents across programs, admissions, aid, outcomes, and faculty. Create answer-first blocks (short definitions, FAQs with visible content), publish complete faculty profiles with Person markup, and tighten mobile performance. If you’re thinking, “Do we really need FAQ blocks?”—use them only when they mirror visible, genuine FAQs.

Weeks 4–8: Update governance. Refresh privacy notices and consent practices; align marketing workflows with FERPA/GDPR requirements. Publish an accessibility statement and remediation plan. This phase makes your content safer to cite.

Weeks 6–12: Set up measurement. Define prompt sets and engines; track inclusion and citations by topic; monitor sentiment. Establish a quarterly baseline, then iterate content where inclusion or citations are weak. Aim for tangible movement, not vanity metrics.

Technical foundations for LLM crawlability and structured data

Crawler controls and snippet/indexing settings still do most of the heavy lifting. Use robots.txt to set crawl scope, and apply X-Robots-Tag headers or meta robots tags to sensitive pages and to pages that should stay out of the index. For AI crawlers specifically, OpenAI indicates its crawler respects robots.txt; if you need to block it, target its user-agent. OpenAI’s policy docs describe how to manage the crawler via robots directives in the OpenAI approach to data and AI.

Here’s a practical robots.txt excerpt you can adapt:

    # Example: restrict GPTBot
    User-agent: GPTBot
    Disallow: /

    # Keep public learning resources crawlable
    User-agent: *
    Allow: /courses/
    Disallow: /internal/

For Perplexity’s crawler, authoritative user-agent and robots directives are not clearly documented publicly as of late 2025. If your institution needs specific controls, contact Perplexity support for official guidance.
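Before deploying a robots.txt change, it can help to confirm that the rules do what you expect. The sketch below feeds the excerpt above to Python’s standard `urllib.robotparser` and reports which agent/path pairs are allowed. The helper name `crawl_report` and the sample paths are assumptions for illustration, and "Googlebot" simply stands in for any agent that falls under the `*` group. The rules are written with no blank lines inside a group, because some parsers treat a blank line as the end of a group.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
# Example: restrict GPTBot
User-agent: GPTBot
Disallow: /

# Keep public learning resources crawlable
User-agent: *
Allow: /courses/
Disallow: /internal/
"""


def crawl_report(robots_text, agents, paths):
    """Map each (agent, path) pair to True if the rules allow fetching it."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return {
        (agent, path): parser.can_fetch(agent, path)
        for agent in agents
        for path in paths
    }


if __name__ == "__main__":
    report = crawl_report(
        ROBOTS_TXT,
        agents=["GPTBot", "Googlebot"],
        paths=["/courses/bsn", "/internal/roster"],
    )
    for (agent, path), allowed in report.items():
        print(f"{agent:10} {path:20} {'allow' if allowed else 'block'}")
```

Python’s parser applies the first matching rule in a group, while Google documents most-specific-rule precedence. The two agree for this file, but treat the script as a sanity check, not a guarantee of how every crawler will behave.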

On structured data, favor core types that clarify EDU entities for both Search and LLMs:

- Course and CourseInstance for catalog pages, with consistent naming, provider, and codes.

- EducationalOrganization/Organization for institutional identity (legalName, url, logo, contactPoint).

- Person for faculty/staff profiles, including affiliation and sameAs for authoritative profiles.

- Event for admissions webinars, open houses, and deadlines; use ISO-formatted dates.

- VideoObject for lectures and explainers; follow Google’s video SEO best practices.

Google has simplified the search results page and deprecated some education-specific rich features over time. Keep markup aligned with visible content, validate in Rich Results tests, and track documentation updates. The W3C’s WCAG materials and Google’s Search Central docs remain your anchor references.
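To make the schema types above concrete, here is a minimal sketch that builds Course and Person JSON-LD from plain values and wraps it in a script tag. The helper names, the example.edu URLs, and the sample values are placeholders, not a recommended data model; the properties used (`courseCode`, `provider`, `jobTitle`, `affiliation`, `sameAs`) are standard schema.org terms. Whatever you emit should mirror what is visible on the page.

```python
import json


def course_jsonld(name, description, course_code, provider_name, provider_url):
    """Build a schema.org Course object for a catalog or program page."""
    return {
        "@context": "https://schema.org",
        "@type": "Course",
        "name": name,
        "description": description,
        "courseCode": course_code,
        "provider": {
            "@type": "EducationalOrganization",
            "name": provider_name,
            "url": provider_url,
        },
    }


def person_jsonld(name, job_title, org_name, same_as):
    """Build a schema.org Person object for a faculty or staff profile."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "affiliation": {"@type": "EducationalOrganization", "name": org_name},
        "sameAs": list(same_as),
    }


def to_script_tag(data):
    """Serialize JSON-LD into a script element ready for a page template."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a string value cannot close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    course = course_jsonld(
        name="Bachelor of Science in Nursing",
        description="Four-year pre-licensure nursing program.",
        course_code="BSN",
        provider_name="State University",
        provider_url="https://www.example.edu",
    )
    print(to_script_tag(course))
```

Generating markup from the same source of truth as the visible page (catalog database, CMS fields) is one way to keep names and codes consistent; validate the output in Google’s Rich Results Test before relying on it.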

Entity and intent mapping that AI systems can understand

AI systems reward clarity. You’ll want a matrix that ties each entity (program, campus, faculty, outcome) to the intents people express and the answers they expect. Start with the content you already have, then fill gaps.

Below is a concise template to guide planning.

| Intent Type | Example Query | Primary Page Entity | Required Answer Block | Supporting Schema |
|---|---|---|---|---|
| Program definition | “What is the BSN program at State University?” | Program page (BSN) | 2–3 sentence definition + accreditation | Course, EducationalOrganization |
| Admissions deadline | “Fall 2025 application deadline for BSN” | Admissions page | Date + link to apply | Event |
| Cost/aid | “BSN tuition and scholarships” | Cost & aid pages | Tuition table + top 3 aid options | Organization, FAQPage |
| Outcomes | “BSN graduate employment rates” | Outcomes page | Latest percentages + methodology | Organization |
| Faculty | “BSN program chair profile” | Chair’s profile | Bio + publications + office hours | Person |

Make the “Required Answer Block” visible on the page. Hidden or accordion-only content tends not to be trusted. Keep language concise and plain; if a reader can quote your definition easily, a model can cite it.
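If you keep the matrix as data instead of a slide, gap-finding becomes mechanical. The sketch below is one possible shape, not a prescribed one: `IntentRow`, its fields, and `find_gaps` are hypothetical, and it assumes an audit step has already recorded which schema types each page carries and whether an answer block is visible.

```python
from dataclasses import dataclass, field


@dataclass
class IntentRow:
    intent: str
    page_url: str
    required_schema: set
    found_schema: set = field(default_factory=set)
    has_visible_answer: bool = False


def find_gaps(rows):
    """Return {intent: [problems]} for rows that miss schema or an answer block."""
    gaps = {}
    for row in rows:
        problems = []
        missing = sorted(row.required_schema - row.found_schema)
        if missing:
            problems.append("missing schema: " + ", ".join(missing))
        if not row.has_visible_answer:
            problems.append("no visible answer block")
        if problems:
            gaps[row.intent] = problems
    return gaps


if __name__ == "__main__":
    rows = [
        IntentRow(
            intent="Program definition",
            page_url="https://www.example.edu/programs/bsn",
            required_schema={"Course", "EducationalOrganization"},
            found_schema={"EducationalOrganization"},
            has_visible_answer=True,
        ),
        IntentRow(
            intent="Admissions deadline",
            page_url="https://www.example.edu/admissions/bsn",
            required_schema={"Event"},
            found_schema={"Event"},
            has_visible_answer=False,
        ),
    ]
    for intent, problems in find_gaps(rows).items():
        print(intent, "->", "; ".join(problems))
```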

Answer-first content and accessibility that improve inclusion

Answer-first doesn’t mean robotic. It means your page has a crisp summary or definition up top, followed by deeper context. Use FAQPage markup only when the FAQs are visible and genuinely match the content. Pair text with short videos where appropriate; for videos, implement captions, transcripts, and clear thumbnails. Google’s guidance on video SEO best practices is a useful checklist.

Accessibility directly affects trust and comprehension—two signals that make citations more likely. Prioritize WCAG 2.2 AA actions: captions and audio descriptions for prerecorded media, transcripts for audio-only content, keyboard operability, predictable navigation, and semantic headings. Refer to the WCAG 2.2 normative specification (W3C, 2023–2024) for criteria, and use WAI tool lists to test pages. These improvements help all users—and help AI systems parse structure, timing, and meaning.
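A couple of the structural items above (semantic headings, text alternatives) lend themselves to a quick automated pass. The following sketch is a rough heuristic and not a WCAG conformance test: `AccessibilityScan` and `scan` are hypothetical names, and the script only counts h1 elements, flags jumps in heading level, and counts images with no alt attribute at all. Captions, keyboard operability, and the other criteria still need dedicated tools and manual review.

```python
from html.parser import HTMLParser


class AccessibilityScan(HTMLParser):
    """Records heading levels in document order and images lacking alt."""

    def __init__(self):
        super().__init__()
        self.heading_levels = []
        self.images_missing_alt = 0

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.heading_levels.append(int(tag[1]))
        elif tag == "img" and "alt" not in dict(attrs):
            # alt="" is allowed for decorative images, so only a missing
            # attribute is counted here.
            self.images_missing_alt += 1


def scan(html):
    parser = AccessibilityScan()
    parser.feed(html)
    levels = parser.heading_levels
    skipped = [(prev, cur) for prev, cur in zip(levels, levels[1:]) if cur > prev + 1]
    return {
        "h1_count": levels.count(1),
        "skipped_levels": skipped,
        "images_missing_alt": parser.images_missing_alt,
    }


if __name__ == "__main__":
    sample = "<h1>BSN</h1><h3>Deadlines</h3><img src='campus.png'>"
    print(scan(sample))
```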

Compliance and governance for education marketing

Education marketing touches regulated data and student records. Under U.S. FERPA, personally identifiable information (PII) from education records generally requires written consent before public disclosure unless a valid exception applies. “Directory information” is disclosable only when designated by the institution and the student hasn’t opted out. Practical guardrails: obtain consent for student testimonials/photos, anonymize outcomes data, avoid sharing student contact lists with third parties without controls, and align CRMs/analytics with privacy settings. See the U.S. Department of Education’s FSA Handbook coverage of FERPA obligations in record-keeping and privacy (2024–2025).

If you recruit in the EU/UK, GDPR applies. Establish lawful bases (consent vs legitimate interests), provide transparent notices at collection, enable rights, apply minimization and retention, secure data, manage processor contracts, and conduct DPIAs for high-risk processing. For practical detail on de-identification and safeguards, the EDPB’s Guidelines on pseudonymisation (2025) are a helpful resource. Compliance doesn’t boost rankings directly—but without it, trustworthy citations and institutional credibility suffer.

Measuring AI visibility and citations (with a practical workflow)

You can’t manage what you don’t measure. Define a set of prompts/queries spanning programs, admissions, cost, aid, outcomes, and faculty. Track engines separately (Google AI Overviews/Mode, ChatGPT, Bing, Perplexity), then report three families of KPIs:

- Inclusion rate: the percentage of prompts where your brand appears inside the AI answer.

- Citation frequency and citation share: how often your pages are cited, and your share of citations in a competitive set.

- Sentiment of mentions: positive, neutral, or negative; investigate negative or misleading references.
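The first two KPIs reduce to simple ratios once each prompt run is logged. The sketch below assumes a record shape of our own invention (`engine`, `prompt`, `brands_mentioned`, `cited_domains`) and made-up sample values; how you collect those observations, manually or through a monitoring tool, is outside its scope.

```python
from collections import Counter, defaultdict


def inclusion_rate(results, brand):
    """Fraction of prompts whose AI answer mentions the brand."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if brand in r["brands_mentioned"])
    return hits / len(results)


def citation_share(results, domain):
    """The domain's citations divided by all citations in the result set."""
    counts = Counter(d for r in results for d in r["cited_domains"])
    total = sum(counts.values())
    return counts[domain] / total if total else 0.0


def by_engine(results):
    """Group records so each engine can be reported separately."""
    grouped = defaultdict(list)
    for r in results:
        grouped[r["engine"]].append(r)
    return dict(grouped)


SAMPLE = [
    {"engine": "perplexity", "prompt": "BSN tuition",
     "brands_mentioned": ["State University"],
     "cited_domains": ["stateu.example", "rival.example"]},
    {"engine": "perplexity", "prompt": "BSN deadline",
     "brands_mentioned": [],
     "cited_domains": ["rival.example", "rival.example"]},
    {"engine": "chatgpt", "prompt": "BSN tuition",
     "brands_mentioned": ["State University"],
     "cited_domains": ["stateu.example"]},
    {"engine": "chatgpt", "prompt": "BSN outcomes",
     "brands_mentioned": [], "cited_domains": []},
]

if __name__ == "__main__":
    for engine, rows in by_engine(SAMPLE).items():
        print(engine,
              inclusion_rate(rows, "State University"),
              citation_share(rows, "stateu.example"))
```

In this sample the brand is included in 2 of 4 prompts (0.5) and holds 2 of 5 citations (0.4) overall. Sentiment is left out because it needs a labeling step that a ratio cannot capture.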

Published analyses reinforce these focus areas. Search Engine Land’s 2025 guide offers definitions and practical setups for visibility tracking—see How to measure brand visibility in AI search (2025). Cross-industry observations on what correlates with citations have also emerged; for instance, a 2025 summary suggests sites with substantially higher authority and longer, comprehensive content tend to earn more ChatGPT citations, as covered by Search Engine Journal’s analysis (Nov 2025). Treat these as directional, not deterministic.

Disclosure: Geneo is our product. In practice, teams use monitoring platforms to capture AI inclusion, citation counts, and sentiment across engines and prompt sets. When we set up reporting, we align the dashboard with an “AI visibility” view and LLM-oriented metrics. For terminology and framework background, see What Is AI Visibility? Brand Exposure in AI Search and the companion discussion on evaluation criteria in LLMO Metrics: Measuring Accuracy, Relevance, Personalization in AI. Those resources help standardize definitions across marketing, analytics, and leadership.

The workflow cadence is straightforward: baseline quarterly inclusion and citation share; set 90-day improvement targets by topic; iterate answer-block quality, schema coverage, and freshness where you lag. When AI answers cite competitors, review what they did right—then update your content to fill gaps.
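As a sketch of that cadence, the function below compares a baseline quarter with the current one and lists topics that missed the improvement target. The name `lagging_topics`, the topic labels, and the five-point default target are all assumptions chosen for illustration; set targets that fit your own baseline.

```python
def lagging_topics(baseline, current, target_gain=0.05):
    """Return (topic, gain) pairs where the absolute gain is below target.

    baseline and current map topic -> inclusion rate (0.0 to 1.0).
    Topics missing from current are treated as 0.0.
    """
    lagging = []
    for topic, base_rate in baseline.items():
        gain = current.get(topic, 0.0) - base_rate
        if gain < target_gain:
            lagging.append((topic, round(gain, 3)))
    # Worst performers first, so the review starts where the gap is largest.
    return sorted(lagging, key=lambda item: item[1])


if __name__ == "__main__":
    q1 = {"admissions": 0.40, "cost": 0.20, "outcomes": 0.30}
    q2 = {"admissions": 0.50, "cost": 0.21, "outcomes": 0.25}
    for topic, gain in lagging_topics(q1, q2):
        print(f"{topic}: {gain:+.3f}")
```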

Platform-specific notes and operational tips

Some EDU teams worry about model crawlers indexing sensitive materials. The safest policy is layered controls: authentication for genuinely sensitive content, noindex/nosnippet for public-but-should-not-be-reused pages, and robots directives for crawler scoping. OpenAI’s page above explains how its crawler handles robots; apply agent-specific rules rather than blanket disallows that inadvertently block helpful indexing. If you need official details for Perplexity’s bot, request them directly from support.
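One way to keep the layers consistent is to declare them in a single policy table and derive response headers from it. The sketch below is hypothetical throughout: the path patterns, the `POLICY` table, and the helper names are invented for illustration, and a real deployment would wire this into your web server or CMS, with authentication enforced there. The only external fact it relies on is the `X-Robots-Tag` response header with `noindex` and `nosnippet` values.

```python
from fnmatch import fnmatchcase

# Ordered from most to least restrictive; the first matching pattern wins.
# All paths are hypothetical examples.
POLICY = [
    ("/portal/*", {"require_auth": True, "x_robots_tag": "noindex, nosnippet"}),
    ("/policies/drafts/*", {"require_auth": False, "x_robots_tag": "noindex, nosnippet"}),
    ("*", {"require_auth": False, "x_robots_tag": None}),
]


def controls_for(path):
    """Return the control set for the first pattern that matches the path."""
    for pattern, controls in POLICY:
        if fnmatchcase(path, pattern):
            return controls
    raise LookupError(f"no policy matches {path!r}")


def response_headers(path):
    """Headers to add to the response for this path (may be empty)."""
    controls = controls_for(path)
    headers = {}
    if controls["x_robots_tag"]:
        headers["X-Robots-Tag"] = controls["x_robots_tag"]
    return headers


if __name__ == "__main__":
    for path in ["/portal/grades", "/policies/drafts/2025", "/courses/bsn"]:
        print(path, controls_for(path)["require_auth"], response_headers(path))
```

Note that pages carrying noindex should stay crawlable in robots.txt; a crawler that is blocked from fetching a page never sees the header.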

Accessibility and performance tune-ups pay off across channels. WCAG 2.2 AA and mobile-first improvements make content easier for all users to consume and for AI systems to parse. EDU sector guidance from EAB emphasizes question-driven content, snippet-friendly formatting, topic clusters, and routine audits, as summarized in EAB’s higher ed SEO checklist (2025). Pair that with internal behavior insights—for example, Geneo’s AI Search User Behavior 2025—to sharpen your editorial plan.

Pitfalls and quick wins

- Don’t mark up hidden content with FAQPage. Use visible, reader-first Q&A.

- Don’t rely on robots.txt alone to prevent indexing; use page-level noindex/nosnippet where needed, and keep those pages crawlable so the directive can actually be read.

- Do publish faculty Person pages with clear affiliations and sameAs links; these are easy wins for entity clarity.

- Do add concise answer blocks near the top of program/admissions pages; they improve scannability and citation likelihood.

- Do maintain accurate dates for events and deadlines using Event markup; stale dates erode trust.
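For the last point, a small check can catch stale dates before a model quotes them. This sketch builds a trimmed Event object with an ISO 8601 `startDate` and flags events that have already passed. `event_jsonld` and `stale_events` are hypothetical helpers, the sample event is invented, and a production Event would carry more properties (location, for instance) than shown here.

```python
from datetime import date, datetime, timedelta, timezone


def event_jsonld(name, start, url):
    """Build a minimal schema.org Event with an ISO 8601 start date."""
    if start.tzinfo is None:
        # Require an explicit offset so the date is unambiguous for readers
        # and parsers in other time zones.
        raise ValueError("start must be timezone-aware")
    return {
        "@context": "https://schema.org",
        "@type": "Event",
        "name": name,
        "startDate": start.isoformat(),
        "url": url,
    }


def stale_events(events, today):
    """Names of events whose start date is before the given day."""
    return [
        e["name"]
        for e in events
        if datetime.fromisoformat(e["startDate"]).date() < today
    ]


if __name__ == "__main__":
    central = timezone(timedelta(hours=-5))
    open_house = event_jsonld(
        "BSN open house",
        datetime(2025, 10, 15, 18, 0, tzinfo=central),
        "https://www.example.edu/events/bsn-open-house",
    )
    print(open_house["startDate"])
    print(stale_events([open_house], today=date.today()))
```

Running a check like this as part of the quarterly audit gives content owners a concrete list of pages to update or retire.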

Next steps: build an audit cadence and keep improving

Set a quarterly cadence: revisit accessibility and structured data, re-baseline inclusion/citation share, and refresh content where answers have drifted or policies changed. Keep governance aligned—privacy notices, consent management, and ADA remediation are not one-and-done tasks. If your team needs a measurement scaffold, consider adopting an AI visibility dashboard and KPI definitions shared with leadership; it saves time and avoids “dueling metrics.”

The takeaway is simple: be the most reliable, scannable source for the queries you care about, and make it effortless for both people and AI systems to quote you. Ready to tighten your 90-day plan? Let’s dig in and make your next round of content the one models reach for first.