Content Quality Scores and Their Impact on GEO

Learn what content quality scores really mean for GEO, how AI engines pick sources, and which quality signals boost search and citation odds.

There isn’t a single, official “content quality score” you can optimize to earn citations in AI answers. That can feel unsettling until you realize that quality is a system of signals, not a secret meter. In Generative Engine Optimization (GEO), the practical goal is simple: create pages that AI systems understand, trust, and want to cite.

If you’re new to the acronyms swirling around GEO and AI search, this quick primer will help: see our explainer on GEO, GSVO, GSO, AIO, and LLMO.

What “content quality scores” are (and are not)

“Content quality scores” is shorthand for the bundle of signals search and AI systems use to assess your page: experience and expertise, clarity and coverage, technical eligibility, freshness, and more. It’s not a public, single-number metric in organic search or AI features.

Two important clarifications:

- Google does not publish a holistic page “quality score” for organic search or AI Overviews. Their guidance emphasizes helpful, people-first content and E-E-A-T—Experience, Expertise, Authoritativeness, Trust—rather than any one metric. See Google’s advice on creating helpful content and E‑E‑A‑T.

- Don’t confuse this with Google Ads’ Quality Score. That’s an ads-only 1–10 diagnostic for expected CTR, ad relevance, and landing-page experience, and it has nothing to do with organic ranking or AI Overview inclusion. Reference: Google Ads Quality Score.

If there’s no master score, how do you know you’re getting better? By tracking whether AI engines actually cite you more often, with neutral-to-positive sentiment, across the queries that matter.

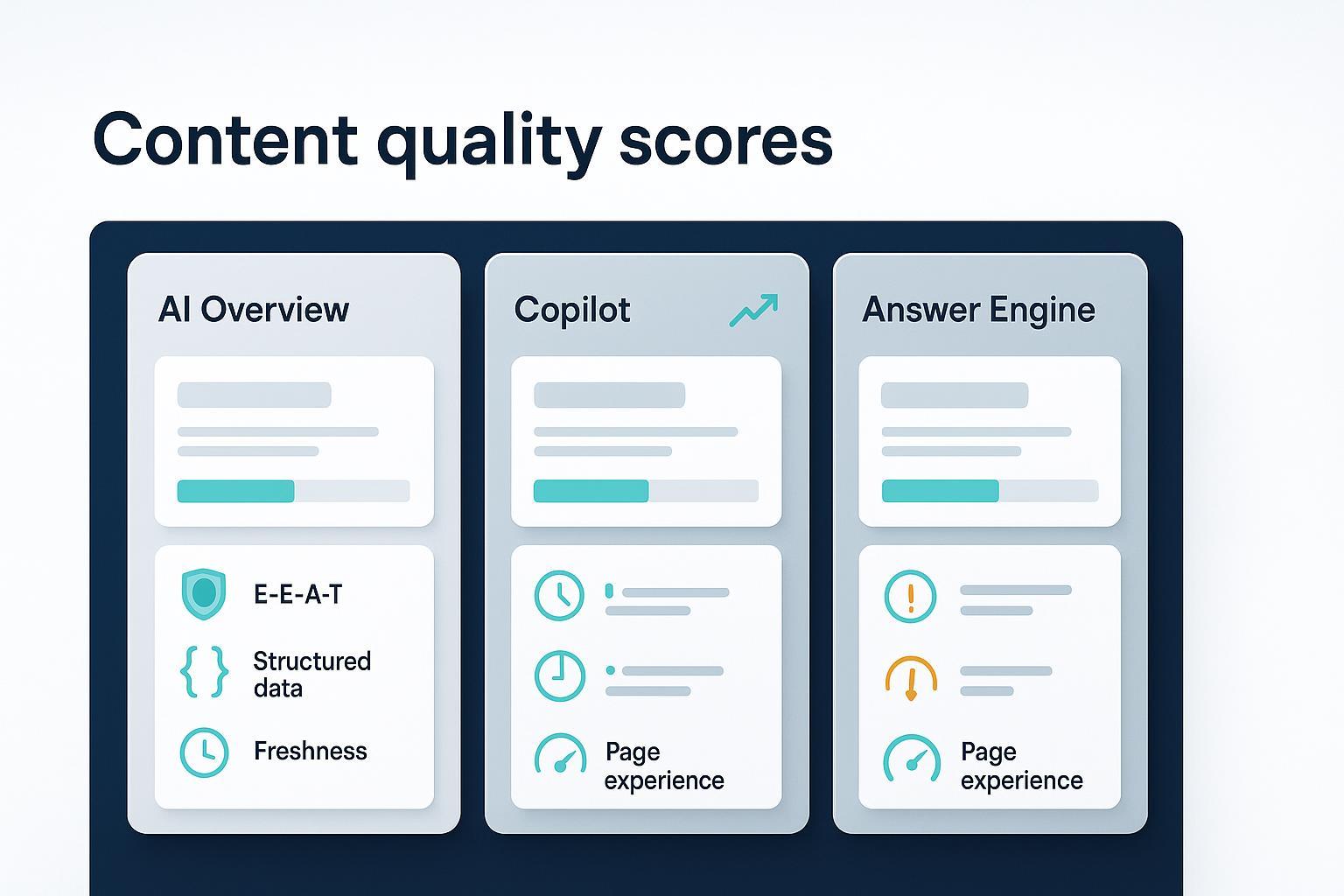

How AI engines pick sources

Different engines surface and attribute sources in slightly different ways, but the through-line is consistent: discoverable, helpful pages that machines can parse and humans can trust.

- Google AI Overviews: Google describes AI Overviews as generated by models grounded in its Search systems and accompanied by supporting links. Success follows Search fundamentals: helpful content, clear entities and structure, and technical eligibility for discovery and indexing. See Google’s “AI features and your website.”

- Microsoft Copilot/Bing: Copilot presents linked citations for answers that come from web search, making provenance visible. In enterprise scenarios, admins can designate knowledge sources and apply grounding and retrieval safeguards. See Microsoft Learn’s page on Copilot privacy and protections.

- Perplexity: Perplexity behaves like an answer engine, blending live retrieval with model reasoning and showing citations inline so users can verify claims. This is documented as product behavior rather than formal ranking policy. See “How does Perplexity work?” in Perplexity’s Help Center.

The takeaway: there’s no “AI inclusion switch.” Eligibility rises when content is helpful, unambiguous at the entity level, technically accessible, and supported by credible sourcing.

Signals that move the needle for GEO

The following signals consistently align with being selected and cited in AI answers. Think of them as a system you tune over time.

| Signal | Why it matters for AI answers | Practical moves |
|---|---|---|
| E‑E‑A‑T and authorship transparency | Engines prefer sources that demonstrate experience, credentials, and a trustworthy editorial context. | Publish author bios with credentials, add an editorial policy, and maintain robust About/Contact pages. Cite primary sources. See Google’s helpful content & E‑E‑A‑T guidance for alignment. |
| Source‑backed claims | Verifiable, cited claims are easier for AI to trust and summarize without distortion. | Link to original research, standards, or official docs within the body. Use descriptive anchors and avoid link stuffing. |
| Structured data and entity clarity | Clear entities help models map your page to the right topics and organizations/people. | Add Organization/ProfilePage schema and sameAs links; use supported structured data types. Keep canonical URLs stable. |
| Freshness and update cadence | For time‑sensitive questions, engines often favor up‑to‑date sources. | Timestamp updates, maintain public changelogs for cornerstone guides, and refresh when the substance changes, not just the date. |
| Page experience and Core Web Vitals | Good UX can be a tie‑breaker when multiple pages are helpful. | Track LCP/INP/CLS and remediate regressions. Reference Google’s Core Web Vitals overview. |
| Technical eligibility and indexability | If it’s hard to crawl, index, or consolidate, you reduce your odds of being selected and cited. | Ensure robots directives, sitemaps, canonicals, and internal linking are clean; validate structured data; fix duplication. |
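To make the “Structured data and entity clarity” row concrete, here is a minimal Python sketch that emits schema.org Organization markup as JSON-LD, the structured data format Google recommends. The organization name, URLs, and profile links are hypothetical placeholders, and the two helper functions are illustrative rather than part of any tool; validate real markup with Google’s Rich Results Test before shipping it.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Build a schema.org Organization entity serialized as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        # sameAs points to official profiles of the same entity elsewhere,
        # which helps systems resolve which organization the page is about.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical values: replace with your organization's real details.
    markup = organization_jsonld(
        name="Example Co",
        url="https://www.example.com/",
        logo="https://www.example.com/logo.png",
        same_as=[
            "https://www.linkedin.com/company/example-co",
            "https://github.com/example-co",
        ],
    )
    print(as_script_tag(markup))
```

The `sameAs` list is what ties the on-site entity to its external profiles, so keep it consistent with the names used on those profiles.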

A quick sanity check: Are your most important pages trivially easy for a machine to parse and a human to trust? If not, that’s your starting line.
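Part of that check can be automated. The sketch below uses Python’s standard `urllib.robotparser` to flag key URLs that a site’s robots.txt blocks for particular crawlers. The robots.txt content, URLs, and crawler list are assumptions for illustration; confirm the user-agent tokens each engine documents. Note that `urllib.robotparser` applies the first matching rule rather than Google’s most-specific-rule precedence, so treat it as a quick smoke test, not a definitive verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], agents: list[str]) -> dict[str, list[str]]:
    """For each user agent, list the URLs that robots.txt disallows."""
    parser = RobotFileParser()
    # parse() accepts the file's lines directly, so no network fetch is needed.
    parser.parse(robots_txt.splitlines())
    return {agent: [u for u in urls if not parser.can_fetch(agent, u)] for agent in agents}


if __name__ == "__main__":
    # Hypothetical robots.txt: drafts hidden from everyone, PerplexityBot blocked entirely.
    robots = "User-agent: *\nDisallow: /drafts/\n\nUser-agent: PerplexityBot\nDisallow: /\n"
    key_pages = [
        "https://www.example.com/guides/geo",
        "https://www.example.com/drafts/new-post",
    ]
    agents = ["Googlebot", "Bingbot", "PerplexityBot"]
    for agent, blocked in blocked_urls(robots, key_pages, agents).items():
        print(agent, "blocked:", blocked or "none")
```

In this example, the PerplexityBot group would block every page for that crawler, which is the kind of quiet eligibility problem worth catching before it shows up as missing citations.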

Using third‑party content scores the right way

Many SEO platforms compute proprietary “content scores” based on topical coverage, headings, term usage, and readability. These can be useful diagnostics, but they’re not ranking factors, and they don’t predict AI citation odds on their own.

A 2025 analysis by Surfer reports a weak‑to‑moderate correlation between its score and rankings and cautions against over‑optimization; the nuance matters. See the Surfer Content Score study (2025). Treat such scores as prompts to improve coverage and clarity, then validate progress by whether AI engines cite your pages more often.

Practical framing:

- Use tool scores to spot gaps (missed subtopics, ambiguous terminology, thin sections).

- Decide edits based on user value and credible sources, not just term frequency.

- Measure success by AI citation share and sentiment, not by the proxy score itself.
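Spotting gaps doesn’t require a proprietary score. As a crude, hypothetical illustration, the Python function below checks which expected subtopics a draft never mentions, using case-insensitive whole-phrase matching. The subtopic map is something you would define yourself, and the output is a prompt for editorial judgment, not a target to optimize.

```python
import re


def missing_subtopics(page_text: str, subtopics: dict[str, list[str]]) -> list[str]:
    """Return the subtopics for which none of the listed phrases appear in the text."""
    text = page_text.lower()
    missing = []
    for subtopic, phrases in subtopics.items():
        # Whole-phrase, case-insensitive match; crude by design.
        covered = any(re.search(r"\b" + re.escape(p.lower()) + r"\b", text) for p in phrases)
        if not covered:
            missing.append(subtopic)
    return missing


if __name__ == "__main__":
    draft = "Core Web Vitals include LCP, INP and CLS. Add Organization schema with sameAs links."
    # Hypothetical subtopic map for a GEO guide.
    expected = {
        "page experience": ["core web vitals", "lcp"],
        "structured data": ["schema", "json-ld"],
        "freshness": ["changelog", "last updated"],
    }
    print(missing_subtopics(draft, expected))  # → ['freshness']
```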

A GEO measurement plan you can run this quarter

Instead of chasing a mythical score, track the outcomes that matter for GEO. Two core constructs help: visibility (are you cited?) and sentiment (how are you described?). For background on these ideas, see our primer on AI search KPI frameworks for visibility, sentiment, and conversion.

The KPI set:

- Share of citations in AI answers (by query cluster and by engine).

- Sentiment distribution (positive/neutral/negative) attached to your brand mentions.

- Freshness velocity (days since last meaningful update for each key page).

- E‑E‑A‑T completion rate (bios, sources, schema coverage, sameAs consistency).

- Technical health (CWV pass rate, indexation coverage, structured data validation).

- Entity clarity scorecard (internal): consistency of naming and profiles across site and external properties.
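The first three KPIs reduce to simple arithmetic once answers are logged. The following Python sketch assumes a hypothetical log record per observed answer (engine, query cluster, cited domains, and a sentiment label when your brand is mentioned). It defines share of citations as your citations divided by all citations in those answers; counting the share of answers that cite you at least once is an equally reasonable definition, so pick one and keep it stable.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AnswerLog:
    """One observed AI answer to one tracked query (hypothetical log schema)."""
    engine: str  # e.g. "google_aio", "copilot", "perplexity"
    cluster: str  # query cluster the query belongs to
    cited_domains: list[str] = field(default_factory=list)  # normalized domains, repeats allowed
    brand_sentiment: Optional[str] = None  # "positive", "neutral", "negative", or None if not mentioned


def citation_share(logs: list[AnswerLog], our_domain: str) -> dict[tuple[str, str], float]:
    """Our citations divided by all citations, per (engine, cluster)."""
    ours: Counter = Counter()
    total: Counter = Counter()
    for log in logs:
        key = (log.engine, log.cluster)
        total[key] += len(log.cited_domains)
        ours[key] += log.cited_domains.count(our_domain)
    # Skip groups whose answers cited nothing, to avoid dividing by zero.
    return {key: ours[key] / total[key] for key in total if total[key]}


def sentiment_distribution(logs: list[AnswerLog]) -> dict[str, float]:
    """Fraction of brand mentions carrying each sentiment label."""
    labels = Counter(log.brand_sentiment for log in logs if log.brand_sentiment)
    mentions = sum(labels.values())
    return {label: n / mentions for label, n in labels.items()} if mentions else {}


def days_since_update(last_updated: dict[str, date], today: date) -> dict[str, int]:
    """Freshness: days since each key page's last meaningful update."""
    return {url: (today - updated).days for url, updated in last_updated.items()}


if __name__ == "__main__":
    logs = [
        AnswerLog("perplexity", "geo-basics", ["example.com", "other.org", "example.com"], "positive"),
        AnswerLog("perplexity", "geo-basics", ["other.org"]),
        AnswerLog("copilot", "geo-basics", ["example.com"], "neutral"),
    ]
    print(citation_share(logs, "example.com"))
    print(sentiment_distribution(logs))
    print(days_since_update({"/guides/geo": date(2025, 1, 1)}, date(2025, 1, 31)))
```

Keeping the three results separate per engine and cluster makes it easier to see whether a change helped everywhere or only in one place.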

Practical workflow (neutral example)

Disclosure: Geneo is our product.

Here’s a simple weekly loop you can run to operationalize the plan without fixating on a single score:

- Define query clusters tied to your core topics and the entities you want to be known for.

- Monitor AI answers from Google AI Overviews, Perplexity, and Copilot/Bing for those clusters; log which URLs/brands are cited and in what context. For a sense of what a cross‑engine snapshot looks like, see this example query report on 8K video streaming bandwidth.

- Classify sentiment for each brand mention; tag by engine and by intent (how‑to, definition, comparison).

- Audit E‑E‑A‑T elements and structured data; verify Organization/ProfilePage markup and sameAs links.

- Update pages that lost citations: expand topical coverage, add primary sources, clarify entities, and document changes with visible timestamps or changelog notes.

- Track CWV and indexation weekly; remediate regressions quickly.
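Two steps of that loop lend themselves to small scripts: finding pages that lost citations week over week, and checking Core Web Vitals against Google’s published “good” thresholds (LCP at or under 2.5 s, INP at or under 200 ms, CLS at or under 0.1, assessed at the 75th percentile of page loads). The Python sketch below assumes you already have per-URL citation counts and field CWV values; the function names and sample URLs are hypothetical.

```python
from collections import Counter

# Google's published "good" thresholds, assessed at the 75th percentile of page loads.
LCP_GOOD_SECONDS = 2.5
INP_GOOD_MS = 200
CLS_GOOD = 0.1


def lost_citations(last_week: Counter, this_week: Counter) -> list[tuple[str, int]]:
    """URLs cited less often this week than last week, largest drop first."""
    drops = [
        (url, last_week[url] - this_week[url])
        for url in last_week
        if this_week[url] < last_week[url]  # a URL missing this week counts as 0 citations
    ]
    return sorted(drops, key=lambda item: item[1], reverse=True)


def passes_cwv(lcp_seconds: float, inp_ms: float, cls: float) -> bool:
    """True only if all three p75 field values are in the 'good' range."""
    return lcp_seconds <= LCP_GOOD_SECONDS and inp_ms <= INP_GOOD_MS and cls <= CLS_GOOD


if __name__ == "__main__":
    # Hypothetical citation counts per URL, aggregated across engines.
    last_week = Counter({"/guides/geo": 5, "/blog/cwv": 3, "/about": 1})
    this_week = Counter({"/guides/geo": 2, "/blog/cwv": 3})
    print(lost_citations(last_week, this_week))  # → [('/guides/geo', 3), ('/about', 1)]
    print(passes_cwv(lcp_seconds=2.1, inp_ms=180, cls=0.05))  # → True
```

URLs in the first list are the natural candidates for the update step: expand coverage, add primary sources, and record the change with a visible timestamp.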

This closes the loop: diagnose, improve, and validate by whether engines cite you more often and more positively.

Common pitfalls and a better path

- Treating a third‑party “content score” as a goal in itself, leading to term stuffing and bland writing.

- Ignoring entity clarity and structured data, so AI systems can’t confidently map your content to the right concepts or organizations.

- Neglecting freshness and technical eligibility, which quietly erodes your chance of being included in competitive answer sets.

The better path is disciplined and simple: write genuinely helpful content, make it machine‑readable and source‑backed, keep it current, and measure success by share of citations and sentiment across engines, not just in the traditional SERP.

Wrap‑up

There’s no magic dial labeled “content quality score.” There is, however, a reliable system: helpful content, clear entities and structure, solid UX, and clean technical foundations—measured by whether AI engines actually cite you. Think of it this way: if a researcher had to justify quoting your page, would they find clear authorship, credible sources, and up‑to‑date facts? If yes, you’re on the right track for GEO.