Citation tracking for Google AI Overviews: complete how-to

Set up AI Overview citation tracking with baselines, live-SERP validation, and exports for clear reporting—without guesswork.

If you’ve noticed Google’s AI Overviews showing up for your non-branded queries, you’ve probably had the same two reactions:

“This is going to hit CTR.”

“How do I measure whether we’re being cited—without turning my week into screenshots and guesswork?”

This guide gives you a complete, repeatable workflow for citation tracking for Google AI Overviews—from setup and baselining to live-SERP validation and exports you can actually report.

You’ll also see how to track Google AI Overviews citations separately from brand mentions, so your reporting doesn’t blur two different signals.

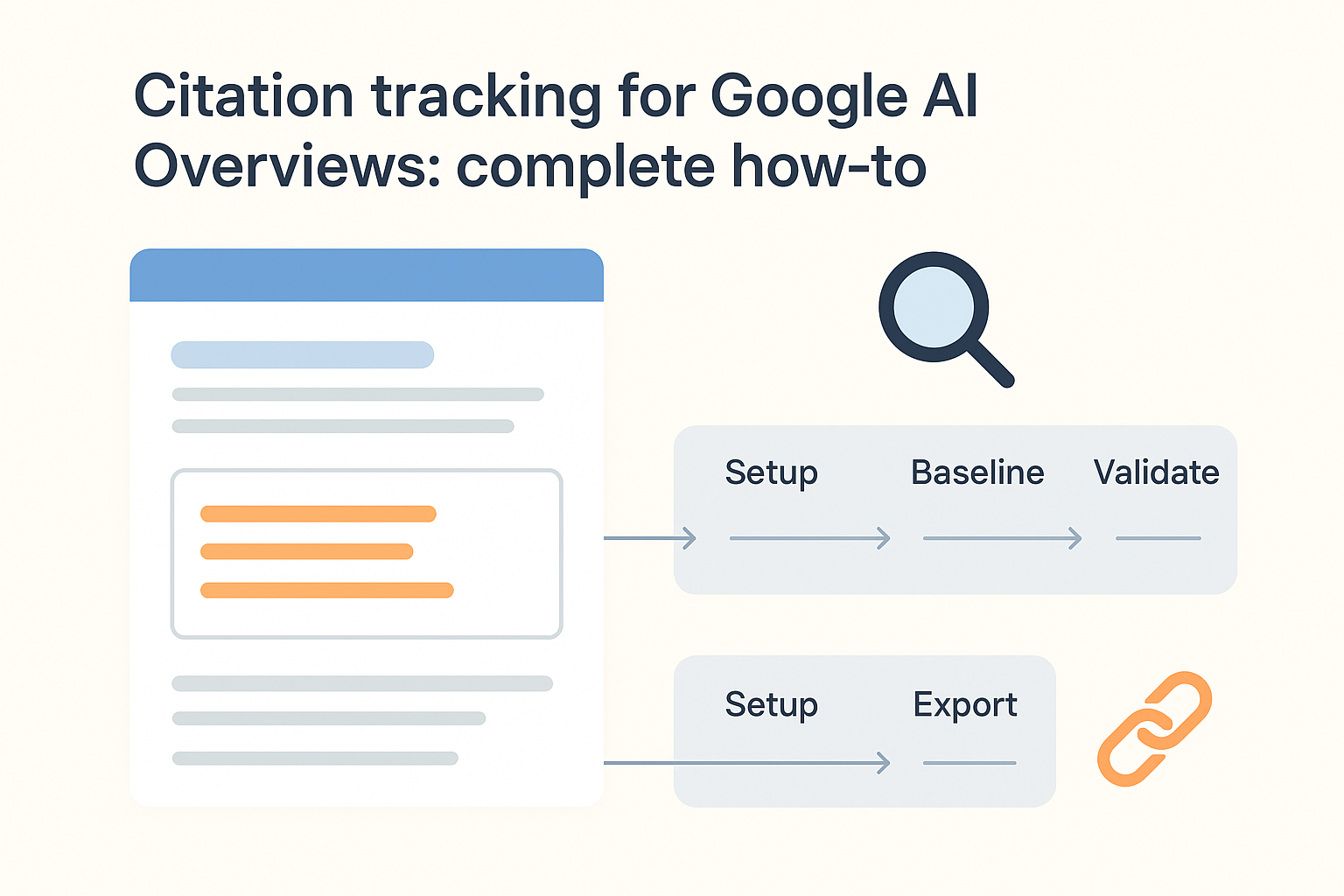

Citation tracking for Google AI Overviews: the workflow at a glance

For most teams, the win isn’t perfection—it’s a repeatable system you can run weekly, explain to stakeholders, and validate against live SERPs.

At a high level, you’ll:

Set up a consistent tracking context (US, device, language) and a keyword set.

Baseline what you see today (AIO present, citations, mentions).

Validate weekly with live SERP spot checks so volatility doesn’t look like “data errors.”

Export a dataset you can trend over time and turn into reporting.

This is essentially AI Overview SERP monitoring with an extra layer of rigor: you’re tracking the AI Overview itself (presence + variant changes) and the sources it cites.

What you’re tracking (so your data doesn’t lie)

Before you set up anything, align on definitions. Otherwise your charts will look “up and to the right” while your visibility is actually flat.

AI Overview present: The SERP shows an AI Overview for a query in your target location/device context.

Citation: An AI Overview links to a specific source (a page or domain). This is what most teams mean by “we got cited.”

Mention: Your brand is named in the AI Overview text without a link.

If your goal is to track AI Overviews mentions vs citations, treat them as two separate columns and report them separately.

Tracked event: One query checked at one point in time, with the observed AI Overview state (present/not present) and its citations.

Pro Tip: Track citations and mentions separately. Mentions can indicate awareness; citations indicate source authority and referral opportunity.

Prerequisites (15 minutes)

You don’t need an enterprise stack to start. You need consistency.

A keyword set (start with 25–50 queries)

Focus on informational, non-branded queries where an AI Overview is likely.

Include a mix of: core service terms, problems you solve, and “how to” questions.

A fixed tracking context

Target country: US

Device: desktop or mobile (pick one for baselining)

Language: English

A place to store your observations

A spreadsheet works fine initially.

If you have data ops support, use a database table.

Step 1: Build your baseline dataset

Your baseline answers one question: “If we do nothing, what’s our current citation/mention footprint?”

1.1 Create a keyword set you can defend

Use three buckets (you’ll thank yourself later when you segment results):

Money queries: high-intent service queries (even if AI Overviews appear less often)

Problem queries: “why” / “how” / “best way to” questions tied to your services

Entity queries: your brand + close competitors (to see how AIO frames the category)

Aim for 25–50 to start. You can scale once the workflow is stable.

1.2 Decide what you’ll capture for each check

Minimum viable fields:

date_time_utc

query

country (US)

device (desktop or mobile)

aoi_present (yes/no)

brand_mentioned (yes/no)

cited_domains (list)

cited_urls (list if available)

notes (anything odd: local pack, heavy personalization, no citations shown)

If you want something your future self will trust, add:

serp_screenshot_url or serp_html_archive_id

aoi_variant_id (a simple hash you generate from the visible AIO text)
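To make the field list concrete, here is a minimal Python sketch of one tracked event plus the variant hash. The field names come from the lists above; the `TrackedEvent` class, the `make_variant_id` helper, the whitespace and case normalization, and the 12-character truncation are illustrative assumptions, not a required format.

```python
import hashlib
import re
from dataclasses import dataclass, field
from datetime import datetime, timezone


def make_variant_id(aio_text: str) -> str:
    """Return a short, stable hash of the visible AI Overview text."""
    # Assumption: collapse whitespace and lowercase so purely cosmetic
    # differences do not register as a new variant.
    normalized = re.sub(r"\s+", " ", aio_text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()[:12]


def utc_now() -> str:
    """Current time as an ISO 8601 string in UTC."""
    return datetime.now(timezone.utc).isoformat(timespec="seconds")


@dataclass
class TrackedEvent:
    """One query checked at one point in time."""
    query: str
    device: str                      # "desktop" or "mobile"
    aoi_present: bool
    brand_mentioned: bool = False
    cited_domains: list[str] = field(default_factory=list)
    cited_urls: list[str] = field(default_factory=list)
    notes: str = ""
    country: str = "US"
    date_time_utc: str = field(default_factory=utc_now)
    aoi_variant_id: str = ""         # filled from make_variant_id()


# Example with made-up values.
event = TrackedEvent(
    query="how to fix a leaking tap",
    device="desktop",
    aoi_present=True,
    cited_domains=["example.com"],
    aoi_variant_id=make_variant_id("Turn off the water supply, then ..."),
)
```

Two checks that return the same `aoi_variant_id` saw the same AI Overview text, which makes it easier to tell a citation change from a full variant change.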

1.3 Run the baseline checks (manual, but controlled)

Do this for your first baseline so you understand the shape of the SERP. Automation without understanding tends to produce confident nonsense.

Practical guardrails:

Use a clean browser profile.

Use the same location settings each time.

Don’t “fix” results by clicking around—your goal is observation, not interaction.

As you do this, remember Google says AI features may use a query fan-out approach (multiple related searches across subtopics) to build responses, which is one reason citations can vary even when your query doesn’t change. See Google Search Central’s AI features documentation (2026).

Step 2: Separate signal from noise (mentions, citations, and AI Overviews share of voice)

After your baseline pass, compute three metrics.

2.1 Citation rate

Citation rate = % of tracked events where your domain appears as a cited source.

This is your cleanest “are we a source?” metric.

2.2 Mention rate

Mention rate = % of tracked events where your brand is named in the AIO text.

This can rise before citations do (especially when the model recognizes you as an entity but doesn’t yet treat your content as source material).
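As a rough sketch, both rates can be computed from a list of tracked events. The domain `example.com` and the sample rows are hypothetical. Following the definitions above, the denominator is all tracked events, including checks where no AI Overview appeared.

```python
def rate(events, predicate):
    """Percentage of tracked events for which predicate(event) is true."""
    if not events:
        return 0.0
    hits = sum(1 for e in events if predicate(e))
    return 100.0 * hits / len(events)


OUR_DOMAIN = "example.com"  # hypothetical: replace with your own domain

# Made-up tracked events using the baseline field names.
events = [
    {"query": "q1", "aoi_present": True, "brand_mentioned": True,
     "cited_domains": ["example.com", "other.org"]},
    {"query": "q2", "aoi_present": True, "brand_mentioned": True,
     "cited_domains": ["other.org"]},
    {"query": "q3", "aoi_present": False, "brand_mentioned": False,
     "cited_domains": []},
    {"query": "q4", "aoi_present": True, "brand_mentioned": False,
     "cited_domains": []},
]

citation_rate = rate(events, lambda e: OUR_DOMAIN in e["cited_domains"])
mention_rate = rate(events, lambda e: e["brand_mentioned"])

print(f"Citation rate: {citation_rate:.1f}%")  # Citation rate: 25.0%
print(f"Mention rate: {mention_rate:.1f}%")    # Mention rate: 50.0%
```

If you would rather measure against only the checks where an AI Overview appeared, filter `events` on `aoi_present` first and say so in the report, since the two denominators give different numbers.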

2.3 Share of citations (lightweight share of voice)

For each query, count:

total cited domains

how often you appear vs. top 3 competitors

You’re not trying to prove causality. You’re trying to make visibility measurable.
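One way to turn those counts into a single number is sketched below: each domain's share of all citation slots observed across your tracked events. This is one interpretation of "share of citations", not a standard formula, and the domain names are placeholders.

```python
from collections import Counter


def citation_share(events, domains):
    """Share of all observed citation slots held by each domain in `domains`."""
    counts = Counter()
    total_slots = 0
    for event in events:
        # Count a domain once per event, even if several of its URLs are cited.
        unique_domains = set(event["cited_domains"])
        total_slots += len(unique_domains)
        counts.update(unique_domains)
    if total_slots == 0:
        return {d: 0.0 for d in domains}
    return {d: counts[d] / total_slots for d in domains}


# Made-up events: you (example.com) versus two competitors.
events = [
    {"query": "q1", "cited_domains": ["example.com", "competitor-a.com"]},
    {"query": "q2", "cited_domains": ["competitor-a.com", "competitor-b.com"]},
    {"query": "q3", "cited_domains": []},
]

shares = citation_share(
    events, ["example.com", "competitor-a.com", "competitor-b.com"]
)
for domain, share in shares.items():
    print(f"{domain}: {share:.0%}")
```

Run it per query bucket as well as overall if you want to see where competitors hold more of the citations.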

Key Takeaway: For SMB reporting, directional trend + repeatable methodology beats pretending you have perfect attribution.

Step 3: Validate against live SERPs (so your tracker stays honest)

Tracking breaks in two predictable ways:

the SERP changes (layout, citations UI, AIO eligibility)

your method changes (location, device, personalization)

Validation is how you catch both.

3.1 Use a sampling plan

Every week, pick 5–10 queries from your set.

Re-check them manually.

Compare against what your dataset says.

If you’re seeing a mismatch, don’t “average it out.” Diagnose it.
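The weekly sample and the comparison can be scripted in a few lines. In this sketch, `pick_validation_sample` and `find_mismatches` are hypothetical helper names, and `recorded` and `observed` are assumed to be dictionaries keyed by query that hold the same fields as your dataset.

```python
import random


def pick_validation_sample(queries, k=5, seed=None):
    """Pick up to k distinct queries to re-check by hand this week."""
    rng = random.Random(seed)  # pass a seed to make the draw reproducible
    return rng.sample(queries, min(k, len(queries)))


def find_mismatches(recorded, observed, fields=("aoi_present", "cited_domains")):
    """List (query, field, recorded_value, observed_value) for every difference."""
    mismatches = []
    for query, live in observed.items():
        stored = recorded.get(query)
        if stored is None:
            mismatches.append((query, "missing_from_dataset", None, None))
            continue
        for name in fields:
            old, new = stored[name], live[name]
            if name == "cited_domains":
                # Compare as sets: citation order is not treated as a mismatch.
                old, new = sorted(set(old)), sorted(set(new))
            if old != new:
                mismatches.append((query, name, old, new))
    return mismatches


# Made-up example: one query matches, one does not.
recorded = {
    "q1": {"aoi_present": True, "cited_domains": ["a.com", "b.com"]},
    "q2": {"aoi_present": False, "cited_domains": []},
}
observed = {
    "q1": {"aoi_present": True, "cited_domains": ["b.com", "a.com"]},
    "q2": {"aoi_present": True, "cited_domains": ["c.com"]},
}
for row in find_mismatches(recorded, observed):
    print(row)
```

Each row it prints is something to diagnose (context drift, a layout change, or ordinary volatility) rather than a number to fold into an average.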

3.2 Use a simple validation checklist

For each validation query, confirm:

Location and device match your baseline.

AI Overview is present (or not) as recorded.

Citations are visible in the UI you’re checking.

If citations differ, note whether the AIO content also differs (it often will).

If you want a canonical reminder that AI Overviews are built to be additive and can show a wider range of sources, Google’s own guidance on AI search experiences is a good north star: Top ways to succeed in Google’s AI experiences (2025).

3.3 What “good” validation looks like

Your tracked aoi_present matches reality most of the time.

When citations shift, you have enough context (timestamp + notes + archive) to treat it as volatility, not a data bug.

Step 4: Choose your tooling path (neutral options, from DIY to full coverage)

You can do this four ways. The right choice depends on how many keywords you track and how often stakeholders ask for updates.

Option A: Manual tracking (fastest to start)

Best for: 25–50 queries, weekly cadence.

Spreadsheet baseline + weekly sampling validation

Screenshot archive for “proof” when stakeholders ask

Tradeoff: Doesn’t scale. Human time becomes the bottleneck.

Option B: SEO suites and rank trackers

Best for: teams already paying for an SEO platform.

Some platforms increasingly surface AI Overview presence and related SERP features.

Useful for: query set management and basic reporting.

Tradeoff: Feature coverage varies. Confirm exactly what’s captured (AIO presence vs. citations vs. cited URLs).

Option C: SERP capture / scraping tools

Best for: scaling to hundreds+ queries with consistent archiving.

Capture SERP HTML or structured extracts.

Build your own parsing logic (or pay for it).

Tradeoff: Engineering + maintenance overhead. Parsers tend to break when the SERP layout or citations UI changes, so budget time for monitoring and re-validation.

Option D: GEO/AEO monitoring tools

Best for: ongoing cross-engine visibility (AIO + other answer engines) and exports.

These tools typically focus on: citations, mentions, competitive benchmarks, and reporting workflows.

If you want an overview of categories and evaluation criteria, see AI Overview tracking tools (internal resource).

Tradeoff: You’re buying convenience. Validate with live SERPs and spot checks.

Step 5: AI Overviews reporting / export: a dataset you can reuse

The most common failure mode: teams track citations, but can’t answer basic questions like “which queries moved?” or “which competitors replaced us?”

5.1 Use a simple export schema

Whether you export from a tool or your own sheet, keep the schema stable:

date

query

country

device

aoi_present

brand_mentioned

cited_domain_1...n (or a JSON list)

cited_url_1...n (if available)

top_competitor_domains (optional)
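If you export from your own sheet or script, a sketch like the following keeps the column order fixed and uses the JSON-list option for the citation columns. The `export_csv` helper, the yes/no encoding for booleans, and the sample row are assumptions for illustration.

```python
import csv
import io
import json

# Fixed column order: changing it later breaks week-over-week comparisons.
FIELDS = [
    "date", "query", "country", "device", "aoi_present", "brand_mentioned",
    "cited_domains", "cited_urls", "top_competitor_domains",
]


def export_csv(events, out):
    """Write tracked events to `out` (any text file object) using FIELDS."""
    writer = csv.DictWriter(out, fieldnames=FIELDS)
    writer.writeheader()
    for event in events:
        row = {}
        for name in FIELDS:
            value = event.get(name, "")
            if isinstance(value, bool):
                value = "yes" if value else "no"
            elif isinstance(value, (list, tuple)):
                # Store lists as JSON so the column count never changes.
                value = json.dumps(list(value))
            row[name] = value
        writer.writerow(row)


# Made-up row; write to an in-memory buffer instead of a file for the demo.
buffer = io.StringIO()
export_csv(
    [{
        "date": "2025-01-06", "query": "q1", "country": "US",
        "device": "desktop", "aoi_present": True, "brand_mentioned": False,
        "cited_domains": ["example.com", "other.org"], "cited_urls": [],
    }],
    buffer,
)
print(buffer.getvalue())
```

To write a real file, open it with `open(path, "w", newline="", encoding="utf-8")` and pass the file object as `out`.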

5.2 Produce two reports (SMB-friendly)

Weekly pulse (1 page)

AI Overview presence rate (in your keyword set)

Citation rate

Mention rate

Top cited domains (you + competitors)

Notes: what changed, what you validated

Monthly trend (executive-ready)

4-week trend lines

Query segments: money vs problem vs entity queries

“Wins and losses”: queries where you gained or lost citations
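The "wins and losses" list can be derived by comparing two periods of tracked events. This is a minimal sketch: `wins_and_losses` is a hypothetical helper, and it counts a query as cited in a period if your domain was cited in at least one check during that period.

```python
def wins_and_losses(previous, current, domain):
    """Return (wins, losses): queries where `domain` gained or lost a citation."""
    prev_cited = {e["query"] for e in previous if domain in e["cited_domains"]}
    curr_cited = {e["query"] for e in current if domain in e["cited_domains"]}
    # Only compare queries checked in both periods, so a query that was
    # newly added or dropped does not look like a win or a loss.
    in_both = {e["query"] for e in previous} & {e["query"] for e in current}
    wins = sorted((curr_cited - prev_cited) & in_both)
    losses = sorted((prev_cited - curr_cited) & in_both)
    return wins, losses


# Made-up data for two periods.
last_month = [
    {"query": "q1", "cited_domains": ["example.com"]},
    {"query": "q2", "cited_domains": ["other.org"]},
    {"query": "q3", "cited_domains": ["example.com", "other.org"]},
]
this_month = [
    {"query": "q1", "cited_domains": ["other.org"]},
    {"query": "q2", "cited_domains": ["example.com"]},
    {"query": "q3", "cited_domains": ["example.com"]},
    {"query": "q4", "cited_domains": ["example.com"]},
]

wins, losses = wins_and_losses(last_month, this_month, "example.com")
print("Gained citations:", wins)    # Gained citations: ['q2']
print("Lost citations:", losses)    # Lost citations: ['q1']
```

Pair each loss with the domains cited in the current period to answer "which competitors replaced us?"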

If you need KPI ideas that map cleanly to stakeholder conversations, use this as a reference: AI visibility KPIs best practices (internal resource).

Step 6: Troubleshooting (common reasons your tracking looks wrong)

You tracked “citations,” but you’re really tracking “visibility”

Fix: separate AIO presence, mentions, and citations.

Your baseline doesn’t match next week

Fix: tighten your context (location/device), add timestamps, and validate with a weekly sample.

You’re not seeing AI Overviews at all

Fix: expand to more informational queries, longer-tail questions, and problem framing. Keep the set US-focused if that’s your target market.

Stakeholders demand click attribution

Fix: be explicit that GSC and GA4 don’t cleanly isolate AIO citation clicks. Use visibility metrics and a repeatable methodology instead. For a broader perspective on click behavior shifts when AI summaries appear, Pew has relevant analysis: Google users are less likely to click when an AI summary appears (2025).

⚠️ Warning: Treat AI Overviews as a changing interface, not a stable “SERP feature.” Your tracking system needs timestamps and validation, not just keyword lists.

Next steps

If you’re starting from zero, run a 2-week pilot:

Baseline 25–50 queries.

Validate weekly with a 5–10 query sample.

Export weekly; start monthly reporting once you have four weeks of data.

Only then decide whether to automate.

If you want a step-by-step companion for improving your odds of being cited (not just tracking), start here: How to get content cited in AI-generated search answers (internal resource).

And if you want a neutral, light-touch way to operationalize monitoring across multiple answer engines, you can use Geneo as one option—alongside your existing SEO suite and a live-SERP validation routine.