How to Build Content That AI Wants to Recommend (2025 Playbook)

Discover 2025’s top strategies for building AI-recommended content: answer-first structuring, schema, entity identity, technical hygiene, and multi-platform AI citation optimization.

Traffic patterns are shifting. Independent studies show fewer clicks when AI summaries appear, with publishers reporting meaningful drops in organic CTR on AI‑Overview queries, while Google reports greater overall usage on those same queries. Both can be true: more lookups, fewer downstream clicks. The opportunity is to be the source those AI experiences cite and recommend.

This playbook gives senior marketers and SEO leaders a practical build, from answer‑first drafting through schema, technical readiness, platform nuances, measurement, and governance, so your pages are easier for AI systems to extract, verify, and link to.

What “AI‑recommended” content looks like in 2025

AI experiences prefer content that is easy to quote, verify, and attribute. Think of your page as a set of clean, labeled “tiles” that a model can pick up and place into an answer.

- Answer‑first structure: Lead sections with a crisp, self‑contained answer, then support it with steps, evidence, or a compact table. Microsoft’s guidance for inclusion in grounded answers emphasizes clear, snippable formatting and freshness, not walls of prose (see Microsoft Advertising’s 2025 post on optimizing for AI answers).

- Entity clarity: Define the people, organizations, products, and concepts you reference. Tie them to public knowledge graph entries when accurate, and reflect that identity in JSON‑LD.

- Verifiable sourcing and authorship: Use named authors with bios, dates, and primary sources. Google’s 2025 Search Central docs on AI features reiterate technical readiness plus helpful, accurate content as prerequisites.

- Accessibility and multimedia: Provide alt text, transcripts, and Video/Image metadata. AI systems benefit when meaning is available as machine‑readable text.

Why does this work? Passage rankers and answer engines look for compact, high‑confidence snippets. If your content already reads like a trustworthy answer, you raise the odds of being cited.

The 2025 build: from topic to passage‑level answers

Here’s the simple logic that keeps teams aligned: intent → entities → questions → passages. Start with the primary intent, list the key entities, enumerate the questions a user actually asks, and draft one answer‑first passage per question.
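One way to keep that chain visible during planning is a small data model. The Python sketch below is illustrative only: `TopicBrief`, `Passage`, and `unanswered` are hypothetical names, not part of any tool mentioned here, and the sample values are made up.

```python
from dataclasses import dataclass, field


@dataclass
class Passage:
    question: str
    answer: str        # the 1-2 sentence answer that leads the section
    support: str = ""  # steps, evidence, or a compact table


@dataclass
class TopicBrief:
    intent: str
    entities: list[str]
    questions: list[str]
    passages: list[Passage] = field(default_factory=list)

    def unanswered(self) -> list[str]:
        """Return questions that do not yet have a non-empty answer passage."""
        answered = {p.question for p in self.passages if p.answer.strip()}
        return [q for q in self.questions if q not in answered]


# Hypothetical brief used only to show the shape of the data.
brief = TopicBrief(
    intent="Learn how to add JSON-LD to a blog post",
    entities=["JSON-LD", "Article schema"],
    questions=["What is JSON-LD?", "Where does the script tag go?"],
)
brief.passages.append(
    Passage("What is JSON-LD?", "JSON-LD is a JSON-based format for structured data.")
)
print(brief.unanswered())
```

Running `unanswered()` before a draft goes to review is a cheap way to confirm that every listed question has its own answer‑first passage.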

Use this fast checklist when drafting:

- State the answer in 1–2 sentences at the top of each section, then provide the why/how.

- Embed one scannable element per section (a short list, a small table, or a diagram caption). Don’t overdo it.

- Name entities consistently (brand, product, people) and use the same terms in headings, body, and schema.

- Cite one or two primary sources for claims that matter. Keep anchors descriptive.

- Close with a short “what to do next” line that tees up internal linking and related tasks.

Think of each section as a tile. If it can stand alone, it can be lifted and cited.

Schema and entity identity you can’t skip

Structured data helps machines understand who you are, what the page is about, and how pieces relate. In 2025, prioritize JSON‑LD for:

- Organization and Person: Give your brand and authors stable @id values and link sameAs to authoritative profiles (website, LinkedIn, Wikidata/Wikipedia when accurate).

- Article/NewsArticle, HowTo, and FAQPage: Use Article widely; apply HowTo when true step‑by‑step instruction exists; be cautious with FAQPage, because since 2023 Google has limited FAQ rich results to well‑known, authoritative government and health sites.

- Product, Review, and VideoObject: If you cover offerings or embed video, mark them up fully.

- BreadcrumbList: Reinforce hierarchy and context across your site.

Common pitfalls to avoid:

- Missing @id and inconsistent entity names across pages.

- Client‑only rendering of JSON‑LD that fails for crawlers.

- Ineligible use of FAQPage markup just to “get a box.” Eligibility has narrowed; use where it genuinely fits.
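To make the @id and sameAs advice concrete, here is a minimal Python sketch that builds an Organization, Person, and Article graph and serializes it as a JSON‑LD script tag on the server. Everything specific in it is an assumption for illustration: the `example.com` domain, the "Example Co" name, the LinkedIn URL, and the helper names `build_article_graph` and `to_script_tag`. Validate real output with a structured data testing tool before shipping.

```python
import json

# Hypothetical site root; replace with your own canonical origin.
SITE = "https://www.example.com"


def build_article_graph(headline, author_name, published, modified):
    """Build a JSON-LD graph in which the Article points at stable @id values."""
    org_id = f"{SITE}/#organization"
    author_slug = author_name.lower().replace(" ", "-")
    author_id = f"{SITE}/authors/{author_slug}#person"

    organization = {
        "@type": "Organization",
        "@id": org_id,
        "name": "Example Co",  # hypothetical brand name
        "url": SITE,
        "sameAs": ["https://www.linkedin.com/company/example-co"],  # hypothetical
    }
    person = {
        "@type": "Person",
        "@id": author_id,
        "name": author_name,
        "worksFor": {"@id": org_id},
    }
    article = {
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,
        "author": {"@id": author_id},
        "publisher": {"@id": org_id},
    }
    return {"@context": "https://schema.org", "@graph": [organization, person, article]}


def to_script_tag(graph):
    """Serialize for inclusion in server-rendered HTML."""
    # Escape "</" so a string value cannot close the script element early.
    payload = json.dumps(graph, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


graph = build_article_graph("Example headline", "Jane Doe", "2025-01-15", "2025-03-01")
print(to_script_tag(graph))
```

Because the Article refers to the author and publisher by @id rather than repeating their details, the same identifiers can be reused on every page, which addresses the first pitfall above. Emitting the tag in the server response rather than injecting it client‑side addresses the second.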

For technical references and current eligibility notes, see Google’s living documentation on AI features and your website (2025) and the companion post on succeeding in AI search (2025).

Technical readiness still gates inclusion

The best information won’t surface if crawlers can’t reliably access it or if rendering hides your answers.

- Crawlability and indexability: Confirm Googlebot access, proper canonicals, valid sitemaps, and HTTP 200 for primary content.

- Rendering and performance: Avoid burying key content in JavaScript that renders only on the client; server‑render or prerender the passages you want cited. Monitor Core Web Vitals and fix LCP/INP/CLS regressions. A stable, fast page is more reliably parsed and cited.

- Freshness routines: Time‑sensitive pages need an update cadence. Refresh stats, rotate examples, and add recent sources so AI systems can justify including you.

A quick quality gate before publishing: validate schema, test mobile rendering, and confirm the primary question’s answer appears in the first 100–150 words of its section.
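The last part of that gate is easy to automate. The sketch below is one possible check, assuming you can extract each section as plain text and that you know a short phrase from the intended answer. The function names and the 150‑word default are illustrative choices, not a published threshold from any search engine.

```python
import re


def _words(text):
    """Lowercase word tokens with punctuation stripped."""
    return re.findall(r"\w+", text.lower())


def answer_position(section_text, answer_phrase):
    """Return the 1-based word index where answer_phrase starts, or None."""
    words = _words(section_text)
    target = _words(answer_phrase)
    if not target:
        return None
    for i in range(len(words) - len(target) + 1):
        if words[i:i + len(target)] == target:
            return i + 1
    return None


def passes_answer_gate(section_text, answer_phrase, max_words=150):
    """True when the answer phrase begins within the first max_words words."""
    position = answer_position(section_text, answer_phrase)
    return position is not None and position <= max_words


# Hypothetical section text for illustration.
section = "Structured data helps machines understand who you are and what the page covers."
print(passes_answer_gate(section, "structured data helps machines"))
```

This is an exact phrase match, so it will miss paraphrased answers. Treat a failure as a prompt for a human to look at the section, not as a verdict.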

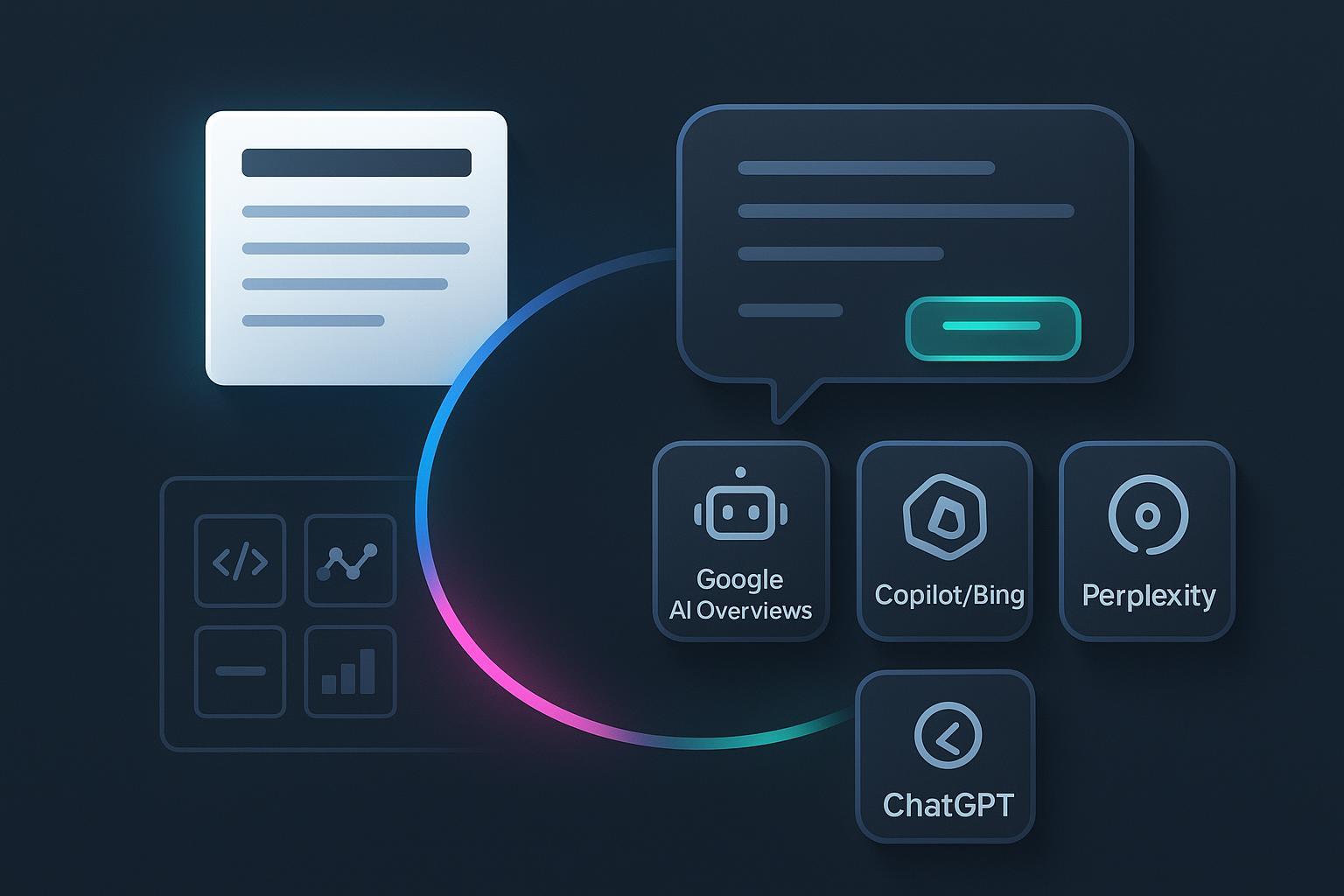

Platform nuances (at a glance)

Two goals unite the platforms: clarity and verifiability. The table highlights practical differences you should account for.

| Platform | What helps you get cited | Notes for 2025 |
|---|---|---|
| Google AI Overviews / AI Mode | Answer‑first passages, robust schema, indexability, fresh evidence | See Google Search Central guidance on AI features (2025). |
| Microsoft Copilot (Bing) | Snippable sections, explicit steps, updated facts | Microsoft’s 2025 guidance emphasizes structure and freshness in grounded answers. |
| ChatGPT with browsing | Clear claims with primary sources; accessible, crawlable pages | OpenAI provides crawler controls; browsing rewards well‑cited, readable content. |
| Perplexity | Concise, source‑backed explanations with transparent citations | The product consistently surfaces inline citations; recency and clarity matter. |

Measurement that won’t mislead you

Chasing single metrics will burn cycles. Instead, track a balanced set that reflects inclusion and impact. If you need definitions and formulas for these, see Geneo’s guide to AI search KPIs and the explainer on AI visibility.

- Inclusion and citation share: How often your domain appears in Google AI Overviews, Copilot answers, and Perplexity responses by topic cluster.

- Sentiment and framing: Are AI systems describing your brand positively, neutrally, or negatively? Is your positioning accurate?

- Assist‑to‑click outcomes: Downstream traffic and conversions attributable to AI answer exposure, even if CTR is lower than classic SERPs.

- Recency and evidence health: Average age of stats, last update timestamps, and the ratio of primary to secondary sources.
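As a rough illustration of the first metric, citation share can be computed as the fraction of observed AI answers in which your domain is cited, grouped by topic cluster and platform. The sketch below assumes you already log observations as `(cluster, platform, cited_domains)` tuples; that record shape, the function name, and the sample data are all hypothetical.

```python
from collections import defaultdict


def citation_share(observations, domain):
    """Share of observed answers citing `domain`, keyed by (cluster, platform)."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for cluster, platform, cited_domains in observations:
        key = (cluster, platform)
        totals[key] += 1
        if domain in cited_domains:
            hits[key] += 1
    return {key: hits[key] / totals[key] for key in totals}


# Made-up observations for illustration only.
observations = [
    ("schema", "perplexity", {"example.com", "other.org"}),
    ("schema", "perplexity", {"other.org"}),
    ("schema", "copilot", {"example.com"}),
]
for key, share in sorted(citation_share(observations, "example.com").items()):
    print(key, f"{share:.0%}")
```

With small samples these ratios swing widely, so compare them across several weeks of observations before drawing conclusions.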

Remember the context: independent reporting in 2025 found notable click declines when AI summaries appear, while Google shared that usage rose on those queries. Treat measurements as directional and compare trends over multi‑week windows rather than day to day.

For methodology and KPI selection, read the internal resources:

- AI Search KPI Frameworks for Visibility, Sentiment, Conversion (2025)

- What Is AI Visibility? Brand Exposure in AI Search Explained

Post‑publication workflow (with a neutral tool example)

If you only “publish and pray,” AI visibility decays. You need a loop: monitor → diagnose → iterate.

- Monitor: Track which queries trigger AI answers and whether your pages are cited. Segment by persona and topic.

- Diagnose: When you’re absent, compare your page’s answer placement, schema completeness, recency, and entity clarity against cited competitors.

- Iterate: Promote buried answers to the top of sections, add or correct schema, update facts, and add a compact table or list where extraction would benefit.

Disclosure: Geneo is our product. Here’s how a team might use it in this loop without overclaiming. Create an AI visibility workspace with priority branded and non‑branded queries. Each week, review where your domain appears in Google AI Overviews, Microsoft Copilot citations, and Perplexity answers. For gaps, open the top cited competitor pages and audit: Is their core answer higher on the page? Do they use HowTo or Article schema more completely? Are their stats from the current year? Apply changes to your content, validate JSON‑LD, and recheck inclusion over the next two to four weeks. For deeper methodology and KPIs, see the AI Search KPI Frameworks (2025).

Governance for 2025: crawler controls and experiments

Control what models can use, and document your preferences clearly.

- Robots controls for AI crawlers: OpenAI’s GPTBot documentation explains robots.txt directives; block or allow based on your policy. Google‑Extended offers an opt‑out signal for certain generative AI uses. Use these to shape model training access, while recognizing that robots directives are voluntary.

- Optional llms.txt: An experimental, community proposal to point models at prioritized URLs. Implement only if you can maintain it alongside sitemaps and keep scope tight.
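Before deploying a robots policy, it helps to confirm it does what you intend. The sketch below uses Python's standard `urllib.robotparser` to test a sample policy. The policy itself is only an example (the `/drafts/` path and `example.com` URLs are hypothetical), and the parser reflects how a compliant crawler would read the file, not whether a given crawler actually honors it.

```python
from urllib.robotparser import RobotFileParser

# Example policy: keep GPTBot out of drafts, opt out of Google-Extended
# entirely, and leave everything open to other crawlers.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /drafts/

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(user_agent, url, robots_txt=ROBOTS_TXT):
    """Return True if the given policy permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent, url in [
    ("GPTBot", "https://www.example.com/blog/post"),
    ("GPTBot", "https://www.example.com/drafts/post"),
    ("Google-Extended", "https://www.example.com/blog/post"),
    ("Googlebot", "https://www.example.com/blog/post"),
]:
    print(agent, url, is_allowed(agent, url))
```

A check like this can run in CI whenever robots.txt changes, so a policy edit does not accidentally block the crawlers you rely on for search inclusion.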

Governance isn’t about shutting doors; it’s about setting rules and reducing surprises.

You don’t need to guess what AI likes. Build answer‑first, entity‑sound pages, ship with clean schema and technical hygiene, and run the monitor → diagnose → iterate loop. Then repeat, because freshness and clarity are what AI systems reward. For deeper GEO insights and ongoing tactics, explore the latest posts on the Geneo blog.