Brandlight vs Profound: Ultimate Guide to Brand Trust in Generative Search 2026

Discover the 2026 ultimate guide to Brandlight vs Profound—compare trust signals, source quality, and generative search visibility. Run a neutral brand trust audit. Benchmark with evidence-driven strategies.

AI answers increasingly shape how customers perceive your brand. When a prospective buyer asks an engine for “best payment platforms for startups” or “which fintech offers X,” the result isn’t just a list—it’s a narrative backed by citations, sources, and implied trust. For CMOs, that narrative is now a core brand asset. The question is: how do you measure and improve trust inside generative and answer engines without chasing noise or vendor hype?

What “brand trust” means inside AI answers

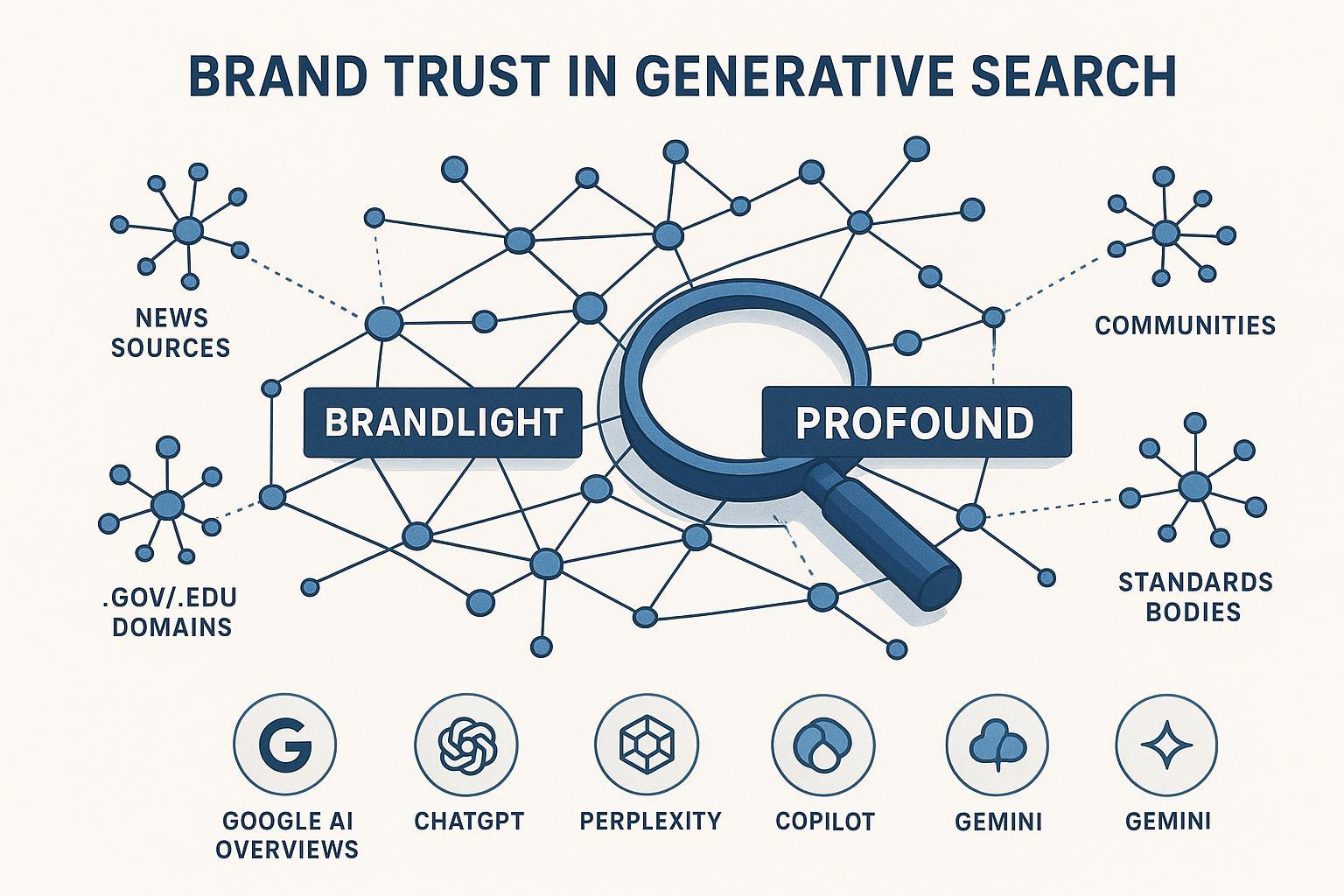

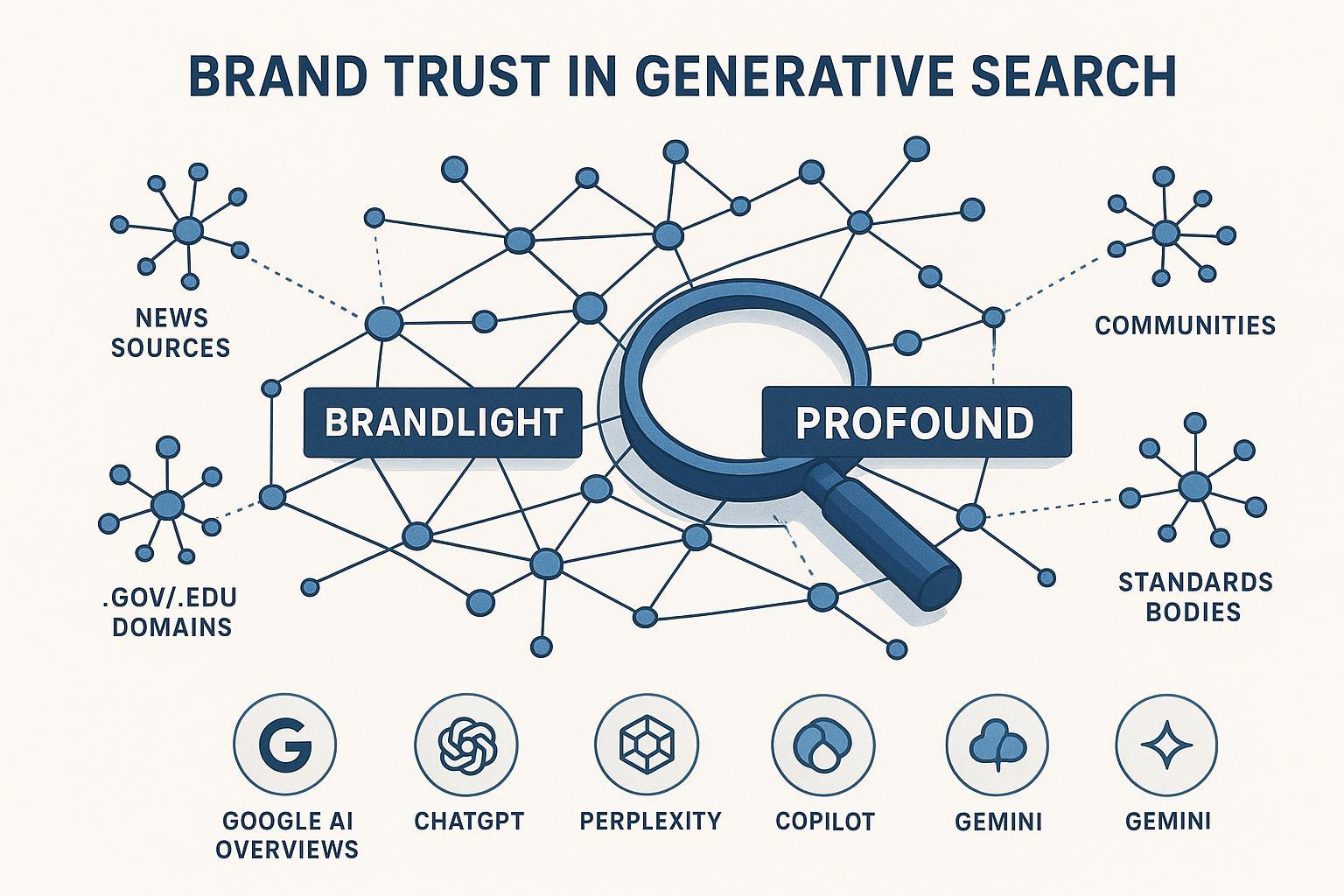

Brand trust in answer engines is the sum of visible signals that justify why an engine mentions or recommends you. Two lenses matter most for executives:

Coverage: how broadly your brand appears across queries, engines, and contexts.

Source quality: which sources the engine leans on to justify that appearance—authoritative media, standards bodies, .gov/.edu, reputable communities, professional associations, or thin/low-quality references.
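Source quality only becomes measurable once each cited URL is sorted into an authority class. The Python sketch below is one minimal way to do that, assuming you maintain your own codebook. The class labels (`gov_edu`, `major_media`, `association`, `community`, `other`) and the example domain lists are hypothetical placeholders, not a standard taxonomy.

```python
from urllib.parse import urlparse

# Hypothetical, hand-maintained domain lists; replace them with your own codebook.
MAJOR_MEDIA = {"reuters.com", "ft.com", "wsj.com"}
ASSOCIATIONS = {"ieee.org", "ama-assn.org"}
COMMUNITIES = {"reddit.com", "stackoverflow.com"}


def authority_class(url: str) -> str:
    """Map a cited URL to a coarse authority class (illustrative rules only)."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]

    def in_set(domains: set[str]) -> bool:
        # Match the listed domain itself or any subdomain of it.
        return any(host == d or host.endswith("." + d) for d in domains)

    if host.endswith(".gov") or host.endswith(".edu"):
        return "gov_edu"
    if in_set(MAJOR_MEDIA):
        return "major_media"
    if in_set(ASSOCIATIONS):
        return "association"
    if in_set(COMMUNITIES):
        return "community"
    return "other"
```

Suffix rules like `.gov` do not catch country-specific government domains such as `gov.uk`. Treat the lists as a starting point and extend them during codebook review.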

Industry frameworks emphasize evidence and provenance. Semrush’s recent guidance groups trust signals into three pillars (entity identity, evidence/citations, and technical/UX integrity) and prioritizes authoritative domains and transparent, expert-backed content; see the 2025–2026 materials such as the Semrush AI search trust signals overview. Standards bodies echo this emphasis. NIST’s AI Risk Management Framework highlights transparency and continual monitoring as foundations of trustworthy AI, which bears directly on how we evaluate cited sources and answer provenance; see NIST AI RMF 1.0.

The engines themselves reveal how trust shows up. Google’s AI Overviews synthesize from multiple sources and surface links directly in the answer, meaning authoritative citations are discoverable and actionable; see Google’s documentation on AI features in Search. Perplexity includes numbered, clickable citations in every answer, reinforcing a culture of traceability; see Perplexity’s help center note on how it works.

Here’s the deal: if the sources cited about you skew toward thin blogs or outdated references, engines are likely to keep reproducing that bias. If your ecosystem instead includes credible sources across varied authority classes, you tend to earn more stable visibility across more engines.

Measurement caveats and a pragmatic audit protocol

Generative engines are volatile. Personalization, regional differences, and model updates (sometimes silent) can shift visibility week to week. Treat measurement as a rolling audit, not a one-off.

A pragmatic, reproducible protocol:

Prompt set design

Build a balanced set across branded, category, and competitor intents. Create 3–5 phrasing variants per intent to control for wording sensitivity. Maintain a codebook and finalize with human-in-the-loop review.
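A prompt set is easier to audit when it is stored as structured rows rather than free text. This Python sketch expands per-intent phrasing templates into codebook rows and enforces the 3–5 variant rule. The brand, category and competitor names and the template wording are hypothetical examples; replace them during human review.

```python
from itertools import product

# Hypothetical phrasing templates per intent; {brand}, {category}, {competitor} are placeholders.
TEMPLATES = {
    "branded": ["What is {brand}?", "Is {brand} trustworthy?", "{brand} reviews"],
    "category": ["best {category}", "top {category} in 2026", "which {category} should I choose?"],
    "competitor": ["{brand} vs {competitor}", "{competitor} alternatives",
                   "is {brand} better than {competitor}?"],
}


def build_prompt_set(brand: str, category: str, competitors: list[str]) -> list[dict]:
    """Expand templates into codebook rows: intent, variant index, competitor, prompt."""
    rows = []
    for intent, variants in TEMPLATES.items():
        if not 3 <= len(variants) <= 5:
            raise ValueError(f"{intent}: expected 3-5 phrasing variants")
        # Competitor templates are expanded once per competitor; the others once.
        targets = competitors if intent == "competitor" else [None]
        for competitor, (i, tpl) in product(targets, enumerate(variants)):
            prompt = tpl.format(brand=brand, category=category, competitor=competitor)
            rows.append({"intent": intent, "variant": i,
                         "competitor": competitor, "prompt": prompt})
    return rows
```

Store `intent` and `variant` as explicit columns. That lets you check later whether a visibility change holds across phrasings or comes from a single wording.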

Cadence and sampling

Start with daily sampling for 2–3 weeks to establish variance baselines. Move to weekly or twice-weekly thereafter, re-baselining after visible model/version changes.
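This cadence translates into a simple run calendar. The minimal Python sketch below assumes a 21-day daily baseline followed by a fixed interval. The defaults are illustrative, and an `interval_days` of 3 or 4 approximates twice-weekly sampling.

```python
from datetime import date, timedelta


def sampling_dates(start: date, baseline_days: int = 21,
                   total_days: int = 63, interval_days: int = 7) -> list[date]:
    """Daily runs for the baseline window, then one run every `interval_days` days."""
    # Phase 1: daily sampling to establish variance baselines.
    dates = [start + timedelta(days=d) for d in range(baseline_days)]
    # Phase 2: lower-frequency sampling until the end of the window.
    for d in range(baseline_days, total_days, interval_days):
        dates.append(start + timedelta(days=d))
    return dates
```

To re-baseline after a visible model or version change, call the function again with the change date as `start`.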

Engine/version logging

Record engine, model/version (where known), region, device, logged-in state, language, and timestamp for each run. Use clean sessions to minimize personalization.
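Logs are only comparable when every run records the same fields. This minimal Python sketch defines one run record and assumes JSON as the storage format. The field names are hypothetical and mirror the attributes listed above. `model_version` stays empty when an engine does not expose it.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class RunRecord:
    """One observation of one prompt on one engine (field names are illustrative)."""
    engine: str
    prompt_id: str
    region: str
    device: str
    language: str
    logged_in: bool  # False for clean, logged-out sessions
    model_version: Optional[str] = None  # None when the engine does not expose it
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self) -> str:
        # sort_keys keeps serialized records easy to diff across runs.
        return json.dumps(asdict(self), sort_keys=True)
```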

Evidence capture

For engines with citations (Google AI Overviews, Perplexity), store answer text and full link lists with positions. For engines that don’t consistently expose citations, capture screenshots and any implicit references; hash files and retain timestamps.
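Hashing each file at capture time lets you show later that a screenshot or saved answer has not changed since. This minimal Python sketch uses SHA-256 from the standard library. The manifest fields (`engine`, `prompt_id`, `captured_at`) are hypothetical names.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def capture_manifest_entry(path: Path, engine: str, prompt_id: str) -> dict:
    """Hash a captured artifact (screenshot, HTML, answer text) for later integrity checks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        # Read in chunks so large screenshots need not fit in memory at once.
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return {
        "file": path.name,
        "sha256": digest.hexdigest(),
        "engine": engine,
        "prompt_id": prompt_id,
        "captured_at": datetime.now(timezone.utc).isoformat(),
    }
```

Re-hashing files before a report and comparing the result to the stored digest will detect accidental edits. On its own, it does not prove to an outside party when a capture was made.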

Metrics to compute

Per-engine visibility (mentions/impressions), citation coverage (unique domains and authority classes), citation rate (answers with your brand cited ÷ total relevant answers), source quality mix (share of .gov/.edu/major media/associations/communities), sentiment polarity and stance, and stability (day-to-day variance). In multi-brand contexts, add share of voice.
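Once each answer is coded, several of these metrics reduce to simple ratios. This minimal Python sketch assumes each coded answer is a dict with three hypothetical keys:

- `brand_cited` (bool)
- `cited_classes` (authority-class labels for its citations, e.g. .gov/.edu, major media, associations, communities)
- `brands_mentioned` (tracked brand names)

Run the functions on one engine's answers at a time to get per-engine figures. Sentiment and stance are left out because they need a separate classifier or human coding.

```python
from collections import Counter
from statistics import pvariance


def citation_rate(answers: list[dict]) -> float:
    """Answers with your brand cited divided by total relevant answers."""
    if not answers:
        return 0.0
    return sum(a["brand_cited"] for a in answers) / len(answers)


def source_quality_mix(answers: list[dict]) -> dict[str, float]:
    """Share of all citations that fall into each authority class."""
    counts = Counter(cls for a in answers for cls in a["cited_classes"])
    total = sum(counts.values())
    return {cls: n / total for cls, n in counts.items()} if total else {}


def share_of_voice(answers: list[dict], brand: str) -> float:
    """Your brand's mentions divided by all tracked-brand mentions."""
    mentions = Counter(b for a in answers for b in a["brands_mentioned"])
    total = sum(mentions.values())
    return mentions[brand] / total if total else 0.0


def stability(daily_values: list[float]) -> float:
    """Day-to-day (population) variance of a daily metric; lower means more stable."""
    return pvariance(daily_values) if len(daily_values) > 1 else 0.0
```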

Reproducibility log

Keep a changelog of prompt edits, observed engine/model updates, sampling cadence changes, and measurement exceptions. It’s the backbone of credible trend reporting.
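An append-only JSON Lines file is one simple way to keep this changelog ordered and easy to review alongside results. This minimal Python sketch shows the idea. The event types and field names are hypothetical.

```python
import json
from datetime import datetime, timezone
from pathlib import Path

# Hypothetical event types; extend them to match your governance process.
EVENT_TYPES = {"prompt_edit", "engine_update", "cadence_change", "exception"}


def log_change(log_path: Path, event_type: str, detail: str, author: str) -> dict:
    """Append one changelog entry as a single JSON line and return it."""
    if event_type not in EVENT_TYPES:
        raise ValueError(f"unknown event type: {event_type}")
    entry = {
        "at": datetime.now(timezone.utc).isoformat(),
        "type": event_type,
        "detail": detail,
        "author": author,
    }
    # Opening in append mode never rewrites earlier entries.
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry
```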

Caveats to communicate upstream: measurements reflect public, observable answers; private personalization may differ; geo variance can be material; rate limits and answer formats change. Make those limits explicit in exec reports.

Comparing Brandlight vs Profound on trust-signal coverage and source quality

Both vendors operate in the GEO/AEO monitoring space, but their public narratives and documentation differ. To keep this executive-friendly, we anchor on observable criteria tied to coverage and source quality, and we cite canonical references for vendor-stated claims.

Brandlight positions itself as an AI visibility and brand intelligence platform that tracks brand portrayal across multiple engines. Its blog emphasizes mapping citation ecosystems and argues that the diversity of unique referring domains correlates more strongly with AI citations than raw traffic does. See Brandlight’s post “Where AI Citations Actually Come From—and Why Traffic Isn’t the Answer” (2025). Multiple pages refer to monitoring “11 top AI engines,” with repeated mentions of ChatGPT, Gemini, Perplexity, Google AI, and Copilot; however, a single canonical list of all 11 was not located during this research window. Treat the engine count as vendor-stated.

Profound publicly documents support for Google AI Overviews and publishes enterprise-focused content on direct, front-end monitoring to “see what customers see,” rather than relying on APIs alone; see “Direct AI search engine monitoring vs API limitations” (2025). Feature pages and posts from 2024–2025 indicate coverage of ChatGPT, Gemini, Copilot, Perplexity, and Grok.

The comparison below is limited to trust-signal coverage and source quality factors, with vendor claims date-stamped and attributed.

| Dimension | Brandlight (sources) | Profound (sources) |
|---|---|---|
| Engines covered (claimed) | References to “11 top AI engines,” repeatedly naming ChatGPT, Gemini, Perplexity, Google AI, Copilot; canonical list not found (2025). [Brandlight site/blog] | Support for Google AI Overviews; posts and features indicate coverage of ChatGPT, Gemini, Copilot, Perplexity, Grok (2024–2025). [Profound blog/features] |
| Citation mapping | Emphasis on citation diversity over traffic; dashboards for source attribution (2025). [Brandlight blog] | Cross-engine source identification via direct capture; rationale for front-end monitoring (2024–2025). [Profound blog/features] |
| Measurement examples | Vendor narratives on audits, sentiment/SOV; CB Insights mention appears vendor-stated (2025). [Brandlight posts] | Case studies and third-party reviews citing enterprise outcomes and multi-vector data (2025). [Profound customers; reviews] |
| Compliance posture | No public SOC 2 claim located during the research window. | SOC 2 Type II announced (2025). [Profound blog] |
| Evidence capture in engines | Leans on citation-exposing engines; blog analysis centers on link ecosystems (2025). [Brandlight blog] | Direct interface capture across engines; supports link evidence where present (2024–2025). [Profound blog/features] |

Limitations to note: vendor blogs reflect their own narratives; independent technical audits are scarce. Where possible, triangulate with third-party reviews and public engine documentation.

Establishing a baseline and ongoing monitoring

Disclosure: Geneo is our product. In this guide, Geneo is referenced solely as a neutral data baseline and monitoring source.

Practically, a baseline helps you distinguish volatility from signal. Using Geneo’s public concepts for AI visibility and GEO/AEO, you can track metrics such as brand mentions, citation counts, share of voice, and sentiment across engines. For definitions and taxonomy, see Geneo docs: AI visibility and GEO/AEO, and for metric guidance (Brand Visibility Score, citation rate, SOV, sentiment) see Geneo’s best practices for AI traffic and visibility tracking (2025). Note that public pages describe concepts without exposing proprietary formulas; avoid inferring exact calculations without primary documentation.

A neutral micro‑workflow example (illustrative, tool‑agnostic in principle):

Monitor: Establish a weekly baseline across engines for your top 50 prompts; record mentions, citations, and source mix by authority class.

Diagnose: Identify weak or missing authoritative sources (e.g., no references from leading associations or .gov/.edu) and volatility hotspots.

Remediate: Update or publish expert‑bylined content; add or correct schema; pursue coverage in reputable trade publications; address inaccuracies on third‑party listings or community posts.

Re‑measure: Repeat your baseline sampling; track changes in citation coverage, source quality mix, and share of voice. For AI Overviews specifics, cross‑check with guidance like Geneo’s Google algorithm update overview (Oct 2025).
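The Diagnose and Re‑measure steps can be sketched in Python as two small comparisons. The sketch assumes each period has already been summarized into two dicts: a source mix (authority class to share) and a set of numeric metrics. Three choices in it are hypothetical, not a published rule:

- the target classes
- the metric names
- the two-standard-deviation threshold for separating signal from week-to-week noise

```python
# Hypothetical set of authority classes you expect engines to cite.
TARGET_CLASSES = {"gov_edu", "major_media", "association", "community"}


def missing_classes(source_mix: dict[str, float]) -> set[str]:
    """Diagnose: target authority classes with no share in a period's source mix."""
    return {c for c in TARGET_CLASSES if source_mix.get(c, 0.0) == 0.0}


def compare_periods(baseline: dict[str, float],
                    remeasure: dict[str, float]) -> dict[str, float]:
    """Re-measure: change in each metric between the baseline and a later period."""
    keys = sorted(baseline.keys() | remeasure.keys())
    return {k: round(remeasure.get(k, 0.0) - baseline.get(k, 0.0), 4) for k in keys}


def is_signal(delta: float, baseline_std: float, k: float = 2.0) -> bool:
    """Heuristic: treat a change as signal only if it exceeds k baseline standard deviations."""
    return abs(delta) > k * baseline_std
```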

From measurement to action: what CMOs should prioritize next

Governance and transparency: Make the audit protocol part of your measurement governance. Document prompts, cadence, and exceptions. Require source-type reporting (media, associations, .gov/.edu, communities) in executive dashboards.

Cross-engine parity: Set a quarterly parity goal, so that your brand appears with comparable source quality across ChatGPT, Google AI Overviews/AI Mode, Perplexity, Copilot, and Gemini. Disparities reveal where to focus PR, partnerships, or content improvements.

Evidence-led content: Commission expert-bylined updates where engines have cited outdated material. Expand original data assets; engines tend to favor sources that are clear and show their provenance.

Community hygiene: In categories where communities influence citations (as Brandlight’s blog observes), map the forums that matter and correct inaccuracies; aim for factual, helpful contributions with transparent identities.

Executive reporting and ROI: Tie trust-signal improvements to revenue proxies (qualified pipeline changes in categories where AI answers matter). For mapping visibility to buyer journey and outcomes, see Geneo’s guide to AI search buyer journey mapping, and for agency or multi‑brand reporting practices, see how to pitch GEO services to non‑technical decision-makers.
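The cross-engine parity goal can be checked mechanically once you have, for each engine, the share of citations that come from authoritative classes. This minimal Python sketch assumes that per-engine share is already computed. The engine names, the 0.10 tolerance and the comparison against the best-performing engine are illustrative choices.

```python
def parity_gaps(authoritative_share: dict[str, float],
                tolerance: float = 0.10) -> dict[str, float]:
    """Return engines whose authoritative-source share trails the best engine by more than `tolerance`."""
    if not authoritative_share:
        return {}
    best = max(authoritative_share.values())
    gaps = {}
    for engine, share in authoritative_share.items():
        gap = best - share
        if gap > tolerance:
            gaps[engine] = round(gap, 4)
    return gaps
```

Flagged engines are where PR, partnership, or content work is likely to pay off first.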

FAQs for decision-makers

How reliable are vendor engine coverage claims?

Treat them as directional unless accompanied by dated, public lists and change logs. For Brandlight, “11 engines” appears across site/blog copy without a canonical roster page (2025). Profound publishes specific support content and rationale for direct capture.

Does compliance (e.g., SOC 2) matter for trust measurement?

It matters for enterprise procurement and data handling. Profound has announced SOC 2 Type II (2025). No public SOC 2 claim was found for Brandlight during this research window. Compliance does not directly change how engines cite sources, but it does affect internal controls.

Should we rely on APIs or direct capture?

For trust-signal evaluation, direct, front-end observation often provides the most faithful record of “what customers see,” especially when answers include citations and UI context. APIs can complement but may lag or differ.

How often should we audit?

Establish weekly baselines, with daily sampling during pilot periods or around visible engine updates. Re-baseline after major model/version changes.

What’s the fastest path to improving source quality?

Identify the missing authority classes in your ecosystem (e.g., no recent trade press, no association references, weak .gov/.edu signals). Prioritize updates with expert bios, original data, and correct schema. Encourage factual coverage in reputable venues.

If you’re ready to establish a defensible baseline and run a 4–6 week pilot, you can use a neutral monitoring layer to track trust-signal coverage and source quality improvements. Geneo can serve that baseline with auditable visibility metrics and evidence capture across engines—used strictly for monitoring.