Tips for Transitioning from Brandlight to Geneo for Tone‑of‑Voice Training in AI Visibility Teams

Step-by-step guide for AI visibility teams migrating from Brandlight to Geneo, preserving tone-of-voice governance, enabling white-label reporting, and improving collaboration.

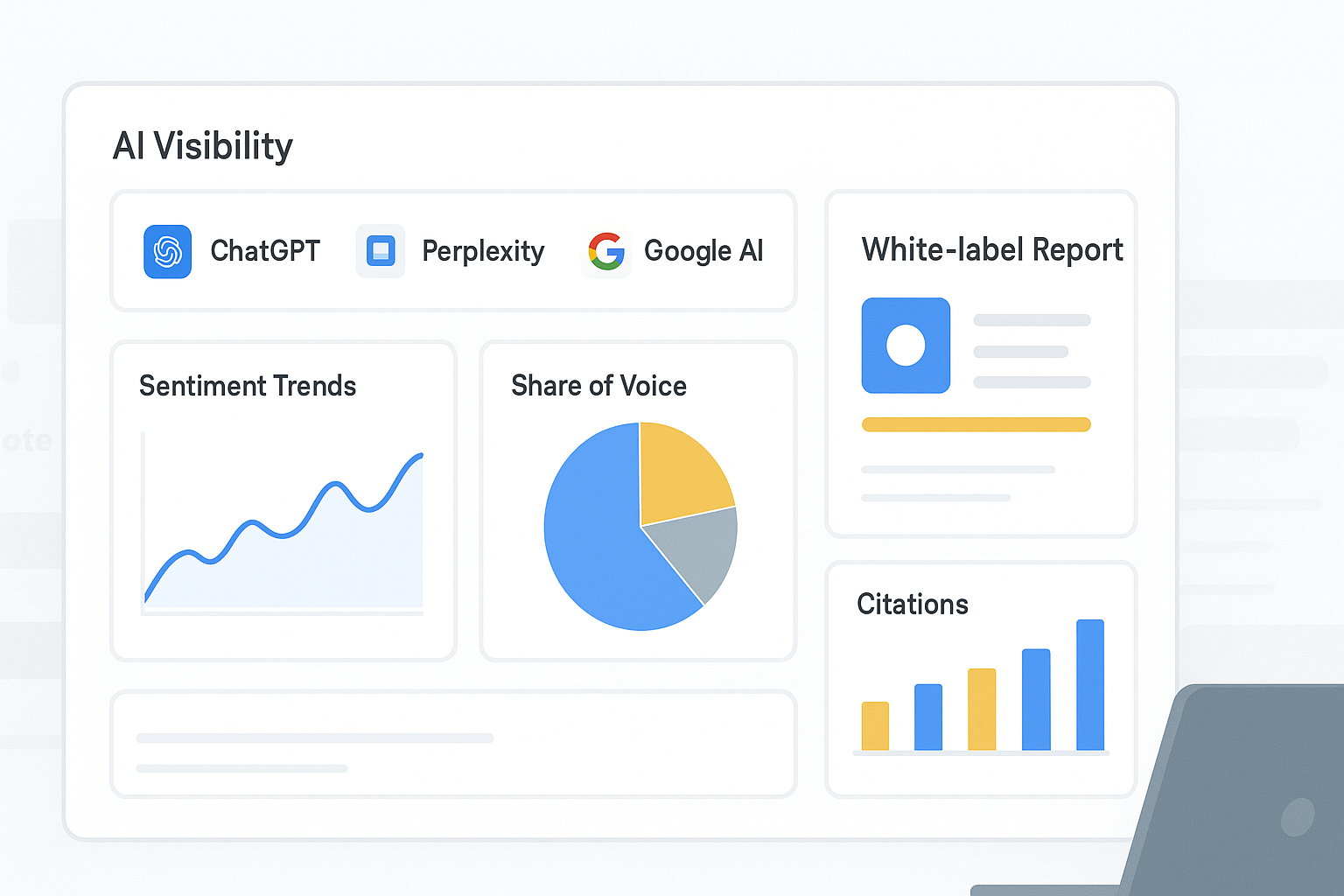

AI visibility is about being seen, cited, and accurately described by answer engines such as ChatGPT, Perplexity, and Google’s AI Overview, not just ranking on classic SERPs. If your team has used Brandlight to guide tone‑of‑voice (TOV) and governance and now needs to operationalize that intent through multi‑engine monitoring and agency‑ready reporting, this tutorial gives you a pragmatic path. Because Brandlight’s export formats aren’t publicly documented in detail, we show how to preserve governance intent through baselines, tagging, competitor libraries, and annotations, with a focus on white‑label reporting and cross‑team collaboration.

For foundational context on AI visibility and GEO vs. traditional SEO, see the explanations in the AI visibility definition and the GEO vs traditional SEO comparison.

Key takeaways

Start with an asset inventory and a master CSV/JSON schema so your tone governance intent survives the move from Brandlight to Geneo.

Establish cross‑engine baselines first; then layer tags, competitor sets, and segmentation so reports reflect how tone manifests in answers.

Use white‑label dashboards on a custom domain to align stakeholders while you keep control of the narrative and preserve audit continuity.

Track tone through visibility cues and sentiment trends; add annotations that tie observed phrasing back to your lexicon and voice pillars.

Keep an external audit log and conservative RBAC practices where platform parity is unknown.

Step 1: Inventory governance and training artifacts

Before moving anything, catalog what your team relies on in Brandlight. Because detailed export specs aren’t publicly documented, capture both structured assets and narrative guidance.

TOV guides: lexicons (preferred/disallowed phrases), voice pillars, sample copy blocks, brand briefs.

Prompt/Q&A logs: historic prompts, outputs, reviewer notes on correctness and tone.

Sentiment/tone scores: polarity classifications and tone markers (e.g., authoritative, friendly, formal).

Tags/taxonomy: product lines, segments, regions, campaigns, and competitor sets.

Reporting templates: sections, KPIs, widgets used by stakeholders.

Baselines/alerts: thresholds for visibility and sentiment, escalation rules.

RBAC: roles, client access profiles, handoff procedures.

Outcome: A spreadsheet or repository folder that records the above, plus locations and owners. Verification: make sure each asset has provenance and a last‑updated date.
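If the inventory lives in a spreadsheet or repository, the verification step can be automated. The sketch below is a minimal Python example, assuming each asset is recorded with a name, category, location, owner, provenance, and last‑updated date; the `Asset` record and `missing_metadata` helper are hypothetical and do not reflect any Brandlight export format.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


# Hypothetical inventory record: field names are illustrative and do not
# correspond to any documented Brandlight export format.
@dataclass
class Asset:
    name: str
    category: str                        # e.g. "TOV guide", "Prompt/Q&A log", "RBAC"
    location: str                        # folder, drive path, or URL where it lives
    owner: str
    provenance: Optional[str] = None     # e.g. "Brandlight PDF export", "manual notes"
    last_updated: Optional[date] = None


def missing_metadata(assets: List[Asset]) -> List[str]:
    """List every asset that lacks provenance or a last-updated date."""
    problems = []
    for asset in assets:
        if not asset.provenance:
            problems.append(f"{asset.name}: missing provenance")
        if asset.last_updated is None:
            problems.append(f"{asset.name}: missing last_updated")
    return problems


if __name__ == "__main__":
    inventory = [
        Asset("Lexicon v3", "TOV guide", "drive://governance/lexicon.xlsx", "Brand lead",
              provenance="Brandlight PDF export", last_updated=date(2025, 12, 1)),
        Asset("Q4 prompt log", "Prompt/Q&A log", "drive://logs/q4.csv", "Analyst A"),
    ]
    for issue in missing_metadata(inventory):
        print(issue)
```

Running it prints the two gaps for the Q4 prompt log, which is the list you would chase down before Step 2.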

Step 2: Normalize and map fields for portability

Create a master CSV/JSON schema that can travel. This keeps tone governance intent intact when you start tracking answers across engines.

Required columns: Query, Engine, Timestamp_UTC, Brand_Mention (Y/N), Sentiment (pos/neu/neg), Citation_URL, Competitor_Mentions, Tags, Annotation, Evidence_Ref.

Tone metadata: Voice_Pillar, Allowed_Phrase_Flag, Disallowed_Phrase_Flag.

Identity/versioning: Record_ID, Version, Editor, Change_Reason.

Two notes:

If Brandlight only outputs PDFs, reconstruct tables by careful manual transcription or OCR, and mark reconstructed fields clearly.

Keep raw exports as read‑only; create a normalized layer for operational use.

| Field (normalized) | Purpose | Example |
|---|---|---|
| Query | Buyer/visibility question tracked across engines | “Best project management SaaS for agencies” |
| Engine | The answer engine queried | ChatGPT / Perplexity / Google AI Overview |
| Timestamp_UTC | Collection time for auditing | 2026-01-06T12:34:00Z |
| Brand_Mention | Whether your brand appears | Y |
| Sentiment | Polarity of the mention | pos |
| Citation_URL | Source cited in the answer | |
| Competitor_Mentions | Named rivals observed | RivalOne; RivalTwo |
| Tags | Segment/region/campaign | SMB; North America; Q1-Launch |
| Annotation | Tone observations tied to governance | “Uses ‘trusted partner’: acceptable per lexicon.” |
| Evidence_Ref | Screenshot or stored answer copy | s3://answers/2026/01/06/qa-123.png |
| Voice_Pillar | Pillar linked to phrasing | “Authoritative clarity” |
| Allowed_Phrase_Flag | True if phrase is whitelisted | true |
| Disallowed_Phrase_Flag | True if phrase violates rules | false |
| Record_ID | Stable ID for this row | QA-123 |
| Version | Edit iteration | v2 |
| Editor | Who last touched it | Analyst A |
| Change_Reason | Why it changed | “Updated citation link” |
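To make the schema checkable, here is a minimal standard‑library Python sketch. The column list mirrors the normalized fields; the accepted engine and sentiment values, the rule that Sentiment may be blank when Brand_Mention is N, and the helper names `validate_row` and `roundtrip_csv` are our assumptions, not a Geneo or Brandlight import specification.

```python
import csv
import io
from datetime import datetime
from typing import Dict, List

# Column names follow the normalized schema.
COLUMNS = [
    "Record_ID", "Version", "Editor", "Change_Reason",
    "Query", "Engine", "Timestamp_UTC", "Brand_Mention", "Sentiment",
    "Citation_URL", "Competitor_Mentions", "Tags", "Annotation", "Evidence_Ref",
    "Voice_Pillar", "Allowed_Phrase_Flag", "Disallowed_Phrase_Flag",
]
ENGINES = {"ChatGPT", "Perplexity", "Google AI Overview"}
SENTIMENTS = {"pos", "neu", "neg"}


def validate_row(row: Dict[str, str]) -> List[str]:
    """Return human-readable problems for one normalized record (empty list = valid)."""
    missing = [c for c in COLUMNS if c not in row]
    if missing:
        return ["missing columns: " + ", ".join(missing)]
    errors = []
    if row["Engine"] not in ENGINES:
        errors.append(f"unknown engine: {row['Engine']!r}")
    if row["Brand_Mention"] not in {"Y", "N"}:
        errors.append("Brand_Mention must be Y or N")
    # Sentiment describes the brand mention, so it may be blank when there is none.
    blank_ok = row["Brand_Mention"] == "N" and row["Sentiment"] == ""
    if row["Sentiment"] not in SENTIMENTS and not blank_ok:
        errors.append("Sentiment must be pos, neu, or neg")
    for flag in ("Allowed_Phrase_Flag", "Disallowed_Phrase_Flag"):
        if row[flag].lower() not in {"true", "false"}:
            errors.append(f"{flag} must be true or false")
    try:
        # Python before 3.11 rejects a trailing "Z" in fromisoformat, so map it to +00:00.
        ts = datetime.fromisoformat(row["Timestamp_UTC"].replace("Z", "+00:00"))
        offset = ts.utcoffset()
        if offset is None or offset.total_seconds() != 0:
            errors.append("Timestamp_UTC must be in UTC")
    except ValueError:
        errors.append("Timestamp_UTC is not ISO 8601")
    return errors


def roundtrip_csv(records: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Write records to CSV text and read them back, as a portable export would."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(records)
    buf.seek(0)
    return list(csv.DictReader(buf))


SAMPLE = {
    "Record_ID": "QA-123", "Version": "v2", "Editor": "Analyst A",
    "Change_Reason": "Updated citation link",
    "Query": "Best project management SaaS for agencies",
    "Engine": "Perplexity", "Timestamp_UTC": "2026-01-06T12:34:00Z",
    "Brand_Mention": "Y", "Sentiment": "pos", "Citation_URL": "",
    "Competitor_Mentions": "RivalOne; RivalTwo",
    "Tags": "SMB; North America; Q1-Launch",
    "Annotation": "Uses 'trusted partner': acceptable per lexicon.",
    "Evidence_Ref": "s3://answers/2026/01/06/qa-123.png",
    "Voice_Pillar": "Authoritative clarity",
    "Allowed_Phrase_Flag": "true", "Disallowed_Phrase_Flag": "false",
}
```

Round‑tripping through CSV before validating is deliberate: it catches values that only break once everything becomes a string, which is what a portable export actually contains.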

Outcome: A portable dataset you can use to seed tracking and reporting. Verification: sample 10–20 records across engines; confirm consistency and auditability.
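For the spot check, a sample drawn evenly across engines avoids over‑reviewing whichever engine has the most rows. A minimal Python sketch, assuming records are dicts keyed by the normalized column names; `sample_per_engine` and `audit_gaps` are hypothetical helpers, and treating "no Evidence_Ref or no Editor" as an auditability gap is our assumption.

```python
import random
from collections import defaultdict
from typing import Dict, List


def sample_per_engine(records: List[Dict[str, str]], total: int = 20,
                      seed: int = 7) -> List[Dict[str, str]]:
    """Draw an equal number of records per engine, at most `total` in all."""
    by_engine: Dict[str, List[Dict[str, str]]] = defaultdict(list)
    for record in records:
        by_engine[record["Engine"]].append(record)
    if not by_engine:
        return []
    rng = random.Random(seed)  # fixed seed so reviewers can reproduce the same sample
    per_engine = max(1, total // len(by_engine))
    sample = []
    for engine in sorted(by_engine):
        pool = by_engine[engine]
        sample.extend(rng.sample(pool, min(per_engine, len(pool))))
    return sample[:total]


def audit_gaps(sample: List[Dict[str, str]]) -> List[str]:
    """Record_IDs in the sample that have no evidence reference or no editor."""
    return [r["Record_ID"] for r in sample if not r.get("Evidence_Ref") or not r.get("Editor")]
```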

Step 3: Establish cross‑engine baselines

Pick 50–100 high‑value queries spanning buyer questions, comparisons, and category leadership prompts. Monitor these across ChatGPT, Perplexity, and Google AI Overview to capture your starting point.

Include product‑specific queries, competitor contrasts, and category definers.

Track mention rate, sentiment, citations, and where the answer positions your brand.

Use the baseline to identify gaps and quick wins.
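A baseline is easier to compare later if the same arithmetic produces it every time. The Python sketch below computes mention rate, sentiment mix, and an owned‑citation rate per engine from normalized rows. It assumes `owned_domain` is your own site (a hypothetical parameter) and defines shares over brand mentions only; answer position is left out because the normalized schema has no column for it.

```python
from collections import defaultdict
from typing import Dict, List
from urllib.parse import urlparse


def baseline_by_engine(records: List[Dict[str, str]],
                       owned_domain: str) -> Dict[str, Dict[str, float]]:
    """Summarize mention rate, sentiment mix, and owned-citation rate per engine."""
    counts = defaultdict(lambda: {"answers": 0, "mentions": 0, "pos": 0, "neg": 0, "owned": 0})
    for r in records:
        c = counts[r["Engine"]]
        c["answers"] += 1
        if r["Brand_Mention"] == "Y":
            c["mentions"] += 1
            if r["Sentiment"] in ("pos", "neg"):
                c[r["Sentiment"]] += 1
        # Count the answer as citing you if the cited host is your domain or a subdomain of it.
        host = urlparse(r.get("Citation_URL", "")).hostname or ""
        if host == owned_domain or host.endswith("." + owned_domain):
            c["owned"] += 1
    summary = {}
    for engine, c in counts.items():
        m = c["mentions"]
        summary[engine] = {
            "mention_rate": c["mentions"] / c["answers"],
            "positive_share": c["pos"] / m if m else 0.0,
            "negative_share": c["neg"] / m if m else 0.0,
            "owned_citation_rate": c["owned"] / c["answers"],
        }
    return summary
```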

For KPI design aligned to AI search, see the AI search KPI frameworks. These emphasize visibility and sentiment over traditional rankings.

Outcome: A baseline report you can compare against as you migrate. Verification: daily collection integrity plus manual spot checks for 5–10 queries.

Step 4: Recreate tags and competitor libraries

Mirror your Brandlight segmentation so stakeholders can slice reports the way they’re used to.

Tags: carry over segments, regions, campaigns, and product lines.

Competitors: build libraries per segment to benchmark share of voice and sentiment.

Filters: verify that filtering produces expected subsets and comparisons.
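One way to check both the tag filter and competitor coverage is to compute share of voice per segment and list any rival that shows up in answers but is missing from that segment's library. A minimal Python sketch: it defines share of voice as each brand's fraction of all brand mentions in the segment (one common definition, assumed here), and `OUR_BRAND` is a hypothetical placeholder.

```python
from collections import Counter
from typing import Dict, List, Tuple

OUR_BRAND = "OurBrand"  # hypothetical placeholder for your own brand name


def split_field(value: str) -> List[str]:
    """Split a semicolon-separated cell such as 'SMB; North America'."""
    return [v.strip() for v in value.split(";") if v.strip()]


def share_of_voice(records: List[Dict[str, str]], segment: str,
                   library: Dict[str, List[str]]) -> Tuple[Dict[str, float], List[str]]:
    """Share of voice within one segment, plus rivals seen in answers but missing from the library."""
    counts: Counter = Counter()
    unlisted = set()
    for r in records:
        if segment not in split_field(r["Tags"]):
            continue  # acts as the tag filter: only rows tagged with this segment count
        if r["Brand_Mention"] == "Y":
            counts[OUR_BRAND] += 1
        for rival in split_field(r["Competitor_Mentions"]):
            if rival in library.get(segment, []):
                counts[rival] += 1
            else:
                unlisted.add(rival)
    total = sum(counts.values())
    shares = {name: n / total for name, n in counts.items()} if total else {}
    return shares, sorted(unlisted)
```

A non‑empty second return value is the signal to extend that segment's competitor library before trusting its share‑of‑voice numbers.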

Outcome: Filterable dashboards and reports that reflect prior governance structure. Verification: run tag‑specific views and confirm competitor coverage.

Step 5: Configure white‑label reporting and access

Agencies and multi‑brand teams need stakeholder‑ready views that match house style and access policies.

Branding: configure logo, color accents, and (if supported) a custom domain/CNAME for client‑facing dashboards. See the agency white‑label overview for capabilities.

Report sections: map Brandlight report areas (mentions/citations, sentiment trend, share of voice, engine breakdown) to equivalent widgets.

Access: adopt least‑privilege roles for internal collaborators and clients; document who sees which brands and segments.
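Documenting who sees which brands and segments is simpler when the policy is written down as data. The Python sketch below models a deny‑by‑default check and flattens grants into rows for an access register. The role names and actions are hypothetical and are not Geneo’s role model; map them to whatever roles your platform actually offers.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple

# Hypothetical role model; map these to the roles your platform actually provides.
ROLE_ACTIONS = {
    "client_viewer": {"view"},
    "analyst": {"view", "annotate"},
    "admin": {"view", "annotate", "edit_queries", "manage_users"},
}


@dataclass
class Grant:
    user: str
    role: str
    brands: Set[str] = field(default_factory=set)
    segments: Set[str] = field(default_factory=set)


def can(grant: Grant, action: str, brand: str, segment: str) -> bool:
    """Deny by default: the role must allow the action AND the brand/segment must be granted."""
    return (
        action in ROLE_ACTIONS.get(grant.role, set())
        and brand in grant.brands
        and segment in grant.segments
    )


def access_register(grants: List[Grant]) -> List[Tuple[str, str, str, str]]:
    """Flatten grants into (user, role, brand, segment) rows for the access documentation."""
    rows = []
    for g in grants:
        for brand in sorted(g.brands):
            for segment in sorted(g.segments):
                rows.append((g.user, g.role, brand, segment))
    return rows
```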

Outcome: Consistent, client‑ready reporting that minimizes retraining. Verification: preview on the custom domain; walkthrough with stakeholders to confirm KPIs and filters behave as expected.

Step 6: Operationalize tone via visibility cues and annotations

Most AI visibility platforms don’t “train” tone directly. Treat tone as something you infer from phrasing, sentiment, and positioning in answers.

Use sentiment and phrasing observations to detect tone drift.

Annotate answers with references to your lexicon and voice pillars.

Schedule weekly reviews of annotated queries (e.g., 15–20 representative prompts).
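A first pass at annotation can be mechanical: check each stored answer against the lexicon and fill the tone columns, then let reviewers refine the notes. A minimal Python sketch, assuming a hypothetical `LEXICON` in which allowed phrases map to the voice pillar they support; whole‑phrase, case‑insensitive matching is our choice so that a disallowed “cheap” does not fire on “cheapest”.

```python
import re
from typing import Dict

# Hypothetical lexicon: allowed phrases map to the voice pillar they support.
LEXICON = {
    "allowed": {"trusted partner": "Authoritative clarity", "proven results": "Authoritative clarity"},
    "disallowed": ["cheap", "game-changer"],
}


def phrase_present(text: str, phrase: str) -> bool:
    """Case-insensitive whole-phrase match, so 'cheap' does not fire on 'cheapest'."""
    return re.search(r"\b" + re.escape(phrase) + r"\b", text, flags=re.IGNORECASE) is not None


def flag_answer(text: str, lexicon: Dict = LEXICON) -> Dict[str, object]:
    """Fill the tone metadata columns for one answer from lexicon matches."""
    allowed = [p for p in lexicon["allowed"] if phrase_present(text, p)]
    disallowed = [p for p in lexicon["disallowed"] if phrase_present(text, p)]
    notes = []
    if allowed:
        notes.append("uses " + ", ".join(f"'{p}'" for p in allowed) + " (allowed per lexicon)")
    if disallowed:
        notes.append("uses " + ", ".join(f"'{p}'" for p in disallowed) + " (disallowed per lexicon)")
    return {
        "Allowed_Phrase_Flag": bool(allowed),
        "Disallowed_Phrase_Flag": bool(disallowed),
        "Voice_Pillar": "; ".join(sorted({lexicon["allowed"][p] for p in allowed})),
        "Annotation": "; ".join(notes) if notes else "no lexicon terms found",
    }
```

String matching will miss paraphrases, so treat these flags as a triage aid for the weekly review rather than a verdict on tone.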

Outcome: A practical bridge from governance intent to observed AI behavior. Verification: week‑over‑week annotation quality checks and drift detection.

Practical example (with disclosure) — Brandlight to Geneo migration in action

Disclosure: Geneo is our product.

Let’s say your team notices a softening of “authoritative clarity” in answers on message‑critical queries. In Geneo, you can monitor sentiment trends across ChatGPT, Perplexity, and Google AI Overview, compare competitor phrasing in visibility dashboards, and add annotations when answers use or avoid preferred lexicon terms. Over a few weeks, track whether the share of voice and phrasing align more closely with your tone pillars after adjusting your cited sources and on‑site copy. Keep notes and evidence references in your normalized dataset to sustain auditability. For platform context, consult the docs overview.

Step 7: Risk controls—audit log continuity and escalation

When platform parity is uncertain, preserve governance with external safeguards.

Maintain an external audit log capturing baseline definitions, alert thresholds, query changes, and stakeholder sign‑offs.

Define escalation paths if sentiment or visibility worsens: PR/legal review, content updates, outreach to authoritative citation sources, or query‑set adjustments.

Record incidents and outcomes for future retrospectives.
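Escalation is easier to audit when the thresholds are explicit and every check is logged. The Python sketch below compares current per‑engine KPIs with the baseline and serializes an event for an append‑only JSON Lines log. The threshold values, the choice of JSON Lines as the external log format, and all helper names are assumptions for illustration.

```python
import json
from datetime import datetime, timezone
from typing import Dict, List, Optional, Tuple

# Hypothetical thresholds; set real values in your documented baseline definitions.
THRESHOLDS = {"mention_rate_drop": 0.10, "negative_share_rise": 0.05}


def check_escalation(baseline: Dict[str, Dict[str, float]],
                     current: Dict[str, Dict[str, float]],
                     thresholds: Dict[str, float] = THRESHOLDS) -> List[Tuple[str, str, Optional[float]]]:
    """Return (engine, rule, size_of_change) for every breached rule."""
    breaches = []
    for engine, base in baseline.items():
        cur = current.get(engine)
        if cur is None:
            # Missing data is itself worth escalating: it breaks audit continuity.
            breaches.append((engine, "missing_data", None))
            continue
        drop = base["mention_rate"] - cur["mention_rate"]
        if drop > thresholds["mention_rate_drop"]:
            breaches.append((engine, "mention_rate_drop", round(drop, 4)))
        rise = cur["negative_share"] - base["negative_share"]
        if rise > thresholds["negative_share_rise"]:
            breaches.append((engine, "negative_share_rise", round(rise, 4)))
    return breaches


def audit_line(actor: str, action: str, details: dict) -> str:
    """Serialize one audit event as a JSON Lines record with a UTC timestamp."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "details": details,
    }
    return json.dumps(entry, sort_keys=True)


def append_audit(path: str, line: str) -> None:
    """Append-only write; earlier lines of the external log are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(line + "\n")
```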

Outcome: Documented continuity and a playbook for adverse shifts. Verification: quarterly audit reviews plus a tabletop exercise to test escalation.

Step 8: QA gates and rollout

Run a structured post‑migration QA before you call the migration “done.”

Compare current KPIs against baselines for key queries.

Validate tag accuracy, competitor inclusion, and widget mapping.

Confirm data freshness and that stakeholders accept the white‑label report structure.

Outcome: Approved production rollout with minimal surprises. Verification: a sign‑off checklist with named approvers.
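The QA gate can be expressed as two checks: which key queries moved more than a tolerance from baseline, and whether every gate has both passed and a named approver. A minimal Python sketch; the 0.05 tolerance, the per‑query KPI as a single number, and the `Gate` record are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


def kpi_deviations(baseline: Dict[str, float], current: Dict[str, float],
                   tolerance: float = 0.05) -> Dict[str, Optional[float]]:
    """Queries whose current KPI moved more than `tolerance` from baseline (None = no data)."""
    flagged: Dict[str, Optional[float]] = {}
    for query, base in baseline.items():
        if query not in current:
            flagged[query] = None
        elif abs(current[query] - base) > tolerance:
            flagged[query] = current[query] - base
    return flagged


@dataclass
class Gate:
    name: str
    passed: bool
    approver: str = ""  # a named approver is required before the gate counts


def ready_for_rollout(gates: List[Gate]) -> Tuple[bool, List[str]]:
    """Rollout is approved only when every gate passed and has a named approver."""
    blockers = [g.name for g in gates if not g.passed or not g.approver.strip()]
    return (not blockers, blockers)
```

Flagged queries are not automatically failures; after a migration they are the ones to investigate before anyone signs off.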

Step 9: Collaboration cadence and permissions

Make performance reviews a habit to keep teams aligned and governance alive.

Weekly: dashboard review for tone cues, visibility shifts, and competitor moves; assign actions.

Permissions: least‑privilege for editors; read‑only for most client viewers; document exceptions.

Notes: store meeting decisions and changes in your audit log.

Outcome: Sustainable collaboration that surfaces issues early. Verification: meeting notes and action tracking.

Step 10: Continuous improvement and query refinement

AI answer behavior evolves. Your monitoring and governance should, too.

Monthly: expand/refine query sets, adjust tags, and update lexicon references.

Track uplift and setbacks; correlate tone changes with visibility and sentiment movements.

Consider buyer‑journey stages when selecting queries.

Outcome: Iterative gains in AI visibility and tone alignment. Verification: documented changes and KPI movement.

Troubleshooting and conservative guidance

Missing Brandlight fields: reconstruct from PDFs or report visuals; mark inferred fields and keep originals intact.

Tag misalignment: create a new taxonomy tier; keep legacy labels as secondary metadata if needed.

RBAC parity unknowns: avoid over‑claiming permissions; apply least‑privilege and maintain external audit logs.

Tone drift without a native module: rely on sentiment trends and phrasing analysis; supplement with manual annotations and governance reviews.

Cross‑engine discrepancies: expect differences between ChatGPT, Perplexity, and Google AI Overview; adapt queries and content tactics to each engine’s behavior.
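For tag misalignment, one approach is to map legacy labels to a new tiered taxonomy while carrying the originals along as secondary metadata. A minimal Python sketch; the `LEGACY_TO_NEW` mapping and the `Legacy_Tags` column are hypothetical, and unmapped labels are returned for a human decision rather than guessed.

```python
from typing import Dict, List, Union

# Hypothetical mapping from legacy Brandlight-era labels to a new, tiered taxonomy.
LEGACY_TO_NEW = {
    "SMB": "Segment/SMB",
    "North America": "Region/NA",
    "Q1-Launch": "Campaign/2026-Q1-Launch",
}


def remap_tags(tags_field: str,
               mapping: Dict[str, str] = LEGACY_TO_NEW) -> Dict[str, Union[str, List[str]]]:
    """Translate a semicolon-separated Tags value, keeping the legacy labels alongside."""
    legacy = [t.strip() for t in tags_field.split(";") if t.strip()]
    mapped = [mapping[t] for t in legacy if t in mapping]
    unmapped = [t for t in legacy if t not in mapping]
    return {
        "Tags": "; ".join(mapped),
        "Legacy_Tags": "; ".join(legacy),  # kept as secondary metadata
        "Unmapped": unmapped,              # needs a human decision before import
    }
```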

Next steps

Stand up your normalized dataset and initial baseline this week; run a pilot with one segment and a small competitor set.

If you want an agency‑ready, branded dashboard and daily cross‑engine monitoring while you iterate, explore Geneo.

For deeper context on frameworks and capabilities, see the AI search KPI frameworks and platform docs mentioned earlier.