2025 Best Practices for Answer Engine Optimization in AI-Focused Companies

Discover proven strategies for Answer Engine Optimization (AEO) in AI-focused companies. Learn cross-engine monitoring & optimization for ChatGPT, Perplexity, and Google AI Overview.

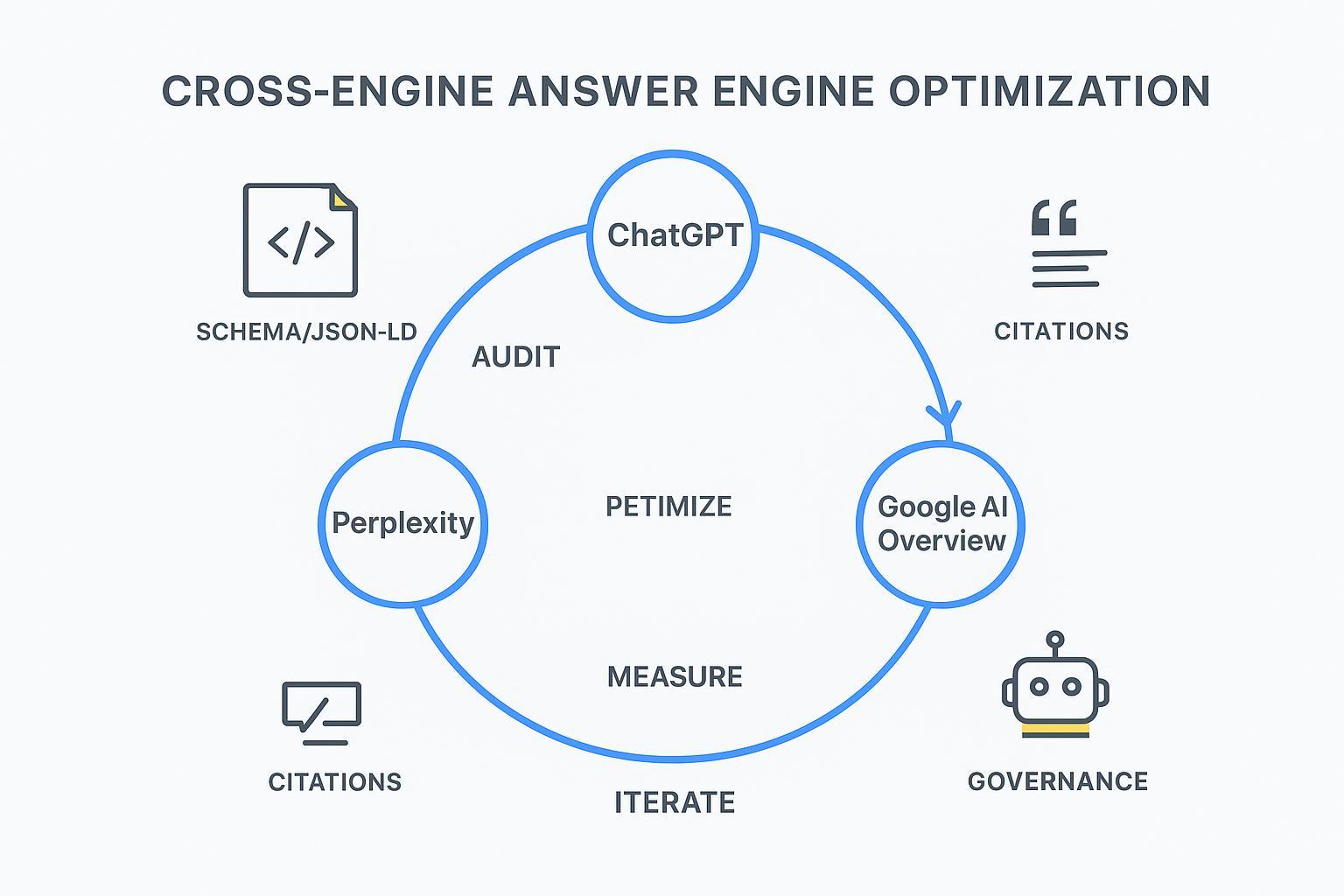

If AI engines are where questions get answered, then AEO is how your brand becomes the source that’s cited. For enterprise market leaders, the mandate is clear: design content that answer engines can trust, monitor how it’s used across ChatGPT, Perplexity, and Google AI Overview, and run a closed loop of optimization that lifts visibility and conversions.

What AEO Means in 2025—and Why It Matters

Answer Engine Optimization is the discipline of shaping content so generative systems can extract, verify, and cite it as the “best answer.” It’s not just about ranking pages; it’s about becoming the reference those engines choose when they synthesize answers.

Three realities shape AEO today:

Engines synthesize, then cite. Your content must be extractable, consistent, and verifiable.

Citations depend on visible, corroborated signals (clear answers, schema, provenance, authority)—not secret “AI ranking factors.”

Cross‑engine differences are real; you need one playbook with engine‑specific tactics.

Google’s guidance emphasizes building content that can succeed in AI surfaces and controlling crawler access through the Robots Exclusion Protocol (REP), which is implemented in robots.txt; see AI features and publisher controls (Google, 2025) and Succeeding in AI Search (2025). For structured data, align with canonical Schema.org types like FAQPage, QAPage, and HowTo.

How Answer Engines Differ—and What That Means for Optimization

ChatGPT (Search/Browse): Displays sources and supports precise source requests; see OpenAI’s ChatGPT Search announcement and help docs. Experiments should test prompt phrasing that requests citations and specific domains.

Perplexity: Query-led discovery with prominent citations; favor concise, fact-rich answer blocks and corroborated references.

Google AI Overview: Draws from indexed content and signals surfaced in Search. Publisher controls and structured data matter; align with Google’s AI features guidance.

Think of each engine like a different editor. They’ll all ask: “Is the answer clear? Is the source reliable? Can I verify this with more than one signal?” Your job is to make saying “yes” effortless.

The Closed‑Loop AEO Playbook

AEO is an ongoing operating process, not a one‑off project. Run this loop continuously:

1) Audit: Know what engines see

Map entities (brand, products, key topics) and consolidate naming. Confirm pages present consistent facts, dates, and definitions.

Extract answer blocks: identify canonical Q&A, how‑to steps, definitions, and summaries. Place them high on the page.

Validate structured data in JSON‑LD; mirror visible content and test with Rich Results tools. Use FAQPage, QAPage, and HowTo when appropriate.

Check provenance and authority: cite primary sources, maintain author bios, and cross‑link corroborating pages.

Review robots.txt/REP and bot access to ensure intended crawlability; Google provides robots.txt specifications and a creation guide.
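As a rough illustration of the structured-data step above, the following Python sketch builds FAQPage markup from question and answer pairs. The function name `build_faq_jsonld` and the sample Q&A text are assumptions made for this example; the property names (`mainEntity`, `acceptedAnswer`, `text`) come from the Schema.org FAQPage vocabulary. The output should still be checked with a rich results testing tool before publishing.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Return a Schema.org FAQPage JSON-LD string for (question, answer) pairs."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in qa_pairs
            ],
        },
        indent=2,
    )


# Hypothetical Q&A pair; the text should mirror what is visible on the page.
faq_jsonld = build_faq_jsonld([
    (
        "What is Answer Engine Optimization?",
        "Answer Engine Optimization is the discipline of shaping content so "
        "generative systems can extract, verify, and cite it.",
    ),
])
print(faq_jsonld)
```

Generating the markup from the same Q&A source that renders the page is one way to keep the two from drifting apart.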

2) Experiment: Shape answers for extraction

Create modular answer units (100–180 words) that directly answer a single high‑intent question.

Test phrasing variations that improve extractability (“First, … Next, … Finally, …” for HowTo; direct Q/A for FAQ).

In ChatGPT, run controlled prompts requesting sources and domain emphasis; log which pages get cited under different wording.

In Perplexity, compare concise vs. expanded answers and track citation frequency.

In Google, validate that your structured answers align with visible page content and see whether AI Overviews begin pulling them.
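The 100–180 word target for modular answer units is easy to check automatically before a test goes live. The sketch below is a minimal example; the function name `check_answer_unit` and the whitespace-based word count are assumptions, and a real editorial tool might count words differently.

```python
def check_answer_unit(text, min_words=100, max_words=180):
    """Count whitespace-separated words and classify the answer unit's length."""
    words = len(text.split())
    if words < min_words:
        status = "too short"
    elif words > max_words:
        status = "too long"
    else:
        status = "ok"
    return words, status


# Hypothetical draft: a placeholder block of 120 words.
draft = "word " * 120
print(check_answer_unit(draft))
```

Running this over every candidate answer block gives a quick list of units to trim or expand before comparing variants across engines.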

3) Optimize: Strengthen signals

Align schema types with the visible answer blocks and keep JSON‑LD in sync.

Reinforce entity clarity: consistent names, glossaries, and canonical pages.

Improve page speed, UX, and clarity; clean, unambiguous content is easier for engines to extract and quote accurately.

Add corroboration: link to authoritative sources and internal references that reinforce key facts.

Publish update notes when facts change, so engines and readers can verify that the information is current and consistent.
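One way to keep JSON‑LD in sync with visible answer blocks is to check that each marked-up answer actually appears in the page text. The sketch below assumes FAQPage-style markup and that the visible text has already been extracted from the HTML; the names `normalize` and `find_out_of_sync_answers` are hypothetical.

```python
import json


def normalize(text):
    """Collapse whitespace and lowercase so minor formatting differences are ignored."""
    return " ".join(text.split()).lower()


def find_out_of_sync_answers(jsonld, visible_text):
    """Return the questions whose marked-up answer is empty or missing from the page."""
    data = json.loads(jsonld)
    page = normalize(visible_text)
    stale = []
    for item in data.get("mainEntity", []):
        answer = item.get("acceptedAnswer", {}).get("text", "")
        if not answer or normalize(answer) not in page:
            stale.append(item.get("name", ""))
    return stale


# Hypothetical markup and page text for illustration only.
markup = json.dumps({
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [
        {"@type": "Question", "name": "What is AEO?",
         "acceptedAnswer": {"@type": "Answer", "text": "AEO shapes content for citation."}},
        {"@type": "Question", "name": "How often should we audit?",
         "acceptedAnswer": {"@type": "Answer", "text": "Audit key pages every quarter."}},
    ],
})
page_text = "What is AEO?  AEO shapes content\nfor citation. Audit key pages monthly."
print(find_out_of_sync_answers(markup, page_text))
```

Exact substring matching is deliberately strict: any answer that was edited on the page but not in the markup shows up as out of sync.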

4) Measure: Track visibility and impact

Log impressions of your brand in AI answers, citation frequency, and share‑of‑voice across engines.

Record answer quality markers: whether your exact phrasing is used, whether key facts are intact.

Tie answers to outcomes: associate sessions and conversions with AI‑sourced traffic where possible; track assisted conversions.

Maintain an experiment journal: prompt wording, page changes, dates, and resulting citation shifts.
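Share‑of‑voice can be computed directly from a citation log. The sketch below assumes one possible definition, the brand's citations divided by all citations observed for that engine, and a hypothetical record layout (`engine`, `query`, `cited_domains`); teams may reasonably define the metric differently.

```python
from collections import defaultdict


def share_of_voice(records, brand_domain):
    """Return, per engine, the fraction of logged citations that point to brand_domain."""
    brand = defaultdict(int)
    total = defaultdict(int)
    for record in records:
        for domain in record["cited_domains"]:
            total[record["engine"]] += 1
            if domain == brand_domain:
                brand[record["engine"]] += 1
    # Engines with no logged citations never enter `total`, so no division by zero.
    return {engine: brand[engine] / total[engine] for engine in total}


# Hypothetical log entries; example.com stands in for the brand's domain.
records = [
    {"engine": "ChatGPT", "query": "what is aeo",
     "cited_domains": ["example.com", "other.org"]},
    {"engine": "ChatGPT", "query": "aeo checklist",
     "cited_domains": ["other.org", "third.net"]},
    {"engine": "Perplexity", "query": "what is aeo",
     "cited_domains": ["example.com"]},
]
print(share_of_voice(records, "example.com"))
```

With so few observations the numbers are only illustrative; a real log would need enough queries per engine and topic cluster for the ratio to be meaningful.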

5) Iterate: Operational cadence

Weekly: run small prompt/content tests and update the experiment log.

Monthly: re‑audit key pages, refresh schema, and roll up performance.

Quarterly: retire outdated facts, launch new canonical answers, and re‑align stakeholder goals.

Engine‑Specific Cues (Quick Reference)

| Engine | Citation Behavior | Optimization Cue |
|---|---|---|
| ChatGPT | Shows sources; supports specific source requests | Test prompts that request citations and target domains; keep concise, verifiable blocks |
| Perplexity | Prominent citations per query | Prioritize fact density; provide corroborated references |
| Google AI Overview | Draws from indexed content; relies on publisher controls | Align structured data with visible content; maintain crawlability and provenance |

Governance and Risk You Can’t Ignore

Good AEO runs on guardrails. Pair advisory signals with enforcement:

Robots and REP controls: define crawler behavior with robots.txt; see Google’s robots.txt refresher series (2025).

Content provenance and bot control: monitor non‑human access and signal preferences. Cloudflare outlines policy expectations in Content Signals and surveys bot ecosystems in From Googlebot to GPTBot (2025).

Compliance: maintain author disclosures, update logs, privacy policies, and data handling standards. Don’t rely on a single control; layer your defenses.
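To confirm that robots.txt rules express the crawler access you intend, a quick check with Python's standard `urllib.robotparser` module can help. The sketch below parses an example policy from a string; the rules, the `example.com` URLs, and the choice of `GPTBot` and `Googlebot` as user agents are illustrative assumptions. Individual crawlers may interpret edge cases differently from this parser, so treat the result as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: keep GPTBot out of /private/, allow everything else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Check the same URLs against each user agent of interest.
checks = [
    ("GPTBot", "https://example.com/private/roadmap"),
    ("GPTBot", "https://example.com/docs/faq"),
    ("Googlebot", "https://example.com/private/roadmap"),
]
for agent, url in checks:
    allowed = parser.can_fetch(agent, url)
    print(f"{agent:10} {url} -> {'allowed' if allowed else 'blocked'}")
```

Because robots.txt is advisory, a check like this covers only the signaling layer; enforcement still depends on the bot controls described above.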

A Practical Cross‑Engine Workflow Example (Geneo)

Disclosure: Geneo is our product.

When you need one place to monitor citations, phrases, and share‑of‑voice across engines, a cross‑engine workflow helps you keep the loop tight. For example: run an audit to capture where your brand appears (or doesn’t) in ChatGPT, Perplexity, and Google AI Overview; test answer phrasing on priority pages; update structured data and entity glossaries; then measure citation shifts weekly. A platform like Geneo centralizes this loop, tracking brand mentions, link visibility, and reference counts so your team can see which experiments increase AI visibility and where you still need reinforcement.

Measurement and Attribution Framework

To win resources, you need to connect AEO to outcomes:

Map answer exposure to sessions: label traffic influenced by AI answers (where possible) and measure assisted conversions.

Track share‑of‑voice by engine and topic cluster; compare before/after optimization windows.

Maintain a change log with precise dates and URLs; correlate citation changes with content updates.

Build stakeholder‑friendly rollups that summarize visibility shifts, key experiments, and ROI.
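The before/after comparison can be sketched as a simple split of logged observations around a change date. The code below assumes each observation is a `(date, cited)` pair recording whether the brand was cited for a tracked query on that day; the function names and sample data are hypothetical. A difference between windows is a correlation only, since engines also change on their own schedule.

```python
from datetime import date


def citation_rate(observations):
    """Fraction of observations in which the brand was cited, or None if empty."""
    if not observations:
        return None
    return sum(1 for _, cited in observations if cited) / len(observations)


def compare_windows(observations, change_date):
    """Split observations at change_date and return (rate_before, rate_after)."""
    before = [obs for obs in observations if obs[0] < change_date]
    after = [obs for obs in observations if obs[0] >= change_date]
    return citation_rate(before), citation_rate(after)


# Hypothetical log: one check per week around a content update on 2025-03-01.
observations = [
    (date(2025, 2, 1), False),
    (date(2025, 2, 8), False),
    (date(2025, 2, 15), True),
    (date(2025, 2, 22), False),
    (date(2025, 3, 1), True),
    (date(2025, 3, 8), True),
    (date(2025, 3, 15), False),
    (date(2025, 3, 22), True),
]
print(compare_windows(observations, date(2025, 3, 1)))
```

Pairing each comparison with the change-log entry that triggered it keeps the rollup traceable to a specific URL and date.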

For deeper guidance on shaping content for citations, see Geneo’s step‑by‑step guide Optimize Content for AI Citations, and for cross‑engine monitoring differences, see ChatGPT vs Perplexity vs Gemini vs Bing: Monitoring Comparison.

Next Steps: Run a Free AI Visibility Audit

Ready to see where your brand stands in AI answers? Start by running a free AI visibility audit to identify your citation gaps, engine‑specific opportunities, and the quickest paths to improvement. Use Geneo’s audit to capture baseline visibility and prioritize experiments on the pages most likely to be cited in ChatGPT, Perplexity, and Google AI Overview. Then, operationalize the closed loop—Audit → Experiment → Optimize → Measure → Iterate—and track the lift.