Best Answer Engine Optimization Tools: 2025 Strategies & Best Practices

Discover 2025’s best answer engine optimization strategies for enterprise SEO—cross-platform AEO tools, unified observability, actionable benchmarks.

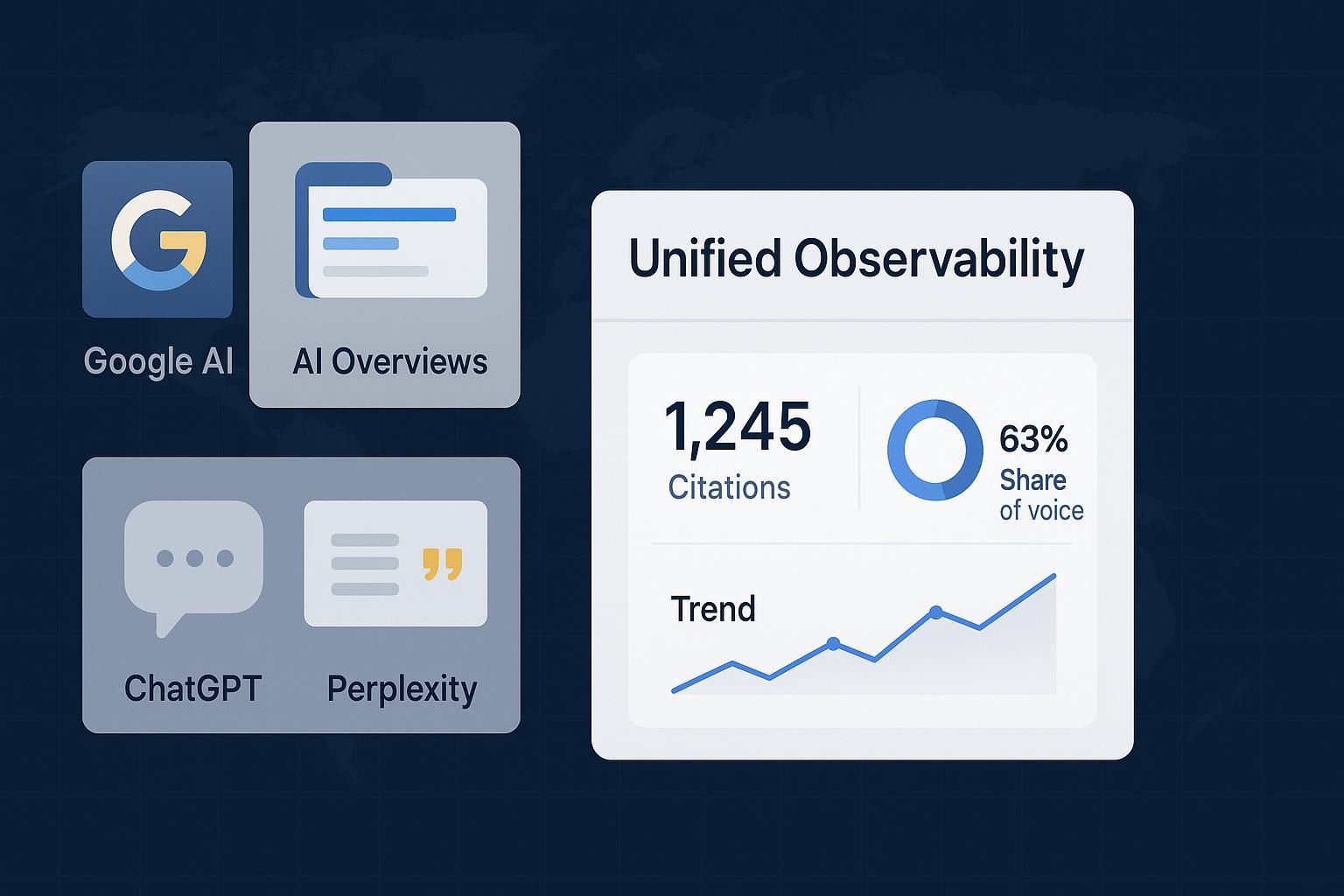

Your brand’s answers are increasingly decided by systems you don’t control. Do you know when, where, and why you’re cited across Google AI Overviews, ChatGPT Search, and Perplexity?

AEO isn’t just “SEO with AI” — it’s visibility in a zero‑click world

Answer Engine Optimization (AEO) is about earning inclusion and citations in AI‑generated answers, not just ranking blue links. The stakes are growing: in the United States, 58.5% of Google searches end without a click, and only about 360 of every 1,000 searches send a click to the open web (the comparable EU figure is 374), according to the 2024 cohort in the SparkToro zero‑click study. Meanwhile, the prevalence of Google’s AI Overviews has been volatile, rising from roughly 6–7% of queries in early 2025 to peaks above 24% in mid‑2025 before settling closer to 16% by November, per the Semrush AI Overviews study hub (2025).

Two things still carry over from classic SEO: crawlability and helpful, people‑first content. Google’s own guidance says there are no special AIO‑only technical requirements beyond standard Search fundamentals—ensure your pages are easy to fetch, parse, and verify, and you’ll be eligible for inclusion in AI answers, as documented in Google’s AI features guidance (2025).

Why unified observability across ChatGPT, Perplexity, and Google AI Overviews is non‑negotiable

Each engine synthesizes answers differently and cites sources with distinct patterns. Treating them as one channel hides risk and opportunity.

Google AI Overviews/AI Mode: synthesizes with custom Gemini models and includes links in‑line so users can verify the information. Google has continued adjusting link placement and quality controls, documented in Google’s updates and AIO quality notes (2024–2025).

ChatGPT Search: retrieves results through partners and displays in‑line attributions and links, evolving from the SearchGPT prototype to a consumer product. See OpenAI’s ChatGPT Search announcement (2024).

Perplexity: operates citation‑first—every answer surfaces clickable sources—with added analytics for publishers, per the Perplexity Publishers’ Program (2024).

What you need is a single, consistent view of which prompts cite you, where your links appear inside answers, whether you’re named or merely implied, and how often competitors are cited instead. Without cross‑engine visibility, you’ll optimize in the dark.

The enterprise AEO workflow: pilot → benchmark → scale

AEO wins come from repeatable processes and verifiable signals. Here’s a pragmatic five‑step workflow we use with enterprise teams.

Audit inclusion foundations: Ensure Googlebot access, fast rendering of primary content, clear H1–Hn hierarchy, and “answer capsules” (a concise, plain‑language summary near the top). Apply appropriate schema (FAQPage, HowTo, Organization, Product, Author). Make authorship, credentials, and update history explicit. Outbound‑link to canonical sources. Google reiterates these fundamentals in its AI search guidance (2025).

Design queries and run controlled tests: Group questions by intent (informational, commercial, transactional, navigational). For each platform, run weekly prompt sets and record whether you’re cited, where links appear, and whether your brand is mentioned by name. Expand with fan‑out questions (comparisons, pros/cons, alternatives) to mirror how answer engines break one question into related sub‑queries.

Establish unified observability and dashboards: Teams need a cross‑engine dashboard that logs AI share of voice (SoV), citation frequency by intent, in‑text link presence, and sentiment across ChatGPT, Perplexity, and Google AI answers, then ties those to Search Console and analytics. One reference implementation that explains how such systems ingest prompts, normalize sources, and track visibility is outlined in our post on GEO/AEO‑style monitoring architecture (disclosure: Geneo is our product). Keep the setup neutral: even if you build in‑house, mirror this structure so executives can see deltas week over week.

Optimize with sprint cycles: Prioritize topics with clear under‑citation versus competitors. Improve machine readability (headings, fragments, schema coverage), add original data or examples, and tighten answer capsules. Track whether inclusion improves and whether link position inside the AI panel moves closer to the top or appears in‑text.

Executive roll‑ups and scale: Summarize baselines, deltas, and business impact. Seer Interactive’s longitudinal cohorts show CTR often falls when AI Overviews appear, but sites cited inside AIOs fare far better than those excluded; see the September 2025 update on AIO impact on CTR. Use that pattern to justify investment: connect “cited vs. not cited” status to trial/demo conversions on pages receiving AIO traffic.
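The query-design step above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the engine labels, the fan-out templates, and the `PromptTest` and `build_prompt_set` names are all assumptions made for the example, and the seed questions are placeholders.

```python
from dataclasses import dataclass
from itertools import product

INTENTS = ("informational", "commercial", "transactional", "navigational")
# Hypothetical engine labels; use whatever identifiers your logging already has.
ENGINES = ("google_aio", "chatgpt_search", "perplexity")
# Hypothetical fan-out templates covering comparisons, pros/cons, and alternatives.
FAN_OUT = ("{q}", "{q} pros and cons", "{q} alternatives", "{q} vs competitors")


@dataclass(frozen=True)
class PromptTest:
    engine: str
    intent: str
    prompt: str


def build_prompt_set(seeds: dict[str, list[str]]) -> list[PromptTest]:
    """Expand intent-labeled seed questions into one test per engine and fan-out variant."""
    tests = []
    for intent, questions in seeds.items():
        if intent not in INTENTS:
            raise ValueError(f"unknown intent: {intent}")
        for question, template, engine in product(questions, FAN_OUT, ENGINES):
            tests.append(PromptTest(engine, intent, template.format(q=question)))
    return tests


seeds = {
    "informational": ["what is answer engine optimization"],
    "commercial": ["best AEO tools"],
}
prompt_set = build_prompt_set(seeds)
print(len(prompt_set))  # 2 seeds x 4 variants x 3 engines = 24
```

Keeping the prompt set as plain, immutable records makes it easy to rerun the identical set each week, so changes in citations reflect the engines rather than the test design.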

Platform‑specific notes that actually move the needle

Google AI Overviews and AI Mode

Inclusion mechanics: pages eligible via normal Search fundamentals; no AIO‑specific tags required, per Google’s AI features page (2025).

What helps: concise lead answers, strong internal linking, and schema for FAQs/How‑tos where applicable. Google has increased in‑text links and improved quality guardrails; see Google’s AIO update notes (2024).

Measurement nuance: AIO prevalence fluctuates by topic and time; align cadence with category volatility, as tracked in the Semrush prevalence series (2025).
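For the FAQ schema mentioned above, a small generator can keep markup consistent across pages. The sketch below builds schema.org `FAQPage` JSON-LD from question/answer pairs; the `faq_jsonld` helper name and the sample pair are assumptions for illustration. As the guidance cited above notes, no AIO-specific tags are required, so treat this as standard structured data rather than a guarantee of inclusion.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    ("What is AEO?",
     "Answer Engine Optimization is about earning inclusion and citations "
     "in AI-generated answers."),
])
# Embed the result in the page head or body as a JSON-LD script element.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```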

ChatGPT Search

Retrieval + attribution: OpenAI highlights clear in‑line attributions and partner search retrieval in its 2024 announcement.

What helps: pages with clear provenance (authorship, citations), minimal rendering barriers, and concrete facts or original data increase the odds of being chosen as supporting sources.

Measurement nuance: Test both short and long‑form prompts; log how often your brand is named versus your URL being linked.
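The named-versus-linked distinction is easy to log once each answer is stored as text plus a list of cited URLs. The following sketch assumes exactly that storage shape; `classify_visibility`, the brand "Acme", and the `acme.example` domain are hypothetical, and the word-boundary match assumes the brand name starts and ends with a letter or digit.

```python
import re
from urllib.parse import urlparse


def _host_matches(url: str, domain: str) -> bool:
    """True when the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def classify_visibility(answer_text: str, cited_urls: list[str],
                        brand: str, domain: str) -> dict[str, bool]:
    """Separate 'brand is named in the answer' from 'our URL is linked as a source'."""
    pattern = rf"\b{re.escape(brand)}\b"
    named = re.search(pattern, answer_text, flags=re.IGNORECASE) is not None
    linked = any(_host_matches(url, domain) for url in cited_urls)
    return {"named": named, "linked": linked}


result = classify_visibility(
    "Tools such as Acme and others track citations.",
    ["https://www.acme.example/guide", "https://other.example/post"],
    brand="Acme",
    domain="acme.example",
)
print(result)  # {'named': True, 'linked': True}
```

Logging the two flags separately shows cases where an engine talks about you without sending anyone to your site, and the reverse.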

Perplexity

Citation‑first behavior: every answer shows clickable sources; the Publishers’ Program exposes citation analytics for participating sites, per Perplexity’s 2024 post.

What helps: succinct, verifiable answers with outbound citations and anchorable sections. Perplexity favors clear fragments and source clarity.

Measurement nuance: Track how frequently you’re cited in the first three sources and whether your snippets are reused verbatim.
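The first-three-sources metric can be computed directly from logged source lists. This is a sketch under the assumption that each answer's citations are stored as an ordered list of URLs; `top_n_citation_rate` and the example domains are illustrative names, not part of any Perplexity API.

```python
from urllib.parse import urlparse


def top_n_citation_rate(runs: list[list[str]], domain: str, n: int = 3) -> float:
    """Share of answers whose first n cited sources include the given domain."""
    if not runs:
        return 0.0
    hits = 0
    for sources in runs:
        hosts = [(urlparse(url).hostname or "").lower() for url in sources[:n]]
        if any(host == domain or host.endswith("." + domain) for host in hosts):
            hits += 1
    return hits / len(runs)


# Each inner list is the ordered source list from one answer (placeholder URLs).
runs = [
    ["https://a.example/1", "https://acme.example/guide", "https://b.example/2"],
    ["https://a.example/1", "https://b.example/2", "https://c.example/3",
     "https://acme.example/guide"],  # cited, but only in fourth position
    ["https://www.acme.example/faq"],
    [],
]
print(top_n_citation_rate(runs, "acme.example"))  # 0.5
```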

KPIs and executive reporting that earn buy‑in

Tie AEO to outcomes with a small, durable set of metrics.

AI Share of Voice (SoV): percentage of prompts/responses that cite or name your brand, by platform and intent.

Citation Frequency and Link Position: counts by query class and in‑text versus footnote placement in answers.

Panel Prominence: whether links appear in the body of the answer versus below it, and whether your brand name is visible.

CTR/Conversion Delta: compare sessions and conversion rates from AIO‑present SERPs when cited vs. not cited; Seer’s cohorts illustrate the directional impact in 2024–2025.

Content Quality Signals: machine readability score (headings, fragments, schema coverage), E‑E‑A‑T markers (authorship, credentials), and freshness.

| KPI | What to Track | Why It Matters |
|---|---|---|
| AI SoV | % of prompts citing/naming you, by engine/intent | Proves competitive visibility in zero‑click spaces |
| Citation Frequency & Position | Count and in‑text vs. footnote placement | In‑text links tend to drive higher engagement |
| Panel Prominence | Appearance inside answer text vs. below | Signals engine trust and user attention |
| CTR/Conversion Delta | Lift when cited vs. not cited on AIO SERPs | Links visibility to pipeline outcomes |
| Content Quality Signals | Schema, authorship, freshness cadence | Improves parseability and eligibility |
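As a worked example of the AI SoV definition above (percentage of prompts/responses that cite or name your brand, by platform and intent), the sketch below aggregates logged observations. The `Observation` record and its field names are assumptions about how test results might be stored, and the sample rows are made up.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    engine: str
    intent: str
    cited: bool  # our URL appears as a source
    named: bool  # our brand name appears in the answer text


def share_of_voice(observations: list[Observation]) -> dict[tuple[str, str], float]:
    """Percent of responses that cite or name the brand, keyed by (engine, intent)."""
    totals = defaultdict(int)
    visible = defaultdict(int)
    for obs in observations:
        key = (obs.engine, obs.intent)
        totals[key] += 1
        if obs.cited or obs.named:
            visible[key] += 1
    return {key: 100.0 * visible[key] / totals[key] for key in totals}


observations = [
    Observation("perplexity", "informational", cited=True, named=False),
    Observation("perplexity", "informational", cited=False, named=False),
    Observation("google_aio", "commercial", cited=False, named=True),
    Observation("google_aio", "commercial", cited=True, named=True),
]
sov = share_of_voice(observations)
print(sov[("perplexity", "informational")])  # 50.0
print(sov[("google_aio", "commercial")])     # 100.0
```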

For background on AEO analytics framing, see Avinash Kaushik’s perspective on answer‑engine analytics and KPI design. Align with leadership reporting cycles: weekly detection, monthly exec summaries.

Build vs. buy: choosing AEO tooling without the hype

Most enterprise teams blend internal scripts with vendor platforms. Evaluate claims with a simple rubric:

Coverage breadth: Does the tool reliably test and log citations across ChatGPT, Perplexity, and Google AIO? How is prompt sampling governed?

Normalization: Can it unify mentions, citations, and link‑position data into one schema for apples‑to‑apples comparison?

Attribution plumbing: Does it join AI visibility with Search Console, analytics, and CRM so you can quantify demo/trial impact?

Governance and access: Role‑based controls, audit trails, and white‑label reporting for agencies.
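To make the normalization and attribution criteria concrete, here is one possible shape for a unified record and a join against page-level metrics. Everything here is hypothetical: the `VisibilityRecord` fields, the `page_key` rule (host plus path, ignoring `www.` and query strings), and the metrics dictionary standing in for a Search Console or analytics export.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse


@dataclass(frozen=True)
class VisibilityRecord:
    engine: str
    intent: str
    prompt: str
    brand_named: bool
    cited_url: Optional[str]      # None when the answer did not link to us
    link_position: Optional[str]  # "in_text", "footnote", or None


def page_key(url: str) -> str:
    """Reduce a URL to host + path so records from every engine join on one key."""
    parts = urlparse(url)
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return host + (parts.path.rstrip("/") or "/")


def join_with_page_metrics(records, metrics_by_page):
    """Pair each cited record with the metrics row for the page it links to."""
    joined = []
    for record in records:
        if record.cited_url is None:
            continue
        metrics = metrics_by_page.get(page_key(record.cited_url))
        if metrics is not None:
            joined.append((record, metrics))
    return joined


records = [
    VisibilityRecord("perplexity", "commercial", "best AEO tools", True,
                     "https://www.acme.example/guide/?utm_source=x", "in_text"),
    VisibilityRecord("chatgpt_search", "commercial", "best AEO tools", True, None, None),
]
# Made-up numbers standing in for an analytics export keyed by page.
metrics_by_page = {"acme.example/guide": {"clicks": 120, "conversions": 6}}
joined = join_with_page_metrics(records, metrics_by_page)
print(len(joined), joined[0][1]["conversions"])  # 1 6
```

When evaluating a vendor, asking how its equivalent of `page_key` handles redirects, parameters, and subdomains is a quick way to test the apples-to-apples claim.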

For an example of what a cross‑engine monitoring approach looks like in practice (architecture, ingestion, normalization), review our overview of AI search visibility tracking workflows. And if you’re comparing vendors, this market context can help you ask the right questions about observability scope and reporting depth.

Governance, risk, and change management

Accuracy and provenance are non‑negotiable. Google’s 2024 quality updates aimed to reduce low‑quality material and tune when AIOs appear, which raises the bar on E‑E‑A‑T—clear authorship, citations to primary sources, and transparent updates; see Google’s March 2024 update note. Accessibility matters, too: semantic HTML, alt text, and readable summaries assist both users and parsers. Finally, set policies for privacy (PII handling), hallucination escalation, and brand voice consistency across answer engines.

A 30‑day pilot plan tied to demo/trial goals

Use this to prove value quickly and secure broader investment.

Days 1–5: Audit 25–50 priority pages; fix crawlability/rendering issues; add answer capsules and appropriate schema; document authorship and update cadence.

Days 6–10: Define 200–300 prompts across engines (intent‑labeled). Run baseline tests and log citations, link positions, and brand mentions.

Days 11–20: Stand up a unified dashboard; connect Search Console and analytics; set weekly detection jobs; flag under‑citation gaps vs. two key competitors.

Days 21–25: Execute optimization sprints on 8–12 topics with the largest gaps; add original data, tighten summaries, and improve fragment anchoring.

Days 26–30: Re‑test; quantify deltas in AI SoV and citation position; report CTR/conversion deltas when cited vs. not cited; present an executive decision memo for scale‑up.
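The Days 26–30 readout comes down to two comparisons: SoV at re-test versus baseline, and performance for cited versus not-cited pages. The sketch below shows both under simple assumptions; the function names are hypothetical and every number is invented purely to show the arithmetic, not a benchmark.

```python
def sov_delta(baseline: dict[str, float], retest: dict[str, float]) -> dict[str, float]:
    """Percentage-point change in AI SoV per engine between two test runs."""
    return {
        engine: round(retest[engine] - baseline[engine], 1)
        for engine in baseline
        if engine in retest
    }


def _rate(numerator: int, denominator: int) -> float:
    return numerator / denominator if denominator else 0.0


def cited_vs_not_cited(rows):
    """rows: (cited, impressions, clicks, conversions) per page or query group.

    Returns CTR (clicks / impressions) and conversion rate (conversions / clicks)
    for the cited and not-cited groups.
    """
    totals = {True: [0, 0, 0], False: [0, 0, 0]}
    for cited, impressions, clicks, conversions in rows:
        bucket = totals[cited]
        bucket[0] += impressions
        bucket[1] += clicks
        bucket[2] += conversions
    return {
        ("cited" if cited else "not_cited"): {
            "ctr": _rate(bucket[1], bucket[0]),
            "conversion_rate": _rate(bucket[2], bucket[1]),
        }
        for cited, bucket in totals.items()
    }


# Illustrative numbers only.
deltas = sov_delta({"google_aio": 12.0, "perplexity": 20.0},
                   {"google_aio": 18.5, "perplexity": 19.0})
print(deltas)  # {'google_aio': 6.5, 'perplexity': -1.0}

summary = cited_vs_not_cited([
    (True, 1000, 50, 5),
    (True, 1000, 30, 3),
    (False, 2000, 20, 1),
])
print(summary["cited"]["ctr"], summary["not_cited"]["ctr"])  # 0.04 0.01
```

A comparison like this is observational, so present it as a directional signal in the decision memo rather than proof that citation caused the lift.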

Looking for a concrete artifact to compare against? Skim a sample AI visibility report with annotated prompts and citations, then adapt the structure to your stack.

Where this goes next

AEO will keep changing as engines evolve. But the combination of solid inclusion foundations, unified observability, and disciplined optimization cycles is durable. Think of it this way: if you can’t measure when you’re cited, you can’t manage your brand narrative in AI answers. Ready to run the pilot? Let’s make it measurable and tie it to pipeline.

If you want a hands‑on walkthrough of a cross‑engine observability setup mapped to your queries and KPIs, you can request a short trial or demo. We’ll align on your prompts, connect your data, and validate the dashboard against your conversion goals.