How AI Summaries Pick Product Recommendations: 2025 Best Practices

Master AI summary product recommendations in 2025: actionable best practices for Google AI Overviews, ChatGPT, and Perplexity. Optimize visibility, credibility, and measurement.

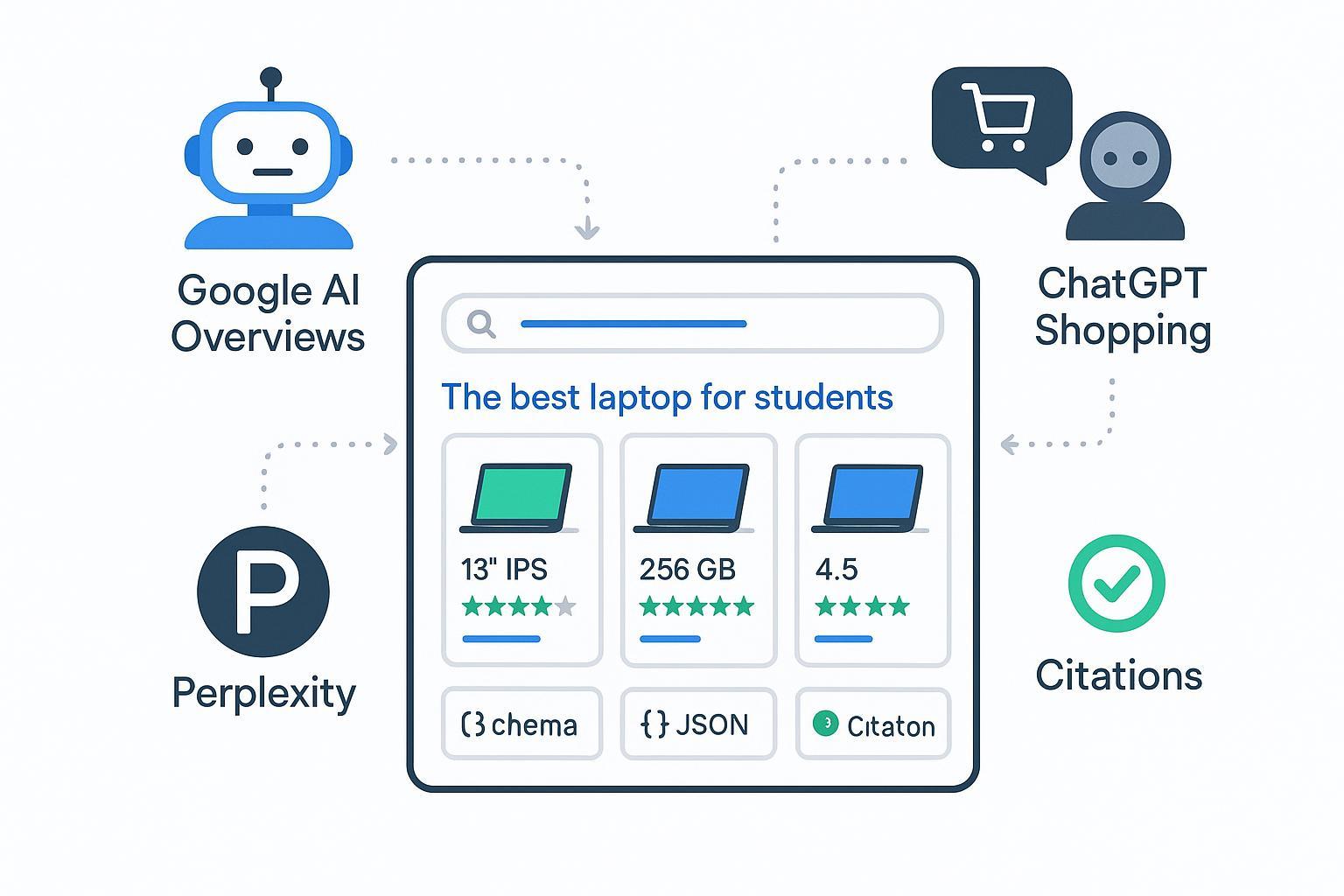

When an AI summary recommends a product at the top of a result, your brand either gets prime visibility—or disappears from the conversation. The tricky part? None of the engines publish full ranking rules. So, how do Google AI Overviews, ChatGPT Shopping Research, and Perplexity actually decide which products to show, and what can you do to be the source they cite?

What AI engines look for (the common threads)

Across platforms, a few patterns show up again and again: accurate, structured product data; content that’s easy to extract into answer blocks; freshness; and credible signals of expertise.

- Make product details extractable: specs tables, concise summaries, FAQs, pros/cons, and clear images with alt text.

- Keep data fresh and consistent: on-page content must match structured data, feeds, and inventory.

- Show credibility: real reviews, expert authorship, clear policies, and references to authoritative sources.

If you’re wondering whether schema or feeds “guarantee” inclusion, here’s the deal: they don’t. They simply increase eligibility and trust while making your information easier to reuse.

Platform mechanics and differences

Google AI Overviews

Google has explained that AI Overviews are generated answers that synthesize multiple sources and include links for exploration, powered by Gemini and surfaced in Search’s AI features. Citations appear inside the overview, and ads sit in clearly labeled, separate slots. See Google’s explanations in the May 2024 AI Overviews announcement and 2025 AI Mode update, and general site-owner guidance in AI features and your website (Search Central, 2025).

What’s not published: granular ranking criteria for which sources and products get cited. Industry studies do measure how often AI Overviews (AIO) appear and how click-through rate (CTR) changes when they do, but treat those results as observations rather than official rules.

ChatGPT Shopping Research

OpenAI’s Shopping Research experience performs multi-site product research, asks clarifying questions, and tailors options as it discovers current details like price and availability. OpenAI’s announcements outline how Search returns links to sources and how Shopping Research curates options: see Introducing ChatGPT Search (OpenAI, 2024) and Shopping Research (OpenAI, 2025). For merchant integrations, OpenAI provides a Product Feed Spec and Agentic Checkout Spec, plus setup guides.

What’s not published: a ranking formula for listing order. OpenAI also documents no paid auction for Shopping Research placements. In practice, the levers you control are feed completeness, accuracy, and update frequency.

Perplexity

Perplexity emphasizes transparent citations and clearly labeled advertising. Answers show inline citations, and sponsored content is identified as “sponsored follow-up questions” or side media. See Why we’re experimenting with advertising (Perplexity, 2024) and the Publishers Program announcement (2024). Exact source-selection criteria aren’t public; the best bet is to publish citation-friendly, well-evidenced content.

| Platform | Official source selection details published? | Citations format | Ads/sponsored disclosure | Product data integration |
|---|---|---|---|---|
| Google AI Overviews | No detailed algorithm; general guidance only | Links within the overview | Ads in separate labeled slots | Structured data (Product/Offer/Review); variants supported |
| ChatGPT Shopping Research | Not full ranking; Search returns links; Shopping uses web + merchant feeds | Links to web sources; curated options | No documented ad auction in Shopping on linked pages | Merchant Product Feed Spec; Agentic Checkout |
| Perplexity | No detailed selection criteria | Inline bracketed citations | “Sponsored follow-up questions” or side media, labeled | Not documented; focus on citations transparency |

Signals you can actually influence

Make your content extractable

AI summaries prefer clean, structured facts they can confidently lift. Think of your product page like a “data layer” for answers: plain-language summaries, specs tables, highlights, and short FAQs.

- Summaries: 2–3 sentences that name the product, use case, and standout attributes.

- Specs: a compact table with the key technical details a shopper would compare.

- Pros/cons: a balanced, evidence-backed list (avoid puffery; link to proof).

- FAQs: 4–6 questions addressing compatibility, sizing, setup, policies.
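
One way to spot-check these elements at scale is a small script that scans product page HTML. The sketch below uses only Python's standard library; the sample markup, the product name, and the heuristics (any `<table>` counts as a specs table, any `<li>` counts toward pros/cons or FAQs) are illustrative assumptions, not a standard that any engine publishes.

```python
from html.parser import HTMLParser


class ExtractabilityAudit(HTMLParser):
    """Counts a few page features that make product facts easy to lift."""

    def __init__(self):
        super().__init__()
        self.tables = 0
        self.images = 0
        self.images_missing_alt = 0
        self.list_items = 0

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "table":
            self.tables += 1
        elif tag == "img":
            self.images += 1
            # A missing or blank alt attribute counts as a gap.
            if not (attr_map.get("alt") or "").strip():
                self.images_missing_alt += 1
        elif tag == "li":
            self.list_items += 1


def audit_page(html: str) -> dict:
    """Return a rough extractability report for one page (heuristic only)."""
    parser = ExtractabilityAudit()
    parser.feed(html)
    return {
        "has_specs_table": parser.tables > 0,
        "images": parser.images,
        "images_missing_alt": parser.images_missing_alt,
        "list_items": parser.list_items,
    }


# Hypothetical product page fragment used only for illustration.
SAMPLE = """
<h1>Trail Runner 2</h1>
<p>Lightweight trail shoe for wet, rocky terrain.</p>
<table><tr><th>Weight</th><td>240 g</td></tr></table>
<img src="side.png" alt="Side view of Trail Runner 2">
<img src="sole.png">
<ul><li>Pro: grippy outsole</li><li>Con: narrow fit</li></ul>
"""

report = audit_page(SAMPLE)
print(report)
```

Running a check like this across your top product URLs gives a quick list of pages missing a specs table or alt text. It does not judge whether the summary or FAQ copy is any good, so pair it with a manual read.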

Keep structured data spotless

Google stresses that structured data supports eligibility but doesn’t guarantee features. The markup must match visible content and pass validation.

- Use Product, Offer, and Review/AggregateRating where appropriate; avoid self-serving reviews. See Product structured data and Review snippet guidance.

- Implement variants using ProductGroup/hasVariant/variesBy so each variant URL accurately reflects selected attributes, images, price, and availability. Google detailed variants support in Product variants (Search Central, 2024).

- Validate routinely with Rich Results Test and monitor Search Console for coverage and errors.
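
To make the variant markup concrete, here is a minimal sketch that builds a schema.org `ProductGroup` with two `Product` variants and serializes it as JSON-LD. The product name, SKUs, URLs, and prices are hypothetical placeholders, and the property set is deliberately small; check Google's current documentation for the fields your listing actually needs before shipping anything like this.

```python
import json


def build_variant(sku: str, color: str, price: str, in_stock: bool, url: str) -> dict:
    """One variant as a schema.org Product with a nested Offer."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@type": "Product",
        "sku": sku,
        "name": f"Trail Runner 2 ({color})",  # hypothetical product name
        "color": color,
        "offers": {
            "@type": "Offer",
            "url": url,
            "priceCurrency": "USD",
            "price": price,
            "availability": f"https://schema.org/{availability}",
        },
    }


def build_product_group(variants: list[dict]) -> dict:
    """Wrap variants in a ProductGroup that declares what varies between them."""
    return {
        "@context": "https://schema.org",
        "@type": "ProductGroup",
        "productGroupID": "trail-runner-2",  # hypothetical group ID
        "name": "Trail Runner 2",
        "variesBy": ["https://schema.org/color"],
        "hasVariant": variants,
    }


group = build_product_group([
    build_variant("TR2-BLK", "Black", "89.00", True,
                  "https://example.com/trail-runner-2?color=black"),
    build_variant("TR2-RED", "Red", "89.00", False,
                  "https://example.com/trail-runner-2?color=red"),
])

# Embed this string in a <script type="application/ld+json"> tag on the page.
json_ld = json.dumps(group, indent=2)
print(json_ld)
```

Generating the markup from the same record that renders the visible page is one way to keep the two from drifting apart, which is the condition Google's guidance stresses.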

Feed completeness and freshness for ChatGPT

If you sell online, treat OpenAI’s product feed like your single source of truth. Enrich beyond the basics, and update frequently.

- Required fields: id, title, description, link, image_link, price, availability; enable_search for discoverability.

- Enrichment: ratings/reviews, rich media, categories, GTIN/MPN, variant attributes.

- Policies: seller identity, returns, privacy, terms—essential for agentic checkout.

- Frequent refreshes: keep price/inventory accurate to avoid demotion; OpenAI’s production guides emphasize automated updates. See Product Feed Spec and Production guidance.
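
A pre-flight check before each feed push can catch incomplete items early. The sketch below validates only the field names listed above; the value encoding (for example, treating `enable_search` as the string or boolean "true") and the sample item are assumptions for illustration, so confirm exact formats against OpenAI's Product Feed Spec.

```python
# Field names taken from the list above; value formats are NOT validated here.
REQUIRED_FIELDS = (
    "id", "title", "description", "link", "image_link", "price", "availability",
)


def validate_item(item: dict) -> list[str]:
    """Return human-readable problems for one feed item (empty list if none)."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = item.get(field)
        if value is None or str(value).strip() == "":
            problems.append(f"missing required field: {field}")
    # Assumption: the flag is expressed as true/"true" when enabled.
    if str(item.get("enable_search", "")).strip().lower() != "true":
        problems.append("enable_search is not set to true")
    return problems


# Hypothetical feed item used only for illustration.
item = {
    "id": "TR2-BLK",
    "title": "Trail Runner 2 (Black)",
    "description": "Lightweight trail shoe for wet, rocky terrain.",
    "link": "https://example.com/trail-runner-2?color=black",
    "image_link": "https://example.com/img/tr2-black.png",
    "price": "",  # deliberately blank to show a failure
    "availability": "in_stock",
    "enable_search": True,
}

for problem in validate_item(item):
    print(problem)
```

Failing the push when `validate_item` returns anything is a simple guard; logging the problems instead lets you track the mismatch rate over time.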

Workflow: from audit to measurement

A practical sequence helps teams ship improvements without getting lost in theory.

- Audit extractability and accuracy: inventory product pages for summaries, specs tables, FAQ blocks, image alt text, and review integrity. Check that visible content matches schema and feeds.

- Implement and validate: add or refine JSON-LD (Product/Offer/Review), set up variants, and validate in Rich Results Test; configure OpenAI feed fields and test ingestion.

- Refresh cadence: schedule monthly content and schema reviews; automate feed updates to reflect price/availability changes.

- Monitor and iterate: track inclusion and citations across engines, plus CTR and sentiment shifts; prioritize pages with the highest opportunity.
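
The audit step's "visible content matches schema and feeds" check can be automated once you can pull the same fields from each source. This is a minimal sketch that assumes you have already extracted price and availability from the page, the JSON-LD, and the feed into a common format; the `Snapshot` type and the sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Price and availability for one SKU as seen in one source."""
    source: str        # e.g. "page", "schema", "feed"
    price: str
    availability: str


def find_mismatches(snapshots: list[Snapshot]) -> list[str]:
    """Report each field whose value differs between sources."""
    mismatches = []
    for field in ("price", "availability"):
        values = {getattr(s, field) for s in snapshots}
        if len(values) > 1:
            detail = ", ".join(f"{s.source}={getattr(s, field)}" for s in snapshots)
            mismatches.append(f"{field}: {detail}")
    return mismatches


# Hypothetical values: the feed still carries an old price.
snapshots = [
    Snapshot("page", "89.00", "in_stock"),
    Snapshot("schema", "89.00", "in_stock"),
    Snapshot("feed", "94.00", "in_stock"),
]

for line in find_mismatches(snapshots):
    print(line)
```

The comparison is a strict string match, so normalize currency formatting and availability labels before building snapshots, or equivalent values will be flagged as mismatches.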

Key KPIs:

- AIO trigger rate: how often tracked queries show AI Overviews.

- Citation share-of-voice: the percentage of answers or overviews that cite your domain.

- Sentiment: the tone of AI answers referencing your brand.

- CTR deltas: organic CTR changes when AIO appears; segment by cited vs. non-cited.

- Feed freshness: update cadence and mismatch rate on price/inventory.
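
Most of these KPIs reduce to simple ratios once observations are logged per tracked query. The sketch below shows one possible calculation; the `QueryObservation` record, its field names, and the sample numbers are assumptions for illustration, and share-of-voice is computed here as the share of AIO-showing queries that cite your domain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryObservation:
    """One tracked query over a reporting period (hypothetical schema)."""
    query: str
    aio_shown: bool
    domain_cited: bool
    clicks: int
    impressions: int


def ctr(rows: list[QueryObservation]) -> float:
    """Aggregate click-through rate; 0.0 when there are no impressions."""
    impressions = sum(r.impressions for r in rows)
    return sum(r.clicks for r in rows) / impressions if impressions else 0.0


def summarize(rows: list[QueryObservation]) -> dict:
    aio = [r for r in rows if r.aio_shown]
    no_aio = [r for r in rows if not r.aio_shown]
    cited = [r for r in aio if r.domain_cited]
    not_cited = [r for r in aio if not r.domain_cited]
    return {
        "aio_trigger_rate": len(aio) / len(rows) if rows else 0.0,
        "citation_share_of_voice": len(cited) / len(aio) if aio else 0.0,
        "ctr_without_aio": ctr(no_aio),
        "ctr_with_aio_cited": ctr(cited),
        "ctr_with_aio_not_cited": ctr(not_cited),
    }


# Made-up observations, not real measurements.
rows = [
    QueryObservation("best trail shoes", True, True, 30, 1000),
    QueryObservation("trail shoes wide fit", True, False, 10, 1000),
    QueryObservation("trail runner 2 price", False, False, 50, 1000),
    QueryObservation("waterproof trail shoes", True, False, 10, 1000),
]

kpis = summarize(rows)
for name, value in kpis.items():
    print(f"{name}: {value:.3f}")
```

Comparing `ctr_with_aio_cited` against `ctr_with_aio_not_cited` gives the cited vs. non-cited segmentation described above; sentiment needs a separate classification step and is left out of this sketch.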

Tool example (disclosure): Geneo is an AI search visibility platform that monitors brand mentions, citations, and sentiment across ChatGPT, Perplexity, and Google AI Overviews, with historical prompt tracking and dashboards. If you need a single place to watch citation share-of-voice and trends, Geneo’s beginner guide explains how teams operationalize monitoring without reinventing the wheel.

For deeper optimization playbooks, see Geneo’s Best practices for AI answer engine content optimization (2025) and an overview of new AI search terms in Decoding GEO, GSVO, GSO, AIO, LLMO.

Evidence snapshot: why citations matter

Multiple 2024–2025 studies show that when AI Overviews appear, traditional organic CTR often drops, and being cited can mitigate the impact.

- Seer Interactive’s 2024–2025 analysis across thousands of queries observed organic CTR falling sharply on AIO-triggering queries, while cited brands saw notably higher CTR than non-cited peers. See Seer’s summary and coverage in Seer Interactive’s AIO CTR analysis and Search Engine Land’s report (2025).

- Amsive’s 2025 research across 700K keywords reported an average 15.49% drop in CTR on AIO-triggering terms and offered remediation strategies that emphasize earning citations and improving extractable content. See Amsive’s analysis (2025).

These findings don’t reveal Google’s algorithm, but they reinforce a practical takeaway: make your pages the easiest, most credible sources to cite.

Pitfalls and compliance notes

- Self-serving reviews: avoid marking up testimonials you control as independent reviews; follow Google’s Review Snippet guidance.

- Stale feeds and mismatched schema: inconsistency between your page, markup, and feed can erode an engine’s trust in your data; ensure price, availability, and variant attributes align everywhere.

- Overclaiming and thin content: unsupported superlatives or filler make your page less likely to be cited.

- Ignoring validation: skip Rich Results Test and Search Console at your peril; schema errors quietly erode eligibility.

Action plan: what to do this quarter

- Weeks 1–2: Run an extractability audit on your top 50 product URLs; add summaries, specs tables, and 4–6 FAQs per page. Fix image alt text and ensure on-page details match schema.

- Weeks 3–4: Implement or correct Product/Offer/Review JSON-LD; set up ProductGroup variants; validate and fix errors. Stand up or enrich your OpenAI product feed with optional fields (ratings, GTIN, rich media) and automate updates.

- Weeks 5–8: Monitor AIO trigger rate, citation share-of-voice, sentiment, and CTR deltas. Identify pages where citations are absent and iterate—improve evidence density, refresh content, and pursue authoritative references.

Want a quick win? Start with one category, ship the full workflow, and compare before/after citation rates. What do you learn when your best-selling SKU finally earns a citation?

Notes on sources and policies

- Google publishes general guidance for AI Search features and structured data but does not disclose detailed AIO selection criteria. See AI features and your website (2025) and Product structured data docs.

- OpenAI’s Shopping Research relies on web sources and merchant feeds, with official specs for product and checkout. See Product Feed Spec and Agentic Checkout Spec.

- Perplexity labels sponsored content and emphasizes citations, but does not publish selection criteria. See Advertising disclosure (2024).