How AI Summaries Choose Which Brands to Recommend

Learn how AI summaries on Google, ChatGPT, and Perplexity choose which brands to recommend. Get expert insights on eligibility, selection signals, and citation strategies.

Ever wondered why your competitor gets named in AI answers while your brand doesn’t? The short version: AI summaries blend classic search eligibility with a new layer of retrieval and synthesis. If your pages aren’t easily found, verifiable, and “answerable,” you’re unlikely to be cited—no matter how pretty your homepage looks.

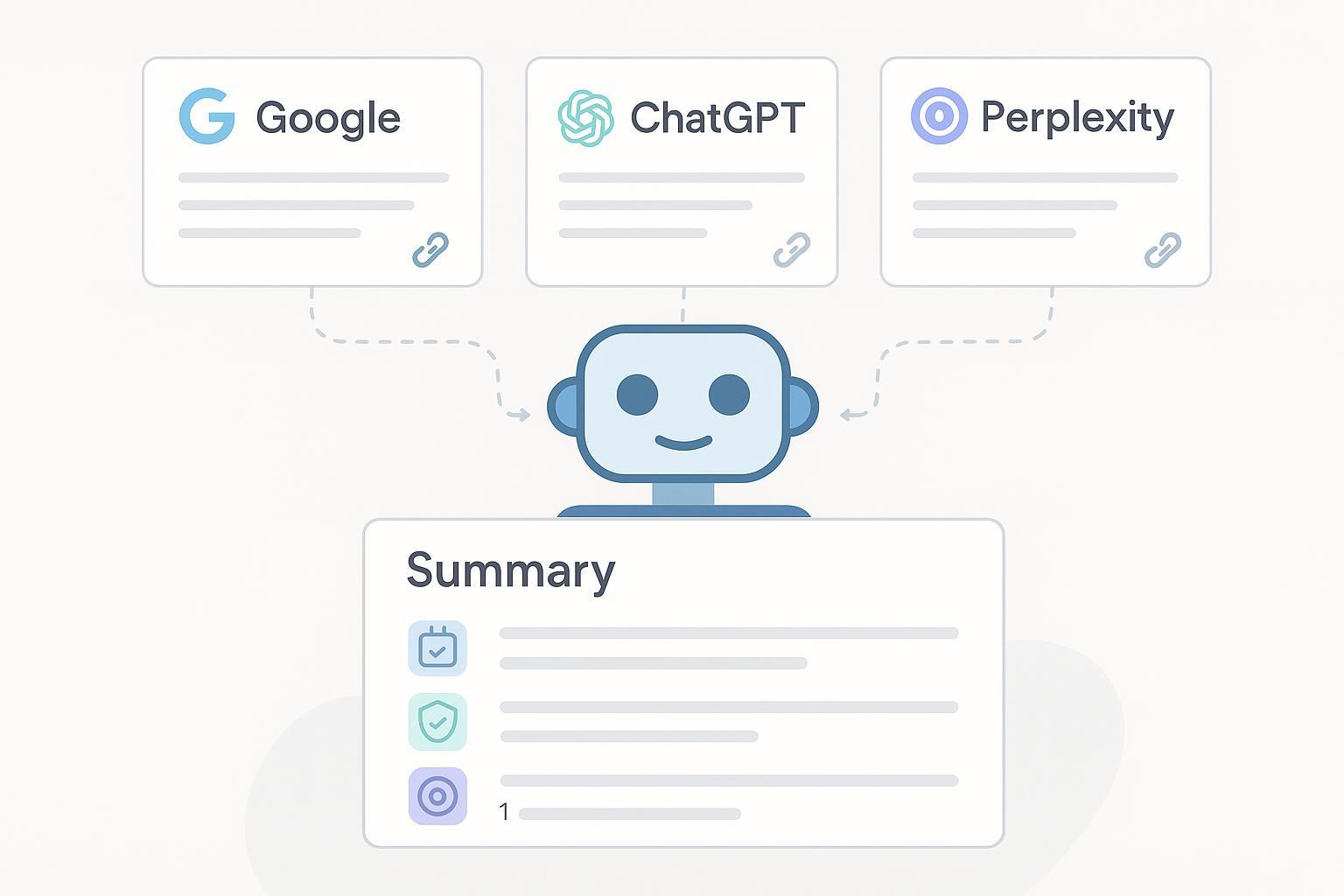

Think of an AI summary like a research assistant compiling a brief from several articles: it pulls relevant passages, checks a couple of corroborating sources, and then writes a concise recommendation. The mechanics differ by platform, but the principle holds across Google AI Overviews (or AI Mode), ChatGPT Search, and Perplexity.

What counts as an AI summary (and how it differs from classic SERPs)

Traditional search results list web pages in rank order. AI summaries synthesize information into a narrative answer and display supporting links so readers can verify claims. That synthesis usually involves multiple steps: query expansion, retrieval from several sources, and consolidation under policy and safety filters. The outcome isn’t simply a “position 1–10” ranking; it’s a curated answer justified by citations.
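To make those steps concrete, here is a deliberately toy sketch of the expand, retrieve, and consolidate loop. Nothing in it reflects how Google, ChatGPT, or Perplexity is actually implemented: the `expand`, `score`, and `summarize` functions, the synonym table, and the example pages are all hypothetical, and real systems rely on learned rankers rather than word overlap.

```python
from collections import defaultdict

# Hypothetical corpus: URL -> page text. Real engines search a web-scale index.
PAGES = {
    "https://example.com/crm-guide": "best crm for small business teams with pricing",
    "https://example.com/about": "our company history and mission",
    "https://example.org/crm-review": "independent review of crm software pricing",
}

# Hypothetical expansion table standing in for a model's query rewriting.
SYNONYMS = {"crm": ["crm software"], "best": ["top"]}


def expand(query: str) -> list[str]:
    """Return the original query plus simple rewrites of it."""
    variants = [query]
    for word, alternatives in SYNONYMS.items():
        if word in query.split():
            variants += [query.replace(word, alt) for alt in alternatives]
    return variants


def score(query: str, text: str) -> int:
    """Count how many distinct query words appear in the page text."""
    return len(set(query.split()) & set(text.split()))


def summarize(query: str, top_k: int = 2) -> list[str]:
    """Retrieve for every query variant, then keep the top_k pages overall."""
    totals = defaultdict(int)
    for variant in expand(query):
        for url, text in PAGES.items():
            totals[url] += score(variant, text)
    ranked = sorted(totals, key=lambda url: totals[url], reverse=True)
    # Pages that never matched are dropped rather than cited.
    return [url for url in ranked[:top_k] if totals[url] > 0]


citations = summarize("best crm pricing")
print(citations)
```

Even in this toy version, the "about" page is never cited because it answers none of the query variants, which is the practical point: a page has to match the expanded intent to enter the candidate pool at all.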

Eligibility and transparency: When your pages can be cited

- Google: According to Google’s own documentation, AI features in Search draw from the standard web index and display “relevant supporting links.” Pages need to be indexable and eligible for a Search snippet; the normal controls (robots, noindex, nosnippet) still apply and can affect whether a page is shown as a supporting link. Google Developers covers this in the document titled “AI features in Search.” Google’s quality and spam policies also shape the pool of eligible sources, especially after updates that target low‑quality or manipulative content; for the policy context, see Google Search Central’s post on the March 2024 core update and spam policies.

- Reference: Google Developers — AI features in Search

- Reference: Google Search Central Blog — March 2024 core update and spam policies

- ChatGPT Search: When ChatGPT uses search, it shows inline citations and a Sources panel that aggregates links. Under the hood, the system issues one or more targeted queries and grounds its answer on retrieved pages. To be among those citations, your content must be retrievable, relevant to the intent, and easy to verify. OpenAI documents these behaviors in its help center article on ChatGPT Search.

- Reference: OpenAI Help — ChatGPT search

- Perplexity: Perplexity operates a real‑time, AI‑assisted search flow and consistently surfaces citations alongside answers so readers can check claims. Its “Deep Research” mode goes further, issuing many queries, reading broadly, and compiling a more exhaustive report before citing sources. This emphasis on verifiability and breadth means recency, clarity, and cross‑source corroboration matter.

- Reference: Perplexity Help Center — How does Perplexity work?

- Reference: Perplexity Blog — Introducing Perplexity Deep Research
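Being retrievable on any of these platforms starts with crawl access. As a minimal sketch, Python's standard `urllib.robotparser` can check whether a robots.txt file blocks a crawler from a URL. The robots.txt content and the example.com URL are made up, and the user-agent tokens (`Googlebot`, `OAI-SearchBot`, `PerplexityBot`) are assumptions to confirm against each vendor's current crawler documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt; in practice, load your site's real file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: PerplexityBot
Disallow: /
"""

# Assumed crawler tokens; confirm them in each vendor's documentation.
CRAWLERS = ["Googlebot", "OAI-SearchBot", "PerplexityBot"]


def crawl_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Map each crawler token to whether robots.txt lets it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in CRAWLERS}


access = crawl_access(ROBOTS_TXT, "https://example.com/guides/pricing")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Keep in mind that robots.txt governs crawling only. The noindex and nosnippet controls are set separately in page markup or response headers, so a page can pass this check and still be ineligible.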

The common selection signals (what consistently matters)

- Semantic relevance and answerability: Clear sections that match user intent, with concise, citable statements up top and detail below.

- Authority and experience signals: Visible authorship, credentials where appropriate, and transparent sourcing. Treat E‑E‑A‑T as a quality lens rather than a “ranking factor knob.”

- Freshness and timestamps: Clearly dated updates for time‑sensitive topics; quick responsiveness to new information.

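- Machine‑readability: Clean HTML, descriptive headings, and well‑structured facts. Where appropriate, add structured data that Google’s search gallery currently supports (for example, Article or Product) to aid understanding, not as a guarantee of inclusion. Check the gallery before choosing a type, because support changes over time: Google has scaled back FAQ rich results and retired HowTo rich results.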

- Corroboration and safety: Claims supported by reputable sources; conservative framing in sensitive categories (health, finance, legal).

For schema reference and what Google actually supports today, see Google Developers — Structured data: Search Gallery.
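As an illustration, the snippet below builds schema.org `Article` markup as JSON-LD, including the author and modification date that the authority and freshness signals call for. The headline, author name, and dates are placeholder values, and `article_jsonld` is a hypothetical helper; validate real markup against Google's documentation before shipping it.

```python
import json


def article_jsonld(headline: str, author: str, published: str, modified: str) -> str:
    """Return a <script> tag holding schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,  # ISO 8601 date
        "dateModified": modified,    # ISO 8601 date
    }
    # Escape "</" so a value cannot close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration only.
snippet = article_jsonld(
    headline="How AI Summaries Choose Which Brands to Recommend",
    author="Jane Doe",
    published="2025-01-15",
    modified="2025-03-02",
)
print(snippet)
```

The markup should only restate facts that are also visible on the page, such as the byline and the "last updated" date.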

Platform nuances and practical implications

- Google AI Overviews: Sources are drawn from the standard Search index and constrained by Search Essentials and spam policies. You won’t be cited if the page isn’t indexable or is blocked from snippets. Practical implication: fix crawl/indexing issues first, then improve “answerability.” Don’t present E‑E‑A‑T as a badge; demonstrate it through author identity, references, and on‑page clarity. Core updates and spam policy enforcement shape the eligible pool, which is why thin or unoriginal content often disappears from supporting links.

- ChatGPT Search: The system issues targeted web queries and grounds answers with citations. Precision matters: concise claims, clear titles, and obvious evidence improve selection odds. If the model had to pick three sources to justify a claim, would your page make the cut? Make it easy with short, verifiable summaries and links to primary data.

- Perplexity (including Deep Research): Perplexity favors verifiability and breadth. Recency signals (visible timestamps, changelogs, or updated sections) can help. Being referenced by reputable third‑party domains that Perplexity often crawls can indirectly improve your likelihood of inclusion by strengthening corroboration.

If you’re comparing platform behaviors and planning monitoring workflows, three pieces on Geneo’s blog may help: “Peec AI Review 2025: Prompt‑level Search Visibility” covers prompt‑level tracking across engines, “RankScale.ai Review 2025” gives an overview of agency‑grade workflows, and “Google Algorithm Update October 2025” adds broader context on how Google’s updates ripple into reporting.

Make your pages citation‑friendly (policy‑safe checklist)

- Ensure indexability and snippet eligibility: correct canonicals, no accidental noindex, and avoid nosnippet if you expect to be cited.

- Lead with an “answer block”: a 2–4‑sentence summary that directly addresses the query, followed by detail.

- Show author identity and experience: brief bio with relevant credentials; link to research or firsthand work.

- Add visible “last updated” dates; maintain a short changelog for evolving topics.

- Provide primary data or unique analysis, and link to your sources for verification.

- Use supported structured data where it genuinely fits the content; keep HTML clean and headings descriptive.

- Cross‑source corroboration: get referenced by credible third‑party sites in your niche.
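The first checklist item is the easiest to automate. The sketch below, using only Python's standard `html.parser`, scans a page's HTML for robots meta directives and a canonical link. The `RobotsMetaAudit` class, the `audit` function, and the sample page are hypothetical, and the check is intentionally narrow: it does not look at `X-Robots-Tag` response headers, `max-snippet` limits, or `data-nosnippet` attributes.

```python
from html.parser import HTMLParser


class RobotsMetaAudit(HTMLParser):
    """Collect robots directives and the canonical URL from a page's HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.directives = set()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        if tag == "meta" and name in {"robots", "googlebot"}:
            content = attr.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, follow".
            self.directives |= {
                d.strip().lower() for d in content.split(",") if d.strip()
            }
        elif tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonical = attr.get("href")


def audit(html: str) -> list[str]:
    """Return human-readable warnings for settings that can block citation."""
    parser = RobotsMetaAudit()
    parser.feed(html)
    warnings = []
    for directive in ("noindex", "nosnippet"):
        if directive in parser.directives:
            warnings.append(f"meta robots contains '{directive}'")
    if parser.canonical is None:
        warnings.append("no canonical link found")
    return warnings


# Hypothetical page with an accidental noindex.
PAGE = """
<html><head>
  <meta name="robots" content="noindex, follow">
  <link rel="canonical" href="https://example.com/guide">
</head><body><h1>Guide</h1></body></html>
"""
print(audit(PAGE))
```

Running a check like this over priority URLs before any content work helps confirm that eligibility problems are not masking otherwise citable pages.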

Measurement and monitoring (with a neutral micro‑example)

What should you actually track over time? Focus on four threads: whether you’re cited for priority intents and which URLs are attributed; the sentiment or stance of the mention; the share of competing brands that appear alongside you; and how quickly updates on your pages show up in citations. Manual spot‑checks still work—rerun queries on a fixed schedule, log screenshots and cited URLs, and note changes. But once you’re tracking dozens of queries across multiple engines, it’s easier to centralize the work.

Disclosure: Geneo is our product. In a typical monitoring workflow, you can set up recurring watches for brand mentions across Google AI Overviews, ChatGPT Search, and Perplexity; record the cited source URLs; and tag each mention with sentiment for weekly review. Over time, this builds a history that shows which content updates actually move the needle and where competitors are gaining share.

If you operate in an agency model, documenting this as a repeatable “AI visibility report” helps clients understand progress and budget allocations—and it keeps everyone focused on answerable content rather than vanity pages.
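For teams starting with manual spot-checks, the tracking threads above can be sketched as a small log plus two summary metrics. Everything here is illustrative: the `Observation` fields, the engine labels, and the "Acme" and "Globex" brands are made up, and sentiment is assumed to be tagged by a human reviewer. The sketch does not cover update lag, which would need page revision dates as well.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Observation:
    """One spot-check of one query on one engine."""
    engine: str
    query: str
    checked_on: str          # ISO date of the spot-check
    cited_brands: list[str]  # brands named in the answer
    cited_urls: list[str]    # supporting links shown
    sentiment: str           # reviewer tag: "positive", "neutral", "negative"


def citation_rate(log: list[Observation], brand: str) -> float:
    """Fraction of observations in which the brand was cited."""
    if not log:
        return 0.0
    return sum(brand in obs.cited_brands for obs in log) / len(log)


def share_of_voice(log: list[Observation]) -> dict[str, float]:
    """Each brand's share of all brand mentions across the log."""
    counts = Counter(b for obs in log for b in obs.cited_brands)
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}


# Made-up log entries for illustration.
LOG = [
    Observation("google_ai_overviews", "best crm for startups", "2025-03-03",
                ["Acme", "Globex"], ["https://acme.example/crm"], "positive"),
    Observation("chatgpt_search", "best crm for startups", "2025-03-03",
                ["Globex"], ["https://globex.example/pricing"], "neutral"),
    Observation("perplexity", "best crm for startups", "2025-03-03",
                ["Acme", "Globex"], ["https://acme.example/crm"], "neutral"),
    Observation("perplexity", "crm pricing comparison", "2025-03-03",
                ["Globex"], ["https://globex.example/pricing"], "positive"),
]

print(citation_rate(LOG, "Acme"))
print(share_of_voice(LOG))
```

Re-running the same queries on a fixed schedule and appending to the log is what turns these numbers into a trend rather than a snapshot.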

YMYL and safety guardrails

Sensitive categories such as health, finance, and legal deserve a higher bar. Keep claims conservative and tie them to primary or official sources, bring qualified reviewers into the process where needed, and make that review visible on‑page. Avoid prescriptive language that could be misapplied without context, and keep disclaimers and dates current. These steps align with platform safety systems and the spirit of Google’s quality guidance, which tends to favor cautious, well‑sourced content in YMYL spaces.

Next steps

Fix eligibility first by confirming crawl, indexing, and snippet settings on priority pages. Make answers obvious with short, citable summaries, clear authorship, and visible dates. Publish something worth citing—original data, methods, or head‑to‑head comparisons—and earn corroboration from credible third parties. Finally, baseline today’s citations and sentiment, then track how they change after updates.

Here’s the deal: brands that treat AI summaries as “research‑backed answers with receipts” earn more citations over time. Build pages that are easy to find, easy to verify, and easy to quote—and the recommendations will follow.