How to Boost Your Brand’s AI Recommendation Rate (2025)

Discover proven 2025 strategies for increasing your brand’s AI recommendation rate: actionable frameworks, advanced best practices, and expert insights for digital marketers, SEO teams, and agencies.

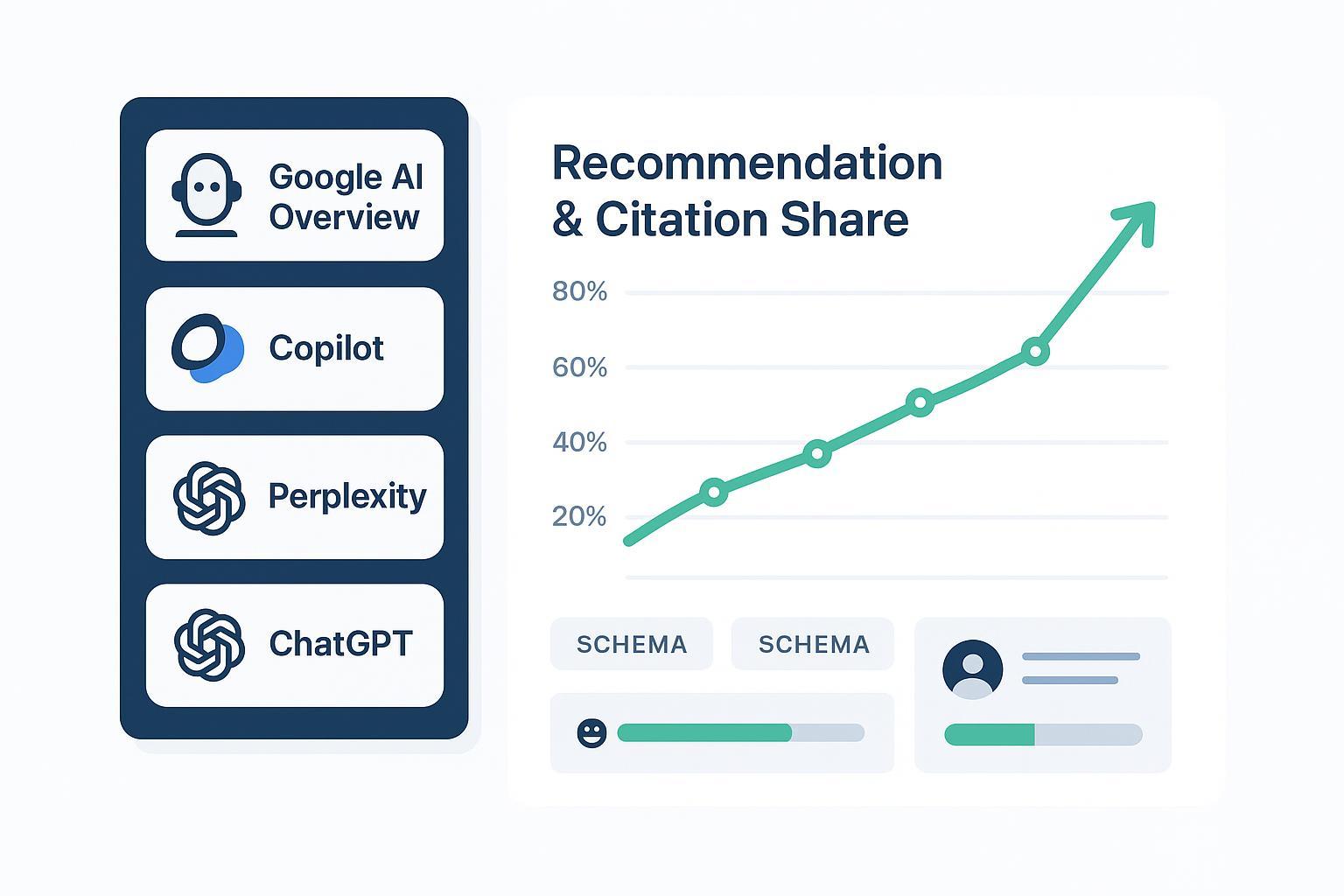

If AI assistants increasingly answer before people click, your brand can’t afford to be invisible inside those answers. By “AI recommendation rate,” I mean the frequency with which your brand (domains, products, authors, or claims) is surfaced, cited, or recommended across AI answer engines for a defined query set. In 2025, this matters because people click fewer traditional links when an AI summary appears: Pew Research Center reported a measurable reduction in click-through behavior when Google shows an AI summary in results, shifting attention to the sources cited inside the module itself. See the study “Do people click on links in Google AI summaries?” (Pew Research Center, July 2025).

So, how do you reliably increase that recommendation rate? Here’s the playbook I use with teams that need outcomes, not theory.

1) Set the goal and baseline

Start by framing AI visibility as a measurable funnel. If you need a deeper primer, the explainer “What is AI Visibility? Brand Exposure in AI Search” provides definitions and implications.

- Define your universe: topical cluster, geo/language, and commercial vs. informational intent.

- Baseline three weeks of data: appearance rate (where AI modules show up), your citation share, your sentiment inside answers, and any downstream traffic/leads attributable to cited links.

- Lock KPIs and cadences (see the KPI table below) and assign ownership for weekly experiments.
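To make the baseline concrete, here is a minimal sketch of how appearance rate and citation share could be computed from sampled results. The `Observation` record shape, the engine labels, and the sample queries are assumptions for illustration; adapt them to however your team actually logs its sampling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One sampled query on one engine (hypothetical record shape)."""
    query: str
    engine: str
    module_appeared: bool  # did an AI answer module show up?
    brand_cited: bool      # was the brand cited inside that module?


def appearance_rate(observations: list[Observation]) -> float:
    """Share of tracked observations where an AI module appeared."""
    if not observations:
        return 0.0
    return sum(o.module_appeared for o in observations) / len(observations)


def citation_share(observations: list[Observation]) -> float:
    """Share of appearing modules that cite the brand."""
    with_module = [o for o in observations if o.module_appeared]
    if not with_module:
        return 0.0
    return sum(o.brand_cited for o in with_module) / len(with_module)


# Illustrative sample only; not real measurements.
sample = [
    Observation("best crm for startups", "google_aio", True, True),
    Observation("best crm for startups", "perplexity", True, False),
    Observation("crm pricing comparison", "google_aio", True, False),
    Observation("what is a crm", "google_aio", False, False),
]

print(f"appearance rate: {appearance_rate(sample):.0%}")
print(f"citation share:  {citation_share(sample):.0%}")
```

Note the different denominators: appearance rate divides by all tracked observations, while citation share divides only by observations where a module appeared. Mixing the two is a common way to understate your share.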

Why start this way? Because the mechanics of AI selection don’t always mirror organic rankings. In 2025, Ahrefs found only 12% overlap between URLs cited by AI assistants and Google’s top 10 results, showing that traditional SEO alone won’t cut it. See the finding in Ahrefs’ 2025 analysis.

2) What AI engines actually reward

Google’s guidance for AI Overviews reiterates people-first content, experience (E-E-A-T), and structured data. No special “AIO-only” tricks—quality fundamentals still rule. See Google’s stance in Succeeding in AI Search (2025).

Across major engines, the recurring inclusion signals you can influence are authority and credibility, answerability, freshness, structure, and technical accessibility. In practice, that means recognizable entities and expert authors, concise answers supported by citations, recent updates with visible datestamps, clean HTML with helpful headings and appropriate schema (Organization, Person, FAQPage, HowTo), and a crawlable, fast site.

Platform-wise, Google AI Overviews can cite beyond the top 10 when an answer fits intent and quality signals. Microsoft Copilot (with web grounding) shows inline citations and leans on Bing Webmaster standards. Perplexity prioritizes clarity and authority in live retrieval and cites inline. ChatGPT (browsing) displays sources when browsing is enabled; vendor language on crawlers and controls evolves, so avoid absolute claims.

3) A step-by-step playbook: from content to citations

Step 1: Map question intents

- Cluster your core topics and enumerate the conversational questions people and journalists actually ask. Prioritize those likely to trigger AI modules.

- Capture variants (comparisons, pros/cons, “best for X”) and align them to specific pages.

Step 2: Make pages answerable

- Give a crisp, supported answer high on the page. Use short paragraphs, scannable lists or a compact table where useful, and cite primary sources.

- Add appropriate schema (FAQPage, HowTo, Organization, Person). SurferSEO’s 2025 reporting associates reformatted, answerable content and schema with a 20–35% increase in AI citation share over 3–6 months; treat this as directional, not guaranteed. See the methodology in SurferSEO’s AI Citation Report (2025).
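As a rough illustration of the schema step, the sketch below builds FAQPage JSON-LD from question and answer pairs using the schema.org vocabulary. The helper names `faq_jsonld` and `script_tag` and the sample question are hypothetical; your CMS or SEO plugin may already generate this markup for you.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in a script tag for the page head or body."""
    # Escape "</" so an answer containing "</script>" cannot close the tag early.
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


markup = faq_jsonld([
    (
        "What is AI recommendation rate?",
        "The frequency with which a brand is surfaced, cited, or recommended "
        "across AI answer engines for a defined query set.",
    ),
])
print(script_tag(markup))
```

Keep the marked-up answers identical to the visible on-page text, and validate the output with a structured data testing tool before shipping.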

Step 3: Strengthen entity and author signals

- Ensure you have robust Organization and Person pages with credentials, editorial standards, and contact details.

- Cross-validate the brand and authors via reputable third parties and professional profiles.

Step 4: Freshness and change logs

- Add update notes when you revise facts, screenshots, or pricing. Keep a cadence for volatile topics.

- Refresh supporting references so models retrieve recent, authoritative evidence.
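One way to keep that cadence honest is to compute a freshness delta (days since the last meaningful update) for each page and flag anything past a threshold. This is a sketch under assumptions: the page paths, dates, and 90-day threshold are invented for illustration, and in practice the dates would come from your CMS or audit log.

```python
from datetime import date


def freshness_delta(last_updated: date, today: date) -> int:
    """Days elapsed since the last meaningful update."""
    return (today - last_updated).days


def stale_pages(pages: dict[str, date], today: date, max_age_days: int) -> list[str]:
    """Return page paths whose freshness delta exceeds the threshold, sorted."""
    return sorted(
        path
        for path, updated in pages.items()
        if freshness_delta(updated, today) > max_age_days
    )


# Hypothetical pages and update dates.
pages = {
    "/pricing": date(2025, 9, 1),
    "/guide": date(2025, 3, 15),
}
today = date(2025, 10, 1)

for path, updated in pages.items():
    print(path, freshness_delta(updated, today), "days")

# The 90-day threshold is an assumption; volatile topics may need a shorter one.
print("needs refresh:", stale_pages(pages, today, max_age_days=90))
```

Passing `today` explicitly rather than calling `date.today()` inside the function keeps the check reproducible across reporting windows.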

Step 5: Monitor and iterate weekly

- Track appearance rate, citation share, sentiment, and misattributions across engines.

- Run small, page-level experiments: headline rephrasing for clarity, schema adjustments, and evidence upgrades. Seer Interactive’s client work shows that restructuring pages for “AI answerability” correlates with 20–30% organic growth on informational queries and double-digit lead lifts; results vary by vertical and baseline authority. For details, see Seer Interactive’s 2025 update.

4) Practical monitoring example (neutral workflow)

Disclosure: Geneo is our product.

Here’s a reproducible process you can mirror in your analytics stack. The objective is to monitor AI recommendation rate and act on gaps—without assuming any vendor “magic.”

- Define your query sets by intent cluster and geography. Add known “AI module triggers.”

- Instrument collection for: module appearance rate, your citations by engine, sentiment of mentions, and downstream clicks/leads from cited links.

- Segment by entity: brand, author, product, and competitor.

- Review weekly and annotate changes (content updates, schema edits, PR wins, negative coverage).

How this looks in a multi-brand context: Teams configure dashboards to pull Google AI Overviews, Copilot, Perplexity, and ChatGPT browsing results, then visualize citation share, sentiment, and share of voice over time. They compare before/after for pages that were restructured for answerability and refresh cadence. If you want a visual reference for multi-brand monitoring layout, see the Geneo Agency Dashboard overview. The key is not the tool—it's the habit: measure, explain variance, and ship controlled updates every sprint.

5) Troubleshooting and risk controls

When citation share stays low, start with intent alignment. Are you answering the exact question the module targets, with precise language and current sources? Next, audit structure and schema for scannability and consistency, and eliminate competing or duplicative pages.

To handle bias, outdated snippets, or misattributions, provide balanced coverage with multiple reputable sources, publish clarifications where your brand is commonly misinterpreted, and keep a visible changelog that creates fresh, citable artifacts.

For privacy-by-design measurement, separate consenting and non-consenting users in reporting, minimize personal data in any personalization logic, document profiling purposes, and ensure users have clear opt-out routes.

In the EU, expect more transparent “why this source” disclosures under the AI Act, DSA, and DMA regimes. Consistent entity and author signals help those explanations land.

6) Agency vs. in-house nuances

Agencies benefit from standardized intent mapping and cross-engine monitoring templates that allow like-for-like comparisons. Prioritize clients by “improvable share” (modules appear but the brand isn’t cited) and by commercial-intent density. Normalize reporting windows for credible before/after comparisons, and keep a weekly change log per account. In-house teams should coordinate with PR, product marketing, and social—third-party validations and executive profiles often tip inclusion. Institutionalize a freshness calendar with clear owners and approval flows, and maintain an “answerability pattern library” so writers can reuse winning structures.
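For agencies, the prioritization above can be sketched as a simple ranking. The `ClientSnapshot` fields, the sample numbers, and especially the choice to multiply improvable share by commercial-intent density are assumptions for illustration; the article only names the two criteria, not how to combine them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClientSnapshot:
    """Per-client counts for one reporting window (hypothetical shape)."""
    name: str
    tracked_queries: int
    modules_appeared: int
    modules_citing_brand: int
    commercial_queries: int

    @property
    def improvable_share(self) -> float:
        """Share of tracked queries where a module appears but the brand is not cited."""
        if self.tracked_queries == 0:
            return 0.0
        uncited = self.modules_appeared - self.modules_citing_brand
        return uncited / self.tracked_queries

    @property
    def commercial_density(self) -> float:
        """Share of tracked queries with commercial intent."""
        if self.tracked_queries == 0:
            return 0.0
        return self.commercial_queries / self.tracked_queries


def prioritize(clients: list[ClientSnapshot]) -> list[ClientSnapshot]:
    """Rank clients by improvable share weighted by commercial density (assumed scoring)."""
    return sorted(
        clients,
        key=lambda c: c.improvable_share * c.commercial_density,
        reverse=True,
    )


# Illustrative numbers only.
clients = [
    ClientSnapshot("A", 100, 60, 10, 40),
    ClientSnapshot("B", 100, 30, 25, 80),
    ClientSnapshot("C", 50, 40, 10, 30),
]

for c in prioritize(clients):
    print(c.name, f"{c.improvable_share:.0%}", f"{c.commercial_density:.0%}")
```

A product score is only one option. If commercial intent matters more for a given book of business, a weighted sum or a two-level sort may fit better.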

7) Quick reference: KPIs and a weekly sprint checklist

Two rules of thumb: measure what models can see (clarity, structure, evidence) and what users can feel (helpfulness, sentiment, conversion). Then iterate.

| KPI | What it Measures | Data Source | Cadence |
|---|---|---|---|
| AI module appearance rate | % of tracked queries where an AI answer module appears | SERP/assistant sampling | Weekly |
| Brand citation share | % of those modules that cite your brand (by domain/entity) | AI engines’ citations | Weekly |
| Sentiment of mentions | Tone of brand mentions within AI answers | Content/sentiment review | Weekly |
| Downstream traffic/leads | Clicks and conversions from cited links | Analytics/CRM | Weekly/Monthly |
| Page “answerability” score | Structural readiness: clarity, schema, evidence | Content audit | Biweekly |
| Freshness delta | Days since last meaningful update | CMS/audit log | Weekly |

Weekly sprint checklist

- Audit 10–20 priority queries: where did the module appear, and were you cited?

- Ship 2–3 on-page improvements: clearer lead answer, schema updates, fresher sources.

- Publish one net-new, question-first page in a weak cluster and log the change.

- Review sentiment shifts and address any negative or stale claims.

- Attribute impact to changes using before/after windows in your dashboard.
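For the before/after attribution step, a relative lift calculation is usually enough to start. The sketch below averages weekly citation-share readings in each window and compares them; the readings are invented for illustration, and a real comparison should use equal-length windows and account for seasonality and sampling noise.

```python
from statistics import mean


def relative_lift(before: float, after: float) -> float:
    """Relative change from the before-window value to the after-window value."""
    if before <= 0:
        raise ValueError("before-window value must be positive to compute a relative lift")
    return (after - before) / before


# Hypothetical weekly citation-share readings (fractions, not percentages).
before_window = [0.18, 0.20, 0.22]
after_window = [0.22, 0.23, 0.24]

before_avg = mean(before_window)
after_avg = mean(after_window)
lift = relative_lift(before_avg, after_avg)

print(f"before: {before_avg:.1%}, after: {after_avg:.1%}, relative lift: {lift:.0%}")
```

Here a move from 20% to 23% citation share is 3 points in absolute terms but about 15% in relative terms. State which one you are reporting so stakeholders do not confuse the two.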

If you need a deeper framework for setting goals, metrics, and reporting cadence, see this guide: AI Search KPI Frameworks for Visibility, Sentiment & Conversion (2025).

Bringing it together

Here’s the deal: AI engines reward the same fundamentals users do—clear answers, credible authors, recent evidence, and tidy structure. The twist is that inclusion and citations don’t always mirror classic rankings, so your operations need a tighter measurement loop. External studies point to meaningful gains after teams reformat for answerability and implement steady refresh cycles—SurferSEO reports higher citation share; Seer shows growth on informational queries; and both align with Google’s quality guidance. For the record, Google’s own messaging keeps it simple: build people-first content with strong experience signals and structured data, then keep it current. See Google’s “Succeeding in AI Search” (2025) and the CTR context from Seer Interactive’s September 2025 update.

Want a sanity check for your own baseline? Start with 100 queries, document module appearance rate, then chase a 10–20% relative lift in citation share over the next two months through focused, week-over-week improvements. And if you’re curious about why models pick certain sources over others, the overlap research from Ahrefs (2025) is a handy reminder: optimize for the answer, not just the rank.