How AI Predictive Targeting Is Transforming Omnichannel Marketing in 2025

Discover how AI-powered targeting is reshaping omnichannel marketing with privacy-safe precision, RMN growth, and hybrid measurement. Read actionable 2025 strategies now.

Why predictive targeting is having a moment

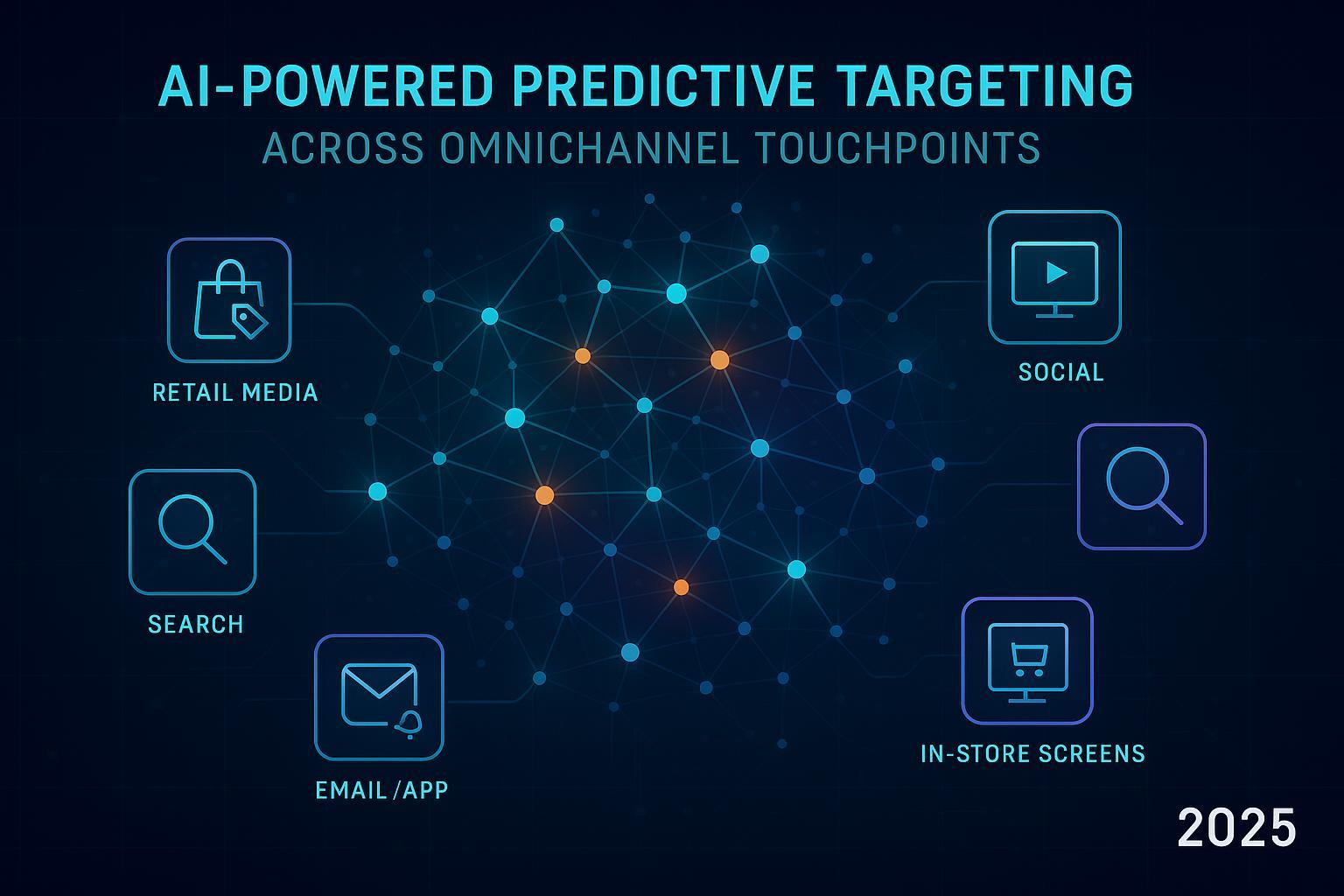

AI has moved from pilot to production across marketing, and nowhere is that more visible than in predictive targeting. In 2025, brands are using propensity, churn, and next-best-action models to decide who to reach, where, and with what creative—then orchestrating those decisions across retail media networks (RMNs), CTV, search/social, email/app, and even in‑store displays. What changed? Three forces converged:

- Privacy and identity shifts are forcing marketers to extract more value from first‑party data and privacy‑preserving signals rather than relying on third‑party cookies.

- RMNs are now among the fastest‑growing ad channels in the U.S., with off‑site placements unlocking scale beyond retailer domains.

- Modeling maturity and tooling now make it feasible to operationalize predictive decisions across channels, not just analyze them after the fact.

The result: smarter reach, tighter pacing, and content variations that meet audiences at the right moment, without leaning on fragile cross‑site identifiers.

The 2025 realities shaping strategy

- Chrome privacy and cookies: On April 22, 2025, Google said it will maintain user choice over third‑party cookies, continue developing the Privacy Sandbox APIs, and share an updated roadmap (Google, "Next steps for Privacy Sandbox and tracking protections in Chrome," 2025). In parallel, the UK Competition and Markets Authority (CMA) case page on Google's Privacy Sandbox changes (2025) documents ongoing oversight and quarterly progress reports.

- RMN growth and off‑site expansion: U.S. retail media spending is forecast to exceed $62B in 2025, an increase of more than $10B year over year, per Insider Intelligence/eMarketer's 2025 outlook. Off‑site retail media is forecast to grow even faster (42.1% in 2025, per eMarketer's analysis) as retailers extend beyond their owned sites to reach shoppers across the open web and CTV. Nielsen's 2025 retail media outlook adds macro context: U.S. retail media is set to grow about 20% this year, versus ~4.3% for the total ad market.

Together, these shifts mean predictive targeting must be privacy‑durable, interoperable with RMNs (on‑site and off‑site), and measurable without cookie‑level tracking.

How predictive targeting actually works in an omnichannel world

At a high level, predictive targeting turns signals into decisions:

- Signals: First‑party event streams (web/app), CRM and loyalty data, product catalog and pricing, zero‑party preference inputs, and contextual/retailer data. Where available, RMN signals (e.g., category/basket) and privacy‑safe browser APIs can augment these inputs.

- Models: Propensity (likelihood to convert), churn (likelihood to lapse), AOV/LTV prediction, and next‑best‑action policy models that map audience segments to the most effective channel/creative/offer.

- Activation: The model outputs inform channel‑specific execution—audience cohorts for RMNs, bidding/pacing rules for paid media, trigger logic for email/app, and creative variants aligned to predicted needs.

- Measurement and learning: Outcomes feed back into model retraining and budget allocation.
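To make the Models step concrete, here is a minimal propensity sketch. It is logistic regression fit by batch gradient descent in plain Python. The feature set (scaled recency, orders in the last 90 days, whether the last email was opened) and the toy labels are hypothetical, chosen only to show how signals become a score between 0 and 1. A real team would use an ML library and far more data.

```python
import math

def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def score(w, x):
    """Predicted probability that a customer with features x converts."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], x)))

def train_propensity(X, y, lr=0.1, epochs=2000):
    """Fit logistic-regression weights with batch gradient descent.

    Returns [intercept, w_1, ..., w_n].
    """
    w = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = score(w, xi) - yi  # gradient of log loss w.r.t. the linear term
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad)]
    return w

# Hypothetical features: [recency (0 = today, 1 = a year ago),
#                         orders in last 90 days, opened last email (0/1)]
X = [[0.1, 3, 1], [0.2, 2, 1], [0.3, 4, 0], [0.1, 1, 1],
     [0.9, 0, 0], [0.8, 1, 0], [0.7, 0, 1], [1.0, 0, 0]]
y = [1, 1, 1, 1, 0, 0, 0, 0]  # hypothetical label: converted in the next 30 days

w = train_propensity(X, y)
print(f"score for a recent, engaged customer: {score(w, [0.15, 2, 1]):.2f}")
```

Churn and value models follow the same pattern with a different label, such as "lapsed within 60 days" or order value.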

Privacy‑durable building blocks that make this possible:

- Customer data platform (CDP) to unify and activate first‑party data in real time.

- Data clean room (DCR) to collaborate with RMNs and publishers using privacy‑enhancing technologies (PETs) such as private set intersection (PSI) and trusted execution environments (TEEs), with standardized matching and measurement. The IAB Tech Lab's Attribution Data Matching Protocol (ADMaP) v1.0 (2025) is an important interoperability step for privacy‑centric attribution based on authenticated, consented data.

- Emerging privacy APIs (e.g., Topics, Protected Audience, Attribution Reporting) to help reach and measure audiences without cross‑site identifiers. These are especially useful for prospecting and upper‑ to mid‑funnel goals.

For a deeper orientation on orchestration and content variation, see this practical primer on AI-driven hyper‑personalization and multi‑platform content operations.

A practical activation playbook you can run this quarter

Below is a step‑by‑step workflow we see working across mid‑market and enterprise teams. It’s deliberately vendor‑neutral and emphasizes privacy, interoperability, and measurement clarity.

Data foundation and governance

- Capture high‑quality first‑party signals (web/app events, CRM/loyalty, subscription status, product feed). Align consent and retention policies; audit for GDPR/CCPA.

- Stand up a CDP with ML‑ready schemas and real‑time activation. Define identity resolution rules and feature stores for modeling.
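Here is a minimal sketch of what "ML‑ready" can mean in practice. It drops events that fall outside the retention window or come from customers without modeling consent, then rolls the remaining purchases up into recency/frequency/monetary features. The `Event` schema, the consent lookup, and the 365‑day window are all hypothetical placeholders for whatever your CDP and policies define.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Event:
    customer_id: str
    event_type: str     # hypothetical schema: "purchase", "page_view", ...
    ts: datetime
    value: float = 0.0  # order value for purchases, 0 otherwise

def build_features(events, consent, as_of, retention_days=365):
    """Return {customer_id: {"recency_days", "frequency", "monetary"}}.

    Uses only events from customers with consent[customer_id] == True that
    fall inside the retention window. Customers with no qualifying purchase
    are omitted.
    """
    cutoff = as_of - timedelta(days=retention_days)
    purchases = defaultdict(list)
    for e in events:
        if not consent.get(e.customer_id, False):
            continue  # no modeling consent on record: exclude entirely
        if e.ts < cutoff or e.ts > as_of:
            continue  # outside the retention window (or after the snapshot)
        if e.event_type == "purchase":
            purchases[e.customer_id].append(e)
    features = {}
    for cid, evs in purchases.items():
        last = max(e.ts for e in evs)
        features[cid] = {
            "recency_days": (as_of - last).days,
            "frequency": len(evs),
            "monetary": round(sum(e.value for e in evs), 2),
        }
    return features

now = datetime(2025, 10, 1)
events = [
    Event("a", "purchase", now - timedelta(days=10), 40.0),
    Event("a", "purchase", now - timedelta(days=40), 60.0),
    Event("b", "purchase", now - timedelta(days=5), 25.0),    # no consent
    Event("c", "purchase", now - timedelta(days=400), 80.0),  # past retention
]
consent = {"a": True, "b": False, "c": True}
features = build_features(events, consent, as_of=now)
print(features)
```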

Privacy‑safe audience collaboration

- Hash identifiers and join with RMNs in a data clean room. Build cohorts with minimum group sizes. Use PET‑based matching and export only aggregated insights.

- Adopt interoperable specs and naming conventions early (e.g., ADMaP‑aligned workflows) to reduce partner‑by‑partner custom work.
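The hashing and minimum‑cohort rules can be sketched as follows. This is deliberately simplified and is not PSI or any clean‑room vendor's API. Each side normalizes and SHA‑256‑hashes emails, the hashed sets are intersected, and only cohort counts at or above a minimum size are reported. The threshold of 50 and the tier/category fields are illustrative assumptions. Unsalted hashed emails can still be matched by guessing addresses (a dictionary attack), which is one reason real clean rooms layer PETs on top.

```python
import hashlib
from collections import Counter

MIN_COHORT_SIZE = 50  # illustrative threshold; clean rooms set their own

def normalize_and_hash(email: str) -> str:
    """Trim and lowercase, then return the SHA-256 hex digest."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()

def matched_cohort_counts(brand_rows, retailer_rows, min_size=MIN_COHORT_SIZE):
    """brand_rows: [(email, tier)]; retailer_rows: [(email, category)].

    Returns {(tier, category): count} over matched users, suppressing any
    cohort smaller than min_size so small groups are never exported.
    """
    brand = {normalize_and_hash(e): tier for e, tier in brand_rows}
    retailer = {normalize_and_hash(e): cat for e, cat in retailer_rows}
    counts = Counter(
        (brand[h], retailer[h]) for h in brand.keys() & retailer.keys()
    )
    return {k: v for k, v in counts.items() if v >= min_size}

# Synthetic data: 60 high-tier and 10 low-tier matches (note the messy casing).
brand_rows = [(f"user{i}@example.com", "high") for i in range(60)]
brand_rows += [(f"USER{i}@example.com ", "low") for i in range(60, 70)]
retailer_rows = [(f"user{i}@example.com", "pet") for i in range(70)]

result = matched_cohort_counts(brand_rows, retailer_rows)
print(result)  # the 10-person low-tier cohort is suppressed
```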

Modeling for action

- Train propensity and churn models; add value‑based targets (AOV/LTV). Validate with cross‑validation and back‑testing; prioritize interpretability for auditability.

- Translate predictions into execution rules: audience tiers, bidding/pacing thresholds, creative variants, and offer logic.
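Once tiers are defined, translating scores into execution rules is mostly a lookup. In the sketch below, each tier carries a bid multiplier, a creative variant, and a paid‑media eligibility flag. The tier cut‑offs, multipliers, and variant names are hypothetical and do not come from any specific platform.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TierRule:
    name: str
    min_score: float       # lower bound of predicted propensity for this tier
    bid_multiplier: float  # applied to the base bid in paid channels
    creative: str          # hypothetical creative/offer variant id
    paid_eligible: bool    # False = suppress from paid media

# Ordered from highest to lowest threshold; all values are illustrative.
RULES = [
    TierRule("high", 0.60, 1.30, "reminder_no_discount", True),
    TierRule("mid", 0.30, 1.00, "benefit_led_10pct_offer", True),
    TierRule("low", 0.00, 0.00, "newsletter_only", False),
]

def assign_rule(score: float) -> TierRule:
    """Return the first rule whose threshold the score meets."""
    for rule in RULES:
        if score >= rule.min_score:
            return rule
    return RULES[-1]

def plan(scores: dict, base_bid: float) -> dict:
    """Map customer_id -> (tier, bid, creative) for paid-eligible customers."""
    out = {}
    for cid, s in scores.items():
        rule = assign_rule(s)
        if rule.paid_eligible:
            out[cid] = (rule.name, round(base_bid * rule.bid_multiplier, 2), rule.creative)
    return out

example = plan({"a": 0.82, "b": 0.41, "c": 0.12}, base_bid=2.00)
print(example)
```

Here the top tier gets a reminder rather than a discount. That is one way to avoid subsidizing purchases that would likely happen anyway, but treat it as a hypothesis to test, not a rule.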

Channel execution (on‑site and off‑site)

- RMNs: Start with on‑site for high intent, then expand off‑site to scale. Use retailer category/basket signals where offered to refine cohorts. Prepare variants for CTV, display, and social via RMN extensions.

- Search/social/programmatic: Deploy predictive tiers as custom audiences; align bids and budgets to value tiers; enforce frequency caps.

- Email/app: Use next‑best‑action logic for journeys, suppressing low‑likelihood segments to control fatigue.
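Frequency caps and low‑likelihood suppression reduce to counting each person's recent exposures. The sketch below assumes a hypothetical cap of 3 impressions per rolling 7 days and a suppression floor of 0.2 on the propensity score. Both are placeholders to tune per channel, and ad platforms typically enforce caps themselves.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

class FrequencyCapper:
    """Rolling-window frequency cap plus a propensity floor for suppression.

    Assumes calls for a given user arrive in non-decreasing time order.
    """

    def __init__(self, max_impressions=3, window=timedelta(days=7), min_score=0.2):
        self.max_impressions = max_impressions
        self.window = window
        self.min_score = min_score
        self._history = defaultdict(deque)  # user_id -> impression timestamps

    def should_serve(self, user_id: str, score: float, now: datetime) -> bool:
        if score < self.min_score:
            return False  # low-likelihood segment: suppress to control fatigue
        hist = self._history[user_id]
        while hist and now - hist[0] >= self.window:
            hist.popleft()  # drop impressions that fell out of the window
        if len(hist) >= self.max_impressions:
            return False  # cap reached within the window
        hist.append(now)  # record the impression about to be served
        return True

capper = FrequencyCapper()
t0 = datetime(2025, 10, 1, 9, 0)
served = [capper.should_serve("u1", 0.7, t0 + timedelta(hours=h)) for h in range(5)]
later = capper.should_serve("u1", 0.7, t0 + timedelta(days=8))  # window has rolled
low = capper.should_serve("u2", 0.1, t0)  # below the suppression floor
print(served, later, low)
```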

Creative and content operations

- Generate offer/creative variants mapped to predictive tiers. Maintain brand guardrails and human‑in‑the‑loop review.

- Operational tip: A streamlined content stack helps teams ship high‑quality variants quickly across channels. The platform QuickCreator can be used to standardize briefs, generate channel‑ready copy in multiple languages, and publish efficiently across owned destinations. Disclosure: QuickCreator is our product.

- For a deeper dive into execution collateral across touchpoints, see how to structure omnichannel support content for predictive campaigns.

Privacy Sandbox experiments

- Where appropriate, test Topics for interest‑based reach and use Attribution Reporting to gauge aggregated conversion paths, comparing against cookie‑based baselines where they are still available. Document assumptions and gaps.

Feedback loop and iteration cadence

- Refresh models on a set cadence (monthly/quarterly) and rotate creative/offer tests often. Pipe results back into feature stores and MMM.

- For hands‑on best practices, explore this playbook for real‑time AI campaign optimization and audience segmentation.

Measurement that moves as fast as retail media

With user‑level telemetry patchy and RMN reporting uneven, hybrid measurement is essential:

- MMM for strategic allocation: Build an econometric model to estimate channel and tactic contributions and to plan budgets. Many teams update MMM monthly or quarterly; Google’s Meridian documentation notes that refresh cadence should match your budgeting rhythm, not a one‑size‑fits‑all weekly schedule, per the Meridian guidance on refreshing models (Google Developers, 2025).

- MTA where feasible: Use multi‑touch attribution on channels with adequate consented telemetry (e.g., some paid social/search) to guide weekly optimizations.

- Incrementality experiments: Run geo/cell tests to validate causal lift and calibrate MMM and MTA, especially for RMNs. Keep test cells stable long enough to reach adequate statistical power; document seasonality controls and confidence criteria.

- Governance: Normalize RMN taxonomies (placements, formats, audiences). Maintain a measurement playbook that captures assumptions, known gaps, and how you triangulate.
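Many MMMs transform each channel's spend before regression, modeling carryover (adstock) and diminishing returns (saturation). Below are two common forms: geometric adstock and a Hill curve. This is not Meridian's API. The decay, half‑saturation, and shape values are illustrative assumptions, whereas a fitted model estimates them per channel.

```python
def geometric_adstock(spend, decay):
    """Carry over a share `decay` (0 <= decay < 1) of the previous period's effect."""
    out, carry = [], 0.0
    for x in spend:
        carry = x + decay * carry
        out.append(carry)
    return out

def hill_saturation(x, half_sat, shape):
    """Diminishing returns: 0 at x=0, 0.5 at x=half_sat, approaching 1 as x grows."""
    if x <= 0:
        return 0.0
    return x ** shape / (x ** shape + half_sat ** shape)

weekly_rmn_spend = [100.0, 0.0, 0.0, 50.0]  # hypothetical weekly spend
adstocked = geometric_adstock(weekly_rmn_spend, decay=0.5)
response = [hill_saturation(a, half_sat=80.0, shape=1.5) for a in adstocked]
print(adstocked)
print([round(r, 3) for r in response])
```

In a full MMM, the transformed series become regressors alongside controls such as price, promotions, and seasonality.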

Field notes: common pitfalls and how to avoid them

- Data sparsity and bias: Excessive feature engineering on sparse data can mask bias. Start with interpretable models, run fairness checks, keep the feature set tight, and validate on out‑of‑time samples.

- Identity fragmentation: Don’t force brittle user‑level joins. Favor cohort‑based activation in clean rooms, with strong consent records and minimum audience sizes.

- Over‑indexing on a single RMN: Spread tests across two to three RMNs and use off‑site expansion to find incremental reach at acceptable CPMs.

- “Fast‑MMM” hype: Weekly MMM refreshes are not universally productive; ensure your data can support the cadence and that decisions genuinely change with new runs.

- Creative debt: Predictive audiences underperform without matching creative and offers. Budget time and resources for content ops alongside data and media.
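Validating on out‑of‑time samples means splitting by date rather than at random, so the model is scored on a period it never saw. This minimal sketch assumes each row carries a snapshot date. The cutoff and the precomputed scores are hypothetical; in practice, `predict` would be a model fit only on the training rows.

```python
from datetime import date

def out_of_time_split(rows, cutoff: date):
    """Train on rows observed before `cutoff`; evaluate on rows on/after it."""
    train = [r for r in rows if r["snapshot_date"] < cutoff]
    test = [r for r in rows if r["snapshot_date"] >= cutoff]
    return train, test

def accuracy(rows, predict, threshold=0.5):
    """Share of rows where the thresholded score matches the observed label."""
    if not rows:
        return float("nan")
    hits = sum((predict(r) >= threshold) == bool(r["converted"]) for r in rows)
    return hits / len(rows)

rows = [
    {"snapshot_date": date(2025, 1, 31), "score": 0.8, "converted": 1},
    {"snapshot_date": date(2025, 2, 28), "score": 0.3, "converted": 0},
    {"snapshot_date": date(2025, 3, 31), "score": 0.7, "converted": 0},
    {"snapshot_date": date(2025, 4, 30), "score": 0.2, "converted": 0},
]
train, test = out_of_time_split(rows, cutoff=date(2025, 3, 1))
oot_acc = accuracy(test, predict=lambda r: r["score"])
print(len(train), len(test), oot_acc)
```

A large gap between in‑time and out‑of‑time performance suggests the model learned period‑specific patterns. Accuracy is used here only for brevity; AUC or calibration checks are more typical for propensity models.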

Rolling change‑log (we keep this section updated)

- Updated on 2025-10-08 — Chrome cookies and Sandbox: Google maintains user choice and continues Privacy Sandbox API development; awaiting updated roadmap and continued CMA oversight (see Google’s April 22, 2025 post and the CMA case timeline cited above).

- Updated on 2025-10-08 — RMN momentum: 2025 U.S. spend remains on track to exceed $62B, with off‑site placements forecast to grow ~42% year over year; Nielsen expects ~20% overall U.S. RMN growth in 2025.

Your 90‑day roadmap

Weeks 1–2: Readiness and governance

- Stand up a cross‑functional pod (media, analytics, data engineering, product/CRM). Define success metrics (incremental ROAS, CAC/LTV, conversion rate lift, AOV, churn reduction).

- Confirm consent flows and data retention policies. Inventory first‑party data and instrument gaps.

Weeks 3–6: Modeling and pilot activation

- Build baseline propensity and churn models; validate with back‑tests. Translate scores into audience tiers and execution rules.

- Launch an RMN on‑site pilot and one off‑site test; align creative/offer variants to predictive tiers. Start a Privacy Sandbox Topics/Attribution Reporting experiment for prospecting.

Weeks 7–10: Measurement and scaling

- Implement a “hybrid” stack: MMM for macro allocation (initial calibration), MTA where telemetry supports, plus a geo/cell test on one RMN campaign to measure lift.

- Normalize taxonomies and dashboards; define a refresh cadence for models and MMM.
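A first readout of the geo/cell test can be simple arithmetic. This sketch uses a ratio‑based counterfactual: the treated geos are assumed to have grown at the control geos' rate, which is one common and simple choice among several. From that it derives incremental revenue, percentage lift, and incremental ROAS. All figures are hypothetical. A real readout would add confidence intervals and confirm that cells were balanced before launch.

```python
def geo_lift(treat_pre, treat_post, ctrl_pre, ctrl_post, spend):
    """Estimate incremental revenue and iROAS from a two-period geo test.

    Counterfactual: treated geos would have grown at the control geos' rate.
    """
    if treat_pre <= 0 or ctrl_pre <= 0:
        raise ValueError("pre-period revenue must be positive")
    counterfactual = treat_pre * ctrl_post / ctrl_pre
    incremental = treat_post - counterfactual
    return {
        "counterfactual": counterfactual,
        "incremental_revenue": incremental,
        "lift_pct": 100 * incremental / counterfactual,
        "iroas": incremental / spend if spend > 0 else float("nan"),
    }

readout = geo_lift(treat_pre=200_000, treat_post=236_000,
                   ctrl_pre=400_000, ctrl_post=440_000, spend=8_000)
print(readout)
```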

Weeks 11–13: Iterate and expand

- Scale off‑site RMN where incremental reach is evident; retire underperforming placements. Tighten frequency caps and pacing.

- Enrich features (inventory, margin, store proximity) and test next‑best‑action policies.
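Tightening pacing usually means comparing actual spend with a planned curve and nudging bids. Below is a minimal proportional controller. It assumes an even (linear) spend plan, and the gain and bid bounds are hypothetical. Platform auto‑bidders handle this internally, so this only illustrates the logic.

```python
def pacing_multiplier(spent, budget, elapsed_hours, total_hours,
                      gain=0.5, lo=0.5, hi=1.5):
    """Return a bid multiplier that pushes spend toward an even (linear) plan.

    Under-spending (pace ratio < 1) raises bids; over-spending lowers them.
    """
    planned = budget * elapsed_hours / total_hours
    if planned <= 0:
        return 1.0  # nothing planned yet: leave bids unchanged
    pace_ratio = spent / planned             # 1.0 = exactly on plan
    raw = 1.0 + gain * (1.0 - pace_ratio)    # proportional correction
    return max(lo, min(hi, raw))             # clamp to avoid bid shocks

# Halfway through the day with 40% of budget spent: under-pacing, so bids rise.
print(pacing_multiplier(spent=400, budget=1000, elapsed_hours=12, total_hours=24))
```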

Closing note: For efficient content operations that support predictive programs—brief standardization, multilingual variants, and rapid publishing across owned channels—you can use QuickCreator to help your team work faster while maintaining brand and SEO governance.