How to Improve Your AI Answer Coverage (2025 Best Practices)

Discover 2025’s best practices for AI Answer Coverage: actionable checklists, KPIs, monitoring, schema markup, and compliance for search visibility.

If your content isn’t getting cited inside AI-generated answers, you’re invisible where decisions increasingly happen. AI Answer Coverage is the percentage of priority questions where AI engines include or cite your content. When coverage is low, your brand misses zero‑click exposure, credibility, and downstream conversions—no matter how strong your classic SEO rankings are.

This guide focuses on practical, 2025-ready steps: make content liftable, align structured data, keep technical foundations tight, and monitor cross‑engine KPIs. You’ll also see a real workflow example and an implementation template you can adapt immediately.

What “AI Answer Coverage” Really Means Today

AI answer engines synthesize responses and often show supporting links. Coverage is not a rank; it’s eligibility and inclusion. Think of it this way: if an engine can easily extract a crisp fact or steps from your page—and trust your provenance—it’s more likely to cite you.

- Google explains that AI Overviews provide summaries with links to support exploration, and site owners should focus on content quality and technical readiness. See Google’s guidance in AI features and your website (Search Central, 2024–2025) and the 2025 blog on succeeding in AI search.

- Microsoft notes that when Copilot answers are grounded in web data, citations appear with hyperlinks beneath the response. Read the Transparency Note for Microsoft Copilot (updated Oct 23, 2025).

- ChatGPT’s browsing/grounding modes vary by release; don’t assume consistent citations. Track behavior using hands‑on tests and current ChatGPT Release Notes.

- Perplexity is citation‑forward and typically presents numbered sources, though publisher control nuances have been debated publicly. Treat its behaviors empirically.

Why does coverage matter now? AI layers change user flow and clicks. Multiple 2025 studies indicate reduced traditional SERP CTR where AI answer units appear, shifting value to visibility inside the AI box and to brand recall over time. For a deeper overview of evolving search behavior, see AI Search User Behavior 2025.

Make Your Content Liftable: Extractability by Design

Engines favor content that can be lifted in exact sentences, bullet points, or table rows. Design pages so an answer engine can quote, summarize, and cite without guesswork.

- Lead with an answer-first intro: a 1–2 sentence resolution of the primary question before details.

- Use Q&A blocks with clear prompts (H2/H3 phrased as questions) and concise answers.

- Convert long walls of text into short lists, compact paragraphs, and semantic tables.

- Keep terminology consistent; avoid synonyms that fragment signals across the page.

- Add examples: a small “how-to” with numbered steps or a mini checklist near the top.

- Align visible content with structured data (next section) so engines see the same facts in both forms.

A quick heuristic: if a human analyst can copy two sentences and a list from your page to answer a query confidently, it’s likely “liftable” for AI.
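
The heuristic above can be roughly automated. The following is a minimal sketch, assuming the page source is available as Markdown; the function name `liftability_report` and the signals it counts are illustrative assumptions, not a standard any engine publishes.

```python
import re


def liftability_report(markdown_text):
    """Rough extractability signals for a Markdown page (illustrative only)."""
    lines = [ln.strip() for ln in markdown_text.splitlines()]

    # H2/H3 headings, and the subset phrased as questions.
    headings = [ln for ln in lines if re.match(r"#{2,3}\s", ln)]
    question_headings = [h for h in headings if h.endswith("?")]

    # Treat the first non-empty, non-heading line as the intro paragraph.
    intro = next((ln for ln in lines if ln and not ln.startswith("#")), "")
    intro_sentences = [s for s in re.split(r"(?<=[.!?])\s+", intro) if s]

    # Bulleted or numbered list items.
    list_items = [ln for ln in lines if re.match(r"(-|\*|\d+\.)\s", ln)]

    return {
        "intro_sentences": len(intro_sentences),
        "answer_first": 1 <= len(intro_sentences) <= 2,
        "question_heading_ratio": len(question_headings) / len(headings) if headings else 0.0,
        "list_items": len(list_items),
    }


# Hypothetical page content.
sample = """# AI Answer Coverage

AI Answer Coverage is the percentage of priority questions where AI engines cite your content.

## What is AI Answer Coverage?

It measures inclusion, not rank.

## Monitoring

- Log citations weekly
"""

report = liftability_report(sample)
print(report)
```

A report like this only flags pages worth a manual look; it does not predict whether an engine will cite them.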

Structured Data & Provenance That Get Picked Up

Structured data mirrors your visible content and clarifies what’s an answer, who authored it, and when it was updated. It’s not magic, but it reduces ambiguity.

- Use appropriate schema.org types: FAQPage for Q&As, HowTo for step-by-step guides, Product/Offer/Review for catalog pages, Organization and Person for publisher/author identity.

- Populate key properties: mainEntity, acceptedAnswer/suggestedAnswer, author, publisher, datePublished, dateModified.

- Keep JSON‑LD in sync with what users see on the page—if you state “updated November 2025” on-page, match it in schema.

- Establish provenance: visible bylines, author credentials, editorial policy, and references.

For official orientation, review Google’s AI features and your website and structured data documentation. Strong provenance also supports E‑E‑A‑T signals and selection confidence.

Example JSON‑LD notes

- FAQPage: Use clear questions and concise acceptedAnswer text; avoid stuffing.

- HowTo: Provide named steps and required tools/supplies where relevant.

- Organization/Person: Include sameAs links to authoritative profiles; keep naming consistent.
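
To make the FAQPage note concrete, here is a minimal sketch that generates the JSON‑LD from the same question and answer text shown on the page, so the two cannot drift apart. The helper name `build_faq_jsonld`, the sample Q&A, and the date are assumptions for illustration.

```python
import json


def build_faq_jsonld(qa_pairs, date_modified):
    """Build a schema.org FAQPage dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "dateModified": date_modified,
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical content; use the Q&A text that is actually visible on the page.
faq = build_faq_jsonld(
    [(
        "What is AI Answer Coverage?",
        "The percentage of priority questions where AI engines include or cite your content.",
    )],
    date_modified="2025-11-01",
)

# JSON-LD is embedded in HTML inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Generating the markup from the page's own content source is one way to keep it in sync; validate the output with a structured data testing tool before shipping.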

Technical Discoverability & Refresh Cadence

Even the best content can be skipped if discoverability is weak.

- Maintain XML sitemaps with canonical URLs and accurate lastmod timestamps.

- Ensure crawlability: robots.txt and meta directives should allow indexing for pages you want cited.

- Canonicalization: resolve duplicates; don’t fragment signals across near‑duplicate Q&A pages.

- Snippet controls: if you limit snippets, understand the tradeoffs for AI features.

- Freshness: keep facts current and update dateModified whenever the content materially changes; engines tend to favor up‑to‑date content for fast‑moving topics.
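
As an illustration of the sitemap point above, this sketch builds a small XML sitemap with `lastmod` values using only the Python standard library. The URL and date are placeholders, and `build_sitemap` is a hypothetical helper, not part of any CMS.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """pages: iterable of (canonical_url, last_modified_iso_date) tuples."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Placeholder URL and date; lastmod should reflect a real content change.
sitemap_xml = build_sitemap([
    ("https://www.example.com/guides/ai-answer-coverage", "2025-11-01"),
])
print(sitemap_xml)
```

In practice the `lastmod` value would come from the same source as the on-page date and the schema `dateModified`, so all three agree.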

Troubleshooting tips:

- Strong organic rank but no AI citation? Validate JSON‑LD, check question phrasing and answer placement, and ensure your page directly resolves the query.

- Recently updated but not re‑cited? Confirm sitemap lastmod and look for crawl anomalies; test alternative phrasing that matches common user prompts.
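
One crawl anomaly worth ruling out early is a robots.txt rule that blocks the page. The sketch below uses Python's standard `urllib.robotparser` against an inline, hypothetical robots.txt; the rules, URLs, and the `is_crawlable` wrapper are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice, load your site's real file.
robots_txt = """\
User-agent: *
Disallow: /drafts/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())


def is_crawlable(url, user_agent="*"):
    """True if the parsed robots.txt rules allow user_agent to fetch url."""
    return parser.can_fetch(user_agent, url)


print(is_crawlable("https://www.example.com/guides/ai-answer-coverage", "Googlebot"))
print(is_crawlable("https://www.example.com/drafts/new-page", "Googlebot"))
```

This only checks robots.txt rules; meta robots tags and HTTP headers need a separate check.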

Monitor Cross-Engine Coverage with KPIs

Measurement turns best practices into operations. Instrument a set of KPIs that reflects visibility, quality, and risk across engines.

| KPI | What it measures | How to capture |
|---|---|---|
| Share of Answer | % of prompts where your brand/site is cited or named across engines | Maintain a representative prompt set; log citations per engine weekly |
| AI Citation Count | Total distinct citations to your content across engines per period | Tally by engine; note link quality and placement |
| Coverage of Priority Queries | % of your priority question set covered by citations | Weight by engine and intent; track month‑over‑month |
| Accuracy Incidents | Incorrect/misleading statements about your brand | Severity rubric; mean time to detect/remediate |
| Sentiment in AI Answers | Polarity when your brand is mentioned | Capture sentiment and rationale; monitor trends |
| Freshness/Re‑index Velocity | Lag from content update to reappearance in citations | Note dateModified vs first re‑citation timestamp |

Industry practitioners have proposed expanded KPI sets for GenAI search. For conventions and formulas, see the discussion via Search Engine Land’s 2025 KPI overview and our deep dive, LLMO Metrics: Measure Accuracy, Relevance, Personalization.
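
Two of the KPIs in the table reduce to simple ratios over a citation log. The sketch below shows one way to compute them, assuming each log row records a prompt, an engine, and whether your site was cited; the `Observation` record and both function names are hypothetical, and the calculation is unweighted for simplicity (the table suggests weighting by engine and intent).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    prompt: str     # the question asked
    engine: str     # e.g. "perplexity", "copilot"
    cited: bool     # True if the answer cited or named the brand/site
    priority: bool  # True if the prompt belongs to the priority question set


def share_of_answer(observations):
    """Percent of (prompt, engine) observations where the brand was cited."""
    if not observations:
        return 0.0
    return 100.0 * sum(o.cited for o in observations) / len(observations)


def priority_coverage(observations):
    """Percent of priority prompts cited by at least one engine."""
    priority_prompts = {o.prompt for o in observations if o.priority}
    if not priority_prompts:
        return 0.0
    covered = {o.prompt for o in observations if o.priority and o.cited}
    return 100.0 * len(covered) / len(priority_prompts)


# Hypothetical weekly log.
log = [
    Observation("what is ai answer coverage", "perplexity", True, True),
    Observation("what is ai answer coverage", "copilot", False, True),
    Observation("best geo tools", "perplexity", False, True),
    Observation("best geo tools", "copilot", False, True),
]

print(share_of_answer(log))    # 25.0
print(priority_coverage(log))  # 50.0
```

Here one of four observations is a citation (25%), while one of two priority prompts is covered by at least one engine (50%), which shows why the two numbers should be tracked separately.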

Practical Example — Monitoring and Remediation with Geneo

Disclosure: Geneo is our product.

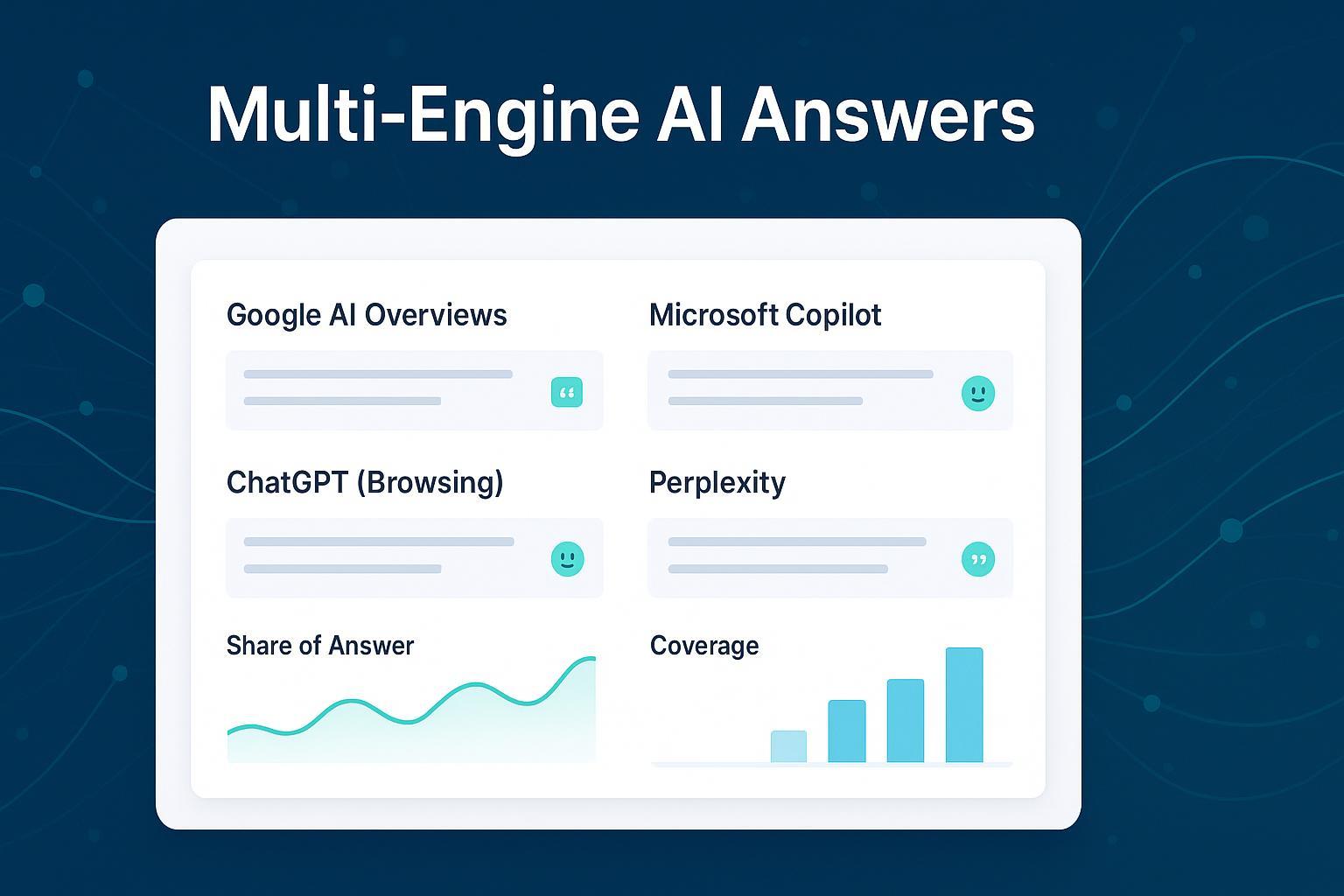

Here’s a lightweight, repeatable workflow you can run across Google AI Overviews, Copilot, ChatGPT (browsing), and Perplexity:

- Define a 100–200 prompt set covering branded, product, and problem‑solution queries. Include regional variants if you operate internationally.

- Monitor weekly: record whether answers cite your pages; capture link anchors, positions, and sentiment. Flag any accuracy issues.

- Triage inaccuracies: classify severity (low/medium/high), assign owners, and gather corrective references.

- Remediate content: tighten answer‑first intros, add/validate FAQPage or HowTo schema, update facts, and clarify author credentials and references.

- Re‑validate: push sitemap lastmod, confirm crawlability, and spot‑check how engines render and cite the updated pages.

- Track impact: compare Share of Answer and Coverage of Priority Queries before/after fixes; note freshness velocity.
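
The freshness velocity mentioned in the last step can be measured as the lag between a content update and the first citation observed afterward. This is a minimal sketch, assuming ISO‑8601 timestamps from your own log; `freshness_lag_days` is a hypothetical helper.

```python
from datetime import datetime


def freshness_lag_days(date_modified, citation_timestamps):
    """Days from the content update to the first citation seen at or after it.

    Returns None when no citation has been observed since the update.
    """
    updated = datetime.fromisoformat(date_modified)
    later = [
        ts for ts in map(datetime.fromisoformat, citation_timestamps)
        if ts >= updated
    ]
    if not later:
        return None
    return (min(later) - updated).total_seconds() / 86400


# Hypothetical timestamps: one citation before the update, two after.
lag = freshness_lag_days(
    "2025-11-01T00:00:00",
    ["2025-10-20T09:00:00", "2025-11-04T12:00:00", "2025-11-10T08:00:00"],
)
print(lag)  # 3.5
```

A citation logged after the update does not prove the engine used the updated text, so spot-check the cited passage before counting it as a re‑citation.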

Geneo centralizes this cross‑engine monitoring with real‑time citation logs, sentiment analysis, historical query tracking, and content strategy suggestions—so teams can see where coverage drops, why, and what to do next. If you work with multiple brands or regions, multi‑brand management and collaboration features help standardize the workflow.

Implementation Template — Your Cross-Engine Dashboard

Your dashboard should map KPIs to data sources and define cadence and thresholds.

- Prompt set: list queries, intents, and regions; assign engine coverage (AIO, Copilot, ChatGPT, Perplexity).

- Data capture: for each engine, log citations, link quality (anchor clarity, placement), and sentiment.

- Benchmarks and thresholds: escalate when Share of Answer falls by 10% or more week‑over‑week or when accuracy incidents exceed defined limits.

- Freshness tracking: note update timestamps and first re‑citation events.

- Workflow states: triage → fix → re‑validate → monitor, with ownership and SLAs.
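
The escalation threshold in the template can be expressed as a small check. This sketch assumes the 10% figure means a relative week‑over‑week drop rather than percentage points; the text leaves that open, so pick one definition and document it. `should_escalate` is a hypothetical name.

```python
def should_escalate(previous_share, current_share, drop_threshold=10.0):
    """True when Share of Answer fell by at least drop_threshold percent,
    measured relative to the previous week's value (an assumption)."""
    if previous_share <= 0:
        return False  # no baseline to fall from
    relative_drop = 100.0 * (previous_share - current_share) / previous_share
    return relative_drop >= drop_threshold


print(should_escalate(40.0, 35.0))  # True: a 12.5% relative drop
print(should_escalate(40.0, 38.0))  # False: a 5% relative drop
```

With a relative definition, small baselines trigger alerts on small absolute changes, so teams with low Share of Answer may prefer a percentage-point rule instead.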

Geneo’s dashboard templates make this practical, and the platform supports regional monitoring. For behavior nuances, see Why ChatGPT Mentions Certain Brands and AI Overview Tracking Tools for China & GEO.

Compliance & Brand Safety: Reduce Risk While You Scale

Accuracy and trust are not optional—especially in regulated sectors.

- Maintain a hallucination log: record incidents, severity, remediation steps, and outcomes.

- Gate regulated claims through SME/legal review before publishing optimization changes.

- Publish a corrections page and link from relevant content where you’ve corrected material facts.

- Licensing and provenance: expose machine‑readable license text if you permit reuse; keep canonical URLs, author, publisher, and timestamps clear.

- Privacy: exclude PII and sensitive data from “liftable” content by design; use pre‑publication scans.
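
A pre‑publication scan for the privacy item above might start as simply as the sketch below. The two regular expressions (email addresses and US‑style phone numbers) are illustrative assumptions and far from exhaustive; a production scan would typically rely on a dedicated PII detection tool.

```python
import re

# Illustrative patterns only; they will miss many PII formats.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def scan_for_pii(text):
    """Return (label, matched_text) pairs for every pattern hit in text."""
    return [
        (label, match.group(0))
        for label, pattern in PII_PATTERNS.items()
        for match in pattern.finditer(text)
    ]


# Hypothetical draft content.
draft = "Contact jane.doe@example.com or call 555-123-4567 for details."
findings = scan_for_pii(draft)
print(findings)
```

Any hit should block publication until a reviewer confirms the text is intentional and permitted.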

For regulatory anchors and context:

- The FTC’s 2023 revisions address endorsements and material connections in digital content—see FTC Endorsement Guides (16 CFR Part 255).

- The EU AI Act phases transparency obligations for generative systems through 2026. Review the European Parliament’s AI Act overview.

Monthly Audit Checklist (Compact)

- Extractability: answer‑first intros; Q&A blocks; short lists/tables; consistent terminology.

- Structured data: validate JSON‑LD; match FAQPage/HowTo/Product/Organization/Person to visible content; confirm dateModified/datePublished.

- Discoverability: XML sitemap with canonical URLs and correct lastmod; resolve canonical conflicts; allow crawl/indexing.

- E‑E‑A‑T: bylines, author credentials, publisher info, references; editorial policy and corrections page.

- Freshness: update facts and examples; sync on‑page dates with schema and sitemap.

- Monitoring: log engine appearances/citations; investigate gaps; capture sentiment and accuracy incidents; queue remediation.

Next Steps

If you’ve made your content liftable, aligned schema, and instrumented KPIs, you’re ready to run your first coverage audit and remediation cycle. Geneo can streamline the monitoring and reporting across engines while your team owns the content fixes. Start with a 100‑query set, build the dashboard, and set a weekly cadence, then iterate.

Want help operationalizing this? Try Geneo’s free trial and adapt the workflow to your brand portfolio: geneo.app.